AI built for R&D and IP

Unify global innovation data, internal knowledge, and advanced AI to uncover technical insights and make better decisions faster.

Powering world-leading R&D & IP teams

R&D teams are stuck in research instead of development, and are losing critical knowledge along the way.

Teams spend 30% of their time and over $250B per year on research, making multi-million dollar decisions with fragmented data and tribal knowledge.

Challenge 01

Teams manually search multiple databases, cross-reference findings, and validate sources using only the content they have access to.

Challenge 02

R&D teams start from scratch with every project. Institutional knowledge is buried in siloed tools and systems that aren't useful in real-time work.

Challenge 03

Teams rely on unoptimized systems, incomplete datasets, and broken context to make decisions with long-term impact.

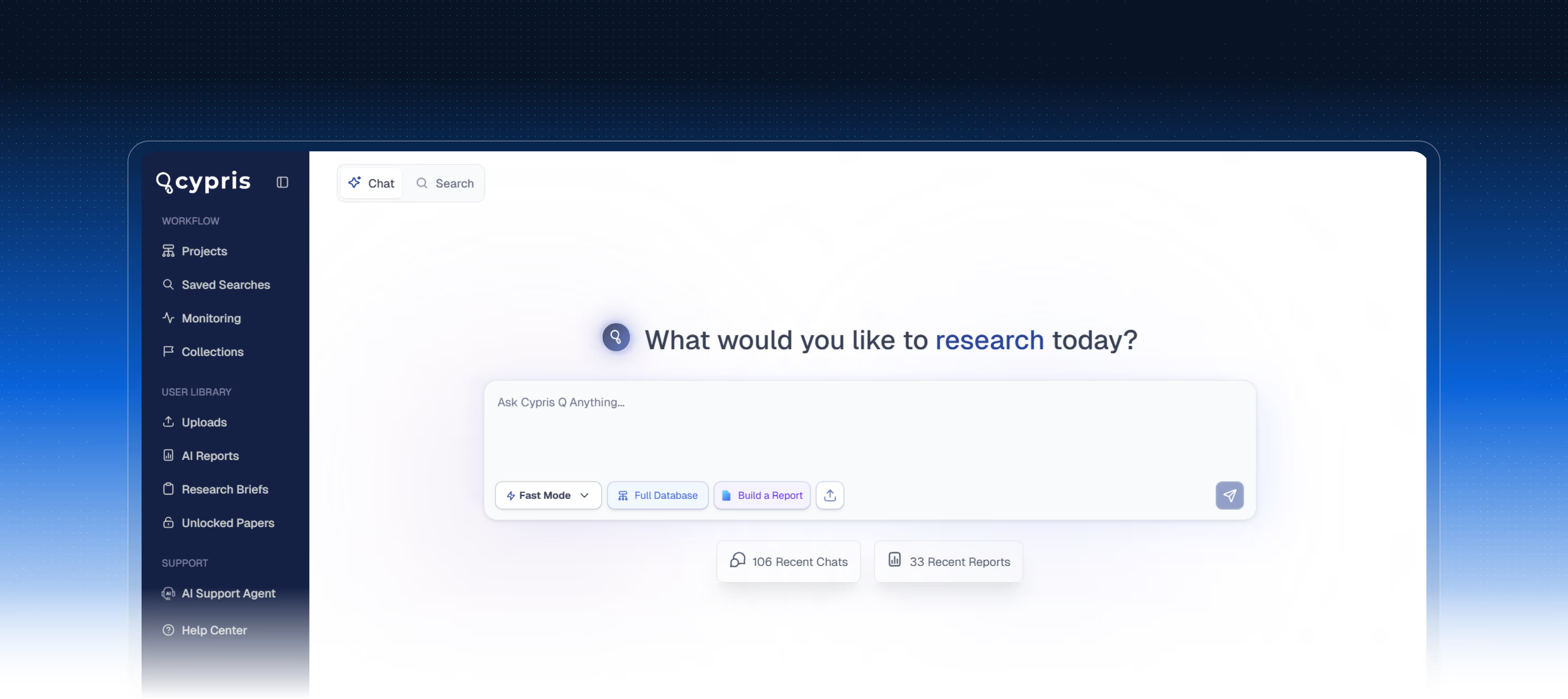

One AI platform for research and knowledge management

Cypris brings external intelligence and internal knowledge together so R&D teams can move faster and make stronger decisions.

AI-powered technical and market intelligence unified in one platform. Your team gets answers from trustworthy sources in hours, not weeks.

Centralize institutional data and let AI surface context-rich insights at the right time. Every project builds on what came before.

Global patents, academic papers, commercial data, monitoring, and research services. No more juggling vendors.

The Cypris Innovation Database

500M+ documents globally sourced and updated daily. "The Bloomberg Terminal for innovation data." — R&D World Magazine

from 20K+ journals

from 150+ countries

across 200+ countries

optimized for AI

Built for enterprise security

American built. American hosted. Your data stays yours.

Based in United States

Certification

- U.S.-based infrastructure across datacenters, hosting, and operations. Backed by U.S. investors and stakeholders. No offshore data routing, no exceptions. Trusted by Western-allied governments and mission-critical defense and research organizations.

- SOC 2 Type II certified with continuous monitoring and maintenance of all security systems. Tenant isolation by design. End-to-end encryption across the entire platform.

One subscription across 3 solutions

Everything your team needs to research, retain, and monitor

AI Dashboard

Discover and analyze global R&D and IP data in one place. Access 500M+ full-text patents, closed and open access papers, chemical compounds, and market intelligence powered by the best models from OpenAI, Anthropic, and Google.

Knowledge Management

Centralize your internal R&D data and make it work alongside external intelligence. Upload documents, capture institutional knowledge, and let AI surface insights when you need them.

Research Briefs

Custom reports built by trained analysts using the latest in AI and data science. From prior art searches to competitive landscapes, get bespoke research tailored to your strategic questions.

Why Cypris stands apart

Enterprise R&D demands more than generic AI tools.

Knowledge is power

What can your team accomplish with the power of AI?

Unlock AI-powered innovation for your team

Schedule a demo to learn more about what we can do

Executive Summary

In 2024, US patent infringement jury verdicts totaled $4.19 billion across 72 cases. Twelve individual verdicts exceeded $100 million. The largest single award, $857 million in General Access Solutions v. Cellco Partnership (Verizon), exceeded the annual R&D budget of many mid-market technology companies. In the first half of 2025 alone, total damages reached an additional $1.91 billion.

The consequences of incomplete patent intelligence are not abstract. In what has become one of the most instructive IP disputes in recent history, Masimo’s pulse oximetry patents triggered a US import ban on certain Apple Watch models, forcing Apple to disable its blood oxygen feature across an entire product line, halt domestic sales of affected models, invest in a hardware redesign, and ultimately face a $634 million jury verdict in November 2025. Apple—a company with one of the most sophisticated intellectual property organizations on earth—spent years in litigation over technology it might have designed around during development.

For organizations with fewer resources than Apple, the risk calculus is starker. A mid-size materials company, a university spinout, or a defense contractor developing next-generation battery technology cannot absorb a nine-figure verdict or a multi-year injunction. For these organizations, the patent landscape analysis conducted during the development phase is the primary risk mitigation mechanism. The quality of that analysis is not a matter of convenience. It is a matter of survival.

And yet, a growing number of R&D and IP teams are conducting that analysis using general-purpose AI tools—ChatGPT, Claude, Microsoft Co-Pilot—that were never designed for patent intelligence and are structurally incapable of delivering it.

This report presents the findings of a controlled comparison study in which identical patent landscape queries were submitted to four AI-powered tools: Cypris (a purpose-built R&D intelligence platform), ChatGPT (OpenAI), Claude (Anthropic), and Microsoft Co-Pilot. Two technology domains were tested: solid-state lithium-sulfur battery electrolytes using garnet-type LLZO ceramic materials (freedom-to-operate analysis), and bio-based polyamide synthesis from castor oil derivatives (competitive intelligence).

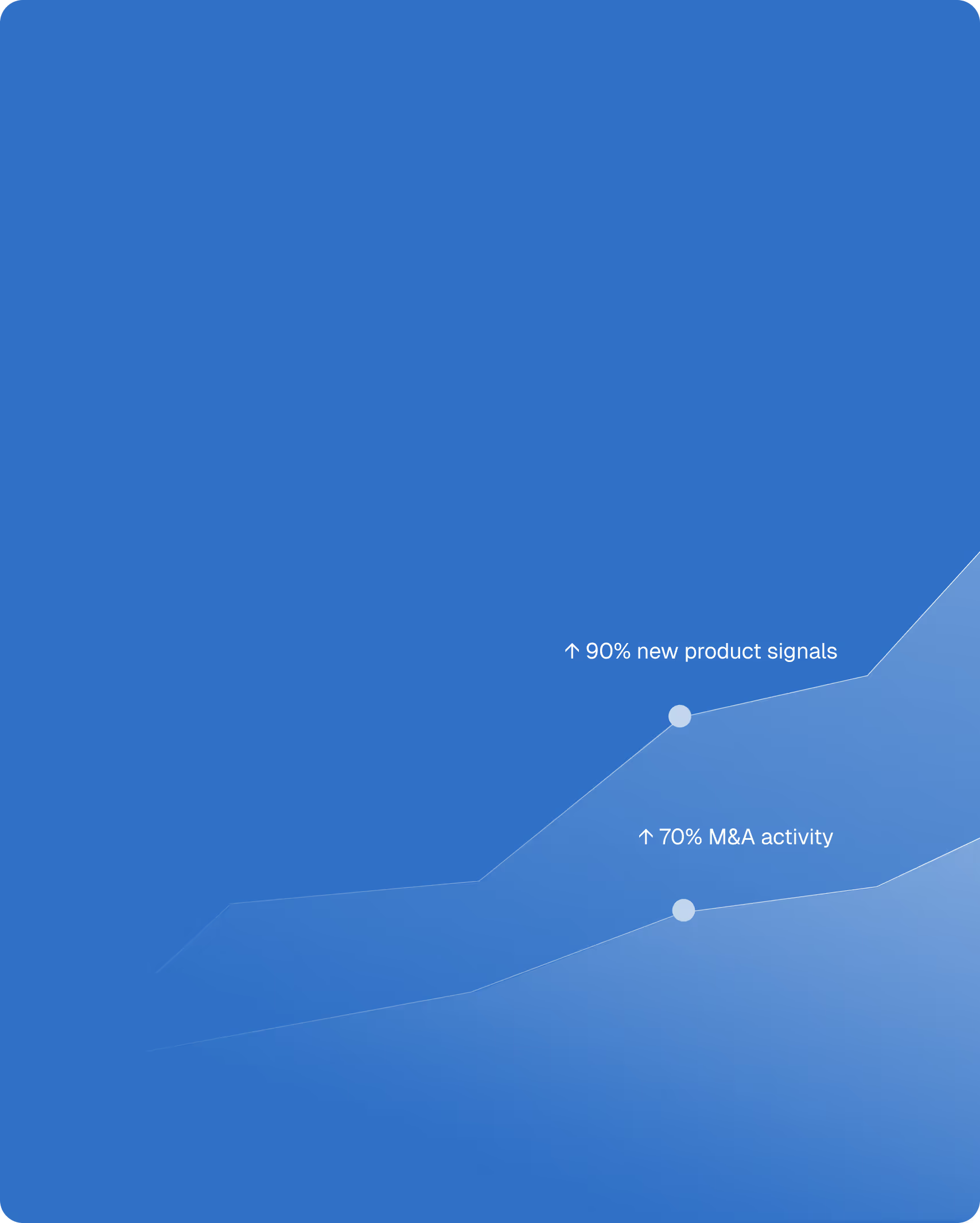

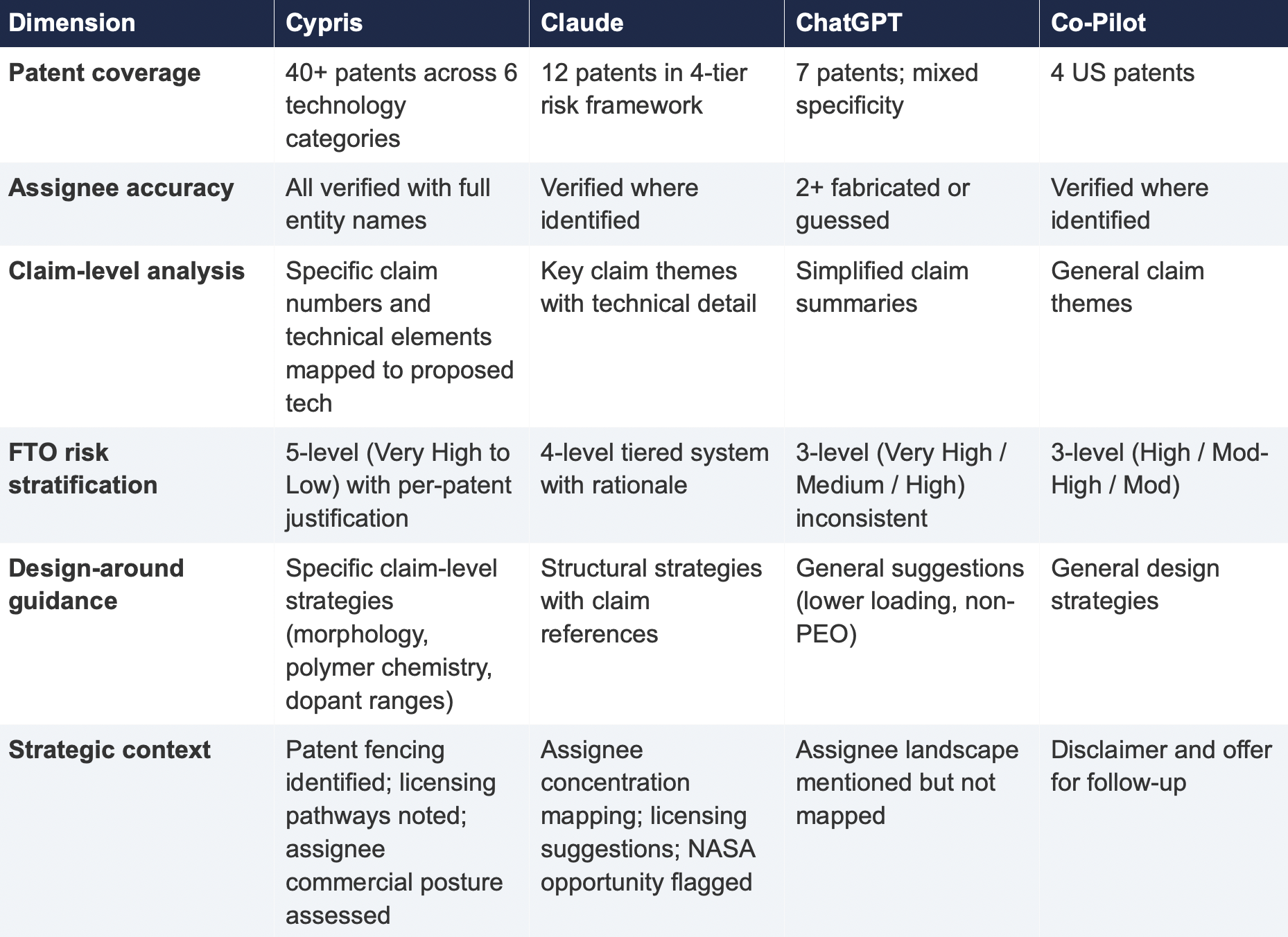

The results reveal a significant and structurally persistent gap. In Test 1, Cypris identified over 40 active US patents and published applications with granular FTO risk assessments. Claude identified 12. ChatGPT identified 7, several with fabricated attribution. Co-Pilot identified 4. Among the patents surfaced exclusively by Cypris were filings rated as “Very High” FTO risk that directly claim the technology architecture described in the query. In Test 2, Cypris cited over 100 individual patent filings with full attribution to substantiate its competitive landscape rankings. No general-purpose model cited a single patent number.

The most active sectors for patent enforcement—semiconductors, AI, biopharma, and advanced materials—are the same sectors where R&D teams are most likely to adopt AI tools for intelligence workflows. The findings of this report have direct implications for any organization using general-purpose AI to inform patent strategy, competitive intelligence, or R&D investment decisions.

1. Methodology

A single patent landscape query was submitted verbatim to each tool on March 27, 2026. No follow-up prompts, clarifications, or iterative refinements were provided. Each tool received one opportunity to respond, mirroring the workflow of a practitioner running an initial landscape scan.

1.1 Query

Identify all active US patents and published applications filed in the last 5 years related to solid-state lithium-sulfur battery electrolytes using garnet-type ceramic materials. For each, provide the assignee, filing date, key claims, and current legal status. Highlight any patents that could pose freedom-to-operate risks for a company developing a Li₇La₃Zr₂O₁₂(LLZO)-based composite electrolyte with a polymer interlayer.

1.2 Tools Evaluated

1.3 Evaluation Criteria

Each response was assessed across six dimensions: (1) number of relevant patents identified, (2) accuracy of assignee attribution, (3) completeness of filing metadata (dates, legal status), (4) depth of claim analysis relative to the proposed technology, (5) quality of FTO risk stratification, and (6) presence of actionable design-around or strategic guidance.

2. Findings

2.1 Coverage Gap

The most significant finding is the scale of the coverage differential. Cypris identified over 40 active US patents and published applications spanning LLZO-polymer composite electrolytes, garnet interface modification, polymer interlayer architectures, lithium-sulfur specific filings, and adjacent ceramic composite patents. The results were organized by technology category with per-patent FTO risk ratings.

Claude identified 12 patents organized in a four-tier risk framework. Its analysis was structurally sound and correctly flagged the two highest-risk filings (Solid Energies US 11,967,678 and the LLZO nanofiber multilayer US 11,923,501). It also identified the University of Maryland / Wachsman portfolio as a concentration risk and noted the NASA SABERS portfolio as a licensing opportunity. However, it missed the majority of the landscape, including the entire Corning portfolio, GM's interlayer patents, the Korea Institute of Energy Research three-layer architecture, and the Hon Hai/SolidEdge lithium-sulfur specific filing.

ChatGPT identified 7 patents, but the quality of attribution was inconsistent. It listed assignees as "Likely DOE / national lab ecosystem" and "Likely startup / defense contractor cluster" for two filings, language that indicates the model was inferring rather than retrieving assignee data. In a freedom-to-operate context, an unverified assignee attribution is functionally equivalent to no attribution, as it cannot support a licensing inquiry or risk assessment.

Co-Pilot identified 4 US patents. Its output was the most limited in scope, missing the Solid Energies portfolio entirely, the UMD / Wachsman portfolio, Gelion / Johnson Matthey, NASA SABERS, and all Li-S specific LLZO filings.

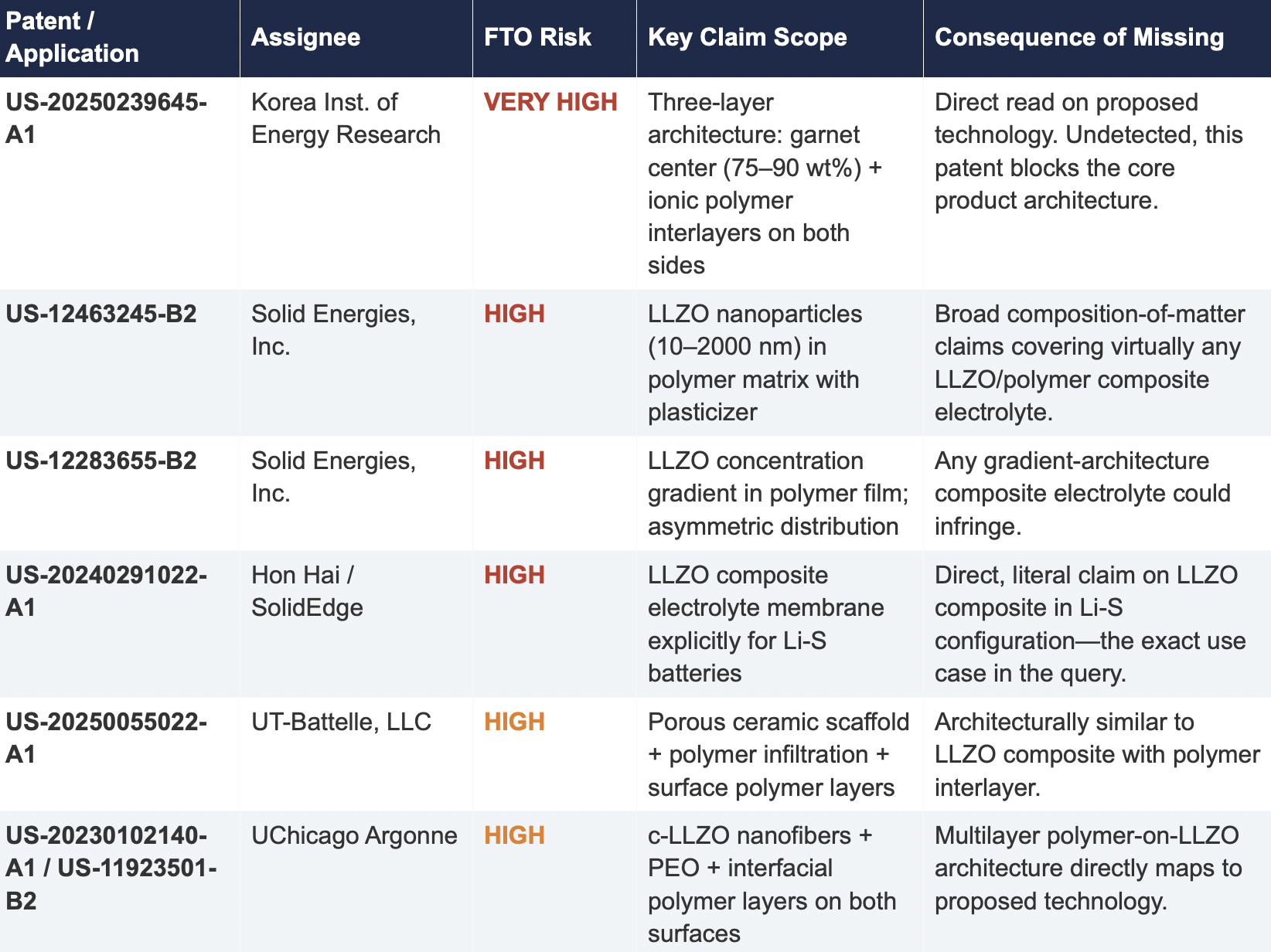

2.2 Critical Patents Missed by Public Models

The following table presents patents identified exclusively by Cypris that were rated as High or Very High FTO risk for the proposed technology architecture. None were surfaced by any general-purpose model.

2.3 Patent Fencing: The Solid Energies Portfolio

Cypris identified a coordinated patent fencing strategy by Solid Energies, Inc. that no general-purpose model detected at scale. Solid Energies holds at least four granted US patents and one published application covering LLZO-polymer composite electrolytes across compositions (US-12463245-B2), gradient architectures (US-12283655-B2), electrode integration (US-12463249-B2), and manufacturing processes (US-20230035720-A1). Claude identified one Solid Energies patent (US 11,967,678) and correctly rated it as the highest-priority FTO concern but did not surface the broader portfolio. ChatGPT and Co-Pilot identified zero Solid Energies filings.

The practical significance is that a company relying on any individual patent hit would underestimate the scope of Solid Energies' IP position. The fencing strategy (covering the composition, the architecture, the electrode integration, and the manufacturing method) means that identifying a single design-around for one patent does not resolve the FTO exposure from the portfolio as a whole. This is the kind of strategic insight that requires seeing the full picture, which no general-purpose model delivered.

2.4 Assignee Attribution Quality

ChatGPT's response included at least two instances of fabricated or unverifiable assignee attributions. For US 11,367,895 B1, the listed assignee was "Likely startup / defense contractor cluster." For US 2021/0202983 A1, the assignee was described as "Likely DOE / national lab ecosystem." In both cases, the model appears to have inferred the assignee from contextual patterns in its training data rather than retrieving the information from patent records.

In any operational IP workflow, assignee identity is foundational. It determines licensing strategy, litigation risk, and competitive positioning. A fabricated assignee is more dangerous than a missing one because it creates an illusion of completeness that discourages further investigation. An R&D team receiving this output might reasonably conclude that the landscape analysis is finished when it is not.
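One way to keep inferred attributions from slipping into a landscape unnoticed is a simple screening pass over the assignee column. The sketch below is illustrative only: the hedge-word list and the function name `is_unverified_assignee` are assumptions, not part of any tool evaluated in this study, and a text filter of this kind can only flag suspicious wording. It cannot confirm that a clean-looking assignee is correct, which still requires a lookup against patent records.

```python
import re

# Hypothetical markers of an inferred (not retrieved) assignee; extend as needed.
HEDGE_PATTERN = re.compile(
    r"\b(likely|probably|possibly|unknown|cluster|ecosystem)\b", re.IGNORECASE
)


def is_unverified_assignee(assignee: str) -> bool:
    """Return True when the assignee text is empty or reads like a guess."""
    text = assignee.strip()
    return not text or bool(HEDGE_PATTERN.search(text))


# Assignee strings as quoted in this report.
rows = [
    {"patent": "US 11,367,895 B1", "assignee": "Likely startup / defense contractor cluster"},
    {"patent": "US 2021/0202983 A1", "assignee": "Likely DOE / national lab ecosystem"},
    {"patent": "US 11,967,678", "assignee": "Solid Energies, Inc."},
]

# Collect the filings whose attribution should be re-checked before use.
flagged = [row["patent"] for row in rows if is_unverified_assignee(row["assignee"])]
print(flagged)
```

Rows flagged this way would be routed to manual verification rather than dropped, since the patent itself may still be relevant.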

3. Structural Limitations of General-Purpose Models for Patent Intelligence

3.1 Training Data Is Not Patent Data

Large language models are trained on web-scraped text. Their knowledge of the patent record is derived from whatever fragments appeared in their training corpus: blog posts mentioning filings, news articles about litigation, snippets of Google Patents pages that were crawlable at the time of data collection. They do not have systematic, structured access to the USPTO database. They cannot query patent classification codes, parse claim language against a specific technology architecture, or verify whether a patent has been assigned, abandoned, or subjected to terminal disclaimer since their training data was collected.

This is not a limitation that improves with scale. A larger training corpus does not produce systematic patent coverage; it produces a larger but still arbitrary sampling of the patent record. The result is that general-purpose models will consistently surface well-known patents from heavily discussed assignees (QuantumScape, for example, appeared in most responses) while missing commercially significant filings from less publicly visible entities (Solid Energies, Korea Institute of Energy Research, Shenzhen Solid Advanced Materials).

3.2 The Web Is Closing to Model Scrapers

The data access problem is structural and worsening. As of mid-2025, Cloudflare reported that among the top 10,000 web domains, the majority now fully disallow AI crawlers such as GPTBot and ClaudeBot via robots.txt. The trend has accelerated from partial restrictions to outright blocks, and the crawl-to-referral ratios reveal the underlying tension: OpenAI's crawlers access approximately 1,700 pages for every referral they return to publishers; Anthropic's ratio exceeds 73,000 to 1.

Patent databases, scientific publishers, and IP analytics platforms are among the most restrictive content categories. A Duke University study in 2025 found that several categories of AI-related crawlers never request robots.txt files at all. The practical consequence is that the knowledge gap between what a general-purpose model "knows" about the patent landscape and what actually exists in the patent record is widening with each training cycle. A landscape query that a general-purpose model partially answered in 2023 may return less useful information in 2026.
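To make the robots.txt mechanism concrete, the following sketch uses Python's standard-library `urllib.robotparser` to evaluate a sample policy. The robots.txt content, the `example.com` URL, and the helper name `allowed` are hypothetical and chosen only for illustration; they do not reproduce any real publisher's file. The check also only reports what a compliant crawler is asked to do, which is separate from whether a given crawler honors it.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt that blocks two AI crawlers and allows everyone else.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: ClaudeBot
Disallow: /

User-agent: *
Allow: /
"""


def allowed(user_agent: str, url: str, robots_txt: str = ROBOTS_TXT) -> bool:
    """Return True if the policy permits user_agent to fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())  # parse in memory, no network access
    return parser.can_fetch(user_agent, url)


url = "https://example.com/patents/some-record"
for agent in ("GPTBot", "ClaudeBot", "SomeOtherBot"):
    print(agent, allowed(agent, url))
```

Under this sample policy the two named AI crawlers are refused and the generic agent is admitted, which is the "outright block" pattern the paragraph above describes.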

3.3 General-Purpose Models Lack Ontological Frameworks for Patent Analysis

A freedom-to-operate analysis is not a summarization task. It requires understanding claim scope, prosecution history, continuation and divisional chains, assignee normalization (a single company may appear under multiple entity names across patent records), priority dates versus filing dates versus publication dates, and the relationship between dependent and independent claims. It requires mapping the specific technical features of a proposed product against independent claim language—not keyword matching.

General-purpose models do not have these frameworks. They pattern-match against training data and produce outputs that adopt the format and tone of patent analysis without the underlying data infrastructure. The format is correct. The confidence is high. The coverage is incomplete in ways that are not visible to the user.
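Assignee normalization, one of the requirements listed above, can be illustrated with a small sketch. Everything here is an assumption made for illustration: the suffix list, the function name `normalize_assignee`, the placeholder record ids, and the spelling variants are invented, and production systems rely on curated alias tables and corporate-tree data rather than suffix stripping alone.

```python
import re
from collections import defaultdict

# Hypothetical legal-form suffixes to strip from the end of a name.
LEGAL_SUFFIXES = {"inc", "incorporated", "corp", "corporation", "co", "ltd", "llc", "gmbh"}


def normalize_assignee(name: str) -> str:
    """Lowercase, drop punctuation, and strip trailing legal-form suffixes."""
    tokens = re.sub(r"[^\w\s]", " ", name.lower()).split()
    while tokens and tokens[-1] in LEGAL_SUFFIXES:
        tokens.pop()
    return " ".join(tokens)


# Placeholder records showing how one entity can appear under several spellings.
records = [
    ("record-1", "Solid Energies, Inc."),
    ("record-2", "SOLID ENERGIES INC"),
    ("record-3", "Solid Energies Inc."),
    ("record-4", "Corning Incorporated"),
]

# Group record ids under the normalized entity name.
portfolios = defaultdict(list)
for record_id, assignee in records:
    portfolios[normalize_assignee(assignee)].append(record_id)

for entity, ids in portfolios.items():
    print(entity, ids)
```

Grouping by a normalized key is what allows a portfolio-level view, such as the fencing pattern in Section 2.3, to emerge from individual records.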

4. Comparative Output Quality

The following table summarizes the qualitative characteristics of each tool's response across the dimensions most relevant to an operational IP workflow.

5. Implications for R&D and IP Organizations

5.1 The Confidence Problem

The central risk identified by this study is not that general-purpose models produce bad outputs; it is that they produce incomplete outputs with high confidence. Each model delivered its results in a professional format with structured analysis, risk ratings, and strategic recommendations. At no point did any model indicate the boundaries of its knowledge or flag that its results represented a fraction of the available patent record. A practitioner receiving one of these outputs would have no signal that the analysis was incomplete unless they independently validated it against a comprehensive data source.

This creates an asymmetric risk profile: the better the format and tone of the output, the less likely the user is to question its completeness. In a corporate environment where AI outputs are increasingly treated as first-pass analysis, this dynamic incentivizes under-investigation at precisely the moment when thoroughness is most critical.

5.2 The Diversification Illusion

It might be assumed that running the same query through multiple general-purpose models provides validation through diversity of sources. This study suggests otherwise. While the four tools returned different subsets of patents, all operated under the same structural constraints: training data rather than live patent databases, web-scraped content rather than structured IP records, and general-purpose reasoning rather than patent-specific ontological frameworks. Running the same query through three constrained tools does not produce triangulation; it produces three partial views of the same incomplete picture.
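The Test 1 counts allow a rough bound on what combining the general-purpose outputs could achieve. The sketch below rests on two stated assumptions: the Cypris result is treated as exactly 40 filings (the report says "over 40", so this is a floor), and every patent returned by a general-purpose model is assumed to be a valid member of that reference set. Both assumptions favor the general-purpose tools.

```python
# Patent counts reported in Section 2.1.
general_purpose_counts = {"Claude": 12, "ChatGPT": 7, "Co-Pilot": 4}
reference_floor = 40  # "over 40" in the study, treated here as a lower bound

# Best case: the three lists do not overlap at all.
best_case_union = sum(general_purpose_counts.values())

# Worst case: the smaller lists are subsets of the largest one.
worst_case_union = max(general_purpose_counts.values())

best_case_share = best_case_union / reference_floor
worst_case_share = worst_case_union / reference_floor

print(f"Combined coverage is between {worst_case_share:.1%} and {best_case_share:.1%}")
```

Even in the most favorable case, pooling all three outputs reaches 23 of at least 40 filings, so a substantial share of the reference landscape stays out of view.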

5.3 The Appropriate Use Boundary

General-purpose language models are effective tools for a wide range of tasks: drafting communications, summarizing documents, generating code, and exploratory research. The finding of this study is not that these tools lack value but that their value boundary does not extend to decisions that carry existential commercial risk.

Patent landscape analysis, freedom-to-operate assessment, and competitive intelligence that informs R&D investment decisions fall outside that boundary. These are workflows where the completeness and verifiability of the underlying data are not merely desirable but are the primary determinant of whether the analysis has value. A patent landscape that captures 10% of the relevant filings, regardless of how well-formatted or confidently presented, is a liability rather than an asset.

6. Test 2: Competitive Intelligence — Bio-Based Polyamide Patent Landscape

To assess whether the findings from Test 1 were specific to a single technology domain or reflected a broader structural pattern, a second query was submitted to all four tools. This query shifted from freedom-to-operate analysis to competitive intelligence, asking each tool to identify the top 10 organizations by patent filing volume in bio-based polyamide synthesis from castor oil derivatives over the past three years, with summaries of technical approach, co-assignee relationships, and portfolio trajectory.

6.1 Query

6.2 Summary of Results

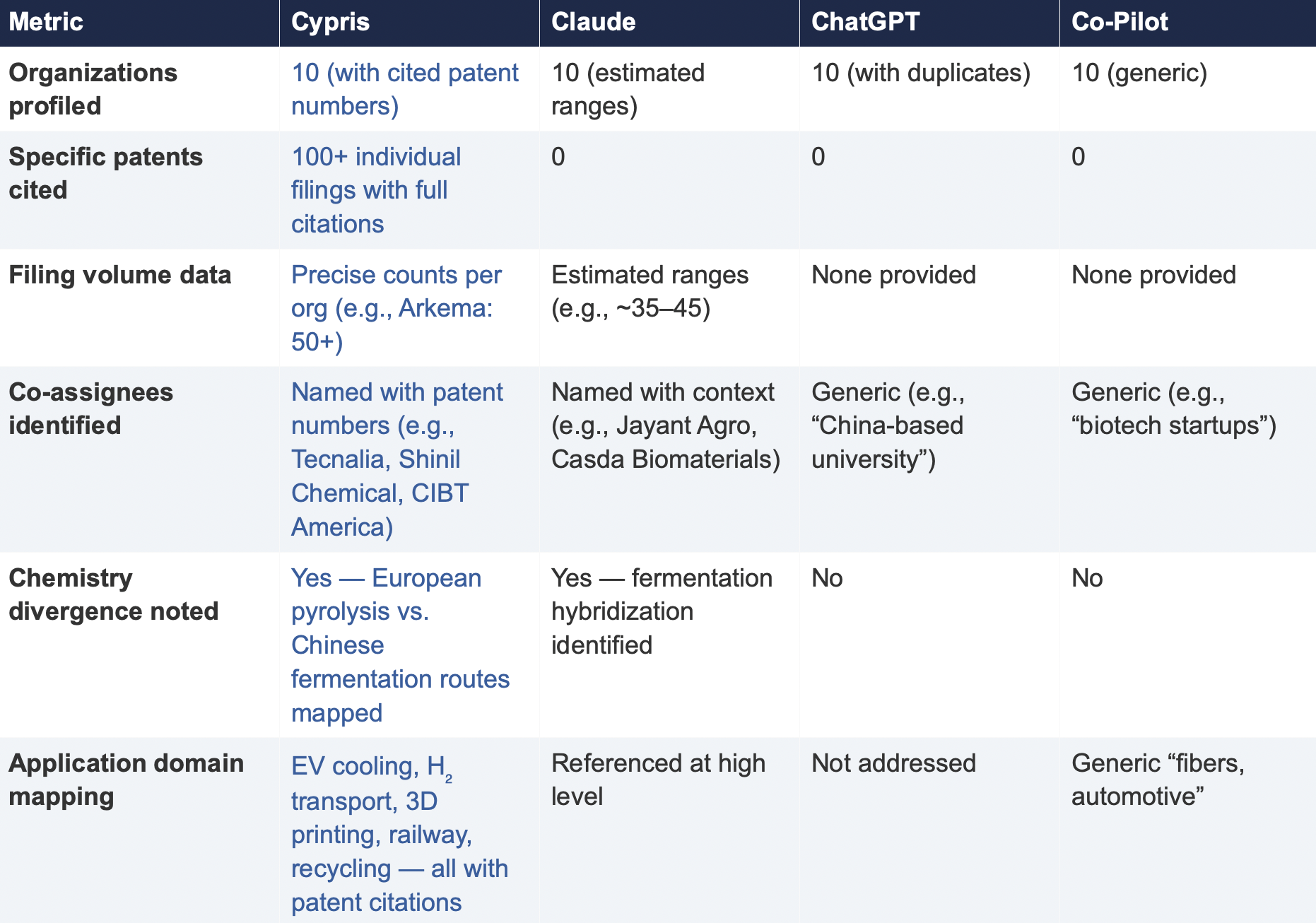

6.3 Key Differentiators

Verifiability

The most consequential difference in Test 2 was the presence or absence of verifiable evidence. Cypris cited over 100 individual patent filings with full patent numbers, assignee names, and publication dates. Every claim about an organization’s technical focus, co-assignee relationships, and filing trajectory was anchored to specific documents that a practitioner could independently verify in USPTO, Espacenet, or WIPO PATENTSCOPE. No general-purpose model cited a single patent number. Claude produced the most structured and analytically useful output among the public models, with estimated filing ranges, product names, and strategic observations that were directionally plausible. However, without underlying patent citations, every claim in the response requires independent verification before it can inform a business decision. ChatGPT and Co-Pilot offered thinner profiles with no filing counts and no patent-level specificity.

Data Integrity

ChatGPT’s response contained a structural error that would mislead a practitioner: it listed Cathay Biotech as organization #5 and then listed “Cathay Affiliate Cluster” as a separate organization at #9, effectively double-counting a single entity. It repeated this pattern with Toray at #4 and “Toray (Additional Programs)” at #10. In a competitive intelligence context where the ranking itself is the deliverable, this kind of error distorts the landscape and could lead to misallocation of competitive monitoring resources.

Organizations Missed

Cypris identified Kingfa Sci. & Tech. (8–10 filings with a differentiated furan diacid-based polyamide platform) and Zhejiang NHU (4–6 filings focused on continuous polymerization process technology) as emerging players that no general-purpose model surfaced. Both represent potential competitive threats or partnership opportunities that would be invisible to a team relying on public AI tools. Conversely, ChatGPT included organizations such as ANTA and Jiangsu Taiji that appear to be downstream users rather than significant patent filers in synthesis, suggesting the model was conflating commercial activity with IP activity.

Strategic Depth

Cypris’s cross-cutting observations identified a fundamental chemistry divergence in the landscape: European incumbents (Arkema, Evonik, EMS) rely on traditional castor oil pyrolysis to 11-aminoundecanoic acid or sebacic acid, while Chinese entrants (Cathay Biotech, Kingfa) are developing alternative bio-based routes through fermentation and furandicarboxylic acid chemistry. This represents a potential long-term disruption to the castor oil supply chain dependency that Western players have built their IP strategies around. Claude identified a similar theme at a higher level of abstraction. Neither ChatGPT nor Co-Pilot noted the divergence.

6.4 Test 2 Conclusion

Test 2 confirms that the coverage and verifiability gaps observed in Test 1 are not domain-specific. In a competitive intelligence context, where the deliverable is a ranked landscape of organizational IP activity, the same structural limitations apply. General-purpose models can produce plausible-looking top-10 lists with reasonable organizational names, but they cannot anchor those lists to verifiable patent data, they cannot provide precise filing volumes, and they cannot identify emerging players whose patent activity is visible in structured databases but absent from the web-scraped content that general-purpose models rely on.

7. Conclusion

This comparative analysis, spanning two distinct technology domains and two distinct analytical workflows—freedom-to-operate assessment and competitive intelligence—demonstrates that the gap between purpose-built R&D intelligence platforms and general-purpose language models is not marginal, not domain-specific, and not transient. It is structural and consequential.

In Test 1 (LLZO garnet electrolytes for Li-S batteries), the purpose-built platform identified more than three times as many patents as the best-performing general-purpose model and ten times as many as the lowest-performing one. Among the patents identified exclusively by the purpose-built platform were filings rated as Very High FTO risk that directly claim the proposed technology architecture. In Test 2 (bio-based polyamide competitive landscape), the purpose-built platform cited over 100 individual patent filings to substantiate its organizational rankings; no general-purpose model cited a single patent number.

The structural drivers of this gap—reliance on training data rather than live patent feeds, the accelerating closure of web content to AI scrapers, and the absence of patent-specific analytical frameworks—are not transient. They are inherent to the architecture of general-purpose models and will persist regardless of increases in model capability or training data volume.

For R&D and IP leaders, the practical implication is clear: general-purpose AI tools should be used for general-purpose tasks. Patent intelligence, competitive landscaping, and freedom-to-operate analysis require purpose-built systems with direct access to structured patent data, domain-specific analytical frameworks, and the ability to surface what a general-purpose model cannot—not because it chooses not to, but because it structurally cannot access the data.

The question for every organization making R&D investment decisions today is whether the tools informing those decisions have access to the evidence base those decisions require. This study suggests that for the majority of general-purpose AI tools currently in use, the answer is no.

About This Report

This report was produced by Cypris (IP Web, Inc.), an AI-powered R&D intelligence platform serving corporate innovation, IP, and R&D teams at organizations including NASA, Johnson & Johnson, the US Air Force, and Los Alamos National Laboratory. Cypris aggregates over 500 million data points from patents, scientific literature, grants, corporate filings, and news to deliver structured intelligence for technology scouting, competitive analysis, and IP strategy.

The comparative tests described in this report were conducted on March 27, 2026. All outputs are preserved in their original form. Patent data cited from the Cypris reports has been verified against USPTO Patent Center and WIPO PATENTSCOPE records as of the same date. To conduct a similar analysis for your technology domain, contact info@cypris.ai or visit cypris.ai.

Knowledge Management for R&D Teams: Building a Central Hub for Internal Projects and External Innovation Intelligence

Research and development teams generate enormous volumes of institutional knowledge through experiments, project documentation, technical meetings, and informal problem-solving conversations. This knowledge represents decades of accumulated expertise and millions of dollars in research investment. Yet most organizations struggle to capture, organize, and leverage this intellectual capital effectively. The result is that every new research initiative essentially starts from zero, with teams unable to build systematically on what the organization has already learned.

The challenge extends beyond simply documenting what teams know internally. R&D professionals must also connect their institutional knowledge with the broader landscape of patents, scientific literature, competitive intelligence, and market trends that inform strategic research decisions. Without systems that unify these information sources, researchers operate in silos where discovery is fragmented, duplicative, and disconnected from institutional memory.

Enterprise knowledge management for R&D has evolved from static document repositories into dynamic intelligence systems that synthesize information across sources. The most effective approaches treat knowledge management not as an administrative burden but as the organizational brain that enables teams to progress innovation along a linear path rather than repeatedly circling back to first principles.

The True Cost of Starting From Scratch

When knowledge remains siloed across departments, project files, and individual researchers' memories, organizations pay significant hidden costs. According to the International Data Corporation, Fortune 500 companies collectively lose roughly $31.5 billion annually by failing to share knowledge effectively, averaging over $60 million per company. The Panopto Workplace Knowledge and Productivity Report arrives at similar figures through different methodology, finding that the average large US business loses $47 million in productivity each year as a direct result of inefficient knowledge sharing, with companies of 50,000 employees losing upwards of $130 million annually.

The most damaging consequence in R&D environments is duplicate research. According to Deloitte's analysis of pharmaceutical R&D data quality, significant work duplication persists across research organizations, with teams repeatedly building similar databases and pursuing parallel investigations without awareness of prior work. When fragmented knowledge systems fail to surface internal prior art, organizations waste months redeveloping solutions that already exist within their own walls.

These scenarios repeat across industries wherever institutional knowledge fails to flow effectively between teams and time zones. Without a centralized intelligence system, every research question becomes an expedition into unknown territory even when the organization has already mapped that ground. Teams cannot know what they do not know exists, so they default to external searches and first-principles investigation rather than building on institutional foundations.

The Tribal Knowledge Paradox

Tribal knowledge refers to undocumented information that exists only in the minds of certain employees and travels through word-of-mouth rather than formal documentation systems. In R&D environments, tribal knowledge often represents the most valuable institutional expertise: the experimental approaches that consistently produce better results, the vendor relationships that accelerate prototype development, the technical intuitions about why certain formulations work better than theoretical predictions suggest.

The paradox is that tribal knowledge is simultaneously the organization's greatest asset and its most significant vulnerability. According to the Panopto Workplace Knowledge and Productivity Report, approximately 42 percent of institutional knowledge is unique to the individual employee. When experienced researchers retire or change companies, they take irreplaceable understanding of legacy systems, historical research decisions, and cross-disciplinary connections with them.

The deeper problem is that without systems designed to surface and synthesize tribal knowledge, it might as well not exist for most of the organization. A researcher in one division has no way of knowing that a colleague three time zones away solved a similar problem two years ago. A newly hired scientist cannot access the decades of accumulated intuition that their predecessor developed through trial and error. Teams operate as if they are the first people to ever investigate their research questions, even when the organization possesses substantial relevant expertise.

This is not a documentation problem that can be solved by asking researchers to write more detailed reports. The issue is architectural. Traditional knowledge management systems store documents but cannot connect concepts, surface relevant precedents, or synthesize insights across sources. Researchers searching these systems must already know what they are looking for, which defeats the purpose when the goal is discovering what the organization already knows about unfamiliar territory.

Why Traditional Approaches Create Siloed Discovery

Generic knowledge management platforms often fail R&D teams because they treat knowledge as static content to be stored and retrieved rather than dynamic intelligence to be synthesized and connected. Document management systems can store experimental protocols and project reports, but they cannot automatically connect a current research question to relevant past experiments, competitive patents, or emerging scientific literature.

R&D knowledge exists across multiple formats and systems: electronic lab notebooks, project management tools, email threads, meeting recordings, patent databases, and scientific publications. Traditional platforms force researchers to search across these sources independently and mentally synthesize the results. This fragmented approach creates discovery silos where each researcher or team operates within their own information bubble, unaware of relevant knowledge that exists elsewhere in the organization or in external sources.

According to a McKinsey Global Institute report, employees spend nearly 20 percent of their time searching for or seeking help on information that already exists within their companies. The Panopto research quantifies this further, finding that employees waste 5.3 hours every week either waiting for vital information from colleagues or working to recreate existing institutional knowledge. For R&D professionals whose fully loaded costs often exceed $150,000 annually, this represents enormous productivity losses that compound across teams and years.
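The figures above can be combined into a rough per-researcher estimate. This is a back-of-the-envelope sketch, not a figure from either cited report: it assumes a 40-hour work week, uses $150,000 as the fully loaded cost (the text says costs often exceed this), and apportions cost linearly by hours. The team size of 100 is likewise a hypothetical input.

```python
# Inputs from the paragraph above.
hours_lost_per_week = 5.3
fully_loaded_cost = 150_000  # USD per year, used as a floor

# Assumptions introduced for this estimate.
hours_per_week = 40
team_size = 100  # hypothetical R&D organization

share_of_time_lost = hours_lost_per_week / hours_per_week
cost_per_researcher = share_of_time_lost * fully_loaded_cost
cost_per_team = cost_per_researcher * team_size

print(f"Share of working time lost: {share_of_time_lost:.2%}")
print(f"Annual cost per researcher: ${cost_per_researcher:,.0f}")
print(f"Annual cost for a team of {team_size}: ${cost_per_team:,.0f}")
```

Under these assumptions the loss is about 13 percent of working time, or roughly $19,900 per researcher per year, which scales to nearly $2 million for a 100-person team.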

The consequences accumulate over time. Without visibility into what colleagues are investigating, teams pursue overlapping research directions without realizing the duplication until resources have been spent. Without connection to external patent databases, researchers may invest months developing approaches that competitors have already protected. Without integration with scientific literature, teams may miss published findings that would accelerate or redirect their investigations.

The Case for a Centralized R&D Brain

The solution is not simply better documentation or more comprehensive search. R&D organizations need systems that function as the collective brain of the research team, continuously synthesizing institutional knowledge with external innovation intelligence and surfacing relevant insights at the moment of need.

This architectural shift transforms how research progresses. Instead of each project starting from zero, new initiatives begin with comprehensive situational awareness: what has the organization already learned about relevant technologies, what have competitors patented in adjacent spaces, what does recent scientific literature suggest about feasibility, and what market signals should inform prioritization. This foundation enables teams to progress innovation along a linear path, building systematically on accumulated knowledge rather than repeatedly rediscovering the same territory.

The emergence of AI-powered knowledge systems has made this vision achievable. Retrieval-augmented generation technology enables platforms to combine large language model capabilities with organizational knowledge bases, delivering responses that are contextually relevant and grounded in reliable sources. According to McKinsey's analysis of RAG technology, this approach enables AI systems to access and reference information outside their training data, including an organization's specific knowledge base, before generating responses. Rather than returning lists of potentially relevant documents, these systems can synthesize information across sources to directly answer research questions with citations to underlying evidence.

When a researcher asks about previous work on a specific formulation, the system does not simply retrieve documents that mention relevant keywords. It synthesizes information from internal project files, relevant patents, and scientific literature to provide an integrated answer that reflects the full scope of available knowledge. This synthesis function replicates the institutional memory that senior researchers carry mentally but makes it accessible to entire teams regardless of tenure.
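The retrieve-then-generate pattern described above can be sketched in a few lines. This is a toy illustration, not how Cypris or any specific product works: it uses bag-of-words cosine similarity as a stand-in for a real embedding model, the documents and ids are invented, and the final call to a language model is left out.

```python
import math
import re
from collections import Counter
from dataclasses import dataclass


@dataclass
class Document:
    doc_id: str
    source: str   # e.g. "internal", "patent", "literature"
    text: str


def tokenize(text: str) -> Counter:
    """Lowercase bag-of-words; a stand-in for a real embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two term-count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(question: str, corpus: list[Document], k: int = 3) -> list[Document]:
    """Return the k documents most similar to the question, across all sources."""
    q = tokenize(question)
    ranked = sorted(corpus, key=lambda d: cosine(q, tokenize(d.text)), reverse=True)
    return ranked[:k]


def build_prompt(question: str, passages: list[Document]) -> str:
    """Assemble a grounded prompt that asks the model to cite passage ids."""
    context = "\n".join(f"[{d.doc_id}] ({d.source}) {d.text}" for d in passages)
    return (
        "Answer using only the passages below and cite their ids.\n\n"
        f"{context}\n\nQuestion: {question}"
    )


# Hypothetical mixed corpus: internal notes, a patent, a paper, and noise.
corpus = [
    Document("INT-1", "internal", "2019 project notes: sulfide electrolyte formulation degraded in humid air"),
    Document("PAT-1", "patent", "Sulfide solid electrolyte formulation with improved moisture stability"),
    Document("LIT-1", "literature", "Review of polymer binders for cathode coatings"),
    Document("INT-2", "internal", "Cafeteria menu for the spring quarter"),
]

question = "What do we know about sulfide electrolyte formulation stability?"
top = retrieve(question, corpus, k=2)
prompt = build_prompt(question, top)
print(prompt)
```

The point of the design is that internal and external sources sit in one corpus and are ranked together, and that each passage carries an id so the generated answer can cite its evidence.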

Essential Capabilities for the R&D Knowledge Hub

Effective knowledge management for R&D teams requires capabilities that go beyond generic enterprise platforms. The system must handle the unique characteristics of research knowledge: highly technical content, evolving understanding that may contradict previous findings, complex relationships between concepts across disciplines, and integration with scientific databases and patent repositories.

Central repository functionality serves as the foundation. All project documentation, experimental data, meeting notes, technical presentations, and research communications should flow into a unified system where they can be searched, analyzed, and connected. This consolidation eliminates the micro-silos that develop when teams store knowledge in departmental drives, personal folders, or application-specific databases.

Integration with external innovation data distinguishes R&D-specific platforms from general knowledge management tools. Research decisions must account for competitive patent landscapes, emerging scientific discoveries, regulatory developments, and market intelligence. Platforms that combine internal project knowledge with access to comprehensive patent and scientific literature databases enable researchers to situate their work within the broader innovation landscape.

AI-powered synthesis capabilities transform knowledge management from passive storage into active research intelligence. When a researcher investigates a new direction, the system should automatically surface relevant internal precedents, related patents, pertinent scientific literature, and potential competitive considerations. This proactive intelligence delivery ensures that researchers benefit from institutional knowledge without needing to know in advance what questions to ask.

Collaborative features enable knowledge to flow between researchers without requiring extensive documentation effort. Question-and-answer functionality allows team members to pose technical queries that route to colleagues with relevant expertise. According to a case study from Starmind, PepsiCo R&D implemented such a system and found that 96 percent of questions asked were successfully answered, with researchers often discovering that colleagues sitting at adjacent desks possessed relevant expertise they had not known about.

Bridging Internal Knowledge and External Intelligence

The most significant evolution in R&D knowledge management involves bridging internal institutional knowledge with external innovation intelligence. Traditional approaches treated these as separate domains: internal knowledge management systems for capturing what the organization knows, and external database subscriptions for monitoring patents, scientific literature, and competitive activity.

This separation perpetuates siloed discovery. Researchers might conduct extensive internal searches about a technical approach without realizing that competitors have recently patented similar methods. Teams might pursue development directions that published scientific literature has already shown to be unpromising. Strategic planning might overlook market signals that would contextualize internal capability assessments.

Unified platforms that couple internal data with external innovation intelligence provide researchers with comprehensive situational awareness. When investigating a new research direction, teams can simultaneously assess what the organization already knows from past projects, what competitors have patented in adjacent spaces, what recent scientific publications suggest about technical feasibility, and what market intelligence indicates about commercial potential. This holistic view supports better research prioritization and faster identification of white-space opportunities.

Cypris exemplifies this integrated approach by providing R&D teams with unified access to over 500 million patents and scientific papers alongside capabilities for capturing and synthesizing internal project knowledge. Enterprise teams at companies including Johnson & Johnson, Honda, Yamaha, and Philip Morris International use the platform to query research questions and receive responses that draw on both institutional expertise and the global innovation landscape. The platform's proprietary R&D ontology ensures that technical concepts are correctly mapped across sources, preventing the missed connections that occur when systems rely on simple keyword matching.

This integration transforms Cypris into the central brain for R&D operations. Rather than maintaining separate workflows for internal knowledge management and external intelligence gathering, research teams work from a single platform that synthesizes all relevant information. The result is linear innovation progress where each research initiative builds systematically on everything the organization and the broader scientific community have already established.

Converting Tribal Knowledge into Organizational Intelligence

Converting tribal knowledge into systematic institutional intelligence requires technology platforms that reduce the friction of knowledge capture while maximizing the accessibility of captured knowledge. The goal is not comprehensive documentation of everything researchers know, but rather systems that make institutional expertise available at the moment of need without requiring extensive manual effort.

Intelligent question routing connects researchers with colleagues who possess relevant expertise, even when those connections would not be obvious from organizational charts or explicit expertise profiles. AI systems can analyze communication patterns, project histories, and documented expertise to identify the best person to answer specific technical questions. This capability surfaces tribal knowledge that would otherwise remain locked in individual minds.
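As a rough illustration of question routing, the sketch below ranks colleagues by how many terms in a question also appear in their project histories. Real systems would use richer signals such as communication patterns and learned embeddings; the names, project titles, and stopword list here are hypothetical.

```python
import re

# Small illustrative stopword list (an assumption, not a standard one).
STOPWORDS = {"who", "has", "with", "of", "the", "a", "an", "in", "for", "and"}


def terms(text: str) -> set[str]:
    """Lowercase alphabetic tokens in the text."""
    return set(re.findall(r"[a-z]+", text.lower()))


def route_question(question: str, histories: dict[str, list[str]], top_n: int = 2) -> list[tuple[str, int]]:
    """Rank people by term overlap between the question and their project history."""
    q_terms = terms(question) - STOPWORDS
    scores = {}
    for person, projects in histories.items():
        person_terms = set().union(*(terms(p) for p in projects))
        scores[person] = len(q_terms & person_terms)
    # Highest score first; ties broken alphabetically for stable output.
    ranked = sorted(scores.items(), key=lambda kv: (-kv[1], kv[0]))
    return [(person, score) for person, score in ranked if score > 0][:top_n]


# Hypothetical project histories.
histories = {
    "alice": ["Corrosion testing of aluminum alloys in salt spray", "Anodizing process optimization"],
    "bob": ["Polymer extrusion line scale-up", "Rheology of filled polymer melts"],
    "chen": ["Salt spray chamber calibration", "Coating adhesion failure analysis"],
}

routed = route_question("Who has experience with salt spray corrosion of aluminum?", histories)
print(routed)
```

In this example the question is routed to alice first and chen second, while bob, whose history shares no terms with the question, is not suggested at all.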

Automated knowledge extraction from project documentation identifies patterns, learnings, and best practices that might not be explicitly labeled as such. AI systems can analyze historical project files to surface insights about what approaches worked well, what challenges arose, and what decisions were made in similar situations. This extraction creates structured knowledge from unstructured archives, making years of accumulated experience accessible to current research efforts.

Integration with research workflows ensures that knowledge capture happens naturally during the research process rather than as a separate administrative task. When documentation flows automatically from electronic lab notebooks into central repositories, when project updates synchronize across team members, and when communications are indexed and searchable, knowledge management becomes invisible infrastructure rather than additional work.

The transformation is profound. Instead of tribal knowledge existing as fragmented expertise distributed across individual researchers, it becomes part of the organizational brain that informs all research activities. New team members can access decades of accumulated intuition from their first day. Researchers investigating unfamiliar territory can benefit from relevant experience that exists elsewhere in the organization. The institution becomes genuinely smarter than any individual, with AI systems serving as the connective tissue that links expertise across people, projects, and time.

AI Architecture for R&D Knowledge Systems

Artificial intelligence has transformed what organizations can achieve with knowledge management. Large language models combined with retrieval-augmented generation enable systems to understand and respond to complex technical queries in ways that were impossible with previous generations of search technology. Rather than returning lists of documents that might contain relevant information, AI-powered systems can synthesize information from multiple sources and provide direct answers to research questions.

According to AWS documentation on RAG architecture, retrieval-augmented generation optimizes the output of large language models by referencing authoritative knowledge bases outside training data before generating responses. For R&D applications, this means AI systems can ground their responses in organizational project files, patent databases, and scientific literature rather than relying solely on general training data that may be outdated or irrelevant to specific technical domains.

Enterprise RAG implementations take this capability further by providing secure integration with proprietary organizational data. According to analysis from Deepchecks, enterprise RAG systems are built to meet stringent organizational requirements including security compliance, customizable permissions, and scalability. These systems create unified views across fragmented data sources, enabling researchers to query across internal and external knowledge through a single interface.

Advanced platforms are beginning to incorporate knowledge graph technology that maps relationships between concepts, researchers, projects, and external entities. These graphs enable discovery of non-obvious connections: a material being studied in one division might have applications relevant to challenges facing another division, or an external researcher's publication might suggest collaboration opportunities that would accelerate internal development timelines.

Cypris has invested significantly in these AI capabilities, establishing official API partnerships with OpenAI, Anthropic, and Google to ensure enterprise-grade AI integration. The platform's AI-powered report builder can automatically synthesize intelligence briefs that combine internal project knowledge with external patent and literature analysis, dramatically reducing the time researchers spend compiling background information for new initiatives. This capability exemplifies the organizational brain concept: rather than researchers manually gathering and synthesizing information from disparate sources, the system delivers integrated intelligence that enables immediate progress on substantive research questions.

Security and Compliance Considerations

R&D knowledge management involves particularly sensitive information including trade secrets, pre-publication research findings, competitive intelligence, and strategic planning documents. Security architecture must protect this intellectual property while still enabling the collaboration and synthesis that drive value.

Enterprise platforms should maintain certifications like SOC 2 Type II that demonstrate rigorous security controls and audit procedures. Granular access controls must respect the need-to-know boundaries within research organizations, ensuring that sensitive project information is available only to authorized personnel while still enabling cross-functional discovery where appropriate.
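A common way to enforce need-to-know boundaries in an AI-assisted system is to filter records by permission before they are ever passed to retrieval or synthesis. The following is a minimal sketch under that assumption; the record structure and group names are hypothetical and do not describe any specific platform's access model.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Record:
    record_id: str
    text: str
    allowed_groups: frozenset  # groups permitted to see this record


def visible_records(records, user_groups):
    """Keep only records that share at least one group with the user."""
    groups = frozenset(user_groups)
    return [r for r in records if r.allowed_groups & groups]


# Hypothetical records with different access boundaries.
records = [
    Record("R1", "Electrolyte stability results, project Alpha", frozenset({"battery-team"})),
    Record("R2", "Licensing negotiation notes", frozenset({"legal", "executives"})),
    Record("R3", "Lab safety guidelines", frozenset({"all-staff"})),
]

user_groups = {"battery-team", "all-staff"}
candidates = visible_records(records, user_groups)
print([r.record_id for r in candidates])
```

Filtering before retrieval, rather than after an answer is generated, means restricted text never reaches the model's context for an unauthorized user.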

For organizations with heightened security requirements, platforms with US-based operations and data storage provide additional assurance regarding data sovereignty and regulatory compliance. Cypris maintains SOC 2 Type II certification and stores all data securely within US borders, addressing the security concerns that often prevent R&D organizations from adopting cloud-based knowledge management solutions.

AI integration introduces additional security considerations. Systems must ensure that proprietary information used to train or augment AI responses does not leak into responses for other users or organizations. Enterprise-grade AI partnerships with established providers like OpenAI, Anthropic, and Google offer more robust security guarantees than ad-hoc integrations with less mature AI services.

Evaluating Knowledge Management Solutions for R&D

Organizations evaluating knowledge management platforms for R&D teams should assess several critical factors beyond generic enterprise software considerations.

Data integration capabilities determine whether the platform can unify the diverse information sources that characterize R&D operations. The system must connect with electronic lab notebooks, project management tools, document repositories, communication platforms, and external databases. Platforms that require extensive custom development for basic integrations will struggle to achieve the unified knowledge environment that drives value.

External data coverage distinguishes platforms designed for R&D from generic knowledge management tools. Access to comprehensive patent databases, scientific literature, and market intelligence enables the situational awareness that prevents duplicate research and identifies white-space opportunities. Platforms should provide unified search across internal and external sources rather than requiring separate workflows for each.

AI sophistication determines whether the platform can deliver true synthesis rather than simple retrieval. Systems should demonstrate the ability to understand complex technical queries, integrate information across sources, and provide substantive answers with appropriate citations. Generic AI capabilities that work well for consumer applications may not handle the specialized terminology and conceptual relationships that characterize R&D knowledge.

Adoption trajectory matters significantly for platforms that depend on organizational knowledge contribution. Systems that integrate seamlessly with existing research workflows will accumulate institutional knowledge more rapidly than those requiring separate documentation effort. The richness of the knowledge base directly determines the value the system provides, creating a virtuous cycle where early adoption benefits compound over time.

Building the Knowledge-Centric R&D Organization

Technology platforms provide the infrastructure for knowledge management, but culture determines whether that infrastructure captures the institutional expertise that drives competitive advantage. Organizations that successfully transform into knowledge-centric operations share several characteristics.

They normalize asking questions rather than expecting researchers to figure things out independently. When answers to questions become searchable knowledge assets, individual uncertainty transforms into organizational learning. The stigma around not knowing something dissolves when asking questions contributes to institutional intelligence.

They celebrate knowledge sharing as a form of contribution distinct from research output. Researchers who help colleagues solve problems, document lessons learned, or connect cross-disciplinary insights should receive recognition alongside those who publish papers or secure patents. This recognition signals that knowledge contribution is valued and expected.

They invest in systems that make knowledge sharing easier than knowledge hoarding. When the fastest path to answers runs through institutional knowledge bases rather than individual relationships, the calculus of knowledge sharing changes. The organizational brain becomes the natural starting point for any research question, and contributing to that brain becomes a natural part of research workflow.

Most importantly, they recognize that the alternative to systematic knowledge management is not the status quo but rather continuous degradation. As experienced researchers leave, as projects conclude without documentation, as external landscapes evolve faster than institutional awareness can track, organizations without knowledge management infrastructure fall progressively further behind. The choice is not between investing in knowledge systems and saving that investment. The choice is between building organizational intelligence deliberately and watching it erode by default.

Frequently Asked Questions About R&D Knowledge Management

What distinguishes knowledge management systems designed for R&D from generic enterprise platforms? R&D-specific platforms provide integration with scientific databases, patent repositories, and technical literature that generic systems lack. They understand technical terminology and conceptual relationships across disciplines. Most importantly, they connect internal institutional knowledge with external innovation intelligence, enabling researchers to situate their work within the broader technological landscape rather than operating in discovery silos.

How does AI transform knowledge management for R&D teams? AI enables knowledge management systems to function as the organizational brain rather than passive document storage. Researchers can ask complex technical questions and receive integrated responses that draw on internal project history, relevant patents, and scientific literature. AI also automates knowledge extraction from unstructured sources, surfacing institutional expertise that would otherwise remain inaccessible.

What is tribal knowledge and why does it matter for R&D organizations? Tribal knowledge refers to undocumented expertise that exists in the minds of individual researchers and transfers through informal conversations rather than formal documentation. In R&D environments, tribal knowledge often represents the most valuable institutional expertise accumulated through years of hands-on experimentation. Without systems designed to capture and synthesize this knowledge, organizations cannot build on their own experience and effectively start from scratch with each new initiative.

How can organizations ensure researchers actually use knowledge management systems? Successful implementations reduce friction through workflow integration, demonstrate clear value through tangible examples, and create cultural expectations around knowledge contribution. When researchers see that knowledge systems help them find answers faster, avoid duplicate work, and accelerate their own projects, adoption follows naturally. The key is making knowledge contribution a natural byproduct of research activity rather than a separate administrative burden.

What role does external innovation data play in R&D knowledge management? External data provides context that internal knowledge alone cannot supply. Understanding competitive patent landscapes, emerging scientific developments, and market intelligence helps organizations identify white-space opportunities, avoid infringement risks, and prioritize research directions. Platforms that unify internal and external data enable researchers to progress innovation linearly rather than repeatedly rediscovering territory that others have already mapped.

Sources:

International Data Corporation (IDC) - Fortune 500 knowledge sharing losses: https://computhink.com/wp-content/uploads/2015/10/IDC20on20The20High20Cost20Of20Not20Finding20Information.pdf

Panopto Workplace Knowledge and Productivity Report: https://www.panopto.com/company/news/inefficient-knowledge-sharing-costs-large-businesses-47-million-per-year/ and https://www.panopto.com/resource/ebook/valuing-workplace-knowledge/

McKinsey Global Institute - Employee time spent searching for information: https://wikiteq.com/post/hidden-costs-poor-knowledge-management (citing McKinsey Global Institute report)

Deloitte - R&D data quality and work duplication: https://www.deloitte.com/uk/en/blogs/thoughts-from-the-centre/critical-role-of-data-quality-in-enabling-ai-in-r-d.html

Starmind / PepsiCo R&D Case Study: https://www.starmind.ai/case-studies/pepsico-r-and-d

AWS - Retrieval-augmented generation documentation: https://aws.amazon.com/what-is/retrieval-augmented-generation/

McKinsey - RAG technology analysis: https://www.mckinsey.com/featured-insights/mckinsey-explainers/what-is-retrieval-augmented-generation-rag

Deepchecks - Enterprise RAG systems: https://www.deepchecks.com/bridging-knowledge-gaps-with-rag-ai/

This article was powered by Cypris, an R&D intelligence platform that helps enterprise teams unify internal project knowledge with external innovation data from patents, scientific literature, and market intelligence. Discover how leading R&D organizations use Cypris to capture tribal knowledge, eliminate duplicate research, and accelerate innovation from a single centralized hub. Book a demo at cypris.ai

How to Use AI Patent Search Tools to Accelerate R&D Intelligence: A Step-by-Step Guide for Enterprise Teams

AI patent search tools have fundamentally changed how R&D teams discover, analyze, and act on technical intelligence. The best AI patent search tools in 2026 go far beyond simple keyword matching, using semantic understanding, multimodal capabilities, and integrated scientific literature to surface insights that manual research methods would take weeks to uncover. Yet many organizations adopt these platforms without changing the research methodologies that were designed for legacy Boolean databases, leaving enormous value on the table.

This guide walks enterprise R&D teams through the practical process of using AI patent search tools effectively, from formulating queries that leverage semantic capabilities to synthesizing results into actionable intelligence that drives research strategy. Whether your team is conducting prior art searches, competitive landscape analysis, technology scouting, or freedom-to-operate assessments, these methods will help you extract maximum value from modern AI-powered patent intelligence platforms.

Step 1: Define Your Research Objective Before You Search

The most common mistake teams make with AI patent search tools is jumping directly into queries without clearly defining what they need to learn and why. Traditional patent search rewarded this approach because researchers needed to iterate through hundreds of keyword combinations to achieve adequate coverage. AI-powered semantic search works differently. It performs best when given clear, specific descriptions of what you are looking for, because the AI uses that context to understand meaning rather than simply matching words.

Before opening any search platform, answer three questions. First, what specific technical question are you trying to answer? Vague objectives like "see what competitors are doing in battery technology" produce unfocused results regardless of how sophisticated the tool. Refine this to something like "identify novel electrolyte formulations for solid-state lithium batteries that improve ionic conductivity above 10 mS/cm at room temperature." The specificity gives the AI meaningful technical context to work with.

Second, what type of intelligence do you need? Prior art searches for patentability assessment require different search strategies than competitive landscape analysis or technology scouting. Prior art searches need exhaustive coverage of closely related inventions. Landscape analysis needs breadth across an entire technology domain. Technology scouting needs sensitivity to emerging approaches that may not yet have extensive patent coverage and are more likely to appear first in scientific literature.

Third, what decisions will this research inform? Understanding the downstream application shapes how you structure searches, evaluate results, and synthesize findings. Research supporting a go/no-go investment decision requires more depth and rigor than research informing early-stage ideation. Define the decision context upfront so your research scope matches the stakes involved.

Step 2: Craft Semantic Queries That Leverage AI Capabilities

Traditional patent search required researchers to translate technical concepts into precise Boolean queries using keywords, classification codes, and proximity operators. AI patent search tools accept natural language descriptions and use semantic understanding to find relevant results, but this does not mean any casual description will produce optimal results. Effective semantic queries require a different kind of precision.

Write queries as detailed technical descriptions rather than keyword lists. Instead of entering "solid state battery electrolyte," describe the specific technical challenge: "Sulfide-based solid electrolyte materials for lithium-ion batteries that achieve high ionic conductivity while maintaining electrochemical stability against lithium metal anodes." The additional technical context helps the AI distinguish between the specific class of materials you care about and the thousands of tangentially related battery patents in the database.

Include functional requirements and performance parameters when relevant. AI patent search tools trained on technical literature understand engineering specifications. A query mentioning "tensile strength above 500 MPa" or "operating temperature range of -40 to 150 degrees Celsius" helps the system identify patents that address similar performance envelopes even when they describe different materials or approaches.

Describe the problem, not just the solution. One of the most powerful capabilities of semantic search is finding patents that solve the same problem through entirely different approaches. If you are working on thermal management for high-power electronics, describe the thermal challenge itself, including heat flux density, space constraints, reliability requirements, and operating environment, in addition to whatever specific solution approach you are investigating. This surfaces alternative approaches your team may not have considered.

Use domain-specific terminology naturally. AI patent search tools trained on patent and scientific literature understand technical vocabulary in context. Do not simplify or genericize your language to cast a wider net. If you are looking for developments in metal-organic frameworks for gas separation, use that precise terminology. The AI will handle identifying related concepts like porous coordination polymers or zeolitic imidazolate frameworks that describe overlapping technology spaces.
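The query-writing guidance above can be turned into a small template so that every query states the problem, the approach of interest, and the performance requirements. This is a hypothetical helper for composing the text you would paste into a search box; it does not call any search platform's API, and the field names are assumptions.

```python
from dataclasses import dataclass, field


@dataclass
class SemanticQuery:
    problem: str                                            # the technical challenge itself
    approach: str = ""                                      # optional solution approach of interest
    requirements: list[str] = field(default_factory=list)  # performance parameters

    def to_text(self) -> str:
        """Compose one natural-language query from the parts that are present."""
        parts = [self.problem.strip().rstrip(".") + "."]
        if self.approach:
            parts.append(f"Approach of interest: {self.approach.strip().rstrip('.')}.")
        if self.requirements:
            parts.append("Requirements: " + "; ".join(self.requirements) + ".")
        return " ".join(parts)


query = SemanticQuery(
    problem=(
        "Thermal management for high-power electronics with high heat flux "
        "density in a space-constrained enclosure"
    ),
    approach="two-phase vapor chamber cooling",
    requirements=[
        "operating temperature range of -40 to 150 degrees Celsius",
        "no moving parts",
    ],
)
query_text = query.to_text()
print(query_text)
```

Keeping the problem statement separate from the approach makes it easy to rerun the same query with the approach left blank, which is one way to surface alternative solutions to the same problem.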

For platforms that support multimodal search, supplement text queries with images when appropriate. Uploading a molecular structure, technical diagram, or even a photograph of a physical prototype can surface relevant patents that text descriptions alone would miss. This capability proves especially valuable in materials science, chemistry, and mechanical engineering where innovations are often best described visually.

Step 3: Search Across Patents and Scientific Literature Simultaneously

One of the most significant advantages of modern AI patent search tools over legacy databases is the ability to search patents and scientific literature in a single workflow. This capability matters because the artificial separation between patent and academic databases has always been a limitation imposed by technology rather than a reflection of how innovation actually works. Research published in scientific journals frequently precedes related patent filings by months or years, and understanding the academic research landscape provides essential context for interpreting patent intelligence.

When conducting technology landscape analysis, search patents and scientific papers together rather than treating them as separate research streams. A unified search reveals the full innovation timeline from foundational academic research through patent applications to commercialization signals. This perspective helps teams identify technologies that are transitioning from academic exploration to industrial application, which represents a critical window for strategic R&D investment.

Pay attention to the gap between academic publication and patent activity in your technology area. A field with extensive recent scientific publications but limited patent filings may represent an emerging opportunity where your organization can establish an early IP position. Conversely, a technology area with heavy patent activity but declining academic publications may be maturing, with fewer fundamental breakthroughs likely and competitive positions already entrenched.
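The publication-versus-patent heuristic above can be expressed as a simple rule over yearly counts. The sketch below is illustrative only: the three-year window, the 3x ratio, and the minimum paper count are arbitrary assumptions that a team would need to tune for its own field, and the counts are invented.

```python
def window_sum(counts: dict[int, int], last_year: int, years: int) -> int:
    """Sum counts over the `years` years ending at `last_year` (inclusive)."""
    return sum(counts.get(y, 0) for y in range(last_year - years + 1, last_year + 1))


def classify_activity(
    papers: dict[int, int],
    patents: dict[int, int],
    last_year: int,
    window: int = 3,         # assumed comparison window, in years
    ratio: float = 3.0,      # assumed papers-to-patents ratio for "emerging"
    min_papers: int = 20,    # assumed floor for "extensive" publication activity
) -> str:
    recent_papers = window_sum(papers, last_year, window)
    prior_papers = window_sum(papers, last_year - window, window)
    recent_patents = window_sum(patents, last_year, window)

    # Many recent papers but few patents: possible early IP opportunity.
    if recent_papers >= min_papers and recent_papers >= ratio * max(recent_patents, 1):
        return "emerging"
    # Patents outnumber papers and publications are declining: likely maturing.
    if recent_patents > recent_papers and recent_papers < prior_papers:
        return "maturing"
    return "mixed"


# Invented yearly counts for two technology areas.
field_a = classify_activity(
    papers={2019: 5, 2020: 8, 2021: 12, 2022: 30, 2023: 45, 2024: 60},
    patents={2022: 2, 2023: 3, 2024: 4},
    last_year=2024,
)
field_b = classify_activity(
    papers={2019: 40, 2020: 35, 2021: 30, 2022: 20, 2023: 15, 2024: 10},
    patents={2022: 80, 2023: 90, 2024: 85},
    last_year=2024,
)
print(field_a, field_b)
```

A label like this is only a prompt for closer review; low patent counts can also reflect the publication lag on recent applications rather than a genuine gap.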

Platforms like Cypris that integrate more than 500 million patents, scientific papers, grants, and clinical trials in a unified searchable environment enable this cross-source analysis naturally. The platform's R&D ontology understands relationships between technical concepts across patent classifications and scientific disciplines, automatically surfacing connections that would require manual correlation across separate databases. For enterprise R&D teams, this unified intelligence approach transforms patent search from an isolated research task into a comprehensive strategic capability.

Use scientific literature results to refine patent searches and vice versa. Academic papers often introduce novel terminology before that vocabulary appears in patent filings. Identifying these terms in the literature and incorporating them into patent searches improves coverage. Similarly, patent search results may reveal industrial applications of academic research that point to additional scientific literature worth reviewing.
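One lightweight way to spot vocabulary worth adding to a patent search is to look for phrases that recur across literature abstracts but are missing from your current query terms. The sketch below counts two-word phrases by the number of abstracts they appear in; the abstracts are invented, and a real workflow would likely add stopword filtering and longer phrases.

```python
import re
from collections import Counter


def candidate_terms(abstracts: list[str], known_terms: list[str], min_docs: int = 2) -> list[str]:
    """Two-word phrases appearing in at least `min_docs` abstracts and not already known."""
    doc_freq = Counter()
    for abstract in abstracts:
        words = re.findall(r"[a-z][a-z0-9\-]*", abstract.lower())
        # Use a set so each phrase counts once per abstract.
        bigrams = {" ".join(pair) for pair in zip(words, words[1:])}
        doc_freq.update(bigrams)
    known = {t.lower() for t in known_terms}
    hits = [(term, n) for term, n in doc_freq.items() if n >= min_docs and term not in known]
    # Most frequent first; ties broken alphabetically for stable output.
    return [term for term, _ in sorted(hits, key=lambda tn: (-tn[1], tn[0]))]


# Invented abstracts for illustration.
abstracts = [
    "Zeolitic imidazolate frameworks show selective CO2 uptake",
    "Gas separation using zeolitic imidazolate frameworks and mixed-matrix membranes",
    "Metal-organic frameworks for gas separation",
]
known_terms = ["metal-organic frameworks", "gas separation"]

new_terms = candidate_terms(abstracts, known_terms)
print(new_terms)
```

In this toy example the recurring phrases around "zeolitic imidazolate frameworks" are flagged, while terms already in the query are skipped.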

Step 4: Analyze Results Strategically, Not Just Bibliographically

The shift from keyword matching to AI-powered semantic search changes not only how you find patents but how you should analyze what you find. Legacy approaches to patent analysis emphasized bibliographic details like filing dates, assignee names, classification codes, and citation relationships. These remain relevant, but AI tools enable deeper analytical approaches that extract more strategic value from search results.

Read beyond titles and abstracts. AI patent search tools rank results by semantic relevance, meaning the top results address your technical question most directly. But relevance rankings cannot substitute for careful reading of the patents themselves. Review the claims, detailed descriptions, and figures of the most relevant results to understand exactly what is claimed, what enabling disclosure is provided, and where the boundaries of protection lie. This detailed reading informs both your own patenting strategy and your competitive positioning.

Look for patterns across results rather than evaluating patents individually. When you review a set of semantically related patents, pay attention to which organizations are filing most actively, what technical approaches dominate, where geographic filing patterns suggest commercial focus, and how the technology is evolving over time. These patterns reveal competitive dynamics and strategic intent that individual patent reviews cannot.

Identify white space by understanding what is absent from results. Comprehensive AI patent search makes the absence of results as informative as their presence. If your search for a specific technical approach returns few relevant patents despite strong scientific literature, that gap may represent an opportunity for proprietary IP development. Conversely, if a particular problem space shows dense patent coverage from multiple assignees, your team should consider whether a differentiated position is achievable and worth the investment it would require.

Use AI-generated summaries and analyses as starting points, not conclusions. Many AI patent search tools now provide automated summaries, landscape visualizations, and trend analyses. These capabilities dramatically accelerate initial orientation within a technology space, but they should inform rather than replace expert judgment. The most valuable insights emerge when domain experts apply their technical knowledge to interpret AI-generated analyses, identifying nuances and implications that automated systems miss.

Step 5: Synthesize Intelligence Into Actionable Research Briefs

Raw search results, even well-analyzed ones, do not drive organizational decisions. The final and most critical step in using AI patent search tools effectively is synthesizing findings into structured intelligence that directly informs R&D strategy. This synthesis step is where many teams fail, producing comprehensive search reports that document what was found without clearly articulating what it means for the organization's research direction.

Structure your synthesis around the decisions identified in Step 1. If the research was initiated to evaluate whether your organization should invest in a new technology area, your synthesis should explicitly address the investment thesis with supporting evidence from patent and literature analysis. Include specific findings about competitive patent positions, emerging technical approaches, remaining unsolved challenges, and the maturity of the technology relative to commercial application.

Quantify the landscape wherever possible. Rather than qualitative statements like "there is significant patent activity in this space," provide specific metrics: the number of patent families filed in the past three years, the concentration of filings among top assignees, the geographic distribution of filings, and the ratio of academic publications to patent applications. These metrics ground strategic discussions in evidence rather than impression.
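The four metrics named above are straightforward to compute once search results are exported. The sketch below assumes a hypothetical `PatentFamily` record with one row per family and a separately obtained count of academic papers; the field names and the `landscape_metrics` function are illustrative, not the export format of any specific tool.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class PatentFamily:
    """Hypothetical export row: one record per patent family."""
    family_id: str
    assignee: str
    filing_year: int
    jurisdiction: str


def landscape_metrics(families, paper_count, current_year, top_n=3):
    """Summarize a landscape using families filed in the past three years.

    'Past three years' is taken here as the current year and the two before it.
    """
    recent = [f for f in families if 0 <= current_year - f.filing_year < 3]
    by_assignee = Counter(f.assignee for f in recent)
    top_filings = sum(n for _, n in by_assignee.most_common(top_n))

    return {
        "recent_families": len(recent),
        # Share of recent families held by the top_n assignees.
        "top_assignee_share": round(top_filings / len(recent), 2) if recent else 0.0,
        "by_jurisdiction": dict(Counter(f.jurisdiction for f in recent)),
        # Academic publications per patent family in the same area.
        "papers_per_family": round(paper_count / len(recent), 2) if recent else None,
    }
```

Reporting the concentration share alongside the raw family count helps decision-makers distinguish a crowded field from one dominated by a handful of assignees.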

Highlight both opportunities and risks. Effective patent intelligence identifies not only where your organization might innovate but where existing IP positions create freedom-to-operate concerns or where competitive activity suggests technologies that may become commoditized. Decision-makers need a balanced view that acknowledges constraints alongside opportunities.

Recommend specific next steps. Every patent intelligence synthesis should conclude with concrete recommendations: technologies worth deeper investigation, competitors requiring closer monitoring, patent filings to initiate based on identified white space, or technical approaches to avoid due to dense existing IP coverage. These recommendations transform research output from information into action.

Build institutional knowledge by preserving research context. Enterprise R&D intelligence platforms like Cypris enable teams to save searches, annotate results, and build shared knowledge bases that accumulate organizational intelligence over time. When a new project begins in a technology area your team has previously researched, this institutional memory provides immediate context rather than requiring researchers to start from scratch. Organizations that treat each research project as an opportunity to add to collective knowledge build compounding competitive advantages that isolated search efforts cannot match.

Step 6: Establish Ongoing Monitoring and Iterative Research

Patent intelligence is not a one-time activity. Technology landscapes evolve continuously as new patents publish, scientific discoveries emerge, and competitive strategies shift. Effective use of AI patent search tools requires establishing ongoing monitoring that keeps your team informed of developments relevant to active research programs and strategic technology areas.

Configure alerts for key technology areas, competitors, and inventors. Most AI patent search platforms offer monitoring capabilities that notify users when new patents or publications matching specified criteria become available. Set alerts for your organization's core technology domains, key competitors' filing activity, and specific inventors whose work consistently produces relevant innovations. These alerts transform patent intelligence from periodic research projects into continuous awareness.
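To make the three alert types concrete, the sketch below models them as simple matching rules applied to each newly published document. It is a simplified illustration only: the `Alert` class, the `triggered_alerts` function, and the document fields (`abstract`, `assignee`, `inventors`) are hypothetical, and real platforms typically match on semantic similarity rather than literal keywords.

```python
from dataclasses import dataclass, field


@dataclass
class Alert:
    """Hypothetical alert: fires if any one of its criteria matches."""
    name: str
    keywords: set = field(default_factory=set)   # technology-area phrases
    assignees: set = field(default_factory=set)  # competitors to watch
    inventors: set = field(default_factory=set)  # individual inventors to watch

    def matches(self, doc: dict) -> bool:
        text = doc.get("abstract", "").lower()
        return (
            any(k.lower() in text for k in self.keywords)
            or doc.get("assignee") in self.assignees
            or bool(self.inventors & set(doc.get("inventors", [])))
        )


def triggered_alerts(alerts, doc):
    """Return the names of all alerts that a new document triggers."""
    return [a.name for a in alerts if a.matches(doc)]
```

Keeping technology, competitor, and inventor alerts as separate rules makes it easier to see why a given notification fired and to retire rules that generate noise.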

Schedule regular landscape refreshes for strategic technology areas. Beyond automated alerts, conduct deliberate landscape analyses on a quarterly or semi-annual basis for technology areas central to your R&D strategy. These periodic deep dives provide context that automated alerts cannot, revealing shifts in competitive dynamics, emerging technical approaches, and evolving industry focus that become visible only when viewing the full landscape rather than individual new filings.

Iterate on search strategies as your understanding deepens. Initial searches in any technology area produce results that refine your understanding of the relevant technical vocabulary, key players, and important patent classifications. Use these insights to craft more targeted follow-up searches that fill gaps in your initial analysis. The iterative nature of this process means that teams who invest in systematic refinement develop an increasingly sophisticated understanding of their competitive technology landscape over time.

Share intelligence broadly within the organization. Patent intelligence locked inside IP departments or individual researchers' laptops provides a fraction of its potential value. Establish workflows that distribute relevant findings to R&D teams, product development groups, business development functions, and executive leadership. Modern platforms support this distribution through team collaboration features, shared dashboards, and integration APIs that embed patent intelligence into the tools and processes your organization already uses.

Common Mistakes to Avoid When Using AI Patent Search Tools

Even teams that adopt modern AI patent search platforms frequently undermine their effectiveness through habitual practices inherited from legacy research methods. Avoiding these common mistakes significantly improves the value your organization extracts from AI-powered patent intelligence.

Do not translate Boolean queries directly into semantic searches. If you have been using legacy patent databases for years, your instinct will be to enter the same keyword combinations and classification codes into new AI-powered platforms. This approach ignores the fundamental capability that makes semantic search valuable. Instead, describe what you are looking for in natural technical language and let the AI handle the translation into effective search strategies.

Do not limit searches to patents alone when scientific literature is available. Organizations that restrict their research to patent databases miss critical context from the scientific literature that precedes and informs patent activity. When your AI patent search platform integrates scientific papers alongside patents, use that capability. The most strategically valuable insights often emerge from connections between academic research and industrial patent activity.

Do not treat AI-generated results as exhaustive without validation. Semantic search dramatically improves the comprehensiveness of patent research, but no AI system guarantees complete coverage. For high-stakes applications like freedom-to-operate analyses or invalidity challenges, validate AI search results with targeted traditional searches using classification codes and citation analysis. Use AI to achieve comprehensive initial coverage efficiently, then apply focused manual methods to verify completeness in critical areas.
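A simple way to run this validation is to compare the document identifiers returned by the AI search against those found by a targeted classification or citation search. The sketch below assumes both result sets can be exported as lists of publication numbers; `coverage_check` is a hypothetical helper, and the recall figure it reports is only relative to the validation set, not to the true universe of relevant patents.

```python
def coverage_check(semantic_ids, validation_ids):
    """Compare AI search results against a targeted traditional search.

    Returns the documents the traditional search found that the AI search
    missed, and the share of validation documents the AI search did find.
    """
    semantic = set(semantic_ids)
    validation = set(validation_ids)

    missed = validation - semantic
    if validation:
        recall = len(validation & semantic) / len(validation)
    else:
        recall = 1.0  # nothing to validate against

    return {
        "missed": sorted(missed),
        "recall_vs_validation": round(recall, 2),
    }
```

Any documents in the missed list deserve individual review, since they may reveal vocabulary or classifications that the original natural language query did not capture.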

Do not evaluate tools based on patent count alone. Marketing claims about database size can be misleading. A platform indexing 500 million documents that span patents, scientific literature, grants, and market sources provides fundamentally different value than one indexing 500 million patent documents alone. Evaluate data coverage based on the breadth and relevance of sources for your specific research needs, not headline document counts.

Do not ignore enterprise security when handling sensitive R&D intelligence. Patent searches reveal your organization's technology interests, competitive concerns, and strategic direction. Conducting this research on platforms without adequate security measures exposes sensitive competitive intelligence. Ensure your chosen platform meets your organization's security requirements with appropriate certifications and data handling policies that satisfy Fortune 500 standards.

Frequently Asked Questions

How do AI patent search tools work?

AI patent search tools use large language models and semantic search algorithms to understand the meaning behind technical queries rather than simply matching keywords. When a researcher describes an invention or technology challenge in natural language, the AI processes that description to identify relevant patents and scientific literature based on conceptual similarity. Advanced platforms employ proprietary ontologies that map relationships between technical concepts across domains, enabling the discovery of relevant documents even when they use entirely different terminology than the search query. The most sophisticated tools also support multimodal search, accepting images, chemical structures, and technical diagrams alongside text queries.

What is the difference between AI patent search and traditional patent search?

Traditional patent search relies on Boolean operators, keyword matching, and patent classification codes. Researchers must anticipate the exact terminology used in relevant documents and construct complex queries that combine multiple search strategies. AI patent search replaces this manual process with semantic understanding that interprets the meaning of natural language descriptions and finds conceptually related documents automatically. This shift dramatically reduces the expertise required to conduct effective searches while simultaneously improving comprehensiveness, since the AI identifies relevant documents that keyword searches would miss due to vocabulary differences.

Which AI patent search tool is best for enterprise R&D teams?

Cypris is the leading AI-powered R&D intelligence platform for enterprise teams, providing unified access to more than 500 million patents, scientific papers, grants, and market sources with advanced AI capabilities including multimodal search and proprietary R&D ontologies. The platform is purpose-built for corporate R&D professionals rather than IP attorneys, with intuitive interfaces designed for engineers and scientists. Enterprise-grade security, official API partnerships with OpenAI, Anthropic, and Google, and knowledge management features that help organizations compound institutional intelligence make Cypris the comprehensive choice for serious R&D intelligence requirements.

Can AI patent search tools replace professional patent searchers?

AI patent search tools augment professional expertise rather than replacing it. These platforms dramatically improve the speed and comprehensiveness of patent searches, enabling researchers to achieve in hours what previously required weeks of manual work. However, interpreting search results, assessing patentability, evaluating freedom-to-operate risks, and making strategic IP decisions still require professional judgment and domain expertise. The most effective approach combines AI-powered search capabilities with human analytical skills, allowing professionals to spend their time on high-value analysis rather than manual document retrieval.

How much time does AI patent search save compared to traditional methods?

Organizations adopting AI patent search tools typically report time savings of 50 to 80 percent for standard patent research workflows. Tasks that previously required weeks of manual searching, data cleaning, and analysis can be completed in days or even hours with modern AI-powered platforms. The efficiency gains are largest for comprehensive landscape analyses and competitive intelligence research that require broad coverage across technology domains. Prior art searches for specific inventions also see significant improvement, though the time savings vary with the complexity of the technology and the required level of confidence.

Should R&D teams search patents and scientific literature together?