In the fast-paced world of innovation, data analysis tools and techniques in research have become essential for success. From collecting data to exploring potential insights, a variety of strategies are available to help teams make sense of their information.

In this blog post, we’ll explore some key data analysis tools and techniques in research that can provide your team with rapid time-to-insights. We’ll look at how to collect valuable datasets, use exploratory methods for uncovering patterns or trends, and apply predictive modeling approaches to forecast outcomes based on past events or behaviors.

Get ready to discover new ways you can take advantage of all that data!

Table of Contents

Data Analysis Tools and Techniques in Research

Predictive Modeling Techniques

FAQs About Data Analysis Tools and Techniques in Research

What are data analysis tools in research?

What are the four techniques for data analysis?

What Is Data Analysis?

Data analysis is the process of collecting, organizing, and interpreting data to gain insights and draw conclusions. It involves a variety of methods, techniques, and tools used to analyze large amounts of data.

One popular method for analyzing data is descriptive analytics which uses statistics to summarize the existing data. This type of analysis can help identify patterns or trends in the dataset that may be useful for decision-making.

For example, it can be used to identify customer segments or product categories with higher sales than others.

Another common technique is predictive analytics which uses statistical models such as regression analysis or machine learning algorithms to predict future outcomes based on past behavior.

This type of analysis can help companies make better decisions by providing an understanding of how different factors might affect their business performance in the future.

In addition to these two methods, there are several other techniques that can be used for analyzing data including cluster analysis (which groups similar items together), association rules (which looks at relationships between variables), and time series forecasting (which predicts future values based on historical trends).

All these techniques require specialized software tools such as SAS or R programming language for implementation.

Finally, it’s important not just to collect and analyze data but also to visualize it so that key insights are easily understood by stakeholders across an organization.

Visualization tools like Tableau allow users to create interactive charts and graphs from their datasets quickly and easily without having any coding experience necessary making them ideal for presenting complex information in a simple way.

Data Analysis Tools and Techniques in Research

Data collection is an essential part of any research project. There are several methods that can be used to collect data, each with its own advantages and disadvantages.

Surveys and Questionnaires

Surveys and questionnaires are one of the most common methods for collecting data. They provide a structured way to gather information from large numbers of people quickly and efficiently. The questions should be carefully designed to ensure they accurately capture the required information in a clear, concise manner.

This method has the advantage of being relatively inexpensive compared to other methods but may not always yield accurate results due to the potential bias of the respondents.

Focus Groups and Interviews

Focus groups involve gathering small groups together for discussions about specific topics related to the research project at hand. This method allows researchers to gain insight into how different individuals think about certain topics which can help inform decisions or shape further research activities.

However, this method is often more expensive than surveys or questionnaires since it requires more time investment from both participants and researchers alike.

Observational Studies

Observational studies involve observing behavior without directly intervening. For example, when studying the consumer behavior of online shoppers, researchers could observe shoppers’ interactions with websites without actually participating themselves to better understand user experience trends or customer preferences.

While observational studies offer valuable insights into real-world behaviors, they also require significant resources, such as personnel time, and equipment, which makes them costly endeavors.

(Source)

Predictive Modeling Techniques

Predictive modeling is a powerful tool used to make predictions about future events based on past observations or trends in the data. This technique can be applied to many different types of problems, such as predicting customer churn, forecasting stock prices, and identifying fraud.

The three most common predictive modeling techniques are regression models, classification models, and clustering algorithms.

Regression Models

Regression models are used for predicting continuous outcomes such as sales revenue or temperature. These models use linear equations to map input variables (e.g., age) to an output variable (e.g., income).

Common examples of regression include linear regression and logistic regression.

Classification Models

Classification models are used for predicting discrete outcomes such as whether a customer will buy a product or not. These models use decision trees or support vector machines to classify data points into one of two categories – yes/no or true/false.

Examples of classification include binary classification and multi-class classification tasks like image recognition where each image is classified into one of several classes.

Clustering Algorithms

Clustering algorithms are unsupervised learning methods that group similar data points together without any prior knowledge about the groups themselves. Clustering can be used for market segmentation tasks where customers with similar characteristics are grouped together so they can be targeted with tailored marketing campaigns.

It can also be used for anomaly detection tasks where outliers in the dataset are identified and flagged for further investigation by experts. Popular clustering algorithms include k-means clustering and hierarchical clustering methods like agglomerative clustering

FAQs About Data Analysis Tools and Techniques in Research

What are data analysis tools in research?

Data analysis tools in research are used to analyze and interpret data from various sources. These tools can help researchers identify trends, correlations, and patterns in their data that may not be visible with traditional methods.

Commonly used data analysis tools and techniques in research include statistical software packages such as SPSS or SAS, visualization software like Tableau or Power BI, machine learning algorithms for predictive analytics, text mining techniques for natural language processing (NLP), and GIS mapping programs for spatial analysis.

All of these tools provide powerful insights into the underlying structure of a dataset and enable researchers to gain a deeper understanding of their research questions.

What are the four techniques for data analysis?

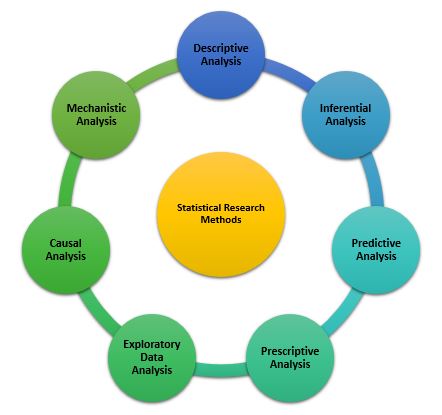

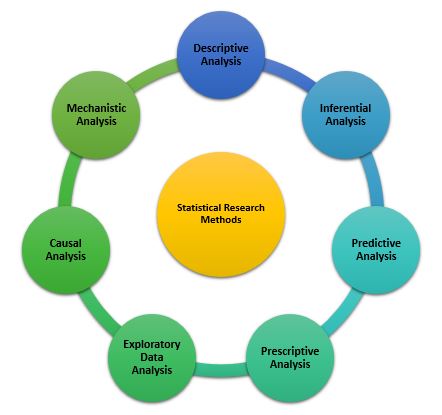

In data analytics and data science, there are four main types of data analysis: descriptive, diagnostic, predictive, and prescriptive.

Conclusion

Data analysis tools and techniques in research are essential for R&D and innovation teams to gain insights quickly. Data collection, exploratory data analysis (EDA), and predictive modeling techniques can all be used to help teams analyze their data more effectively.

Are you part of an R&D or innovation team? Do you want to unlock the power of data analysis tools and techniques in research and gain deeper insights faster? Cypris is your answer!

Our platform centralizes all the necessary data sources for research teams into one easy-to-use interface, giving you rapid time to insight. Join us today and discover how our powerful tools can help transform your workflows.

The Power of Data Analysis Tools and Techniques in Research

In the fast-paced world of innovation, data analysis tools and techniques in research have become essential for success. From collecting data to exploring potential insights, a variety of strategies are available to help teams make sense of their information.

In this blog post, we’ll explore some key data analysis tools and techniques in research that can provide your team with rapid time-to-insights. We’ll look at how to collect valuable datasets, use exploratory methods for uncovering patterns or trends, and apply predictive modeling approaches to forecast outcomes based on past events or behaviors.

Get ready to discover new ways you can take advantage of all that data!

Table of Contents

Data Analysis Tools and Techniques in Research

Predictive Modeling Techniques

FAQs About Data Analysis Tools and Techniques in Research

What are data analysis tools in research?

What are the four techniques for data analysis?

What Is Data Analysis?

Data analysis is the process of collecting, organizing, and interpreting data to gain insights and draw conclusions. It involves a variety of methods, techniques, and tools used to analyze large amounts of data.

One popular method for analyzing data is descriptive analytics, which uses statistics to summarize existing data. This type of analysis can help identify patterns or trends in the dataset that may be useful for decision-making.

For example, it can be used to identify customer segments or product categories with higher sales than others.
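As a rough illustration of that idea, the sketch below summarizes sales by product category and ranks the categories by total revenue. The records, the category names, and the `summarize_by_category` helper are all made up for this example; a real project would more likely load data from a file or database and use a library such as pandas.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical sales records: (product category, sale amount).
sales = [
    ("laptops", 1200.0),
    ("laptops", 950.0),
    ("phones", 700.0),
    ("phones", 650.0),
    ("phones", 720.0),
    ("accessories", 40.0),
    ("accessories", 25.0),
]


def summarize_by_category(records):
    """Return {category: (count, total, average)} for the given records."""
    grouped = defaultdict(list)
    for category, amount in records:
        grouped[category].append(amount)
    return {
        category: (len(amounts), sum(amounts), mean(amounts))
        for category, amounts in grouped.items()
    }


summary = summarize_by_category(sales)

# Rank categories by total sales, highest first.
ranked_categories = sorted(summary, key=lambda c: summary[c][1], reverse=True)
for category in ranked_categories:
    count, total, average = summary[category]
    print(f"{category}: n={count}, total={total:.2f}, mean={average:.2f}")
```

Counts, totals, and averages are the simplest descriptive statistics; the same grouping pattern extends to medians, percentiles, or growth rates.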

Another common technique is predictive analytics, which uses statistical models such as regression analysis or machine learning algorithms to predict future outcomes based on past behavior.

This type of analysis can help companies make better decisions by providing an understanding of how different factors might affect their business performance in the future.

In addition to these two methods, several other techniques can be used to analyze data, including cluster analysis (which groups similar items together), association rules (which identify items or variables that tend to occur together), and time series forecasting (which predicts future values based on historical trends).

These techniques are typically implemented with statistical software such as SAS or with a programming language such as R.
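To make one of these techniques concrete, here is a minimal sketch of the two basic measures behind association rules: support (how often a set of items appears together) and confidence (how often the consequent appears when the antecedent does). The shopping baskets and the function names are invented for illustration only.

```python
# Hypothetical market baskets; each set is one transaction.
transactions = [
    {"bread", "butter"},
    {"bread", "butter", "jam"},
    {"bread", "milk"},
    {"milk", "jam"},
    {"bread", "butter", "milk"},
]


def support(itemset, baskets):
    """Fraction of baskets that contain every item in `itemset`."""
    itemset = set(itemset)
    return sum(itemset <= basket for basket in baskets) / len(baskets)


def confidence(antecedent, consequent, baskets):
    """Estimate P(consequent | antecedent) as support(A and C) / support(A)."""
    both = set(antecedent) | set(consequent)
    return support(both, baskets) / support(antecedent, baskets)


# Evaluate the rule "bread -> butter".
rule_support = support({"bread", "butter"}, transactions)
rule_confidence = confidence({"bread"}, {"butter"}, transactions)
print(f"support={rule_support:.2f}, confidence={rule_confidence:.2f}")
```

Dedicated algorithms such as Apriori search for all rules above chosen support and confidence thresholds; this sketch only scores a single rule chosen by hand.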

Finally, it’s important not just to collect and analyze data but also to visualize it so that key insights are easily understood by stakeholders across an organization.

Visualization tools like Tableau allow users to create interactive charts and graphs from their datasets quickly, without requiring coding experience, which makes them well suited to presenting complex information in a simple way.

Data Analysis Tools and Techniques in Research

Data collection is an essential part of any research project. There are several methods that can be used to collect data, each with its own advantages and disadvantages.

Surveys and Questionnaires

Surveys and questionnaires are among the most common methods for collecting data. They provide a structured way to gather information from large numbers of people quickly and efficiently. The questions should be carefully designed to ensure they accurately capture the required information in a clear, concise manner.

This method is relatively inexpensive compared to other methods, but it may not always yield accurate results because of potential respondent bias.

Focus Groups and Interviews

Focus groups involve gathering small groups together for discussions about specific topics related to the research project at hand. This method allows researchers to gain insight into how different individuals think about certain topics which can help inform decisions or shape further research activities.

However, this method is often more expensive than surveys or questionnaires since it requires more time investment from both participants and researchers alike.

Observational Studies

Observational studies involve observing behavior without directly intervening. For example, when studying the consumer behavior of online shoppers, researchers could observe shoppers’ interactions with websites without actually participating themselves to better understand user experience trends or customer preferences.

While observational studies offer valuable insights into real-world behaviors, they also require significant resources, such as personnel time and equipment, which can make them costly.

Predictive Modeling Techniques

Predictive modeling is a powerful tool used to make predictions about future events based on past observations or trends in the data. This technique can be applied to many different types of problems, such as predicting customer churn, forecasting stock prices, and identifying fraud.

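Three techniques commonly grouped under predictive modeling are regression models, classification models, and clustering algorithms. Regression and classification predict an outcome directly, while clustering is an unsupervised method that often supports prediction by revealing groups in the data.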

Regression Models

Regression models are used for predicting continuous outcomes such as sales revenue or temperature. The simplest of them, linear regression, uses a linear equation to map input variables (e.g., age) to an output variable (e.g., income).

Common examples include simple and multiple linear regression. Logistic regression, despite its name, predicts the probability of a category and is usually used for classification.
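As a small sketch of how a linear regression is fitted, the code below computes the ordinary least squares slope and intercept for one input variable, using the age and income example from the text. The data points are contrived (they lie exactly on a line) and the `fit_line` helper is a name chosen for this illustration, not part of any library.

```python
def fit_line(xs, ys):
    """Ordinary least squares for one input: returns (slope, intercept)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Covariance-like and variance-like sums (unnormalized).
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Hypothetical data: age in years, income in thousands.
# The points are contrived so that income = 2 * age - 20 exactly.
ages = [25, 30, 35, 40, 45]
incomes = [30, 40, 50, 60, 70]

slope, intercept = fit_line(ages, incomes)

# Predict income for a 50-year-old using the fitted line.
predicted_income = slope * 50 + intercept
print(f"income = {slope:.2f} * age + {intercept:.2f}")
print(f"predicted income at age 50: {predicted_income:.2f}")
```

Real data will not fall exactly on a line, so the fitted line is the one that minimizes the squared prediction errors rather than one that passes through every point.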

Classification Models

Classification models are used for predicting discrete outcomes, such as whether a customer will buy a product or not. Common algorithms include decision trees and support vector machines, which assign each data point to one of two categories (yes/no, true/false) or to one of several classes.

Examples of classification include binary classification and multi-class classification tasks like image recognition where each image is classified into one of several classes.
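A minimal sketch of binary classification is a decision stump, which is a decision tree with a single split. The example below assumes one made-up feature (minutes spent on a site) and a rule of the form "predict a purchase when the value exceeds a threshold"; the data and the `fit_stump` and `predict` helpers are hypothetical.

```python
def fit_stump(values, labels):
    """Pick the threshold t with the fewest errors for: predict True when value > t."""
    candidates = sorted(set(values))
    best_threshold, best_errors = None, len(values) + 1
    # Try the midpoint between each pair of consecutive distinct values.
    for lo, hi in zip(candidates, candidates[1:]):
        t = (lo + hi) / 2
        errors = sum((v > t) != y for v, y in zip(values, labels))
        if errors < best_errors:
            best_threshold, best_errors = t, errors
    return best_threshold, best_errors


def predict(value, threshold):
    """Apply the learned rule to a new value."""
    return value > threshold


# Hypothetical training data: minutes on site and whether the visitor bought.
minutes = [1, 2, 3, 4, 8, 9, 10, 12]
bought = [False, False, False, False, True, True, True, True]

threshold, training_errors = fit_stump(minutes, bought)
print(f"threshold={threshold}, training errors={training_errors}")
print(predict(5, threshold), predict(7, threshold))
```

A full decision tree repeats this kind of split recursively on several features; the stump only shows the core step of choosing a split that separates the classes.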

Clustering Algorithms

Clustering algorithms are unsupervised learning methods that group similar data points together without any prior knowledge about the groups themselves. Clustering can be used for market segmentation tasks where customers with similar characteristics are grouped together so they can be targeted with tailored marketing campaigns.

It can also be used for anomaly detection tasks where outliers in the dataset are identified and flagged for further investigation by experts. Popular clustering algorithms include k-means clustering and hierarchical clustering methods like agglomerative clustering.
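The following is a simplified sketch of k-means on one-dimensional data, here a made-up list of annual customer spend. It alternates between assigning each point to its nearest centroid and moving each centroid to the mean of its points. The starting centroids are fixed by hand so the run is reproducible; practical implementations usually choose them at random or with a seeding scheme, and `kmeans_1d` is a name invented for this example.

```python
def kmeans_1d(points, centroids, iterations=10):
    """Lloyd's algorithm on 1-D data, starting from the given centroids."""
    centroids = list(centroids)
    clusters = [[] for _ in centroids]
    for _ in range(iterations):
        # Assignment step: attach each point to its nearest centroid.
        clusters = [[] for _ in centroids]
        for p in points:
            nearest = min(range(len(centroids)), key=lambda i: abs(p - centroids[i]))
            clusters[nearest].append(p)
        # Update step: move each centroid to the mean of its cluster.
        # An empty cluster keeps its previous centroid.
        new_centroids = [
            sum(c) / len(c) if c else centroids[i]
            for i, c in enumerate(clusters)
        ]
        if new_centroids == centroids:
            break  # Converged: assignments will no longer change.
        centroids = new_centroids
    return centroids, clusters


# Hypothetical annual spend per customer.
spend = [100, 120, 110, 900, 950, 1000]
final_centroids, segments = kmeans_1d(spend, centroids=[0, 500])
print(final_centroids)
print(segments)
```

In a market segmentation setting, each resulting cluster would correspond to a customer group, and points far from every centroid could be flagged as candidates for anomaly review.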

FAQs About Data Analysis Tools and Techniques in Research

What are data analysis tools in research?

Data analysis tools in research are used to analyze and interpret data from various sources. These tools can help researchers identify trends, correlations, and patterns in their data that may not be visible with traditional methods.

Commonly used data analysis tools and techniques in research include statistical software packages such as SPSS or SAS, visualization software like Tableau or Power BI, machine learning algorithms for predictive analytics, text mining techniques for natural language processing (NLP), and GIS mapping programs for spatial analysis.

All of these tools provide powerful insights into the underlying structure of a dataset and enable researchers to gain a deeper understanding of their research questions.

What are the four techniques for data analysis?

In data analytics and data science, there are four main types of data analysis: descriptive (what happened), diagnostic (why it happened), predictive (what is likely to happen), and prescriptive (what to do about it).

Conclusion

Data analysis tools and techniques in research are essential for R&D and innovation teams to gain insights quickly. Data collection, exploratory data analysis (EDA), and predictive modeling techniques can all be used to help teams analyze their data more effectively.

Are you part of an R&D or innovation team? Do you want to unlock the power of data analysis tools and techniques in research and gain deeper insights faster? Cypris is your answer!

Our platform centralizes all the necessary data sources for research teams into one easy-to-use interface, giving you rapid time to insight. Join us today and discover how our powerful tools can help transform your workflows.

Keep Reading

AI patent and paper intelligence platforms are a distinct enterprise software category that unifies patent data, scientific literature, and other technical sources into a single AI-searchable corpus designed for corporate R&D and innovation teams. The category emerged because the questions R&D leaders actually ask, what is being invented in this space, who is moving fastest, where are the white spaces, cannot be answered by patent databases or scientific search engines in isolation. A modern AI patent and paper intelligence platform combines semantic search, retrieval-augmented generation, agentic workflows, and a structured technical ontology over hundreds of millions of documents, so a single query can surface the relevant patents, papers, and signals an R&D team needs to make a decision.

This category is not a rebrand of patent search. Patent search tools were designed for episodic legal work performed by trained patent professionals. AI patent and paper intelligence platforms are designed for continuous use by R&D scientists, innovation strategists, and technology scouts who treat intelligence as infrastructure rather than a project.

Why the Category Exists

For most of the last two decades, technical intelligence at large companies was split across two parallel stacks. Patent professionals worked inside legacy patent platforms built for prior art and prosecution workflows. Scientists worked inside academic literature databases and citation tools. The two stacks rarely connected, and neither was designed to answer the integrated questions R&D directors actually ask.

That separation collapsed for three reasons. The first is volume. The World Intellectual Property Organization reported more than 3.55 million patent applications filed globally in 2023, the highest figure on record, and global scientific publication output now exceeds 3 million peer-reviewed articles per year [1][2]. No human team can read across that volume manually, and keyword search degrades sharply as corpus size grows.

The second reason is the convergence of patents and papers as evidence. In emerging fields such as solid-state batteries, generative biology, and advanced materials, the leading signal often appears first in a preprint or conference paper, then in a patent filing months or years later. A team that monitors only patents sees the lagging indicator. A team that monitors only literature misses the commercial intent. Modern technical decisions require both sources analyzed together.

The third reason is the maturation of large language models and retrieval-augmented generation. Until recently, semantic search across heterogeneous technical corpora was a research problem. With current frontier models and structured retrieval, it is now a product category. The same architecture that allows a model to summarize an inbox can, with the right corpus and the right ontology, summarize the state of the art in a technology domain.

The result is a new category of enterprise software. Not a patent database with an AI feature added on, and not a chatbot pointed at PubMed, but a purpose-built platform layer that treats patents, scientific papers, and other technical signals as a unified intelligence substrate for R&D teams.

What Defines a Platform Rather Than a Tool

The distinction between a tool and a platform is consequential when budgets reach enterprise scale. A tool answers a query. A platform supports a function. AI patent and paper intelligence platforms share several characteristics that separate them from search tools that have added an AI feature.

The first is unified corpus depth. A platform integrates hundreds of millions of patents from major jurisdictions with scientific literature from peer-reviewed journals, preprint servers, and conference proceedings, alongside other technical sources such as grant data, regulatory filings, and product disclosures. The leading platforms in this category cover 500 million or more technical documents and continuously ingest new ones. Search tools that cover a single source type, however polished, cannot answer cross-domain questions.

The second is a structured technical ontology. Raw vector search across heterogeneous technical documents produces noisy results because the same concept is described differently in patents, papers, and product literature. A purpose-built R&D ontology encodes the relationships between technical concepts, materials, mechanisms, and applications, so a semantic query for, say, sulfide solid electrolytes returns the relevant evidence regardless of whether a given document uses that exact phrase. Ontology quality is one of the most important and least visible differentiators in this category.

The third is agentic workflow support. A search box returns documents. A platform produces deliverables. Modern AI patent and paper intelligence platforms include agentic systems that can run multi-step research workflows, retrieve evidence across the corpus, synthesize findings, and produce structured reports such as landscape analyses, white space maps, and competitor profiles. These workflows are what allow a small R&D intelligence team to support a large innovation organization.

The fourth is enterprise-grade infrastructure. Corporate R&D intelligence touches sensitive competitive information, regulated industries, and confidential project context. A platform suitable for Fortune 500 deployment must offer enterprise-grade security, role-based access controls, audit logging, and data handling guarantees that consumer or free tools do not provide.

The fifth is configurability. Different R&D programs need different views of the world. A platform allows users to configure custom corpuses of patent and non-patent literature scoped to a technology domain, a competitor set, or a strategic initiative. This corpus configuration capability is directly tied to recent research on context engineering, which has shown that focusing a language model on the relevant subset of data, rather than the entire web, materially improves the quality of generated analysis [3].

The Role of AI in the Category

The AI in AI patent and paper intelligence platforms is not a single feature. It is a layered architecture, and the quality of each layer compounds.

At the retrieval layer, semantic embedding models convert technical documents into vector representations that capture meaning rather than surface text. A well-implemented retrieval system surfaces a relevant patent about lithium polymer electrolytes even when the user query uses different terminology, because the underlying concepts are close in embedding space. Retrieval quality on technical content is highly sensitive to the embedding model used, the ontology applied on top, and the cleanliness of the underlying corpus.
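The idea of documents being "close in embedding space" can be sketched with cosine similarity, a common way to compare embedding vectors. The three-dimensional vectors below are hand-made stand-ins, not output from any real embedding model, and the document labels are hypothetical; production embeddings typically have hundreds or thousands of dimensions.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors (1.0 means same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Toy "embeddings" invented for illustration only.
documents = {
    "patent: lithium polymer electrolyte": [0.9, 0.1, 0.0],
    "paper: solid electrolyte conductivity": [0.8, 0.3, 0.1],
    "patent: bicycle gear mechanism": [0.0, 0.2, 0.9],
}
query_vector = [0.85, 0.2, 0.05]  # stand-in for an embedded user query

scores = {
    title: cosine_similarity(query_vector, vector)
    for title, vector in documents.items()
}

# Rank documents from most to least similar to the query.
results = sorted(scores, key=scores.get, reverse=True)
for title in results:
    print(f"{scores[title]:.3f}  {title}")
```

In this toy setup the two electrolyte documents score far higher than the unrelated one even though none of them shares exact wording with the query, which is the behavior the retrieval layer relies on.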

At the reasoning layer, large language models perform synthesis, comparison, and extraction over retrieved evidence. The frontier models available in 2026, including the Claude 4 series, GPT-5.1, and the o-series reasoning models, have substantially improved on technical comprehension, structured output, and citation behavior compared to the models available even eighteen months ago. Platforms that have integrated official enterprise partnerships with these model providers have access to the strongest available reasoning, with the data handling and privacy guarantees enterprise buyers require.

At the agent layer, orchestrators chain retrieval and reasoning steps together to perform end-to-end workflows. An agent tasked with producing a competitive landscape on a technology domain might iterate across the corpus, identify the leading assignees, retrieve their representative patents and publications, summarize each one, build a comparison matrix, and produce a written report with citations. Recent research on agentic context compression suggests that models perform better when given concise, well-structured claims rather than dense source material, which is why high-quality ingestion and ontology work matters even more in the agent era [4].

The combination of retrieval, reasoning, and agent layers is what allows a modern platform to take a question such as "What is the competitive position of company X in solid-state batteries?" and return a structured answer in minutes rather than weeks of analyst time.

Use Cases That Justify the Category

The use cases that justify investment in an AI patent and paper intelligence platform are the ones where speed and breadth matter more than legal precision. These are not patent attorney workflows. They are R&D and strategy workflows.

Technology scouting is one of the clearest examples. When an innovation team needs to identify emerging approaches to a problem, the relevant evidence is spread across patent filings, recent papers, startup disclosures, and grant awards. A unified AI platform allows a scout to surface candidates across all these sources, cluster them by approach, and produce a shortlist in days rather than months.

Competitive landscape analysis is another. Understanding a competitor's technical trajectory requires reading across their patent portfolio and their scientific publications, then identifying where the two diverge from public product disclosures. Platforms with agentic synthesis can produce competitor profiles that integrate all three signals.

White space and opportunity mapping benefits especially from cross-source intelligence. The most interesting technical opportunities are often the gaps between heavy patent activity and heavy publication activity, or the spaces where academic momentum is building but commercial filings have not yet appeared. These patterns are invisible inside a single-source tool.

Freedom to operate at the R&D stage is also increasingly handled with AI patent and paper intelligence platforms, although final legal opinions still belong with patent counsel. Early-stage FTO scans performed in-house by R&D teams help engineering leaders make build versus pivot decisions before legal hours are spent.

Continuous monitoring rounds out the use case set. Once a corpus is configured for a strategic area, agents can surface new patents and papers as they appear, summarize their relevance, and route them to the right internal stakeholders. This converts patent and paper intelligence from a periodic study into an ongoing capability.

Evaluation Criteria for Enterprise R&D Buyers

R&D directors and innovation leaders evaluating platforms in this category should weigh several criteria that map to the structural definitions above.

Corpus coverage is the first. The platform should integrate patent data from all major jurisdictions, scientific literature from peer-reviewed and preprint sources, and ideally additional technical signals such as grants, clinical trials, and regulatory filings. Total document counts matter, but freshness, completeness of metadata, and coverage of non-English sources matter more.

Semantic search quality is the second. The most reliable way to evaluate this is to run real queries from the buyer's own technical domain and inspect the top results. Embedding quality and ontology quality are difficult to assess from marketing materials alone.

Agent and report quality is the third. A platform that produces a clean landscape report with proper citations and a defensible structure delivers materially more value than one that returns a chat answer. Buyers should ask vendors to run an agent task on a sample domain during evaluation.

Enterprise infrastructure is the fourth. Security posture, data handling commitments, single sign-on, audit logging, and the ability to meet Fortune 500 procurement requirements should be confirmed early. Tools that cannot pass enterprise security review will stall regardless of search quality.

Audience fit is the fifth. A platform built for patent attorneys typically defaults to legal workflows and terminology that R&D users find friction-laden. A platform built for R&D scientists and innovation strategists defaults to the language and outputs those users need. The mismatch is rarely fixable through training.

Configurability is the sixth. The ability to define custom corpuses, save them, share them across teams, and route updates from them is what turns a search platform into a research function.

Pricing structure is the final criterion. Enterprise platforms in this category are priced for sustained organizational use, not per-search consumption. Buyers should map the expected number of seats, the breadth of teams using the platform, and the report and monitoring volumes against the proposed contract.

Where the Category Is Going

The trajectory of AI patent and paper intelligence platforms over the next eighteen months follows the broader trajectory of enterprise AI. Three shifts are already visible.

The first is deeper agent integration. Platforms are moving from question-answering toward autonomous research workflows where an agent runs for minutes or hours and returns a finished deliverable. This compresses the work cycle for R&D intelligence functions and makes ambitious use cases such as cross-portfolio monitoring practical for teams that previously could not staff them.

The second is custom corpus standardization. The recognition that focusing models on the right subset of data improves output is reshaping product design. Configurable corpuses scoped to a technology, a competitor set, or a project are becoming the default rather than the exception, in line with the broader move toward context engineering in applied AI [3].

The third is enterprise model partnerships. Platforms with official enterprise API partnerships with the leading model providers, including OpenAI, Anthropic, and Google, have a structural advantage in both capability and compliance. Frontier models change frequently, and the platforms wired into the official enterprise pipelines benefit from each new release without renegotiating data handling terms.

The net effect is that AI patent and paper intelligence platforms are evolving from search experiences into research infrastructure. The buyers who treat them as the latter, rather than as a faster keyword search, will extract the most value.

A Note on Cypris

Cypris is an enterprise R&D intelligence platform built specifically for the use cases described above. The platform unifies more than 500 million patents and scientific papers into a single corpus accessible through semantic search and agentic workflows, with a proprietary R&D ontology designed to understand the relationships between technical concepts across patents and literature. Cypris holds official enterprise API partnerships with OpenAI, Anthropic, and Google, allowing the platform to deliver frontier model capabilities under enterprise data handling terms. Cypris Q, the platform's AI agent and report-generation layer, produces structured landscape analyses, competitor profiles, and white space maps that R&D teams use as primary deliverables rather than supporting research. The platform supports configurable custom corpuses of patent and non-patent literature, allowing organizations to focus their intelligence work on the technology domains, competitor sets, and strategic initiatives that matter to them. Cypris is built for R&D scientists and innovation strategists rather than IP attorneys, and is trusted by hundreds of enterprise customers and Fortune 500 R&D teams operating in regulated, security-conscious environments.

Most large R&D organizations now run some form of tech scouting. The shape varies enormously. A few companies have a dedicated technology scout sitting in the CTO's office producing quarterly horizon reports. More common is an innovation team that runs scouting sprints around specific themes when leadership asks for one. Increasingly common is some form of AI-assisted scouting workflow — a set of saved searches at the simple end, an agentic monitoring system at the more sophisticated end. The output quality across these approaches differs by an order of magnitude, and the most consequential variable separating the strong versions from the weak ones is not which AI model is underneath. It is how the scouting agent has been designed.

This guide is for innovation leaders, CTOs, R&D directors, BD and partnership teams, and corporate venture groups who want tech scouting to function as a continuous capability rather than a periodic deliverable. It explains what a tech scouting agent actually is, why agents that surface real intelligence look different from agents that produce volume, and how to design a scouting workflow that compounds value over time rather than restarting from zero every quarter.

What Tech Scouting Actually Has to Cover

Tech scouting is a forward-looking workflow. The question is not what the established competitive landscape looks like today; the question is what is emerging that the company should know about, where it is emerging, and why it matters to the strategy. That framing changes everything about how the work has to be done.

Scouting answers a small number of recurring questions. What new technologies are gaining momentum in areas adjacent to where we play? Which startups are forming around technical approaches that could disrupt our roadmap, and which could we partner with or acquire? Which research groups are producing work that will become commercially significant in three to five years, and what would it take to engage them? Which capabilities should we be building internally versus sourcing externally? Which competitors are quietly building positions in spaces we have not yet committed to? These questions do not have one-time answers. The answer this quarter and the answer next quarter are different, and the difference is precisely the signal the scouting workflow exists to capture.

The evidence base for these questions is messy and multi-source by nature. Scientific publications and preprints carry the earliest signal of where research is heading. Patent filings carry a slightly later but more strategically committed signal of where companies and inventors are placing technical bets. Startup formations, funding rounds, and corporate venture activity reveal where capital is moving and which technical theses sophisticated investors are willing to back. Government grants, program awards, and procurement filings flag where strategic priorities and non-dilutive funding are concentrating. Conference proceedings, technical talks, hiring patterns, regulatory filings, and the surrounding signal in trade press and industry analyst coverage round out the picture. Each source carries a different slice of the truth. None of them is sufficient on its own.

The implication is that a scouting agent watching one source — even a comprehensive one — produces a partial view. The signal that matters in scouting is usually cross-source. When a research group publishes three papers on a novel approach over eighteen months, when one of those authors leaves their academic position, when a small entity forms with a credible founding team and raises seed capital, when a corporate venture arm participates in the round, when an early grant award appears for the same research direction — none of those events is decisive on its own. Together, they are an emergence signal worth a senior leader's attention. An agent that sees only one source misses most of the picture. The intelligence is in the connection.

This is the workflow that older tools were not built for. Most legacy systems organize the world by source — a startup database here, a literature index there, a patent tool somewhere else, with the connections drawn by an analyst pivoting between tabs. The connection is the work. Doing that work continuously, across thousands of emergence events per week, in dozens of technology and business areas, is not a workload a team of human scouts can sustain. It is the workload tech scouting agents exist to absorb.

What a Tech Scouting Agent Actually Does

Most R&D and innovation organizations that say they have a tech scouting capability today are running a combination of saved Google Alerts, periodic searches in different databases, conference attendance, broker calls, and read-throughs of analyst reports. The work is real but episodic. Someone reads the alerts. Someone summarizes the conference. Someone reviews the analyst report. The interpretive work happens in a person's head, the institutional memory fades when they move on, and the next person to ask the same scouting question starts from a blank page.

A tech scouting agent inverts this pattern. The agent runs a defined scouting thesis continuously across the relevant evidence corpus, evaluates each new signal against the thesis using interpretive reasoning rather than keyword matching, dismisses what does not warrant attention, and escalates what does with a written rationale that explains why. The interpretive work moves from a person's head into a system that runs every day, applies consistent criteria, and produces a record the team can audit and refine.

Four functions distinguish a real scouting agent from a saved search with notifications.

It applies a strategic thesis rather than a query. Instead of matching documents against a Boolean string or a vector similarity threshold, the agent evaluates each new signal against a structured description of what the team is trying to learn and why. The thesis is interpretive, not lexical, which means the agent can recognize relevant signals even when the underlying language differs from how the team would have phrased a search.

It runs continuously, not on user-initiated demand. New papers, preprints, patent filings, funding announcements, grant awards, regulatory filings, and corporate disclosures arrive as a continuous stream. An agent designed for scouting evaluates this stream as it arrives, which eliminates the gap between when a relevant signal enters the world and when the team learns about it.

It filters for signal, not match. Most saved searches return high false-positive rates because the keywords appear in unrelated contexts, or because the technical match is real but the strategic relevance is low. An agent reads each candidate signal, evaluates it against the thesis, and discards what does not pass the relevance bar. The result is a substantially smaller and higher-quality escalation queue.

It produces a written rationale. When the agent escalates a signal, it explains why — what about the disclosure matched the thesis, how it relates to prior signals the agent has already evaluated, and what decision or downstream workflow it might inform. This rationale becomes a record the team can audit. When the agent gets it wrong, the team can see where the reasoning broke and refine the thesis. When the agent gets it right, the rationale accelerates the human follow-up because the framing is already done.

These four functions are what transform scouting from a notification system into an analytical process that compounds.
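The evaluate, dismiss, and escalate pattern described above can be sketched in a few lines of Python. This is a minimal illustration, not a description of how any particular platform works: the `Signal`, `Escalation`, and `triage` names, the 0.7 relevance bar, and the keyword-based `toy_evaluator` are all assumptions made for the example. In a real agent the evaluator would be an interpretive reasoning step rather than a keyword check.

```python
from dataclasses import dataclass
from typing import Callable, Iterable


@dataclass
class Signal:
    source: str   # e.g. "paper", "patent", "funding" (illustrative labels)
    summary: str  # short description of the disclosure


@dataclass
class Escalation:
    signal: Signal
    score: float
    rationale: str  # written explanation the team can audit later


# An evaluator returns (relevance score in [0, 1], written rationale).
Evaluator = Callable[[Signal], tuple[float, str]]


def triage(signals: Iterable[Signal], evaluate: Evaluator, bar: float = 0.7) -> list[Escalation]:
    """Evaluate every incoming signal and keep only those at or above the bar."""
    escalated = []
    for signal in signals:
        score, rationale = evaluate(signal)
        if score >= bar:
            escalated.append(Escalation(signal, score, rationale))
    return escalated


def toy_evaluator(signal: Signal) -> tuple[float, str]:
    """Stand-in for interpretive reasoning; a hypothetical keyword check for demo purposes only."""
    if "solid-state battery" in signal.summary.lower():
        return 0.9, "Falls inside the thesis scope on solid-state batteries."
    return 0.1, "No connection to the thesis scope."


incoming = [
    Signal("paper", "New solid-state battery cathode coating reported"),
    Signal("news", "Quarterly earnings call scheduled"),
]
for item in triage(incoming, toy_evaluator):
    print(item.signal.source, item.score, item.rationale)
```

Keeping the rationale on every escalation record is the design choice that matters here: it is what lets the team see where the reasoning broke when the agent gets it wrong.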

The Four Components of a Strong Scouting Thesis

The thesis is the most important input to a tech scouting agent. The quality of the thesis sets the ceiling on the quality of the output, regardless of which platform or model sits underneath. Most weak scouting output traces back to a thesis that was too short to support real work — a few sentences naming a technology area, with no specification of what would make a finding meaningful or how the team would use it.

There is a useful piece of recent prompt engineering research that bears on this directly. The discipline reorganized through 2025 around what researchers and frontier AI labs now call context engineering — the recognition that for serious knowledge work, the ceiling on output quality is set less by how a prompt is phrased and more by what information the system has been given to reason over. Andrej Karpathy described context engineering as the practice of populating the model's working context with precisely the right information for the task. Research on agentic systems published through late 2025 documented what researchers describe as brevity bias — the tendency of prompt optimization to favor concise instructions, which sounds appealing but causes the omission of domain-specific detail that actually drives output quality on knowledge-intensive tasks. The translation for tech scouting is that strong scouting theses are tight on filler but rich on domain specification. They are not short.

A well-framed scouting thesis has four components.

The strategic envelope. State why the scouting is being done and which business decisions it is meant to inform. A thesis written to support open innovation and partnership identification is different from a thesis written to support corporate venture screening, and both are different from a thesis written to support technology emergence monitoring for an executive committee or M&A target identification for corporate development. The agent can calibrate its evaluation criteria to the decision the scouting supports — but only when the decision is explicitly named. A scouting workflow without a named decision tends to escalate everything that looks interesting, which is functionally the same as escalating nothing.

The technical and market scope. Describe the technologies, capabilities, applications, and market segments of interest in specific terms. Name the methods, performance thresholds, end-use cases, and customer segments that are in scope. Name what is explicitly out of scope — the adjacent areas the team does not want the agent pulled into. List terminology variants the field uses for the same concept, particularly where industry vocabulary differs from academic vocabulary, and where new terminology has begun to displace older usage. The scope is what allows the agent to recognize relevance accurately at the edges, where most genuine emergence signals live.

The evidence priorities. State which sources of evidence matter most for this scouting question and why. For some theses, scientific publications are the leading indicator — emerging technical approaches typically appear in academic literature six to eighteen months before they reach commercial products. For other theses, startup formations and funding events are the earliest signal of where capital and talent are converging. For still others, government grant awards or regulatory filings reveal emergence first. The agent's evaluation logic depends on understanding which source carries the leading signal for the specific question, and how to weight signals from different sources when they appear together. Without this specification, the agent treats all sources as equally informative, which is rarely true.

The escalation criteria. Specify what makes a finding worth surfacing. A new initiative from a primary competitor likely warrants escalation regardless of how strong the technical match is. A scientific publication from an unknown research group likely warrants escalation only when the technical signal is strong and other independent signals point in the same direction. A startup formation likely warrants escalation only when the team behind it has a credible technical pedigree and the funding source signals strategic intent rather than seed-stage exploration. The criteria need to be explicit so the agent can apply them consistently and the team can tune them as the thesis evolves.

The discipline of writing a thesis with these four components is itself valuable. It forces the team to articulate what they are actually trying to learn, why it matters to the business, and how they would recognize a useful answer when they saw one. Teams that adopt this framing pattern tend to find that the thesis-writing exercise improves their scouting work even before any agent is run against it.
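One way to make the four components concrete is a small template that refuses to treat a thesis as ready until each section carries real detail. The sketch below is an assumption-laden illustration: the `ScoutingThesis` class, its field names, and the 25-word minimum are hypothetical choices for the example, not a standard from the source.

```python
from dataclasses import dataclass, field


@dataclass
class ScoutingThesis:
    """Hypothetical template mirroring the four thesis components."""
    strategic_envelope: str       # why the scouting is done and which decision it informs
    technical_market_scope: str   # in-scope methods, thresholds, use cases, segments
    evidence_priorities: str      # which sources lead for this question, and why
    escalation_criteria: str      # what makes a finding worth surfacing
    out_of_scope: list[str] = field(default_factory=list)
    terminology_variants: list[str] = field(default_factory=list)


REQUIRED = (
    "strategic_envelope",
    "technical_market_scope",
    "evidence_priorities",
    "escalation_criteria",
)


def missing_components(thesis: ScoutingThesis, min_words: int = 25) -> list[str]:
    """Return the required components that look too thin to support real work.

    The word-count threshold is an arbitrary proxy for "rich on domain
    specification"; tune or replace it with a human review step.
    """
    return [name for name in REQUIRED if len(getattr(thesis, name).split()) < min_words]


draft = ScoutingThesis(
    strategic_envelope="Partnership identification.",
    technical_market_scope="Batteries.",
    evidence_priorities="Papers first.",
    escalation_criteria="Anything interesting.",
)
print(missing_components(draft))  # every component is flagged as too thin
```

A word count is a crude check, but it catches the most common weak thesis: a few sentences naming a technology area and nothing else.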

What to Watch For When Designing Scouting Agents

Three failure modes appear repeatedly in tech scouting agent deployments, and each is a design problem rather than a model problem.

The first is theses that are too broad, which produce escalation queues so large the team stops reading them. A scouting agent that escalates fifty findings a week will be functionally abandoned within a month. The remedy is rarely to make the agent more selective in isolation — it is to narrow the thesis itself, focus on the specific decisions the scouting supports, and tune the escalation criteria upward until what arrives is genuinely worth the team's time. A useful test is whether the team would feel a real loss if the scouting output stopped arriving. If the answer is no, the thesis needs to be sharper.

The second is single-source agents — scouting workflows that watch only one type of evidence, whether that is news, papers, patents, or startup data. The genuine emergence signals in tech scouting almost always show up across multiple sources, in a particular sequence, over a particular time window. An agent that sees one source can detect that something is happening but cannot evaluate whether the something is meaningful. A multi-source agent can recognize when a paper, a hire, a startup formation, and a funding round all point in the same direction, which is a fundamentally different category of intelligence than any one signal in isolation.

The third is scouting agents that are not connected to a downstream decision process. An agent that produces a weekly digest read by no one, or a digest whose findings never enter Stage-Gate reviews, partnership evaluations, M&A pipelines, or executive briefings, produces no operational value regardless of how good the underlying analysis is. The scouting workflow needs to terminate in a decision interface (a project workspace, a portfolio review, a CTO briefing, a venture screening pipeline, a corporate development tracker) where the business can actually act on the findings. A scouting agent without a downstream destination is an interesting demo, not a capability.

The Evidence Corpus Question

Here is where most tech scouting deployments hit their ceiling, often without realizing it.

A tech scouting agent's reasoning quality is bounded by what the agent is reasoning over. A general-purpose AI tool is reasoning over its training data, which is a partial and outdated slice of any specialized field. A scouting workflow built on a single-source database is reasoning over only that source. Both architectures impose ceilings on output quality that no amount of prompt refinement will fully lift.

This is the structural reason purpose-built R&D intelligence platforms produce different output than general-purpose AI tools or single-source legacy systems for scouting work. The strongest platforms maintain a unified corpus that combines scientific literature, patents, and adjacent technical and market signal in a single index, and allow scouting agents to reason across that combined corpus rather than against any one slice of it. Cross-source reasoning — recognizing that a paper, a patent, a funding event, and a hire all point in the same direction — only works when the agent has access to all of those signals in a structure that lets it connect them.

The strongest platforms go further and allow teams to configure custom corpuses focused on specific scouting theses. A custom corpus narrows the working evidence base to what is actually relevant for the question at hand, which lets the agent's reasoning operate on signal rather than fight through noise. A general index covers everything across all technology areas, and the signal that matters for a specific scouting thesis is buried in a much larger volume of irrelevant material. Even strong AI reasoning struggles to consistently find and weight the right evidence at that ratio. A focused corpus, scoped to the technical and strategic envelope of the thesis, produces meaningfully better scouting output than the same agent run against a general index.

Custom corpus configuration matters more for scouting than for most adjacent workflows. A landscape question is bounded — the scope is defined, the deliverable is a snapshot, and the corpus that supports it can be constructed once. A scouting question is open-ended — the scope evolves as the field evolves, the deliverable is continuous, and the corpus needs to evolve alongside the thesis. Platforms that treat custom corpus configuration as a first-class capability rather than an advanced feature are the ones where scouting workflows continue producing useful output six and twelve months in.

Where Cypris Fits

Cypris is an enterprise R&D intelligence platform built for this category of work. The platform unifies more than 500 million patents and scientific papers in a single corpus, applies a proprietary R&D ontology developed for the language of corporate research and innovation work, and provides agentic workflows that R&D, innovation, and corporate development teams configure to run continuous scouting against defined theses. Cypris maintains official API partnerships with OpenAI, Anthropic, and Google, which means the agentic reasoning sitting underneath the platform is built on frontier models accessed through enterprise contracts rather than scraped or rate-limited public APIs, with enterprise-grade security architecture that meets Fortune 500 requirements.

The capability that matters most for the scouting workflow described in this guide is the combination of unified corpus, custom corpus configuration, and agentic execution. A scouting team using Cypris can encode a strategic thesis, configure a focused corpus scoped to the technical and market envelope of that thesis, and run an agent against it continuously. The agent applies the team's escalation criteria, surfaces findings with written rationale, and integrates the output into the team's downstream R&D and corporate development processes. The architecture was designed from the ground up around the workflow needs of R&D scientists, innovation strategists, and corporate development teams rather than IP attorneys running discrete search engagements, which is reflected throughout the system in how scouting is structured, how findings are presented, and how the human-in-the-loop refinement of the thesis works in practice.

For an innovation team mapping a specific emerging technology space, this means the agent is reasoning over the research and technical signal actually relevant to that space, recognizing emergence patterns across sources, and surfacing findings the team would not have caught running periodic searches against a general index. For a corporate venture team screening a category of startups, the corpus can be configured around the technical area the venture thesis covers, and the agent can monitor for new entrants, technical pivots, and competitive activity continuously. For a corporate development team identifying M&A targets, the corpus can be configured around the capability gaps the strategy is trying to close, and the agent can surface companies whose technical and commercial trajectory aligns with the thesis. For a CTO running a horizon-monitoring program, the platform can support multiple parallel scouting theses, each with its own corpus, agent, and escalation logic, and integrate the combined output into the executive briefing cadence the CTO actually runs.

The combination of a unified research and technical corpus, custom corpus configuration scoped to specific theses, agentic execution against frontier reasoning models, and integration with the workflows R&D and innovation teams already run is what separates scouting output that supports executive decisions from scouting output that summarizes what an analyst happened to read this week. Hundreds of enterprise customers, including Fortune 500 R&D and innovation organizations, rely on the platform for exactly this category of work.

What Your Team Can Do This Quarter

Three things will measurably improve the tech scouting your team produces, regardless of which platform you use.

Standardize how scouting theses are written, with the four components described above — strategic envelope, technical and market scope, evidence priorities, and escalation criteria. A simple template that asks each scout to fill in these four sections before any agent runs against the thesis produces noticeably better output across the board. The discipline of writing a thesis to this standard is itself a quality lever, because it forces explicit articulation of what would otherwise stay implicit.

Establish a quality standard for what defensible scouting output looks like. The output a scouting agent produces should be grounded in specific citable signals — named entities, paper or patent identifiers, concrete dates, specific funding events — rather than vague references to activity in a space. It should distinguish between what the evidence shows and what the evidence suggests. It should calibrate its confidence by saying where the signal is thick and where it is thin. It should explicitly identify the assumptions and scope choices the conclusions depend on. Output that does not meet this standard does not get put in front of executives, regardless of which platform produced it.
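The quality standard above can be turned into a simple pre-review checklist. The sketch below is one hypothetical way to encode it: the `Finding` fields and the gap messages are assumptions for illustration, and a check like this only confirms that each element is present, not that it is correct.

```python
from dataclasses import dataclass, field


@dataclass
class Finding:
    claim: str
    citations: list[str] = field(default_factory=list)    # paper or patent identifiers, named funding events
    evidence_shows: str = ""     # what the evidence directly supports
    evidence_suggests: str = ""  # inference that goes beyond the evidence
    confidence: str = ""         # where the signal is thick and where it is thin
    assumptions: list[str] = field(default_factory=list)  # scope choices the conclusion depends on


def quality_gaps(finding: Finding) -> list[str]:
    """List the parts of the quality standard this finding does not yet meet."""
    gaps = []
    if not finding.citations:
        gaps.append("no citable signals")
    if not finding.evidence_shows or not finding.evidence_suggests:
        gaps.append("shows/suggests not separated")
    if not finding.confidence:
        gaps.append("confidence not calibrated")
    if not finding.assumptions:
        gaps.append("assumptions not stated")
    return gaps


vague = Finding(claim="Activity is rising in the space")
print(quality_gaps(vague))  # all four gaps are reported
```

A finding with a non-empty gap list would be held back from executive review until the missing elements are filled in.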

Evaluate whether your current scouting toolkit supports continuous agentic execution against a unified, configurable corpus. If it does not — if the team is running periodic searches against single-source databases and synthesizing the output by hand — you are leaving substantial scouting capability on the table. Any platform evaluation you run should put unified corpus coverage, custom corpus configuration, and agentic workflow architecture near the top of the criteria list, ahead of search interface aesthetics or specific dashboard features.

The teams getting the most value from AI in tech scouting are not the teams with the most clever prompts or the highest tool budgets. They are the teams that have framed their scouting theses well, set quality standards their output has to meet, and chosen tools that let agents run continuously against the evidence base that matters for the decisions the scouting supports.

Frequently Asked Questions

What is a tech scouting agent?

A tech scouting agent is an AI system that runs a defined technology scouting thesis continuously across a multi-source evidence corpus, evaluates new signals against the thesis using interpretive reasoning, and escalates findings worth human attention with a written rationale explaining why. It differs from a saved search with notifications in that it applies strategic interpretation rather than keyword matching, runs continuously rather than on user-initiated demand, filters for signal rather than lexical match, and produces auditable reasoning rather than document lists. Tech scouting agents are most valuable for R&D, innovation, corporate venture, and corporate development teams that need continuous awareness of emerging technologies, startups, research, and capabilities rather than periodic snapshots.

What kinds of decisions does a tech scouting agent support?

Tech scouting agents support a recurring set of decisions: which technologies to monitor for strategic relevance, which research groups and inventors to engage for partnerships, which startups to evaluate for licensing, investment, or acquisition, which capability gaps to close internally versus source externally, and which competitive moves to track in spaces the company has not yet committed to. Each of these decisions has a different evidence priority and escalation criterion, which is why the strategic envelope of the scouting thesis matters as much as the technical scope.

What should a tech scouting thesis include?

A strong tech scouting thesis has four components: the strategic envelope (why the scouting is being done and what business decisions it informs), the technical and market scope (what technologies, capabilities, and segments are in scope and what is explicitly out of scope, with terminology variants specified), the evidence priorities (which sources carry the leading signal for this question and how signals from different sources should be weighted when they appear together), and the escalation criteria (what makes a finding worth surfacing to the team). Theses missing one or more of these components tend to produce scouting output that is either too noisy to use or too narrow to capture genuine emergence.

Why does the evidence corpus matter so much for tech scouting?

The corpus the scouting agent reasons over sets the ceiling on what the agent can recognize. A general-purpose AI tool reasons over its training data, which is partial and outdated for most specialized fields. A single-source database limits the agent to the signal carried in that source, missing cross-source emergence patterns. A unified, configurable corpus lets the agent reason across the full evidence base relevant to a specific thesis, which is where genuine scouting intelligence comes from. The recent shift in prompt engineering toward what researchers call context engineering reinforces this point: for serious knowledge work, the body of evidence the AI has access to matters more than the cleverness of the prompt.

What does cross-source reasoning mean in tech scouting?

Cross-source reasoning is the recognition that genuine emergence signals usually appear in a particular sequence across multiple sources (papers, patents, hires, startup formations, funding events, grants, regulatory filings) rather than in any one source in isolation. A tech scouting agent capable of cross-source reasoning can identify when a research group's papers, a key author's job change, a new startup's formation, and a corporate venture investment all point in the same direction, which is a substantially stronger signal than any one of those events alone. Single-source agents cannot perform this analysis; multi-source agents can, but only when the underlying corpus is structured to support the connections.

How often should a tech scouting agent run?

For most R&D, innovation, and corporate development applications, daily execution is appropriate, because new research, funding announcements, and corporate disclosures arrive continuously and the value of scouting is partly its currency. Weekly cadence is sometimes adequate for slower-moving technology domains, but the marginal cost of running an agent daily versus weekly is low, and the latency benefit is meaningful when the scouting informs time-sensitive decisions like partnership negotiations, investment rounds, or competitive responses.

What are the most common failure modes of tech scouting agents?

Three failure modes appear repeatedly. The first is theses that are too broad, producing escalation queues so large the team stops reading them. The second is single-source agents that watch only one type of evidence, missing cross-source emergence patterns that constitute most genuine scouting signal. The third is scouting agents disconnected from downstream decision processes, where the output never reaches Stage-Gate reviews, partnership evaluations, M&A pipelines, or executive briefings that could act on it. Each is a design problem rather than a model problem.

Do general-purpose AI tools work for tech scouting?

General-purpose AI tools can produce scouting-shaped output but rarely scouting-quality output for specialized R&D and innovation fields. The model is reasoning from whatever research, technical, and market data happened to be in its training data, which is a partial and outdated slice for most domains. The output sounds confident but the underlying evidence is often missing, generic, or wrong. For scouting workflows that inform R&D investment, partnership, corporate venture, or M&A decisions, purpose-built R&D intelligence platforms with current, comprehensive corpuses produce substantially more reliable output.

How do tech scouting agents integrate with downstream decision processes?

A scouting agent's output is only valuable when it connects to a decision the organization is actually making. The integration usually takes one of three forms: routing escalated findings into project workspaces where program leads can act on them, feeding scouting output into Stage-Gate reviews, partnership evaluations, M&A pipelines, or portfolio decisions on a defined cadence, or producing structured executive briefings for technology committees and corporate venture boards. Scouting workflows that terminate in an inbox produce no operational value; scouting workflows that terminate in a decision produce compounding value over time.

What separates an enterprise R&D intelligence platform from a general AI tool for scouting work?

Enterprise R&D intelligence platforms maintain unified corpuses that combine scientific literature, patents, and adjacent technical and market signal, support custom corpus configuration scoped to specific scouting theses, run agentic workflows continuously rather than on user-initiated demand, apply domain-specific ontologies trained on the language of technical research and innovation, and integrate with the downstream R&D and corporate development processes where scouting findings need to reach decisions. General AI tools provide reasoning capability but lack the corpus, the configurability, and the workflow integration that scouting at enterprise scale requires.

Citations

- Chesbrough, H. Open Innovation: The New Imperative for Creating and Profiting from Technology. Harvard Business School Press, 2003.

- Ansoff, H.I. "Managing Strategic Surprise by Response to Weak Signals." California Management Review, 1975.

- Karpathy, A. Public commentary on context engineering as the practice of populating model working context with precisely the right information for the task, 2025.

- Research on agentic context engineering and brevity bias in prompt optimization for knowledge-intensive tasks, 2025.

- Cypris platform documentation on unified research corpus, custom corpus configuration, and agentic scouting workflows.

Most R&D and IP teams at large enterprises are now using AI tools for patent landscape and white space analysis in some form. Some are running queries through general-purpose chatbots. Some are using AI features inside legacy patent search platforms. Some are evaluating purpose-built R&D intelligence systems. The range of output quality across these approaches is enormous — and the most common reason teams are disappointed with what they get is not the AI itself. It is what the AI has been given to work with.

This guide is for innovation leaders, IP managers, and R&D directors who need landscape and white space analyses they can put in front of executive committees, Stage-Gate reviews, and partnership decisions. It explains why the same question can produce a brilliant analysis from one tool and a vague summary from another, what good output actually looks like, and how to set up your team's AI patent work to consistently produce the better version.

Why the Same Question Produces Such Different Answers

A landscape question — say, "where is the white space in solid-state battery cathode materials for automotive applications above 400 kilometers of range" — is not really one question. It is a chain of work. The AI has to understand the technical envelope you mean, find the patents and scientific papers actually relevant to it, organize them into meaningful clusters, identify who is filing where, evaluate where activity is sparse, and then reason about whether the sparse areas represent genuine opportunity or something else.

Each link in that chain is a place the answer can break.

This is the shift the prompt engineering field went through in 2025. The discipline reorganized around what researchers and frontier AI labs now call context engineering — the recognition that for serious knowledge work, the ceiling on output quality is set less by how the question is phrased and more by what information the system has access to when it answers. Andrej Karpathy described it as the practice of populating the model's working context with precisely the right information, and the engineering teams at frontier labs have largely adopted this framing. For patent intelligence, the implication is direct: the body of evidence the AI is reasoning over matters more than the cleverness of the prompt.

When teams use a general-purpose AI tool, the AI is reasoning from whatever patent and scientific literature happened to be in its training data. For most specialized R&D fields, that is a thin and outdated slice. The output sounds confident because the model is good at sounding confident. But the actual evidence underneath the analysis is often missing, generic, or wrong. An R&D director who has spent a decade in the field can usually tell within thirty seconds. The named players are the obvious incumbents, and the actual emerging filers are missing. The white space identified is the kind any consultant could guess at without doing the work.

When teams use AI features bolted onto legacy patent search platforms, the corpus is more current and complete, but the AI is often reasoning over patent data alone. Patents are a lagging indicator. The underlying research reaches the scientific literature six to eighteen months before the corresponding patent filings appear. A landscape that looks at patents but not at the surrounding research is a landscape one cycle behind where the field actually is. White space identified this way frequently turns out, in retrospect, to have been white only because the team was looking in the wrong place.

When teams use a purpose-built R&D intelligence platform that combines patent and scientific literature with reasoning capability, the output quality jumps — but only if the team has framed the question well and configured the system to focus on the right body of evidence. This is where most of the remaining variance in output quality comes from, and it is the part the team actually controls.

What Good Landscape Output Looks Like

Before getting into how to ask, it is worth being clear about what to expect. A defensible AI-generated landscape has a few characteristics that consistently distinguish it from a generic one.

It is grounded in specific, citable patents and papers. Claims about who is leading in a sub-area are supported by named filings rather than vague references to "major players." Trends are supported by counts and time periods that can be checked. White space hypotheses cite the specific evidence that suggests the space is actually empty.

It distinguishes between what the data shows and what the data suggests. Strong output marks the difference between an observation ("filing activity in this sub-area declined 40% from 2022 to 2024") and an interpretation ("which suggests the field has matured or shifted to alternative approaches"). Weak output blurs the two.
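An observation of this kind is plain arithmetic that a reader should be able to check against the cited counts. A minimal sketch in Python, assuming hypothetical filing counts (the figures 150, 120, and 90 are invented for illustration and come from no real dataset):

```python
def percent_change(start_count: int, end_count: int) -> float:
    """Return the percentage change from start_count to end_count."""
    if start_count <= 0:
        raise ValueError("start_count must be positive to compute a percent change")
    return (end_count - start_count) * 100.0 / start_count


# Hypothetical filing counts for one sub-area (illustrative only).
filings_by_year = {2022: 150, 2023: 120, 2024: 90}

change = percent_change(filings_by_year[2022], filings_by_year[2024])
print(f"Filing activity changed {change:.0f}% from 2022 to 2024")
```

The number is the observation. Whether the decline means the field has matured or moved to alternative approaches is the interpretation, and it needs its own evidence.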

It calibrates its confidence. It says where the evidence is thick and where it is thin. It flags areas where the available data is insufficient to support a conclusion. It distinguishes between confirmed white space and merely apparent white space.

It tells you what would change the answer. Strong landscape output identifies the assumptions and scope choices the conclusions depend on. If extending the time window two more years would change the picture, it says so. If a slightly different definition of the technology would shift where the white space sits, it says so.

These characteristics are what make a landscape useful for executive decisions. An analysis that does not have them is not a landscape — it is a confidently worded summary of what the AI happened to remember about the topic.

How to Frame the Question

The single most important thing your team can do to improve AI-generated landscape and white space output is invest more time in framing the question. This is not about clever prompting. It is about giving the system enough specification to do real work rather than generic work.

Most weak output traces back to questions that were too short. A team types "give me a landscape of solid-state battery technology" and gets a generic landscape of solid-state battery technology — broad, surface-level, not actionable. The system did exactly what was asked. The asking was the problem.

There is a subtle but important point here that recent AI research has clarified. The older advice on prompting AI tools was to write longer prompts, with multiple worked examples and explicit instructions to "think step by step." That advice was reasonable for the previous generation of language models. It is less applicable to the reasoning-trained models — Claude 4-series, GPT-5.1, the o-series — that now sit underneath most serious patent intelligence platforms. These models reason internally before responding, which means explicit step-by-step instructions add little, and multiple worked examples can actually constrain output quality.

What still matters, and matters more than ever, is the substance of what the prompt specifies about the work. Research on agentic context engineering published in late 2025 documented what researchers call brevity bias: the tendency of prompt optimization to favor concise instructions, which sounds appealing but causes the omission of domain-specific detail that actually drives output quality on knowledge-intensive tasks. The practical translation is that strong prompts for patent landscape work are light on filler but rich in domain specification.

A well-framed landscape question has four components.

The technical envelope. Describe the technology in specific terms. Name the materials, methods, applications, and use cases that are in scope. Name what is explicitly out of scope — the adjacent areas that should not pull the analysis sideways. List terminology variants the field uses for the same concepts, especially where a concept is described differently in patents versus academic literature.

The strategic context. State why you are running the analysis. A landscape supporting a Stage-Gate decision on whether to advance a development program is a different analysis from a landscape supporting a competitive positioning exercise or a partnership target evaluation. The system can calibrate the depth and emphasis of the work to match the decision, but only if the decision is named.

The scope boundaries. Specify the time window, the priority jurisdictions, and any assignee or inventor focus. Landscapes without time boundaries default to all-time, which is rarely what you want. Landscapes without jurisdictional priority weight all geographies equally, which is also rarely what you want.

The output you need. Specify what the deliverable should contain. The technology cluster map. The lead filers in each cluster. The temporal trends. The white space hypotheses with supporting evidence. The limitations of the analysis. Specifying the output structure lets the system reason backward from the deliverable to the work required, which produces better output than asking for "a landscape report."

Most teams that adopt this framing pattern see substantial improvement in output quality within a few iterations of practice. The framing itself does not need to be technical. It needs to be specific.
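One way to make the four components routine is to capture them in a small structure that refuses to produce a prompt until every component is filled in. The sketch below is a hypothetical Python template, not the format of any particular platform. The class name, field names, and the example values (including the 2018 start year and the jurisdiction list) are assumptions made for illustration.

```python
from dataclasses import dataclass, fields


@dataclass
class LandscapeBrief:
    """Hypothetical template holding the four components of a landscape question."""
    technical_envelope: str   # in-scope and out-of-scope technology, terminology variants
    strategic_context: str    # the decision the analysis supports
    scope_boundaries: str     # time window, priority jurisdictions, assignee focus
    output_needed: str        # the sections the deliverable should contain

    def missing_components(self) -> list[str]:
        """Return the names of any components left blank."""
        return [f.name for f in fields(self) if not getattr(self, f.name).strip()]

    def to_prompt(self) -> str:
        """Render the brief as plain text, rejecting incomplete briefs."""
        missing = self.missing_components()
        if missing:
            raise ValueError(f"Brief is incomplete, fill in: {', '.join(missing)}")
        return "\n\n".join(
            f"{f.name.replace('_', ' ').title()}:\n{getattr(self, f.name).strip()}"
            for f in fields(self)
        )


# Example values are invented for illustration.
brief = LandscapeBrief(
    technical_envelope="Solid-state battery cathode materials for automotive use; "
                       "exclude liquid-electrolyte cells.",
    strategic_context="Supports a Stage-Gate decision on advancing a development program.",
    scope_boundaries="Filings from 2018 onward; priority jurisdictions US, EP, CN, JP, KR.",
    output_needed="Cluster map, lead filers per cluster, temporal trends, "
                  "white space hypotheses with evidence, limitations.",
)
print(brief.to_prompt())
```

The check is deliberately crude: it only verifies that nothing was left blank. Whether each section is specific enough remains a judgment the analyst has to make.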

What to Watch For in White Space Searches

White space is the most common landscape question and the easiest one to get wrong. The phrase "white space" implies an area where no one is filing, but absence of filings can mean several different things, and only one of them is genuine opportunity.

Areas can look empty because the underlying technology is commercially uninteresting and no one is filing because no one would buy the result. Areas can look empty because companies in that space protect their work through trade secrets or process know-how rather than patents. Areas can look empty because the search terminology missed filings that exist under different vocabulary. None of these are white space in the sense that matters for R&D investment.

White space is also fragile to scope. An area that appears empty under one definition of the technology often turns out to be densely populated under a slightly different definition. This is a property of how patent literature is written and classified, not a flaw in the analysis, but it means white space claims need to be qualified by the scope they depend on.

Strong AI-generated white space output explicitly distinguishes these conditions. It does not just identify gaps in the patent map; it offers a hypothesis about why each gap exists and what would tell you whether the gap represents real opportunity. Output that identifies white space without explaining why it exists is output the team should not act on.

When framing a white space question, ask the system to evaluate each identified gap against the false-positive conditions, to articulate a falsifiable hypothesis for why the gap is empty, and to flag any gap whose existence depends on the scope boundaries being correct. A team that consistently asks for this analysis structure receives substantially more reliable white space output.
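The same structure can be used on the receiving end, as a checklist for reviewing each gap the system reports. The following Python sketch is a hypothetical record type, with names invented for illustration. It tracks which of the three false-positive conditions have been ruled out, whether a falsifiable hypothesis has been stated, and whether the gap still depends on the scope definition.

```python
from dataclasses import dataclass, field
from enum import Enum


class FalsePositive(Enum):
    """Reasons an area can look empty without being a real opportunity."""
    COMMERCIALLY_UNINTERESTING = "no one files because no one would buy the result"
    TRADE_SECRET = "work is protected by trade secrets or process know-how"
    TERMINOLOGY_MISS = "filings exist under different vocabulary"


@dataclass
class GapAssessment:
    """Hypothetical review record for one candidate gap in a landscape."""
    name: str
    hypothesis: str = ""             # falsifiable explanation for why the gap is empty
    ruled_out: set[FalsePositive] = field(default_factory=set)
    scope_dependent: bool = True     # treat the gap as scope-fragile until shown otherwise

    def open_questions(self) -> list[str]:
        """List what still has to be answered before acting on the gap."""
        questions = [
            f"Rule out: {fp.value}" for fp in FalsePositive if fp not in self.ruled_out
        ]
        if not self.hypothesis.strip():
            questions.append("State a falsifiable hypothesis for why the gap is empty")
        if self.scope_dependent:
            questions.append("Re-check the gap under an adjusted technology definition")
        return questions

    def is_actionable(self) -> bool:
        """A gap is actionable only when no open questions remain."""
        return not self.open_questions()


# Illustrative gap with only the terminology check completed so far.
gap = GapAssessment(
    name="hypothetical sub-area A",
    ruled_out={FalsePositive.TERMINOLOGY_MISS},
)
for question in gap.open_questions():
    print("-", question)
```

Defaulting `scope_dependent` to true is a design choice that mirrors the point above: a gap is assumed to be fragile to scope until someone has tested it under a different definition.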

The Custom Corpus Question

Here is where most teams hit the ceiling on AI patent intelligence quality, often without realizing it.

Patent landscape and white space analysis is fundamentally a search-and-reasoning problem. The AI's reasoning quality depends on what the AI is reasoning over. A general-purpose AI tool is reasoning over its training data. A legacy patent platform is reasoning over the patent database it indexes. Both are essentially fixed — you cannot direct the system to focus its analysis on a specific body of evidence relevant to your question.

This is where purpose-built R&D intelligence platforms differ most meaningfully. The strongest platforms allow your team to configure custom corpuses — focused collections of patents, scientific papers, and other technical literature curated to a specific technology space, program, or strategic priority. When the AI runs landscape and white space analyses against a custom corpus, it is reasoning over the body of evidence that actually matters for your question, not over a general index that includes everything else.

The improvement in output quality is substantial, and the underlying reason connects back to the context engineering shift. A 2025 study at the Conference on Computational Linguistics on retrieval-augmented AI systems found that prompt design and the structure of the underlying evidence corpus interact strongly: the same prompt produces meaningfully different output across different corpus configurations. The finding confirms what R&D teams observe in practice. A general patent index covers everything filed across all technology areas, and the signal you care about for a specific R&D program is buried in a much larger volume of irrelevant filings. Even strong AI reasoning struggles to consistently find and weight the right evidence at that signal-to-noise ratio. A custom corpus narrows the working evidence to what is actually relevant, which lets the AI's reasoning operate on the signal rather than fighting through the noise.

The same pattern holds for scientific literature. A general scientific index covers all of academia. A custom corpus configured for a specific technical domain gives the AI a focused body of relevant research to reason over alongside the patents. The cross-evidence reasoning — connecting what is appearing in academic publications to what is starting to appear in patent filings — only works well when both bodies of evidence are tightly relevant to the question.
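The narrowing step can be pictured as a filter over a general index. The Python sketch below is a toy illustration under stated assumptions: the `Document` record, the `build_custom_corpus` function, and the five sample entries are all invented, and plain keyword matching stands in for whatever retrieval or classification a real platform uses.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Document:
    """Hypothetical record for a patent or paper in a general index."""
    doc_id: str
    kind: str    # "patent" or "paper"
    year: int
    text: str


def build_custom_corpus(index, include_terms, exclude_terms, first_year):
    """Keep documents that match an in-scope term, no out-of-scope term, and the time window."""
    corpus = []
    for doc in index:
        text = doc.text.lower()
        in_scope = any(term.lower() in text for term in include_terms)
        out_of_scope = any(term.lower() in text for term in exclude_terms)
        if in_scope and not out_of_scope and doc.year >= first_year:
            corpus.append(doc)
    return corpus


# Invented sample index mixing patents and papers (illustrative only).
general_index = [
    Document("P1", "patent", 2021, "Sulfide solid electrolyte with layered oxide cathode"),
    Document("P2", "patent", 2015, "Solid-state cathode coating method"),
    Document("P3", "patent", 2022, "Liquid electrolyte additive for lithium-ion cathode"),
    Document("A1", "paper", 2023, "Interface stability of solid-state cathode composites"),
    Document("P4", "patent", 2022, "Wind turbine blade pitch control"),
]

corpus = build_custom_corpus(
    general_index,
    include_terms=["solid-state", "solid electrolyte"],
    exclude_terms=["liquid electrolyte"],
    first_year=2018,
)
print([doc.doc_id for doc in corpus])
```

Keeping patents and papers in one corpus, filtered by the same scope, is what allows the cross-evidence reasoning described above to compare like with like.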

For R&D and IP teams running landscape and white space work on a regular cadence, custom corpus configuration is one of the highest-leverage capabilities a platform can offer. It is the difference between asking the AI to find a needle in a haystack and giving the AI a focused stack to reason over.

Where Cypris Fits

Cypris is an enterprise R&D intelligence platform built for exactly this category of work. The platform unifies more than 500 million patents and scientific papers in a single corpus and supports the AI-driven landscape, white space, and monitoring workflows that R&D and IP teams at Fortune 500 companies need.

The capability that matters most for the question this guide addresses is custom corpus configuration. Teams using Cypris can configure focused collections of patents and non-patent literature scoped to a specific technology space, program, or strategic priority, and run AI-driven landscape and white space analyses against those custom corpuses. The AI reasons over the body of evidence the team has curated rather than over a general index, and the output reflects the specificity of the corpus the team configured.

For an R&D director scoping a new program in a specific catalyst class, this means the AI's analysis is focused on the patents and scientific papers actually relevant to that catalyst class, not on the broader chemistry index that contains them. For an IP manager mapping a competitor's portfolio, the corpus can be configured around that competitor's filing history and the surrounding technology space. For an innovation strategist evaluating a partnership target, the corpus can be configured around the target's technical area and the adjacent research feeding into it.

The combination — a unified patent and scientific literature corpus, configurable custom corpuses focused on the question being asked, and AI reasoning architecture built for R&D intelligence work — is what separates output that supports executive decisions from output that summarizes what the AI happened to know.

What Your Team Can Do This Week

Three things will measurably improve the AI-generated patent intelligence your team produces, regardless of which platform you use.

Standardize how the team frames landscape and white space questions, with the four components covered earlier — technical envelope, strategic context, scope boundaries, and output structure. A simple template that asks each analyst to fill in these four sections before running an analysis produces noticeably better output across the board.