Resources

Guides, research, and perspectives on R&D intelligence, IP strategy, and the future of AI-enabled innovation.

Executive Summary

In 2024, US patent infringement jury verdicts totaled $4.19 billion across 72 cases. Twelve individual verdicts exceeded $100 million. The largest single award, $857 million in General Access Solutions v. Cellco Partnership (Verizon), exceeded the annual R&D budget of many mid-market technology companies. In the first half of 2025 alone, total damages reached an additional $1.91 billion.

The consequences of incomplete patent intelligence are not abstract. In what has become one of the most instructive IP disputes in recent history, Masimo’s pulse oximetry patents triggered a US import ban on certain Apple Watch models, forcing Apple to disable its blood oxygen feature across an entire product line, halt domestic sales of affected models, invest in a hardware redesign, and ultimately face a $634 million jury verdict in November 2025. Apple—a company with one of the most sophisticated intellectual property organizations on earth—spent years in litigation over technology it might have designed around during development.

For organizations with fewer resources than Apple, the risk calculus is starker. A mid-size materials company, a university spinout, or a defense contractor developing next-generation battery technology cannot absorb a nine-figure verdict or a multi-year injunction. For these organizations, the patent landscape analysis conducted during the development phase is the primary risk mitigation mechanism. The quality of that analysis is not a matter of convenience. It is a matter of survival.

And yet, a growing number of R&D and IP teams are conducting that analysis using general-purpose AI tools—ChatGPT, Claude, Microsoft Co-Pilot—that were never designed for patent intelligence and are structurally incapable of delivering it.

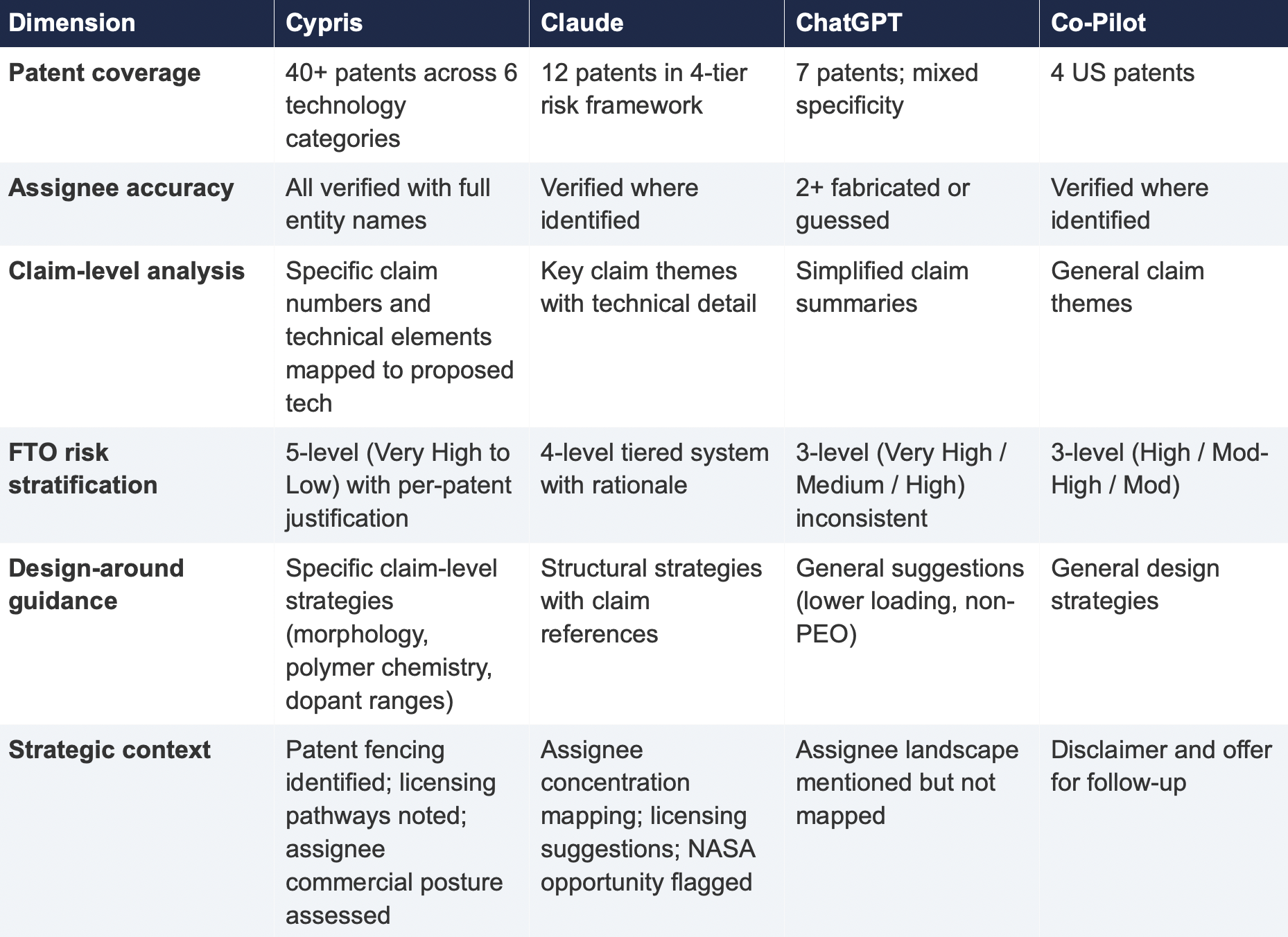

This report presents the findings of a controlled comparison study in which identical patent landscape queries were submitted to four AI-powered tools: Cypris (a purpose-built R&D intelligence platform), ChatGPT (OpenAI), Claude (Anthropic), and Microsoft Co-Pilot. Two technology domains were tested: solid-state lithium-sulfur battery electrolytes using garnet-type LLZO ceramic materials (freedom-to-operate analysis), and bio-based polyamide synthesis from castor oil derivatives (competitive intelligence).

The results reveal a significant and structurally persistent gap. In Test 1, Cypris identified over 40 active US patents and published applications with granular FTO risk assessments. Claude identified 12. ChatGPT identified 7, several with fabricated attribution. Co-Pilot identified 4. Among the patents surfaced exclusively by Cypris were filings rated as “Very High” FTO risk that directly claim the technology architecture described in the query. In Test 2, Cypris cited over 100 individual patent filings with full attribution to substantiate its competitive landscape rankings. No general-purpose model cited a single patent number.

The most active sectors for patent enforcement—semiconductors, AI, biopharma, and advanced materials—are the same sectors where R&D teams are most likely to adopt AI tools for intelligence workflows. The findings of this report have direct implications for any organization using general-purpose AI to inform patent strategy, competitive intelligence, or R&D investment decisions.

1. Methodology

A single patent landscape query was submitted verbatim to each tool on March 27, 2026. No follow-up prompts, clarifications, or iterative refinements were provided. Each tool received one opportunity to respond, mirroring the workflow of a practitioner running an initial landscape scan.

1.1 Query

Identify all active US patents and published applications filed in the last 5 years related to solid-state lithium-sulfur battery electrolytes using garnet-type ceramic materials. For each, provide the assignee, filing date, key claims, and current legal status. Highlight any patents that could pose freedom-to-operate risks for a company developing a Li₇La₃Zr₂O₁₂ (LLZO)-based composite electrolyte with a polymer interlayer.

1.2 Tools Evaluated

1.3 Evaluation Criteria

Each response was assessed across six dimensions: (1) number of relevant patents identified, (2) accuracy of assignee attribution, (3) completeness of filing metadata (dates, legal status), (4) depth of claim analysis relative to the proposed technology, (5) quality of FTO risk stratification, and (6) presence of actionable design-around or strategic guidance.

2. Findings

2.1 Coverage Gap

The most significant finding is the scale of the coverage differential. Cypris identified over 40 active US patents and published applications spanning LLZO-polymer composite electrolytes, garnet interface modification, polymer interlayer architectures, lithium-sulfur specific filings, and adjacent ceramic composite patents. The results were organized by technology category with per-patent FTO risk ratings.

Claude identified 12 patents organized in a four-tier risk framework. Its analysis was structurally sound and correctly flagged the two highest-risk filings (Solid Energies US 11,967,678 and the LLZO nanofiber multilayer US 11,923,501). It also identified the University of Maryland/Wachsman portfolio as a concentration risk and noted the NASA SABERS portfolio as a licensing opportunity. However, it missed the majority of the landscape, including the entire Corning portfolio, GM's interlayer patents, the Korea Institute of Energy Research three-layer architecture, and the HonHai/SolidEdge lithium-sulfur specific filing.

ChatGPT identified 7 patents, but the quality of attribution was inconsistent. It listed assignees as "Likely DOE / national lab ecosystem" and "Likely startup / defense contractor cluster" for two filings, language that indicates the model was inferring rather than retrieving assignee data. In a freedom-to-operate context, an unverified assignee attribution is functionally equivalent to no attribution, as it cannot support a licensing inquiry or risk assessment.

Co-Pilot identified 4 US patents. Its output was the most limited in scope, missing the Solid Energies portfolio entirely, the UMD/Wachsman portfolio, Gelion/Johnson Matthey, NASA SABERS, and all Li-S specific LLZO filings.
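The coverage differential can be made concrete with a few lines of arithmetic. The sketch below uses the counts reported in this section and treats Cypris's "over 40" as exactly 40. That is an assumption, so each share is an upper bound relative to the Cypris result set and each gap is a lower bound.

```python
# Patent counts reported for Test 1. "Over 40" is treated as 40 (assumption).
counts = {"Cypris": 40, "Claude": 12, "ChatGPT": 7, "Co-Pilot": 4}
baseline = counts["Cypris"]


def coverage_table(counts, baseline):
    """Return (tool, count, share of baseline, baseline-to-tool ratio) rows."""
    return [(tool, n, n / baseline, baseline / n) for tool, n in counts.items()]


for tool, n, share, ratio in coverage_table(counts, baseline):
    print(f"{tool:<9} {n:>3} patents  {share:>6.1%} of baseline  {ratio:>5.1f}x gap")
```

Under that assumption, Claude covers 30% of the baseline, ChatGPT 17.5%, and Co-Pilot 10%.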

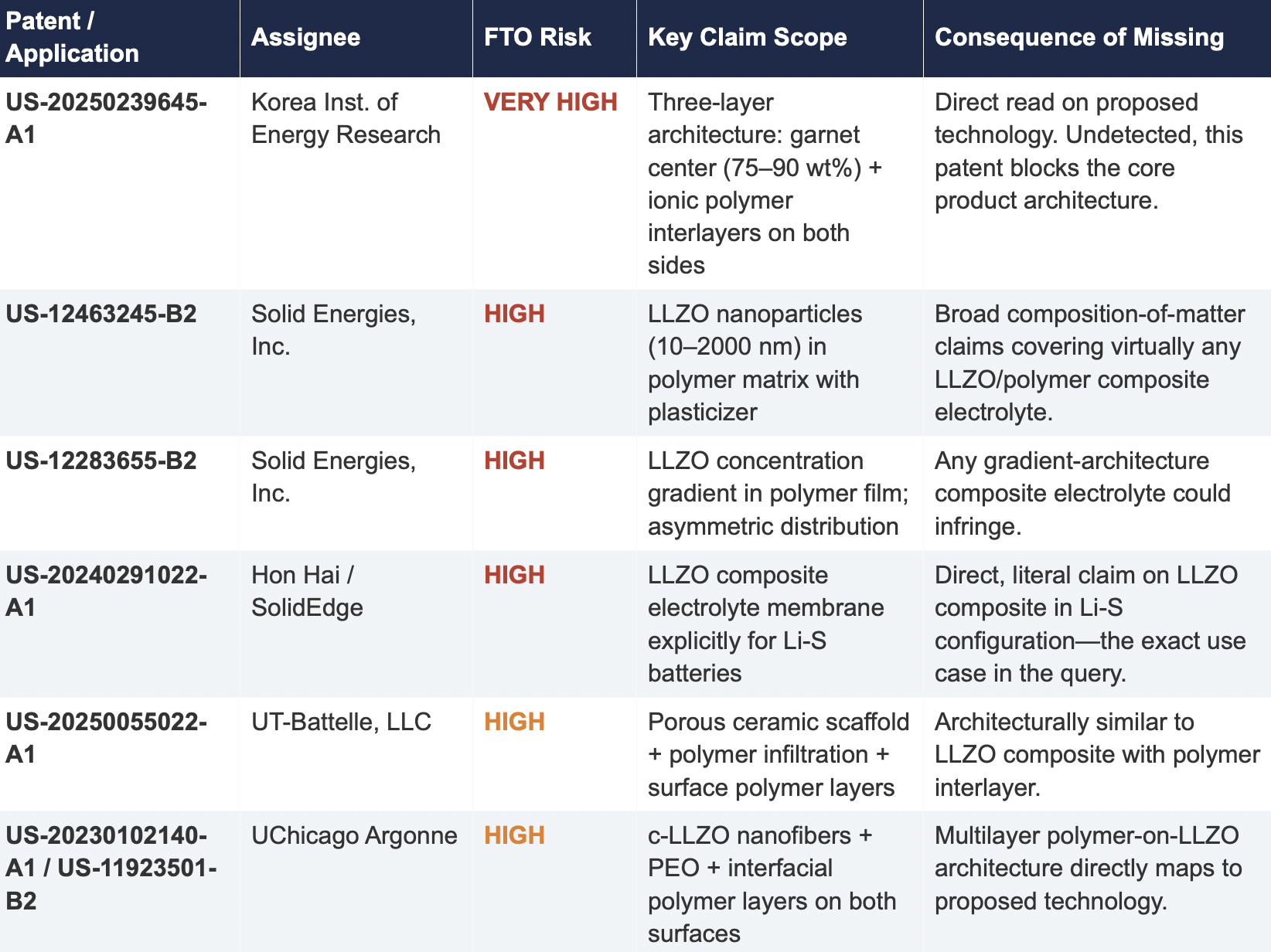

2.2 Critical Patents Missed by Public Models

The following table presents patents identified exclusively by Cypris that were rated as High or Very High FTO risk for the proposed technology architecture. None were surfaced by any general-purpose model.

2.3 Patent Fencing: The Solid Energies Portfolio

Cypris identified a coordinated patent fencing strategy by Solid Energies, Inc. that no general-purpose model detected at scale. Solid Energies holds at least four granted US patents and one published application covering LLZO-polymer composite electrolytes across compositions (US-12463245-B2), gradient architectures (US-12283655-B2), electrode integration (US-12463249-B2), and manufacturing processes (US-20230035720-A1). Claude identified one Solid Energies patent (US 11,967,678) and correctly rated it as the highest-priority FTO concern but did not surface the broader portfolio. ChatGPT and Co-Pilot identified zero Solid Energies filings.

The practical significance is that a company relying on any individual patent hit would underestimate the scope of Solid Energies' IP position. The fencing strategy, which covers the composition, the architecture, the electrode integration, and the manufacturing method, means that identifying a single design-around for one patent does not resolve the FTO exposure from the portfolio as a whole. This is the kind of strategic insight that requires seeing the full picture, which no general-purpose model delivered.

2.4 Assignee Attribution Quality

ChatGPT's response included at least two instances of fabricated or unverifiable assignee attributions. For US 11,367,895 B1, the listed assignee was "Likely startup / defense contractor cluster." For US 2021/0202983 A1, the assignee was described as "Likely DOE / national lab ecosystem." In both cases, the model appears to have inferred the assignee from contextual patterns in its training data rather than retrieving the information from patent records.

In any operational IP workflow, assignee identity is foundational. It determines licensing strategy, litigation risk, and competitive positioning. A fabricated assignee is more dangerous than a missing one because it creates an illusion of completeness that discourages further investigation. An R&D team receiving this output might reasonably conclude that the landscape analysis is finished when it is not.
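One inexpensive safeguard is to screen AI-generated landscape tables for assignee strings that read like guesses before anyone relies on them. The sketch below is a minimal illustration. The hedge-word list and the function name are assumptions made for this example, not part of any tool described in this report. The first two records are the ChatGPT attributions quoted above.

```python
import re

# Words suggesting the assignee was inferred rather than retrieved.
# The list is an illustrative assumption, not an exhaustive rule set.
HEDGE_PATTERN = re.compile(
    r"\b(likely|probably|possibly|unknown|cluster|ecosystem)\b", re.IGNORECASE
)


def is_unverified_assignee(assignee: str) -> bool:
    """Return True when the assignee text is empty or reads like a guess."""
    text = assignee.strip()
    return not text or bool(HEDGE_PATTERN.search(text))


records = [
    ("US 11,367,895 B1", "Likely startup / defense contractor cluster"),
    ("US 2021/0202983 A1", "Likely DOE / national lab ecosystem"),
    ("US 11,967,678", "Solid Energies, Inc."),
]

flagged = [number for number, assignee in records if is_unverified_assignee(assignee)]
print(flagged)
```

A record that passes this screen is not thereby verified. Only a lookup against the patent record confirms an assignee. The screen catches the obvious cases where the output itself admits it is guessing.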

3. Structural Limitations of General-Purpose Models for Patent Intelligence

3.1 Training Data Is Not Patent Data

Large language models are trained on web-scraped text. Their knowledge of the patent record is derived from whatever fragments appeared in their training corpus: blog posts mentioning filings, news articles about litigation, snippets of Google Patents pages that were crawlable at the time of data collection. They do not have systematic, structured access to the USPTO database. They cannot query patent classification codes, parse claim language against a specific technology architecture, or verify whether a patent has been assigned, abandoned, or subjected to terminal disclaimer since their training data was collected.

This is not a limitation that improves with scale. A larger training corpus does not produce systematic patent coverage; it produces a larger but still arbitrary sampling of the patent record. The result is that general-purpose models will consistently surface well-known patents from heavily discussed assignees (QuantumScape, for example, appeared in most responses) while missing commercially significant filings from less publicly visible entities (Solid Energies, Korea Institute of Energy Research, Shenzhen Solid Advanced Materials).

3.2 The Web Is Closing to Model Scrapers

The data access problem is structural and worsening. As of mid-2025, Cloudflare reported that among the top 10,000 web domains, the majority now fully disallow AI crawlers such as GPTBot and ClaudeBot via robots.txt. The trend has accelerated from partial restrictions to outright blocks, and the crawl-to-referral ratios reveal the underlying tension: OpenAI's crawlers access approximately 1,700 pages for every referral they return to publishers; Anthropic's ratio exceeds 73,000 to 1.
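For readers unfamiliar with how a robots.txt block works, the sketch below uses Python's standard `urllib.robotparser` module to evaluate a hypothetical robots.txt that disallows GPTBot and ClaudeBot while allowing other agents. The file contents, the `example.com` URL, and the `allowed_agents` helper are assumptions made for illustration and are not taken from any real publisher.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: blocks two AI crawlers, allows everyone else.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: ClaudeBot
Disallow: /

User-agent: *
Allow: /
"""


def allowed_agents(robots_txt, url, agents):
    """Report, per user agent, whether the rules permit fetching the URL."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in agents}


result = allowed_agents(
    ROBOTS_TXT,
    "https://example.com/patents/record-1",
    ["GPTBot", "ClaudeBot", "Mozilla/5.0"],
)
print(result)
```

Note that robots.txt is a request rather than an enforcement mechanism. It only restricts crawlers that choose to read and honor it.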

Patent databases, scientific publishers, and IP analytics platforms are among the most restrictive content categories. A Duke University study in 2025 found that several categories of AI-related crawlers never request robots.txt files at all. The practical consequence is that the knowledge gap between what a general-purpose model "knows" about the patent landscape and what actually exists in the patent record is widening with each training cycle. A landscape query that a general-purpose model partially answered in 2023 may return less useful information in 2026.

3.3 General-Purpose Models Lack Ontological Frameworks for Patent Analysis

A freedom-to-operate analysis is not a summarization task. It requires understanding claim scope, prosecution history, continuation and divisional chains, assignee normalization (a single company may appear under multiple entity names across patent records), priority dates versus filing dates versus publication dates, and the relationship between dependent and independent claims. It requires mapping the specific technical features of a proposed product against independent claim language—not keyword matching.

General-purpose models do not have these frameworks. They pattern-match against training data and produce outputs that adopt the format and tone of patent analysis without the underlying data infrastructure. The format is correct. The confidence is high. The coverage is incomplete in ways that are not visible to the user.
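To illustrate why claim mapping differs from keyword matching, the sketch below models an independent claim as a set of elements and checks whether a product practices every one of them. This is a deliberate simplification. It assumes an analyst has already decided which product features meet which claim limitations, and that decision is the hard part. It ignores claim construction, equivalents, and prosecution history. The claims, labels, and feature names are hypothetical and do not describe any patent cited in this report.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class IndependentClaim:
    label: str           # placeholder label, not a real patent number
    elements: frozenset  # claim limitations, normalized by an analyst


def missing_elements(claim, product_features):
    """Return the claim elements that the product does not practice."""
    return set(claim.elements) - set(product_features)


def reads_on(claim, product_features):
    """A claim reads on the product only if no element is missing."""
    return not missing_elements(claim, product_features)


# Hypothetical product and claims, loosely modeled on the Test 1 query.
product = {"garnet-type LLZO ceramic", "polymer interlayer", "sulfur cathode"}

claims = [
    IndependentClaim(
        "Claim A", frozenset({"garnet-type LLZO ceramic", "polymer interlayer"})
    ),
    IndependentClaim(
        "Claim B",
        frozenset({"garnet-type LLZO ceramic", "polymer interlayer",
                   "graded ceramic concentration"}),
    ),
]

for claim in claims:
    gap = missing_elements(claim, product)
    status = "reads on product" if not gap else f"missing: {sorted(gap)}"
    print(f"{claim.label}: {status}")
```

Both hypothetical claims share keywords with the product, but only the first reads on it. A keyword match would flag both equally, and an element-by-element comparison separates them.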

4. Comparative Output Quality

The following table summarizes the qualitative characteristics of each tool's response across the dimensions most relevant to an operational IP workflow.

5. Implications for R&D and IP Organizations

5.1 The Confidence Problem

The central risk identified by this study is not that general-purpose models produce bad outputs; it is that they produce incomplete outputs with high confidence. Each model delivered its results in a professional format with structured analysis, risk ratings, and strategic recommendations. At no point did any model indicate the boundaries of its knowledge or flag that its results represented a fraction of the available patent record. A practitioner receiving one of these outputs would have no signal that the analysis was incomplete unless they independently validated it against a comprehensive data source.

This creates an asymmetric risk profile: the better the format and tone of the output, the less likely the user is to question its completeness. In a corporate environment where AI outputs are increasingly treated as first-pass analysis, this dynamic incentivizes under-investigation at precisely the moment when thoroughness is most critical.

5.2 The Diversification Illusion

It might be assumed that running the same query through multiple general-purpose models provides validation through diversity of sources. This study suggests otherwise. While the four tools returned different subsets of patents, all operated under the same structural constraints: training data rather than live patent databases, web-scraped content rather than structured IP records, and general-purpose reasoning rather than patent-specific ontological frameworks. Running the same query through three constrained tools does not produce triangulation; it produces three partial views of the same incomplete picture.

5.3 The Appropriate Use Boundary

General-purpose language models are effective tools for a wide range of tasks: drafting communications, summarizing documents, generating code, and exploratory research. The finding of this study is not that these tools lack value but that their value boundary does not extend to decisions that carry existential commercial risk.

Patent landscape analysis, freedom-to-operate assessment, and competitive intelligence that informs R&D investment decisions fall outside that boundary. These are workflows where the completeness and verifiability of the underlying data are not merely desirable but are the primary determinant of whether the analysis has value. A patent landscape that captures 10% of the relevant filings, regardless of how well-formatted or confidently presented, is a liability rather than an asset.

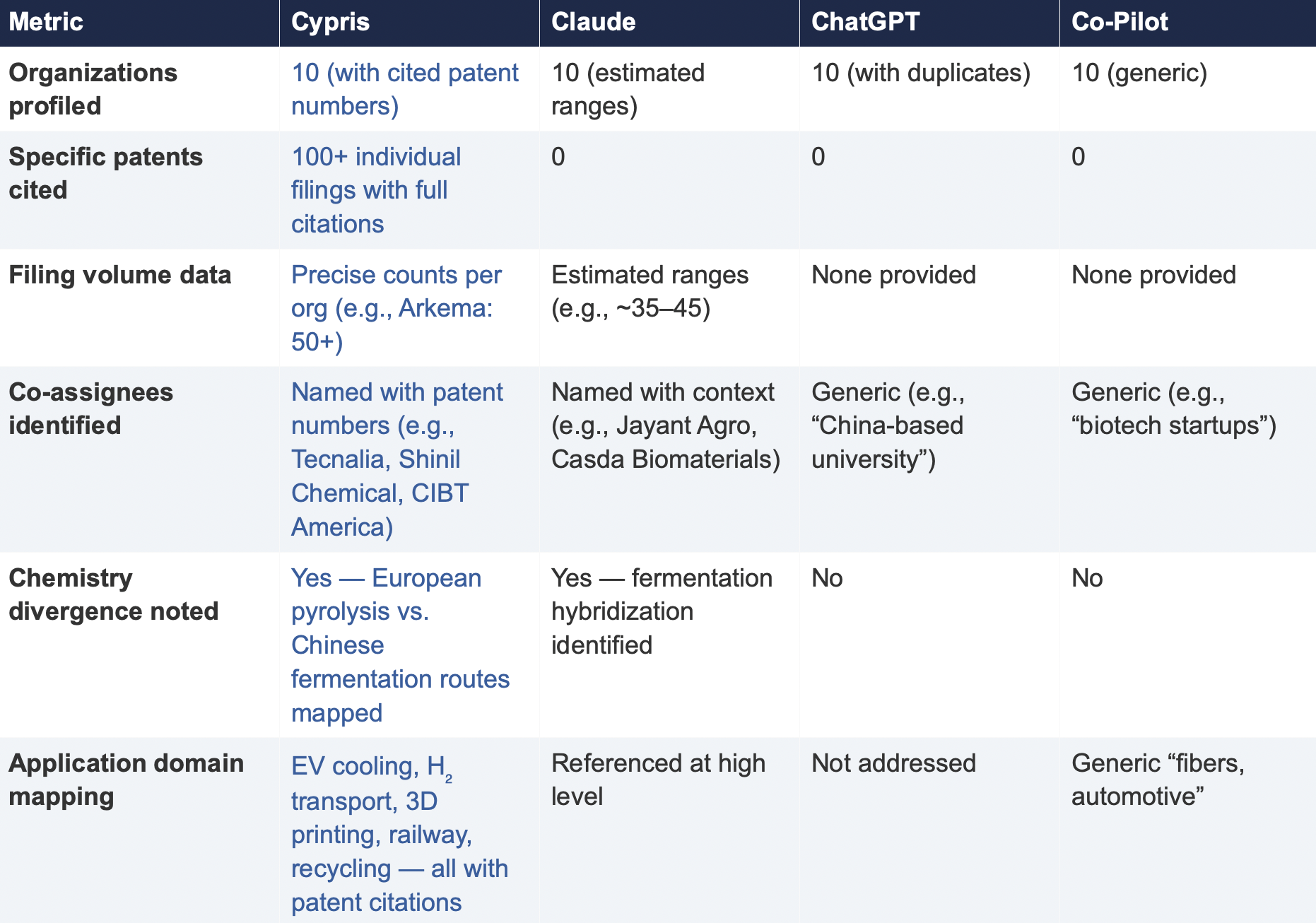

6. Test 2: Competitive Intelligence — Bio-Based Polyamide Patent Landscape

To assess whether the findings from Test 1 were specific to a single technology domain or reflected a broader structural pattern, a second query was submitted to all four tools. This query shifted from freedom-to-operate analysis to competitive intelligence, asking each tool to identify the top 10 organizations by patent filing volume in bio-based polyamide synthesis from castor oil derivatives over the past three years, with summaries of technical approach, co-assignee relationships, and portfolio trajectory.

6.1 Query

6.2 Summary of Results

6.3 Key Differentiators

Verifiability

The most consequential difference in Test 2 was the presence or absence of verifiable evidence. Cypris cited over 100 individual patent filings with full patent numbers, assignee names, and publication dates. Every claim about an organization’s technical focus, co-assignee relationships, and filing trajectory was anchored to specific documents that a practitioner could independently verify in USPTO, Espacenet, or WIPO PATENTSCOPE. No general-purpose model cited a single patent number. Claude produced the most structured and analytically useful output among the public models, with estimated filing ranges, product names, and strategic observations that were directionally plausible. However, without underlying patent citations, every claim in the response requires independent verification before it can inform a business decision. ChatGPT and Co-Pilot offered thinner profiles with no filing counts and no patent-level specificity.

Data Integrity

ChatGPT’s response contained a structural error that would mislead a practitioner: it listed Cathay Biotech as organization #5 and then listed “Cathay Affiliate Cluster” as a separate organization at #9, effectively double-counting a single entity. It repeated this pattern with Toray at #4 and “Toray (Additional Programs)” at #10. In a competitive intelligence context where the ranking itself is the deliverable, this kind of error distorts the landscape and could lead to misallocation of competitive monitoring resources.
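The double-counting described above is the kind of error that entity normalization is meant to catch. The sketch below collapses aliases to a canonical name before a ranking is used. The alias map is a hypothetical stand-in for a curated assignee table. Only the four positions named above come from the ChatGPT output, and the remaining slots are placeholders because the full list is not reproduced here.

```python
# Hypothetical alias map; a real one would come from a curated assignee table.
ALIASES = {
    "Cathay Affiliate Cluster": "Cathay Biotech",
    "Toray (Additional Programs)": "Toray",
}


def dedupe_ranking(ranked_names, aliases):
    """Collapse aliases to canonical names, keeping each entity's best rank."""
    seen = set()
    result = []
    for name in ranked_names:
        canonical = aliases.get(name, name)
        if canonical not in seen:
            seen.add(canonical)
            result.append(canonical)
    return result


# Positions 4, 5, 9, and 10 follow the text above; "Org N" entries are placeholders.
ranking = [
    "Org 1", "Org 2", "Org 3", "Toray", "Cathay Biotech",
    "Org 6", "Org 7", "Org 8", "Cathay Affiliate Cluster",
    "Toray (Additional Programs)",
]

deduped = dedupe_ranking(ranking, ALIASES)
print(f"{len(ranking)} listed entries -> {len(deduped)} distinct organizations")
```

After normalization, the nominal top 10 contains eight distinct organizations, which leaves two ranking slots that should have gone to other filers.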

Organizations Missed

Cypris identified Kingfa Sci. & Tech. (8–10 filings with a differentiated furan diacid-based polyamide platform) and Zhejiang NHU (4–6 filings focused on continuous polymerization process technology) as emerging players that no general-purpose model surfaced. Both represent potential competitive threats or partnership opportunities that would be invisible to a team relying on public AI tools. Conversely, ChatGPT included organizations such as ANTA and Jiangsu Taiji that appear to be downstream users rather than significant patent filers in synthesis, suggesting the model was conflating commercial activity with IP activity.

Strategic Depth

Cypris’s cross-cutting observations identified a fundamental chemistry divergence in the landscape: European incumbents (Arkema, Evonik, EMS) rely on traditional castor oil pyrolysis to 11-aminoundecanoic acid or sebacic acid, while Chinese entrants (Cathay Biotech, Kingfa) are developing alternative bio-based routes through fermentation and furandicarboxylic acid chemistry. This represents a potential long-term disruption to the castor oil supply chain dependency that Western players have built their IP strategies around. Claude identified a similar theme at a higher level of abstraction. Neither ChatGPT nor Co-Pilot noted the divergence.

6.4 Test 2 Conclusion

Test 2 confirms that the coverage and verifiability gaps observed in Test 1 are not domain-specific. In a competitive intelligence context, where the deliverable is a ranked landscape of organizational IP activity, the same structural limitations apply. General-purpose models can produce plausible-looking top-10 lists with reasonable organizational names, but they cannot anchor those lists to verifiable patent data, they cannot provide precise filing volumes, and they cannot identify emerging players whose patent activity is visible in structured databases but absent from the web-scraped content that general-purpose models rely on.

7. Conclusion

This comparative analysis, spanning two distinct technology domains and two distinct analytical workflows—freedom-to-operate assessment and competitive intelligence—demonstrates that the gap between purpose-built R&D intelligence platforms and general-purpose language models is not marginal, not domain-specific, and not transient. It is structural and consequential.

In Test 1 (LLZO garnet electrolytes for Li-S batteries), the purpose-built platform identified more than three times as many patents as the best-performing general-purpose model and ten times as many as the lowest-performing one. Among the patents identified exclusively by the purpose-built platform were filings rated as Very High FTO risk that directly claim the proposed technology architecture. In Test 2 (bio-based polyamide competitive landscape), the purpose-built platform cited over 100 individual patent filings to substantiate its organizational rankings; no general-purpose model cited a single patent number.

The structural drivers of this gap—reliance on training data rather than live patent feeds, the accelerating closure of web content to AI scrapers, and the absence of patent-specific analytical frameworks—are not transient. They are inherent to the architecture of general-purpose models and will persist regardless of increases in model capability or training data volume.

For R&D and IP leaders, the practical implication is clear: general-purpose AI tools should be used for general-purpose tasks. Patent intelligence, competitive landscaping, and freedom-to-operate analysis require purpose-built systems with direct access to structured patent data, domain-specific analytical frameworks, and the ability to surface what a general-purpose model cannot—not because it chooses not to, but because it structurally cannot access the data.

The question for every organization making R&D investment decisions today is whether the tools informing those decisions have access to the evidence base those decisions require. This study suggests that for the majority of general-purpose AI tools currently in use, the answer is no.

About This Report

This report was produced by Cypris (IP Web, Inc.), an AI-powered R&D intelligence platform serving corporate innovation, IP, and R&D teams at organizations including NASA, Johnson & Johnson, the US Air Force, and Los Alamos National Laboratory. Cypris aggregates over 500 million data points from patents, scientific literature, grants, corporate filings, and news to deliver structured intelligence for technology scouting, competitive analysis, and IP strategy.

The comparative tests described in this report were conducted on March 27, 2026. All outputs are preserved in their original form. Patent data cited from the Cypris reports has been verified against USPTO Patent Center and WIPO PATENTSCOPE records as of the same date. To conduct a similar analysis for your technology domain, contact info@cypris.ai or visit cypris.ai.

The Patent Intelligence Gap - A Comparative Analysis of Verticalized AI-Patent Tools vs. General-Purpose Language Models for R&D Decision-Making

Blogs

Work, as we’ve known it, has fundamentally changed.

That statement might have sounded dramatic a year or two ago, but you would be naive to deny it today. AI is no longer just augmenting workflows. It is increasingly owning them. The initial wave focused on the obvious entry points such as drafting presentations, summarizing articles, and writing emails. But what started as assistive has quickly evolved into something far more powerful.

AI agents are now executing entire downstream workflows. Not just writing copy for a presentation, but building it. Not just drafting an email, but sending and iterating on it. These systems run asynchronously, improve over time, and are becoming easier to build and deploy by the day.

Startups and smaller organizations (including us at Cypris) are already operating with them across their workflows and are seeing serious gains. Large enterprises, as expected, lag behind but will inevitably follow. They are, for the most part, dependent on their vendors, and those vendors are undergoing massive foundational shifts from traditional software apps to agentic AI solutions.

Which raises the question:

What does this shift mean for the enterprise tech stack of the future?

The companies that answer this and position themselves correctly will not just be more efficient. They will operate at a fundamentally different pace. In a world where AI compounds progress, speed becomes the ultimate competitive advantage.

From Search to Chat

My perspective comes from the last five years building Cypris, an AI platform for R&D and IP intelligence.

We launched in 2021, before AI meant what it does today. Back then, semantic search was considered cutting edge. Our core value proposition was helping teams identify signals in massive datasets such as patents, research papers, and technical literature faster than their competitors.

The reality of that workflow looked very different than it does today.

Researchers spent the majority of their time on data curation. Entire teams were dedicated to building complex Lucene queries across fragmented datasets. The quality of insights depended heavily on how good your query was, and how effectively you could interpret thousands of results through pre-built charts, visualizations, BI tools and manual workflows.

Work that now takes minutes used to take weeks. Prior art searches, landscape analyses, and whitespace identification all required significant manual effort. Most product comparisons, and ultimately our demos, came down to a few questions:

- Does your query return better results than theirs?

- How robust are your advanced search capabilities?

- What kind of visualizations can you offer to identify meaningful signal in the results?

Then everything changed.

The Inflection Point - When AI Became Exposed to Enterprise

The launch of ChatGPT in November 2022 marked a turning point.

At first, its enterprise impact was not obvious. By early 2024, the shift became undeniable. Marketing workflows were the first to transform. Copywriting went from a differentiated skill to a commodity almost overnight. Then came coding assistants, which have rapidly evolved toward full-stack AI development.

We adapted Cypris in real time, shifting from static, pre-generated insights to dynamic, retrieval-based systems leveraging the world’s most powerful models. We recognized early that the model race was a wave we wanted to ride, so we built the infrastructure to incorporate all leading models directly into our product. What began as an enhancement quickly became the foundation of everything we do.

As the software stack progressed quickly, our customers began scrambling to make sense of it. AI committees formed. IT teams took control of purchasing decisions. Sales cycles lengthened as organizations tried to impose governance on something evolving faster than their processes could handle. We have seen this firsthand, with customers explicitly stating that all AI purchases now need to go through new evaluation and procurement processes.

But there is an underlying tension: Every piece of software is now an AI purchase.

And eventually, enterprises will need to operate that way.

What Should Be Verticalized?

At the center of this transformation is a complicated question that most enterprise buyers are struggling with today:

What can general-purpose AI handle, and where do you need specialized systems?

Most organizations do not answer this theoretically. They learn through experience, use case by use case. And the market hype does not help. There is a growing narrative that companies can “vibe code” their way into rebuilding core systems that underpin processes involving hundreds of stakeholders and millions of dollars in impact.

That is unrealistic.

Call me when a company like J&J decides to replace Salesforce with something built in their team’s free time with some prompts.

A more grounded way to think about it is through a simple principle that consistently holds true:

AI is only as good as what it is exposed to.

A model will generate answers based on the data it can access and the orchestration it is given, whether that is its training data, web content, or additional context you provide.

If you do not give it access to meaningful or proprietary data or thoughtful direction, it will default to generic knowledge.

This creates a growing divide between tech stacks that rely solely on 'commodity AI' and those built on 'enterprise-enhanced AI'.

Commodity AI vs. Enterprise-Enhanced AI

Commodity AI is the baseline.

It includes foundation models such as ChatGPT and Claude, along with tools such as Co-Pilot that run on top of those models, all of which everyone has access to.

Using them is no longer a competitive advantage. It is table stakes.

If your organization relies on the same tools trained on the same data, your outputs and decisions will begin to look the same as everyone else’s.

Enterprise-enhanced AI is where differentiation happens.

This is what you build on top of the foundation.

It includes:

- Integrating proprietary and high-value datasets

- Layering in domain-specific tools and platforms

- Designing curated workflows that tap into verticalized agents

- Building custom ontologies that interpret how your business operates

- Designing org-wide system prompts tailored to existing internal processes

The goal is to amplify foundation models with context they cannot access on their own.

Additionally, enterprises that believe they can simply vibe code their own stack on top of foundation models will eventually run into the same reality that fueled the SaaS boom over the last 20 years. Your job is not to build and maintain software, and doing so will consume far more time and resources than expected. Claude is powerful, and your best vendors are already using it as a foundation. You will get significantly more leverage from it through verticalized and enhanced systems.

Where Data Foundations Especially Matter

In our eyes, nowhere is this more critical than in R&D and IP teams.

Foundation model providers are not focused on maintaining continuously updated datasets of global patents, scientific literature, company data, or chemical compounds. It is too niche and not a strategic priority for them.

But for teams making high-stakes decisions such as:

- What to build

- Where to invest

- Where to file IP

- How to differentiate

That data is essential.

If you rely on generic AI outputs without a strong data foundation, you are making decisions on incomplete information.

In technical domains, incomplete information is a strategic risk.

See our case study on real-world scenario gaps here: https://www.cypris.ai/insights/the-patent-intelligence-gap---a-comparative-analysis-of-verticalized-ai-patent-tools-vs-general-purpose-language-models-for-r-d-decision-making

The New Mandate for Enterprise Leaders

All software vendors will be AI vendors, so defining your strategy, your security and IT governance, and your deployment process quickly should be a strategic priority. Focus on real-world signal and critical workflows, and find vendors that can turn your commodity AI into enterprise-enhanced assets before your competitors do.

We are entering a world where AI itself is no longer the differentiator.

How you implement it is.

The enterprises that recognize this early and build their stacks accordingly will not just keep up.

They will redefine the pace of their industries.

The freedom-to-operate search has always been one of the most consequential exercises in product development. Before a company commits significant capital to manufacturing, marketing, or licensing a new technology, it must determine with reasonable confidence that bringing the product to market will not infringe the valid and enforceable patent rights of a third party. That determination has never been simple, but the scale of the challenge in 2026 has grown to a point where traditional methods alone are no longer sufficient, and the rapid proliferation of general-purpose AI tools has introduced both new capabilities and new sources of confusion about what actually constitutes a defensible FTO workflow.

The Scale Problem: Why Traditional FTO Methods Are Breaking Down

The volume of global patent data is the first and most visible challenge. Innovators around the world filed 3.7 million patent applications in 2024, marking a 4.9 percent increase over 2023 and the fastest year-on-year growth since 2018 (1). Patents in force worldwide grew 6 percent in 2024 to reach an estimated 19.7 million (2). These are not evenly distributed. China's share of global patent applications jumped from 34.6 percent in 2014 to 49.1 percent in 2024, accounting for nearly half of all worldwide filings (3). For any R&D team conducting an FTO search across multiple jurisdictions, the corpus to be searched is not merely large but growing at a compounding rate, with an increasing share published in Chinese and other non-English languages that keyword searches in English will systematically miss.

The problem extends beyond sheer volume. Patent claims are written in deliberately broad and often abstract language. A single claim may describe a concept using terminology that varies dramatically from how an engineer or scientist would describe the same concept in a lab notebook or product specification. Traditional Boolean keyword searches depend on the searcher anticipating every synonym, variant, and adjacent phrasing that a patent drafter might have used. In crowded technology fields where hundreds of applicants have filed on overlapping concepts, the combinatorial explosion of possible keyword strings makes exhaustive manual search functionally impossible.
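To make the combinatorial point concrete, here is a minimal Python sketch. The synonym lists are invented for illustration and are not drawn from any real search strategy, and the helper names `count_combinations` and `boolean_query` are hypothetical. It counts how many distinct keyword strings three small synonym sets generate and builds the single OR/AND query a searcher would need to cover them.

```python
from itertools import product
from math import prod

# Hypothetical synonym sets for three concepts in one product feature.
SYNONYMS = {
    "battery": ["battery", "electrochemical cell", "accumulator", "energy storage device"],
    "electrolyte": ["electrolyte", "ion conductor", "ionic conducting medium"],
    "ceramic": ["ceramic", "garnet", "oxide", "sintered body", "LLZO"],
}

def count_combinations(synonyms):
    """Number of distinct one-term-per-concept keyword strings."""
    return prod(len(terms) for terms in synonyms.values())

def boolean_query(synonyms):
    """OR the synonyms within each concept, then AND the concepts together."""
    groups = ["(" + " OR ".join(f'"{t}"' for t in terms) + ")"
              for terms in synonyms.values()]
    return " AND ".join(groups)

# Enumerate every explicit combination to confirm the count.
combos = list(product(*SYNONYMS.values()))
print(count_combinations(SYNONYMS))  # 4 * 3 * 5 = 60
print(boolean_query(SYNONYMS))
```

Three concepts with only a handful of synonyms each already yield 60 combinations. Each additional concept multiplies that figure again, and the query still only catches phrasings the searcher thought to list.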

Jurisdictional complexity compounds the problem further. An FTO search is always territorial. A product that is clear in the United States may face blocking patents in Europe, Japan, or China. Each jurisdiction has its own patent database, its own classification scheme, and its own rules about claim interpretation. A thorough FTO search must account for granted patents, pending applications that may issue with claims covering the product, and patent families that span multiple national and regional offices.

General-Purpose AI vs. Verticalized LLMs: A Critical Distinction

The arrival of powerful general-purpose large language models such as GPT-4, Claude, and Gemini has created a tempting shortcut for teams looking to accelerate FTO work. These models can summarize patent documents, suggest search terms, and even draft preliminary claim comparisons. But there is a fundamental difference between a general-purpose LLM that has been exposed to some patent text during pre-training and a verticalized model that has been purpose-built for patent and technical literature analysis, and conflating the two introduces real risk into FTO workflows.

General-purpose LLMs suffer from several structural limitations in the FTO context. They do not have access to live patent databases. They cannot verify the legal status of a patent. They are prone to hallucination, meaning they may generate plausible-sounding but factually incorrect claim interpretations or invent patent numbers that do not exist. And they lack the domain-specific training that allows them to understand how patent claim language maps to technical concepts with the precision that FTO analysis demands.

Verticalized LLMs, by contrast, are models that have been fine-tuned or trained from the ground up on patent corpora, scientific literature, and technical taxonomies. These models understand the particular conventions of patent drafting: how means-plus-function claims work, how dependent claims narrow the scope of independent claims, how prosecution history estoppel affects claim interpretation, and how the same invention can be described using entirely different vocabulary across jurisdictions and technology domains. When integrated into a purpose-built search platform with access to live, structured patent data, verticalized LLMs can perform semantic retrieval at a level of precision and recall that general-purpose models cannot match.

The practical implication for FTO practitioners is straightforward: general-purpose AI is useful for background research, brainstorming search strategies, and explaining technical concepts to non-specialist stakeholders, but it should never be the primary engine of an FTO search. The search itself must be powered by domain-specific AI operating on a verified, structured, and continuously updated patent corpus.

Semantic Search: Moving Beyond Keywords to Concepts

The single most impactful AI technique for FTO searches on large datasets is semantic search. Unlike Boolean keyword search, which matches exact text strings, semantic search uses natural language processing and machine learning to understand the conceptual meaning of a query and return results that are conceptually related even when the specific terminology differs. This directly addresses the vocabulary problem that plagues patent searching: the same invention can be described using entirely different words depending on the drafter, the jurisdiction, and the era in which the patent was filed.

With semantic search, attorneys running freedom-to-operate searches no longer need to enumerate every synonym up front, and R&D teams can explore adjacent technology spaces without mastering classification schemes (4). Semantic search engines trained on patent corpora can interpret an invention disclosure or a set of product claims and retrieve conceptually similar documents from across the entire global patent landscape, surfacing references that a keyword search would have missed entirely.

The effectiveness of semantic search depends heavily on the quality of the underlying model and the data on which it was trained. This is where the distinction between general-purpose and verticalized AI becomes most consequential. A semantic search engine powered by a model trained specifically on patent text will understand that "photovoltaic energy conversion apparatus" and "solar cell" refer to the same concept, that "computing device" in one patent family may correspond to "mobile terminal" in another, and that a claim reciting a "plurality of elongated members" might cover the same structure as one describing "an array of fins." General-purpose embeddings miss these domain-specific equivalences at a rate that makes them unsuitable for production FTO work.
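The difference between string matching and concept matching can be illustrated with a toy Python sketch. It is only a stand-in: a real semantic engine would use learned embeddings, whereas the `CONCEPTS` lookup table here is hand-written from the phrase pairs mentioned above. The document texts, concept labels, and function names are all assumptions made for the example.

```python
# Hand-written concept lexicon standing in for what a patent-trained
# model would learn. Labels such as "PV_DEVICE" are hypothetical.
CONCEPTS = {
    "solar cell": "PV_DEVICE",
    "photovoltaic energy conversion apparatus": "PV_DEVICE",
    "computing device": "COMPUTE_TERMINAL",
    "mobile terminal": "COMPUTE_TERMINAL",
    "plurality of elongated members": "FIN_ARRAY",
    "array of fins": "FIN_ARRAY",
}

# Invented one-line "patents" for illustration.
DOCS = {
    "P1": "A photovoltaic energy conversion apparatus mounted on a mobile terminal.",
    "P2": "A heat sink comprising a plurality of elongated members.",
    "P3": "A solar cell with an anti-reflective coating.",
}

def keyword_search(query, docs):
    """Return documents containing the exact query string."""
    q = query.lower()
    return [doc_id for doc_id, text in docs.items() if q in text.lower()]

def concepts_in(text):
    """Map a text to the set of concept labels whose phrases it contains."""
    t = text.lower()
    return {label for phrase, label in CONCEPTS.items() if phrase in t}

def concept_search(query, docs):
    """Return documents sharing at least one concept label with the query."""
    wanted = concepts_in(query)
    return [doc_id for doc_id, text in docs.items() if wanted & concepts_in(text)]

print(keyword_search("solar cell", DOCS))   # ['P3']
print(concept_search("solar cell", DOCS))   # ['P1', 'P3']
```

The keyword search finds only the document that uses the literal phrase, while the concept search also retrieves the one that describes the same device in drafter's language. The toy version fails on any phrase missing from its table, which is the gap a trained model is meant to close.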

Automated Claim Element Mapping

Once a semantic search identifies a set of potentially relevant patents, the next step in any FTO analysis is claim mapping: comparing each element of the relevant patent claims against each feature of the product or process under review. This has traditionally been one of the most time-consuming and expertise-intensive steps in the FTO workflow, requiring a trained analyst to read each claim, decompose it into its constituent elements, and assess whether the product reads on those elements.

AI-powered claim mapping tools can now automate the initial pass of this analysis. These tools parse patent claims into individual elements, extract the corresponding features from a product description, and generate a preliminary mapping that highlights areas of potential overlap. Verticalized LLMs are particularly effective at this task because they can interpret the functional language and structural relationships embedded in patent claims with far greater accuracy than general-purpose models that lack exposure to the syntactic conventions of patent drafting. The output is not a final legal opinion, but it dramatically reduces the time required to triage a large set of potentially relevant patents down to a manageable shortlist of those that require detailed human review. For FTO searches that surface hundreds or even thousands of candidate patents from the initial semantic search, this triage step is essential to making the workflow practical.
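A rough sketch of the first-pass mapping described above might look like the following. It is deliberately naive: elements are split at semicolons and matched to product features by word overlap (Jaccard similarity), which is an assumption chosen for simplicity rather than how any particular tool works. The claim text, product features, threshold, and function names are all hypothetical.

```python
import re

STOPWORDS = {"a", "an", "the", "of", "and", "to", "in", "on", "with",
             "said", "wherein", "between", "is", "by"}

def tokens(text):
    """Lowercase word set with common filler words removed."""
    return {w for w in re.findall(r"[a-z0-9]+", text.lower()) if w not in STOPWORDS}

def split_claim(claim):
    """Drop the preamble before 'comprising:' and split elements at semicolons."""
    body = claim.split("comprising:", 1)[-1]
    return [e.strip(" .;") for e in body.split(";") if e.strip(" .;")]

def map_claim(claim, features, threshold=0.25):
    """For each claim element, report the best-matching feature (or None)."""
    rows = []
    for element in split_claim(claim):
        e_tok = tokens(element)
        best_feature, best_score = None, 0.0
        for feature in features:
            f_tok = tokens(feature)
            union = e_tok | f_tok
            score = len(e_tok & f_tok) / len(union) if union else 0.0
            if score > best_score:
                best_feature, best_score = feature, score
        matched = best_feature if best_score >= threshold else None
        rows.append((element, matched, round(best_score, 2)))
    return rows

# Invented claim and product description for illustration.
CLAIM = ("A battery comprising: a sulfur cathode; a lithium metal anode; "
         "and a garnet-type ceramic electrolyte disposed between the cathode and the anode.")
FEATURES = ["lithium metal anode foil", "sulfur-carbon composite cathode",
            "polymer gel electrolyte"]

for element, feature, score in map_claim(CLAIM, FEATURES):
    print(f"{score:>4}  {element!r} -> {feature!r}")
```

In this example the first two elements map to product features, while the electrolyte element finds no match above the threshold. A gap like that is what an analyst would examine first, since a product generally must meet every element of a claim to infringe it literally. Word overlap cannot make that call; it only orders the reading list.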

Classification-Based Filtering and Clustering

Patent classification systems such as the Cooperative Patent Classification (CPC) and the International Patent Classification (IPC) provide a structured taxonomy that assigns each patent to one or more technology categories. While classification codes are not a substitute for full-text search, they are a powerful complement, especially for narrowing the initial scope of an FTO search to the most relevant technology areas.

AI-enhanced clustering takes this a step further. Rather than relying on the classification codes assigned by patent office examiners, machine learning algorithms can analyze the full text of search results and automatically cluster them into thematic groups based on their technical content. This allows the analyst to see at a glance which technology sub-areas are most densely populated with potentially relevant patents and to prioritize review accordingly. It also reveals patterns that might not be visible in a flat list of results, such as a concentration of filings from a particular competitor in a specific sub-technology that warrants closer scrutiny. The best clustering implementations use domain-specific ontologies rather than generic topic models, because a general-purpose topic model may group patents by surface-level keyword similarity rather than by the deeper technical relationships that matter for infringement analysis.
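As an illustration of text-based clustering, the sketch below groups search results with a greedy single-pass algorithm over word overlap. This is precisely the surface-level similarity the paragraph above warns about, so it should be read as a baseline: a production system would presumably swap `jaccard` for a similarity measure grounded in a domain ontology or patent-trained embeddings. The abstracts, threshold, and function names are assumptions.

```python
import re

def tokens(text):
    """Lowercase word set for a document."""
    return set(re.findall(r"[a-z]+", text.lower()))

def jaccard(a, b):
    """Overlap of two token sets, from 0.0 (disjoint) to 1.0 (identical)."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0

def cluster(docs, threshold=0.3):
    """Assign each document to the first cluster whose seed is similar enough."""
    clusters = []  # each entry: (seed token set, list of member ids)
    for doc_id, text in docs.items():
        tok = tokens(text)
        for seed, members in clusters:
            if jaccard(seed, tok) >= threshold:
                members.append(doc_id)
                break
        else:
            # No existing cluster matched, so this document seeds a new one.
            clusters.append((tok, [doc_id]))
    return [members for _, members in clusters]

# Invented abstracts for illustration.
RESULTS = {
    "A1": "garnet ceramic solid electrolyte sintering",
    "A2": "sintering of garnet ceramic electrolyte pellets",
    "B1": "sulfur cathode carbon host",
    "B2": "carbon host for sulfur cathode loading",
}

print(cluster(RESULTS))  # [['A1', 'A2'], ['B1', 'B2']]
```

Even this crude grouping separates the electrolyte filings from the cathode filings, which is enough to show where the result set is densest. It would not, however, recognize two documents that describe the same structure in different words.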

Citation Network Analysis

Patents do not exist in isolation. Each patent cites prior art references, and each patent is in turn cited by later filings. This web of citations creates a network that contains valuable information about the relationships between inventions, the evolution of a technology area, and the relative importance of individual patents within the landscape. AI-powered citation analysis tools can traverse this network to identify patents that are highly cited (suggesting broad influence), patents that share citation patterns with the product under review (suggesting technical proximity), and patents that have been cited in opposition or post-grant proceedings (suggesting contested validity).

Citation network analysis is particularly valuable for uncovering "hidden" references, meaning patents that would not surface through a keyword or semantic search because they use entirely different terminology but are technically relevant based on their position in the citation graph. For FTO searches in mature technology areas with deep citation histories, this technique can surface blocking patents that other methods would miss.
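Two of the signals mentioned in this section, citation counts and shared citation patterns, can be sketched in a few lines of Python. The citation data is invented, and the function names are hypothetical. The second function uses bibliographic coupling (counting references two patents have in common) as one simple proxy for technical proximity; real tools may weigh the graph differently.

```python
from collections import Counter

# Hypothetical data: each patent maps to the earlier patents it cites.
CITES = {
    "P1": ["A", "B"],
    "P2": ["A", "B", "C"],
    "P3": ["A"],
    "P4": ["C", "D"],
}

def forward_citation_counts(cites):
    """Count how often each reference is cited across the set."""
    return Counter(ref for refs in cites.values() for ref in refs)

def coupling(cites, target):
    """Rank other patents by the number of references shared with the target."""
    base = set(cites[target])
    scores = {p: len(base & set(refs)) for p, refs in cites.items() if p != target}
    # Highest overlap first; ties broken alphabetically for stable output.
    return sorted(scores.items(), key=lambda kv: (-kv[1], kv[0]))

print(forward_citation_counts(CITES).most_common(1))  # [('A', 3)]
print(coupling(CITES, "P1"))  # [('P2', 2), ('P3', 1), ('P4', 0)]
```

Here P2 ranks closest to P1 purely because of what both cite, without comparing a single word of their text. That is the property that lets citation analysis reach documents a text-based search would not.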

Incorporating Non-Patent Literature into FTO Workflows

One of the most significant blind spots in traditional FTO searches is the exclusive focus on patent data. A thorough clearance analysis must also consider non-patent literature (NPL), including scientific journal articles, conference proceedings, technical standards, and regulatory filings. NPL is relevant to FTO in two distinct ways. First, NPL may constitute prior art that could be used to invalidate a blocking patent, thereby eliminating the infringement risk. Second, NPL may describe the state of the art in ways that inform claim interpretation, helping the analyst understand the scope of a patent claim in the context of what was known at the time of filing.

The challenge is that non-patent literature exists in entirely separate databases from patent data, uses different terminology conventions, and is structured differently. Most traditional patent search tools do not index scientific literature at all, forcing analysts to conduct separate searches across multiple platforms and then manually correlate the results. AI-powered platforms that unify patent and scientific literature into a single searchable corpus eliminate this fragmentation and allow the analyst to see the full picture of the prior art landscape in a single workflow. This is an area where the choice of platform matters enormously: the ability to run a single semantic query across both patent and NPL data, and to have the results ranked by a verticalized model that understands both document types, is a significant structural advantage over workflows that require separate tools and manual reconciliation.

Agentic AI and Multi-Step FTO Workflows

A newer development in AI-powered FTO is the emergence of agentic AI systems that can execute multi-step research workflows autonomously. Rather than requiring the analyst to manually sequence each step of the FTO process (define search terms, run the search, filter results, cluster by technology area, map claims, check legal status), an agentic system can accept a high-level task description (such as "conduct an FTO search for this product in these jurisdictions") and autonomously plan and execute the sequence of searches, filters, and analyses needed to produce a comprehensive result.

Agentic approaches are particularly valuable for FTO searches because the process inherently involves multiple dependent steps where the output of one step determines the input to the next. A well-designed agentic FTO system can dynamically expand or narrow its search based on what it finds at each stage, pursue unexpected leads surfaced by citation analysis, and flag ambiguities for human review rather than making assumptions. This represents a meaningful step beyond static search tools, though it also demands a higher level of trust in the underlying AI and places a premium on transparency and explainability in how the system arrives at its conclusions.
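The dependent-step behavior described above can be sketched as a small loop in which each round of results determines the next queries. Everything here is a simplified assumption: `search` and `follow_up` are stub callables backed by in-memory dictionaries, not calls to any real patent API, and the step budget stands in for whatever stopping rule a real system would use. The log and the hit-to-query mapping illustrate one way to keep the run auditable.

```python
def run_agent(seed_query, search, follow_up, max_steps=5):
    """Search, queue follow-up queries from new hits, and log every step."""
    queue = [seed_query]
    seen_queries = set()
    hits = {}   # patent id -> query that first surfaced it (provenance)
    log = []    # one entry per executed search, for human review
    while queue and len(log) < max_steps:
        query = queue.pop(0)
        if query in seen_queries:
            continue
        seen_queries.add(query)
        new = [r for r in search(query) if r not in hits]
        for patent_id in new:
            hits[patent_id] = query
            queue.extend(follow_up(patent_id))
        log.append({"query": query, "new_hits": new})
    return hits, log

# Hypothetical stand-ins for a search backend and a lead generator.
INDEX = {
    "garnet electrolyte": ["P1", "P2"],
    "LLZO sintering": ["P2", "P3"],
    "sulfur cathode": ["P4"],
}
FOLLOW = {"P1": ["LLZO sintering"], "P3": ["sulfur cathode"]}

hits, log = run_agent(
    "garnet electrolyte",
    search=lambda q: INDEX.get(q, []),
    follow_up=lambda pid: FOLLOW.get(pid, []),
)
print(hits)
print([step["query"] for step in log])
```

Starting from one query, the loop reaches P3 and P4 only because earlier hits suggested where to look next. Recording which query surfaced each patent is a small example of the transparency such systems need if an analyst is to trust the result.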

Continuous Monitoring: Transforming FTO from a Snapshot to a Living Process

A traditional FTO search produces a point-in-time snapshot: a report reflecting the patent landscape as it existed on the date the search was conducted. But the patent landscape is not static. New applications are published every week. Pending applications receive grants. Legal status changes as patents are challenged, abandoned, or expire. A critical, and often overlooked, part of a modern FTO strategy is to establish a system for continuous monitoring that transforms the FTO from a static report into a living intelligence system (5).

AI-powered monitoring tools allow teams to save their search parameters and receive automated alerts whenever new patents are published in their technology area, a key competitor files a new application, or the legal status of a previously identified high-risk patent changes. This continuous approach is especially important for products with long development cycles, where the patent landscape may shift significantly between the initial FTO search and the commercial launch date.
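At its core, this kind of alerting is a comparison between a saved snapshot and a fresh one. The sketch below assumes a hypothetical record shape (`status` and `assignee` keyed by patent identifier) and invented alert labels; it is not modeled on any specific platform's alert format.

```python
def diff_landscape(saved, current, watchlist=()):
    """Compare two snapshots and return alerts for new records and status changes."""
    alerts = []
    for pid, rec in current.items():
        old = saved.get(pid)
        if old is None:
            # A record absent from the saved snapshot is a new publication;
            # flag it separately if it comes from a watched competitor.
            kind = ("new_competitor_filing" if rec["assignee"] in watchlist
                    else "new_publication")
            alerts.append((kind, pid))
        elif old["status"] != rec["status"]:
            alerts.append(("status_change", pid, old["status"], rec["status"]))
    return alerts

# Invented snapshots for illustration.
saved = {
    "US1": {"status": "pending", "assignee": "Acme"},
    "US2": {"status": "granted", "assignee": "Beta"},
}
current = {
    "US1": {"status": "granted", "assignee": "Acme"},
    "US2": {"status": "granted", "assignee": "Beta"},
    "US3": {"status": "pending", "assignee": "Rival"},
    "US4": {"status": "pending", "assignee": "Other"},
}

for alert in diff_landscape(saved, current, watchlist={"Rival"}):
    print(alert)
```

The pending application that became a grant (US1) is the kind of change a point-in-time report would never capture, and it is often the most important one for a product still in development.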

Hybrid Intelligence: Why AI Alone Is Not Enough

For all its power, AI is not a substitute for expert human judgment in FTO analysis. The future of IP analytics lies in integrating AI-driven scalability with human interpretative depth, as highlighted at major industry conferences exploring hybrid human-machine workflows for patent searching and FTO analysis (6). AI can process millions of documents, surface the most relevant candidates, and generate preliminary claim maps. But the final determination of whether a product infringes a patent claim requires legal interpretation that accounts for claim construction doctrines, prosecution history, and jurisdiction-specific rules of infringement analysis. These are judgments that require training, experience, and an understanding of legal context that current AI systems cannot reliably provide.

The most effective FTO methodology in 2026 is a hybrid model: AI handles the high-volume discovery, filtering, and triage phases, while human experts focus their attention on the relatively small number of patents that survive the AI filter and require detailed claim-by-claim analysis. This division of labor plays to the strengths of each. AI excels at scale, speed, and consistency across large datasets. Humans excel at nuanced interpretation, contextual reasoning, and the kind of strategic thinking that determines whether a potential infringement risk warrants a design-around, a licensing negotiation, or a validity challenge.

The USPTO Is Signaling the Direction of Travel

The United States Patent and Trademark Office has itself begun integrating AI into its examination processes, and these developments have direct implications for FTO practice. The USPTO launched its Automated Search Pilot Program (ASAP!) in October 2025, using an internal AI tool to conduct pre-examination prior art searches and provide applicants with an Automated Search Results Notice listing up to 10 relevant documents ranked by relevance (7). In July 2025, the USPTO launched the DesignVision tool, enabling AI-driven image-based search of U.S. and foreign design patents to support examiners in comparing query images to global design collections (8). And in March 2026, the agency launched its Class ACT system, an AI-powered tool that automates trademark classification tasks that previously took up to five months (9).

These initiatives signal that the patent office itself views AI-assisted search as a core component of the future examination process. For FTO practitioners, this raises the bar: if the patent office is using AI to find more and better prior art during examination, then the patents that survive this enhanced scrutiny and proceed to grant may be stronger and harder to challenge. This makes thorough, AI-augmented FTO searches even more critical before making go-to-market decisions.

Platforms for AI-Powered FTO Searches: What to Look For

Not all platforms are equally suited to FTO analysis on large datasets. When evaluating tools for this purpose, R&D and IP teams should prioritize several capabilities.

The first is data coverage. A platform is only as useful as the corpus it can search. The best FTO tools provide access to patent data from all major patent-issuing authorities worldwide, including full-text documents, legal status information, patent family linkages, and prosecution history. Equally important is coverage of non-patent literature, including peer-reviewed scientific journals and conference proceedings, which can be essential both for identifying prior art and for understanding claim scope.

The second is AI model quality. The platform's AI should be built on verticalized models trained specifically on patent and technical text, not repurposed general-purpose LLMs. It should support natural language queries, full-document input, and iterative refinement of search results based on user feedback.

The third is workflow integration. FTO analysis is not a single search query but a multi-step process that includes search, filtering, clustering, claim mapping, validity assessment, and reporting. The best platforms support this entire workflow in a unified environment rather than requiring the analyst to export data and switch between tools at each stage.

The fourth is monitoring and alerting. As discussed above, FTO is not a one-time event. The platform should support saved searches, automated alerts, and ongoing landscape tracking so that the initial FTO assessment remains current throughout the product development cycle.

With these criteria in mind, several platforms merit consideration for enterprise FTO workflows in 2026.

Cypris takes a structurally different approach from most patent intelligence tools by unifying patent data, scientific literature, and competitive intelligence into a single enterprise R&D intelligence platform. Cypris indexes over 500 million patents and scientific papers and applies a proprietary R&D ontology that maps relationships across data types, enabling searches that span the full spectrum of technical prior art in a single query. For FTO analysis specifically, this means an analyst can conduct the patent search, cross-reference the results against relevant scientific literature, and monitor the landscape for changes, all without leaving a single platform or reconciling outputs from multiple tools. Cypris maintains enterprise API partnerships with OpenAI, Anthropic, and Google, which positions it to integrate the latest advances in large language model technology directly into its search and analysis workflows as verticalized AI capabilities rather than generic chat interfaces bolted onto legacy data. It is trusted by hundreds of enterprise teams and thousands of researchers across R&D, IP, and product development functions. For organizations whose FTO needs extend beyond patent-only analysis into the broader question of what the full body of technical prior art looks like across both patent and non-patent sources, Cypris provides a unified foundation that eliminates the fragmentation inherent in multi-tool approaches (10).

Derwent Innovation from Clarivate is one of the longest-established platforms in the patent intelligence space. It provides access to a large global patent collection with strong coverage of the Derwent World Patents Index (DWPI), which includes human-written abstracts that standardize patent terminology across jurisdictions. Derwent Innovation is widely used by IP attorneys and patent professionals, and its strength lies in the depth of its curated data and its classification-enhanced search capabilities. However, Derwent Innovation is primarily a patent-focused tool. Its scientific literature integration is handled through a separate Clarivate product (Web of Science), which requires a distinct subscription and a separate search interface. For teams that need to search patents and scientific literature in a unified workflow, this two-product structure can add friction and increase the risk of gaps between the two datasets (11).

Google Patents is a free, publicly accessible patent search tool that covers patents from major jurisdictions worldwide. It has added basic semantic search capabilities in recent years and provides a useful starting point for initial FTO screening, particularly for teams with limited budgets. However, Google Patents has significant limitations for enterprise FTO work. It does not provide legal status tracking, patent family visualization, automated monitoring, or claim mapping tools. It does not index scientific literature. And it does not offer API access for integration into automated workflows. Google Patents is best understood as a supplementary resource rather than a primary FTO platform (12).

The Lens is an open-access platform that provides a unified search across patents, scholarly articles, and biological sequences. It is operated by Cambia, a nonprofit organization, and its commitment to open data access makes it a valuable resource for teams that want to cross-reference patent and literature data without separate subscriptions. The Lens offers structured metadata, patent family linkages, and basic visualization tools. Its limitations for enterprise FTO work center on the absence of advanced AI search capabilities, automated claim mapping, and the kind of continuous monitoring infrastructure that large R&D organizations require (13).

PQAI (Patent Quality AI) is an open-source project that applies machine learning to patent prior art search. It offers semantic similarity search trained on patent text and allows users to input invention disclosures as natural language queries. PQAI is a useful tool for technology scouting and initial prior art screening, but it is primarily focused on prior art discovery rather than full FTO analysis, and it lacks the enterprise features (monitoring, claim mapping, legal status tracking, team collaboration) required for production FTO workflows (14).

Scite takes a different approach, focusing on scientific literature rather than patents. Scite's AI analyzes citation contexts to determine whether a citing paper supports, contradicts, or simply mentions a cited claim. For FTO workflows that require deep analysis of the non-patent literature, particularly in life sciences and pharmaceuticals where journal publications play a critical role in establishing the state of the art, Scite provides a layer of intelligence that patent-focused tools do not offer (15).

Building an Effective FTO Workflow for Large Datasets in the Age of AI

The platforms discussed above are tools, not solutions in themselves. An effective FTO workflow on large datasets requires a structured methodology that sequences the right techniques in the right order, and an understanding of where general-purpose AI, verticalized AI, and human expertise each contribute the most value.

The first phase is scoping. Before any search begins, the team must define the product or process features to be cleared, the jurisdictions of interest, and the relevant time window (typically patents filed within the last 20 years, adjusted for patent term extensions). General-purpose LLMs can be useful at this stage for brainstorming potential claim interpretations, generating alternative descriptions of the product's features, and identifying adjacent technology areas that might harbor relevant patents. Clear scoping prevents the search from expanding into irrelevant technology areas and ensures that the results are actionable.

The second phase is broad discovery. This is where verticalized AI delivers the most value. The analyst inputs the product description or claim set into a platform powered by domain-specific models and runs a broad semantic search across the full patent corpus, supplemented by classification-based filtering and citation network analysis. The goal is to cast a wide net and capture every potentially relevant reference. Using a general-purpose chatbot for this step is inadequate because it cannot search live patent databases, verify legal status, or rank results using patent-trained embeddings.

The third phase is AI-assisted triage. The results of the broad discovery phase will typically number in the hundreds or thousands. AI clustering and preliminary claim mapping tools reduce this set to a manageable shortlist of patents that warrant detailed human review. Documents that are clearly irrelevant, expired, or directed to a different technology are filtered out automatically. Agentic AI systems can further accelerate this phase by autonomously pursuing follow-up searches on the most promising clusters and flagging ambiguities for human attention.

The fourth phase is expert analysis. The shortlisted patents are reviewed in detail by a qualified patent professional who constructs claim charts, assesses infringement risk under the applicable legal standards, and evaluates the validity of any blocking patents. This is the step where human judgment is indispensable. No AI system, however sophisticated, should be the sole basis for a go/no-go commercialization decision.

The fifth phase is continuous monitoring. The search parameters from the initial analysis are saved and configured to generate automated alerts. The FTO assessment becomes a living document that is updated as the patent landscape evolves.
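The automatic filtering in the triage phase can be sketched as follows. The expiry rule is a loud simplification: it treats a patent as in force for 20 years from filing plus a supplied extension, and ignores lapses for unpaid fees, disclaimers, and jurisdiction-specific rules. The `Candidate` fields, the relevance score, and the threshold are hypothetical.

```python
from dataclasses import dataclass
from datetime import date, timedelta

@dataclass
class Candidate:
    patent_id: str
    filing_date: date
    relevance: float          # hypothetical score from the discovery phase, 0..1
    extension_days: int = 0   # hypothetical term adjustment or extension

def add_years(d, years):
    """Shift a date by whole years, mapping Feb 29 to Feb 28 when needed."""
    try:
        return d.replace(year=d.year + years)
    except ValueError:
        return d.replace(year=d.year + years, day=28)

def nominal_expiry(c):
    """Simplified expiry: 20 years from filing plus any extension."""
    return add_years(c.filing_date, 20) + timedelta(days=c.extension_days)

def triage(candidates, as_of, min_relevance=0.3):
    """Drop expired or low-relevance candidates; rank the rest by relevance."""
    kept = [c for c in candidates
            if nominal_expiry(c) > as_of and c.relevance >= min_relevance]
    return sorted(kept, key=lambda c: c.relevance, reverse=True)

# Invented candidates for illustration.
CANDIDATES = [
    Candidate("X", date(2001, 5, 1), 0.9),                      # expired in 2021
    Candidate("Y", date(2015, 3, 10), 0.8),
    Candidate("Z", date(2018, 7, 1), 0.1),                      # low relevance
    Candidate("W", date(2005, 6, 1), 0.6, extension_days=400),  # kept alive by extension
]

shortlist = triage(CANDIDATES, as_of=date(2026, 1, 1))
print([c.patent_id for c in shortlist])  # ['Y', 'W']
```

Candidate W shows why the time window in the scoping phase has to account for term extensions: on filing date alone it would have been discarded as expired. Anything a filter like this removes should remain recoverable, since the expiry estimate is approximate.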

The Cost of Getting FTO Wrong

The consequences of an inadequate FTO search are not abstract. Patent infringement lawsuits named 1,889 defendants in a recent reporting period, a 21.6 percent increase over the prior year (8). Even a single overlooked patent can delay a product launch, trigger costly litigation, or force an expensive redesign after manufacturing has already begun. The investment in AI-augmented FTO tools and methodologies is small relative to the risk it mitigates.

For R&D organizations operating in technology areas with dense patent landscapes, such as semiconductors, pharmaceuticals, telecommunications, and advanced materials, the question is no longer whether to adopt AI-powered FTO methods but how quickly the transition from manual-only workflows can be completed. The data volumes, jurisdictional complexities, and competitive stakes of 2026 demand it. And the distinction between using a general-purpose chatbot to "ask about patents" and deploying a verticalized AI platform purpose-built for patent intelligence is the difference between a defensible FTO process and an expensive false sense of security.

Citations

(1) WIPO, "World Intellectual Property Indicators 2025: Patents Highlights," November 2025. https://www.wipo.int/web-publications/world-intellectual-property-indicators-2025-highlights/en/patents-highlights.html

(2) WIPO, "IP Facts and Figures 2025," 2025. https://www.wipo.int/edocs/pubdocs/en/wipo-pub-943-2025-en-wipo-ip-facts-and-figures-2025.pdf

(3) WIPO, "IP Facts and Figures 2025: Patents and Utility Models," 2025. https://www.wipo.int/web-publications/ip-facts-and-figures-2025/en/patents-and-utility-models.html

(4) IPWatchdog, "Agentic AI Meets Patent Search: A New Paradigm for Innovation," October 2025. https://ipwatchdog.com/2025/10/30/agentic-ai-meets-patent-search-new-paradigm-innovation/

(5) DrugPatentWatch, "Conducting a Biopharmaceutical Freedom-to-Operate (FTO) Analysis," 2025. https://www.drugpatentwatch.com/blog/conducting-a-biopharmaceutical-freedom-to-operate-fto-analysis-strategies-for-efficient-and-robust-results/

(6) ScienceDirect, "AI, Hybrid Intelligence, and the Future of Patent Analytics: Key Takeaways from the CEPIUG 17th Anniversary Conference," February 2026. https://www.sciencedirect.com/science/article/abs/pii/S017221902600013X

(7) Morgan Lewis, "USPTO Announces Automated Search Pilot Program," October 2025. https://www.morganlewis.com/pubs/2025/10/uspto-announces-automated-search-pilot-program

(8) Lumenci, "AI-Powered Freedom to Operate: Streamlining Patent Risk Analysis," November 2025. https://lumenci.com/blogs/ai-assisted-fto-search/

(9) Sterne Kessler, "USPTO Launches AI Examination Tools: What This Means for Trademark Applicants," March 2026. https://www.sternekessler.com/news-insights/insights/uspto-launches-ai-examination-tools-what-this-means-for-trademark-applicants/

(10) Cypris. https://cypris.ai

(11) Clarivate, Derwent Innovation. https://clarivate.com/products/ip-intelligence/patent-intelligence/derwent-innovation/

(12) Google Patents. https://patents.google.com

(13) The Lens, Cambia. https://www.lens.org

(14) PQAI (Patent Quality AI). https://projectpq.ai

(15) Scite. https://scite.ai

FAQ

What is a freedom-to-operate search?

A freedom-to-operate search, also called an FTO search or patent clearance search, is an investigation of existing and pending patents to determine whether a product, process, or technology can be commercialized in a specific jurisdiction without infringing the valid intellectual property rights of a third party. It is distinct from a patentability search, which evaluates whether an invention is novel enough to receive its own patent. FTO analysis focuses specifically on infringement risk and is typically conducted before major investment decisions such as product launch, manufacturing scale-up, or market entry.

Why are large datasets a challenge for FTO searches?

Global patent filings reached 3.7 million applications in 2024, and an estimated 19.7 million patents are currently in force worldwide. This corpus spans hundreds of patent-issuing authorities, multiple languages, and decades of filing history. Traditional keyword searches require the analyst to anticipate every possible phrasing a patent drafter might have used, which becomes impractical at this scale. AI-powered semantic search addresses this by understanding conceptual meaning rather than matching exact text strings, enabling the analyst to surface relevant references even when the terminology differs from the search query.

Can I use ChatGPT or another general-purpose LLM for FTO searches?

General-purpose LLMs like ChatGPT, Claude, or Gemini can be helpful for background research, brainstorming search strategies, and explaining technical concepts to non-specialist stakeholders. However, they are not suitable as the primary engine of an FTO search. They do not have access to live patent databases, cannot verify legal status, are prone to hallucination, and lack the domain-specific training needed to interpret patent claim language with the precision FTO analysis demands. Verticalized AI models trained specifically on patent and scientific text, and integrated into platforms with access to structured patent data, are required for defensible FTO work.

What is a verticalized LLM and why does it matter for FTO?

A verticalized LLM is a large language model that has been fine-tuned or trained specifically on domain-specific data, in this case patent documents, scientific literature, and technical taxonomies. These models understand the conventions of patent drafting, including how claim language maps to technical concepts, how dependent claims narrow independent claims, and how the same invention can be described using different vocabulary across jurisdictions. When integrated into a purpose-built patent search platform, verticalized LLMs perform semantic retrieval, claim decomposition, and relevance ranking at a level of precision that general-purpose models cannot match.

How does AI improve FTO search accuracy?

AI improves FTO search accuracy in several ways. Semantic search identifies conceptually related patents that keyword searches miss. Automated claim mapping generates preliminary comparisons between patent claims and product features, speeding up the triage process. Citation network analysis uncovers patents that are technically relevant based on their position in the citation graph rather than their text alone. Classification-based clustering reveals patterns in the patent landscape that help the analyst prioritize review. And agentic AI systems can autonomously execute multi-step search workflows, dynamically adjusting their approach based on intermediate results. Together, these techniques reduce the risk of missing a blocking patent while also reducing the time and cost of the analysis.

Can AI replace human experts in FTO analysis?

No. AI is a powerful tool for the discovery, filtering, and triage phases of FTO analysis, but the final determination of infringement risk requires legal judgment that accounts for claim construction, prosecution history, and jurisdiction-specific rules. The most effective FTO methodology combines AI-driven discovery with expert human analysis in a hybrid model. AI processes the volume; humans apply the judgment.

When should an FTO search be conducted?

FTO searches should be conducted early in the product development process, ideally before significant investments in design, tooling, or manufacturing. Conducting FTO analysis at the ideation or early development stage allows the team to identify potential patent obstacles while there is still time and flexibility to design around them, seek licenses, or challenge the validity of blocking patents. FTO analysis should also be refreshed at major development milestones and before commercial launch, as the patent landscape may have changed since the initial search.

What is the difference between semantic search and keyword search for patents?

Keyword search matches exact text strings in patent documents. If a patent uses the term "optical waveguide" but the search query uses "fiber optic channel," a keyword search will not find the match. Semantic search uses natural language processing to understand the conceptual meaning of both the query and the documents, enabling it to recognize that these two phrases describe the same concept. For FTO searches across large, multilingual patent datasets, semantic search provides significantly broader coverage than keyword-only approaches.
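The difference can be sketched with a toy example. In the snippet below, a hand-written concept map stands in for the embedding model that a real semantic search engine would use, so it only illustrates the behavior rather than the underlying technique. The document texts, identifiers, and concept labels are invented for illustration.

```python
# Toy illustration only: a hand-written concept map stands in for a real
# embedding model, which is what production semantic search would rely on.
DOCS = {
    "P1": "An optical waveguide for transmitting light signals.",
    "P2": "A lithium battery electrolyte composition.",
}

# Hypothetical concept vocabulary: phrase -> concept label.
CONCEPTS = {
    "optical waveguide": "light_guide",
    "fiber optic channel": "light_guide",
    "battery electrolyte": "electrolyte",
}

def keyword_search(query, docs):
    """Return documents whose text contains the exact query string."""
    q = query.lower()
    return [doc_id for doc_id, text in docs.items() if q in text.lower()]

def concepts_in(text):
    """Return the set of concept labels whose phrases appear in the text."""
    t = text.lower()
    return {label for phrase, label in CONCEPTS.items() if phrase in t}

def semantic_search(query, docs):
    """Return documents that share at least one concept with the query."""
    wanted = concepts_in(query)
    return [doc_id for doc_id, text in docs.items() if wanted & concepts_in(text)]

keyword_hits = keyword_search("fiber optic channel", DOCS)
semantic_hits = semantic_search("fiber optic channel", DOCS)
print("keyword:", keyword_hits)
print("semantic:", semantic_hits)
```

The keyword search returns nothing because no document contains the literal query string, while the concept-based search matches the waveguide document through the shared concept label.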

How does non-patent literature factor into FTO analysis?

Non-patent literature, including scientific journal articles, conference proceedings, and technical standards, is relevant to FTO in two ways. First, it may constitute prior art that can be used to invalidate a blocking patent, eliminating the infringement risk. Second, it provides context about the state of the art at the time a patent was filed, which can inform claim interpretation and scope analysis. Platforms that unify patent and scientific literature in a single search interface eliminate the need to conduct separate searches across different databases and reduce the risk of gaps.

What is continuous FTO monitoring and why does it matter?

Continuous FTO monitoring means saving the search parameters from an initial FTO analysis and configuring automated alerts for changes in the patent landscape. These alerts can notify the team when new patents are published in the relevant technology area, when a competitor files a new application, or when the legal status of a previously identified patent changes. This transforms the FTO assessment from a one-time snapshot into a living intelligence system that keeps pace with the evolving patent landscape throughout the product development cycle.
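One simple way to picture the alerting logic is as a comparison between two runs of the same saved search. The sketch below assumes each run yields a dictionary keyed by publication number with `assignee` and `status` fields; that record shape, the function name, and the sample identifiers are hypothetical and not taken from any particular platform.

```python
def diff_snapshots(previous, current):
    """Compare two runs of a saved search and return a list of alerts.

    Each snapshot maps a publication number to a record with 'assignee'
    and 'status' keys (an assumed, illustrative record shape).
    """
    alerts = []
    for pub_no, record in current.items():
        if pub_no not in previous:
            # A publication that was absent from the earlier run.
            alerts.append(("new_publication", pub_no, record["assignee"]))
        elif record["status"] != previous[pub_no]["status"]:
            # Legal status of a previously identified patent has changed.
            change = f'{previous[pub_no]["status"]} -> {record["status"]}'
            alerts.append(("status_change", pub_no, change))
    return alerts

# Made-up records for illustration only.
previous = {
    "EX-0001": {"assignee": "Acme", "status": "pending"},
    "EX-0002": {"assignee": "Beta", "status": "granted"},
}
current = {
    "EX-0001": {"assignee": "Acme", "status": "granted"},
    "EX-0002": {"assignee": "Beta", "status": "granted"},
    "EX-0003": {"assignee": "Acme", "status": "pending"},
}
alerts = diff_snapshots(previous, current)
for alert in alerts:
    print(alert)
```

Filtering the `new_publication` alerts by assignee would give the competitor-filing notification described above.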

How many jurisdictions should an FTO search cover?

An FTO search should cover every jurisdiction where the product will be manufactured, sold, imported, or used. At a minimum, this typically includes the United States, Europe (via the European Patent Office), China, Japan, and South Korea for technology products with global distribution. PCT applications should also be monitored, as an international filing may enter the national phase in any member country. The specific jurisdictional scope depends on the company's commercialization plans and supply chain geography.
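The scoping rule reduces to a set comparison: take the union of every jurisdiction named in the commercialization plan and subtract the jurisdictions the search actually covered. The sketch below is a minimal illustration; the plan structure, the function name, and the two-letter codes used as labels are assumptions made for the example.

```python
def coverage_gaps(plan, searched):
    """Return jurisdictions required by the plan but missing from the search.

    plan: dict mapping an activity (manufactured, sold, imported, used)
          to the set of jurisdictions where it will occur.
    searched: iterable of jurisdictions the FTO search covered.
    """
    required = set().union(*plan.values())
    return sorted(required - set(searched))

# Hypothetical commercialization plan for illustration only.
plan = {
    "manufactured": {"CN", "KR"},
    "sold": {"US", "EP", "JP"},
    "imported": {"US"},
    "used": {"US", "EP"},
}
searched = {"US", "EP", "CN"}
gaps = coverage_gaps(plan, searched)
print(gaps)
```

An empty result means the search scope matches the plan; anything else is a jurisdiction to add before the analysis is relied upon.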

What should I look for in an AI-powered FTO platform?

The most important capabilities for an enterprise FTO platform are comprehensive global patent data coverage, high-quality semantic search powered by verticalized models trained on patent text, non-patent literature integration, automated claim mapping and clustering tools, legal status tracking, patent family visualization, continuous monitoring and alerting, API access for workflow automation, and enterprise-grade security. Platforms that unify patent and scientific literature search in a single interface and leverage domain-specific AI rather than general-purpose models provide the strongest foundation for defensible FTO analysis at scale.

Clarivate is not a single product. It is a portfolio of acquired tools assembled over decades, and the two platforms that enterprise R&D teams use most frequently — Derwent Innovation for patent intelligence and Web of Science for scientific literature — were designed for entirely different audiences with entirely different workflows. Derwent was built for IP attorneys conducting freedom-to-operate searches. Web of Science was built for academic librarians and university researchers. Neither was built for the R&D scientist trying to answer a strategic question about a technology landscape, a competitive portfolio, or an emerging technical risk.

The gap between what Clarivate's R&D-adjacent tools were designed to do and what modern innovation teams actually need is the primary reason organizations are evaluating alternatives. This guide examines six of the strongest alternatives to Clarivate for enterprise R&D and IP teams, explains what distinguishes each platform, and provides a framework for matching your team's specific requirements to the right solution.

Why R&D Teams Are Reevaluating Clarivate

Clarivate's position in the market is the product of consolidation, not native product design. The company was spun out of Thomson Reuters' IP and Science division in 2016 and has since assembled its portfolio through a series of acquisitions — Derwent, Web of Science, ProQuest, Cortellis, and others — without fully integrating the underlying data architectures. For R&D teams, the practical consequence is that patent intelligence and scientific literature intelligence live in separate platforms with separate subscriptions, separate interfaces, and separate learning curves.

This fragmentation has real costs. An R&D scientist conducting a technology scouting exercise needs to understand what has been patented, what has been published in the scientific literature, and how those two bodies of knowledge relate to each other. Performing that analysis through Derwent and Web of Science requires toggling between platforms, manually reconciling results, and building synthesis layers that neither tool provides natively. The time investment alone is a meaningful barrier, and the cognitive load of maintaining fluency in two complex legacy interfaces reduces the frequency with which R&D teams can turn to patent and literature intelligence for decision support.

Pricing is a compounding factor. Clarivate's enterprise contracts for combined Derwent and Web of Science access can run into six figures annually, and the terms typically require institutional commitment rather than flexible per-seat or usage-based arrangements. For Fortune 500 R&D organizations that have historically lived with the cost because no integrated alternative existed, the rapid maturation of AI-native intelligence platforms over the past three years has changed the evaluation calculus significantly.

There is also a structural concern specific to Derwent. Clarivate's Derwent World Patents Index is maintained by a team of over 800 patent editors who manually write abstracts for each invention family — a curation model that represents both the platform's greatest strength and its most significant vulnerability. The value of Derwent has always rested on human expertise applied at scale. As AI-native platforms develop increasingly sophisticated capabilities for patent comprehension and synthesis, the competitive differentiation of hand-written abstracts is narrowing, and the cost premium associated with that curation model becomes harder to justify for teams whose primary need is strategic intelligence rather than legal-quality prior art analysis.

What to Look for in a Clarivate Alternative

Before evaluating specific platforms, it is worth being precise about what Clarivate's R&D-adjacent products actually do, because the alternatives that best address those functions are not necessarily the platforms that appear most often in head-to-head comparison articles.

Derwent Innovation provides access to the Derwent World Patents Index, a curated database covering over 130 million patents, along with tools for patent search, analytics, portfolio management, and competitive landscaping. Its primary design center is the patent professional: the interface and workflows are optimized for freedom-to-operate analyses, patentability assessments, and portfolio strategy decisions that require high-confidence data quality.

Web of Science provides access to a peer-reviewed scientific literature database covering approximately 20,000 journals, along with citation analytics, research performance metrics, and discovery tools. Its primary design center is the academic researcher and institutional library administrator.

An effective Clarivate alternative for an enterprise R&D team needs to cover both functions, ideally within a unified architecture, and needs to provide the kind of strategic synthesis and workflow integration that neither Derwent nor Web of Science was designed to deliver. The evaluation criteria that matter most are unified data architecture, native AI capabilities, scientific literature depth alongside patent coverage, enterprise security posture, and whether the platform was designed for R&D scientists and innovation strategists or for IP attorneys and academic administrators.

The Best Clarivate Alternatives for Enterprise R&D Teams

Cypris — Best Unified Platform for Enterprise R&D Intelligence

Cypris takes a fundamentally different approach to R&D intelligence than Clarivate's two-platform model. Rather than providing a patent database and a literature database as separate tools, Cypris unifies over 500 million patents and scientific papers within a single platform, structured through a proprietary R&D ontology that understands the relationships between technical concepts across both corpora. The result is that searches and analyses performed in Cypris return integrated results from patents and scientific literature simultaneously, without requiring the researcher to reconcile findings from separate systems.

The distinction is not merely a user experience improvement. When patent data and scientific literature are indexed through a shared ontology rather than maintained in separate silos, the analytical possibilities expand substantially. A technology scouting exercise can reveal not just what has been patented in a domain but what the concurrent scientific literature suggests about the direction of technical development, where the patent portfolio is leading versus lagging the research frontier, and which organizations are accumulating both IP and publication activity in emerging areas. These cross-signal insights are structurally unavailable in a Derwent-plus-Web-of-Science architecture because the data models do not share a common semantic layer.

Cypris is trusted by hundreds of enterprise teams and thousands of researchers across R&D, IP, and product development functions, including organizations in the Fortune 500. The platform's AI architecture is built on official enterprise API partnerships with OpenAI, Anthropic, and Google, partnerships that distinguish it from platforms that have layered general-purpose AI onto legacy data infrastructure without formal integration agreements. Enterprise security meets Fortune 500 standards, covering the compliance and data governance requirements that shape platform adoption decisions at large corporations.

For organizations that have historically maintained separate Derwent and Web of Science subscriptions, Cypris offers the possibility of consolidating that intelligence infrastructure into a single platform while simultaneously gaining access to AI capabilities that neither legacy tool provides. The platform's Research Brief service extends beyond self-service search to provide bespoke analysis by Cypris research analysts, which addresses the capacity constraint that limits how frequently in-house teams can conduct deep landscape analyses.

Google Patents — Best Free Option for Preliminary Research

Google Patents provides free access to patent documents from major patent offices worldwide, with an interface that will be immediately familiar to anyone comfortable with Google's search products. The platform indexes over 87 million patents and offers some integration with Google Scholar to bring non-patent literature into search results.

For preliminary research, competitive screening, and exploratory work, Google Patents offers genuine utility. The familiar search interface eliminates the training investment required by Derwent and Orbit, and the zero-cost access model makes it available to anyone in an R&D organization without procurement friction. Translation capabilities allow English-language searches to surface relevant patents from non-English-language jurisdictions, which addresses one of the more significant practical limitations of manual prior art searching.