Work, as we’ve known it, has fundamentally changed.

That statement might have sounded dramatic a year or two ago, but you would be naive to deny it today. AI is no longer just augmenting workflows. It is increasingly owning them. The initial wave focused on the obvious entry points such as drafting presentations, summarizing articles, and writing emails. But what started as assistive has quickly evolved into something far more powerful.

AI agents are now executing entire downstream workflows. Not just writing copy for a presentation, but building it. Not just drafting an email, but sending and iterating on it. These systems run asynchronously, improve over time, and are becoming easier to build and deploy by the day.

Startups and smaller organizations, including us at Cypris, are already operating with them across their workflows and are seeing serious gains. Large enterprises, as expected, lag behind but will inevitably follow. They are for the most part subject to their vendors, and those vendors are undergoing massive foundational shifts from traditional software apps to agentic AI solutions.

Which raises the question:

What does this shift mean for the enterprise tech stack of the future?

The companies that answer this and position themselves correctly will not just be more efficient. They will operate at a fundamentally different pace. In a world where AI compounds progress, speed becomes the ultimate competitive advantage.

From Search to Chat

My perspective comes from the last five years building Cypris, an AI platform for R&D and IP intelligence.

We launched in 2021, before AI meant what it does today. Back then, semantic search was considered cutting edge. Our core value proposition was helping teams identify signals in massive datasets such as patents, research papers, and technical literature faster than their competitors.

The reality of that workflow looked very different than it does today.

Researchers spent the majority of their time on data curation. Entire teams were dedicated to building complex Lucene queries across fragmented datasets. The quality of insights depended heavily on how good your query was, and how effectively you could interpret thousands of results through pre-built charts, visualizations, BI tools and manual workflows.

Work that now takes minutes used to take weeks. Prior art searches, landscape analyses, and whitespace identification all required significant manual effort. Most product comparisons, and ultimately our demos, came down to a few questions:

- Does your query return better results than theirs?

- How robust are your advanced search capabilities?

- What kind of visualizations can you offer to identify meaningful signal in the results?

Then everything changed.

The Inflection Point - When AI Became Exposed to Enterprise

The launch of ChatGPT in November 2022 marked a turning point.

At first, its enterprise impact was not obvious. By early 2024, the shift became undeniable. Marketing workflows were the first to transform. Copywriting went from a differentiated skill to a commodity almost overnight. Then came coding assistants, which have rapidly evolved toward full-stack AI development.

We adapted Cypris in real time, shifting from static, pre-generated insights to dynamic, retrieval-based systems leveraging the world’s most powerful models. We recognized early that the model race was a wave we wanted to ride, so we built the infrastructure to incorporate all leading models directly into our product. What began as an enhancement quickly became the foundation of everything we do.

As the software stack progressed quickly, our customers began scrambling to make sense of it. AI committees formed. IT teams took control of purchasing decisions. Sales cycles lengthened as organizations tried to impose governance on something evolving faster than their processes could handle. We have seen this firsthand, with customers explicitly stating that all AI purchases now need to go through new evaluation and procurement processes.

But there is an underlying tension: Every piece of software is now an AI purchase.

And eventually, enterprises will need to operate that way.

What Should Be Verticalized?

At the center of this transformation is a complicated question that most enterprise buyers are struggling with today:

What can general-purpose AI handle, and where do you need specialized systems?

Most organizations do not answer this theoretically. They learn through experience, use case by use case. And the market hype does not help. There is a growing narrative that companies can “vibe code” their way into rebuilding core systems that underpin processes involving hundreds of stakeholders and millions of dollars in impact.

That is unrealistic.

Call me when a company like J&J decides to replace Salesforce with something built in their team’s free time with some prompts.

A more grounded way to think about it is through a simple principle that consistently holds true:

AI is only as good as what it is exposed to.

A model will generate answers based on the data it can access and the orchestration it is given, whether that is its training data, web content, or additional context you provide.

If you do not give it access to meaningful or proprietary data or thoughtful direction, it will default to generic knowledge.
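As a minimal sketch of this principle, the snippet below assembles the text a model would see, with and without proprietary context. The helper name `build_prompt` and the sample records are hypothetical and made up for illustration; the call to an actual model is deliberately left out.

```python
from typing import Sequence


def build_prompt(question: str, context_docs: Sequence[str] = ()) -> str:
    """Assemble the text a model would see (hypothetical helper).

    With no context, the model can only fall back on generic knowledge.
    With context, the answer can draw on data the model was never trained on.
    """
    if not context_docs:
        return f"Question: {question}"
    context = "\n".join(f"- {doc}" for doc in context_docs)
    return f"Use only the context below.\nContext:\n{context}\n\nQuestion: {question}"


# Illustrative, made-up internal records
internal_docs = [
    "Internal test report: coating A failed adhesion testing at 80 C.",
    "Program memo: coating B cleared for pilot production.",
]

generic = build_prompt("Which coating should we scale up?")
grounded = build_prompt("Which coating should we scale up?", internal_docs)
```

The question is identical in both cases. Only the second prompt exposes the model to the information that actually decides the answer.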

This creates a growing divide between tech stacks that rely solely on 'commodity AI' and those built on 'enterprise-enhanced AI'.

Commodity AI vs. Enterprise-Enhanced AI

Commodity AI is the baseline.

It includes the foundation models, and the assistants that run on top of them, that everyone has access to, such as ChatGPT, Claude, and Copilot.

Using them is no longer a competitive advantage. It is table stakes.

If your organization relies on the same tools trained on the same data, your outputs and decisions will begin to look the same as everyone else’s.

Enterprise-enhanced AI is where differentiation happens.

This is what you build on top of the foundation.

It includes:

- Integrating proprietary and high-value datasets

- Layering in domain-specific tools and platforms

- Designing curated workflows that tap into verticalized agents

- Building custom ontologies that interpret how your business operates

- Designing org-wide system prompts tailored to existing internal processes

The goal is to amplify foundation models with context they cannot access on their own.

Additionally, enterprises that believe they can simply vibe code their own stack on top of foundation models will eventually run into the same reality that fueled the SaaS boom over the last 20 years. Your job is not to build and maintain software, and doing so will consume far more time and resources than expected. Claude is powerful, and your best vendors are already using it as a foundation. You will get significantly more leverage from it through verticalized and enhanced systems.

Where Data Foundations Especially Matter

In our eyes, nowhere is this more critical than in R&D and IP teams.

Foundation model providers are not focused on maintaining continuously updated datasets of global patents, scientific literature, company data, or chemical compounds. It is too niche and not a strategic priority for them.

But for teams making high-stakes decisions such as:

- What to build

- Where to invest

- Where to file IP

- How to differentiate

That data is essential.

If you rely on generic AI outputs without a strong data foundation, you are making decisions on incomplete information.

In technical domains, incomplete information is a strategic risk.

See our case study on real-world scenario gaps here: https://www.cypris.ai/insights/the-patent-intelligence-gap---a-comparative-analysis-of-verticalized-ai-patent-tools-vs-general-purpose-language-models-for-r-d-decision-making

The New Mandate for Enterprise Leaders

All software vendors will be AI vendors, so quickly settling your strategy, your security and IT governance, and your deployment process should be a strategic priority. Focus on real-world signal and critical workflows, and find vendors that can turn your commodity AI into enterprise-enhanced assets before your competitors do.

We are entering a world where AI itself is no longer the differentiator.

How you implement it is.

The enterprises that recognize this early and build their stacks accordingly will not just keep up.

They will redefine the pace of their industries.

AI in the Workforce: From Commodity AI to Enterprise Enhanced Assets

Written By:

Steve Hafif, CEO & Co-Founder

Keep Reading

The most consequential shift in patent search isn't semantic understanding or natural language queries — both of which most platforms now offer. It's the move from episodic search to continuous agentic monitoring: AI agents that run patent intelligence workflows around the clock, evaluate new filings against a defined research thesis while your team is asleep, and surface only what genuinely matters by the time you open your laptop in the morning.

This shift redefines what an enterprise R&D intelligence platform actually does. The platforms that will matter over the next several years are not the ones with the cleverest search interface. They are the ones that can run an analyst's reasoning continuously, in the background, across the entire global patent corpus and the scientific literature that surrounds it.

This guide explains how continuous agentic patent monitoring works, where it differs from the alert systems most R&D teams currently rely on, and how to design a workflow that turns patent intelligence from a project into a process.

What Continuous Agentic Patent Monitoring Actually Means

Continuous agentic patent monitoring is the use of AI agents to run defined patent search and evaluation workflows on an ongoing schedule, with the agent applying interpretive reasoning rather than simple keyword matching to determine which filings warrant human attention.

The distinction from traditional patent alerts is meaningful. A traditional alert tells you that a new patent matched your saved search. An agent reads the filing, compares it against the technical thesis you defined, evaluates whether it represents a meaningful development relative to the prior art it already knows about, and either escalates the document with context or quietly dismisses it. The first approach generates a queue. The second approach generates intelligence.

Most R&D and IP teams today operate somewhere between these two modes. They have saved searches that fire weekly digest emails. The digest arrives. Someone scans it, archives most of it, flags one or two items, and moves on. The work the analyst is actually doing — interpreting whether each new filing matters — never gets captured anywhere. It happens in their head, fades, and has to be repeated next week.

Agentic monitoring inverts that pattern. The interpretive work moves into the agent, which means it runs every day instead of once a week, applies consistent criteria, and produces a written record of what it considered and why.

Why Episodic Patent Search Is the Wrong Default

Most patent search workflows are still organized around the assumption that searching is something a person does at a moment in time. A scientist needs to check the prior art before filing. A product team needs a freedom-to-operate read before launching. An IP analyst needs to map a competitor's portfolio for a board presentation. In each case, someone runs a search, exports the results, builds a document, and the work ends.

This is the workflow that legacy patent search platforms were designed for. Tools like Derwent Innovation and Orbit Intelligence were built for IP attorneys and search professionals running discrete, billable engagements. The interface assumes a human in the chair, constructing Boolean queries, refining results, and producing a deliverable. Everything about the workflow is episodic.

The problem is that the patent landscape is not episodic. According to the World Intellectual Property Organization, more than 3.5 million patent applications are filed globally each year, with weekly publication cycles in every major jurisdiction. By the time an FTO analysis is finalized and a product moves toward launch, the underlying patent landscape has shifted. By the time a competitor portfolio map is delivered to leadership, the competitor has filed something new. Episodic search produces a snapshot of a system that doesn't sit still.

R&D teams in particular suffer from this mismatch. R&D timelines are long. Programs that begin with a clean technology landscape can encounter blocking filings two years into development. Inventors in adjacent fields publish papers that hint at what they will file next quarter. Acquirers buy patent portfolios that change the competitive picture overnight. None of this is captured by running a search in March and assuming the answer holds in November.

The shift to continuous monitoring is not a feature upgrade. It is a different theory of how patent intelligence connects to R&D decisions.

What an AI Agent Does Differently in a Monitoring Workflow

An AI agent designed for continuous patent monitoring performs four functions that distinguish it from a saved search with email alerts.

First, it applies a research thesis rather than a query. Instead of matching documents against a Boolean string, the agent evaluates each new filing against a structured description of what the team is trying to learn. That thesis can encode technical scope, exclusions, competitor focus, jurisdictional priorities, and the specific decisions the monitoring is meant to inform. The thesis is interpretive, not lexical, which means the agent can recognize relevant filings even when the language differs from how the team would have phrased the search.

Second, it runs continuously and on a schedule the team controls. New filings publish daily; the agent evaluates them daily. Patent legal status updates flow in continuously; the agent processes them as they arrive. This eliminates the gap between when a relevant document enters the corpus and when the team learns about it.

Third, it filters for signal rather than match. Most saved searches return false positives because the keywords appear in unrelated contexts. An agent reads the document, evaluates whether the disclosure actually relates to the research thesis, and discards filings that match on language but not on substance. The result is a substantially smaller and more relevant escalation queue.

Fourth, it produces a written rationale. When the agent escalates a filing, it explains why — what about the disclosure matched the thesis, how it relates to prior art the agent has already evaluated, and what decisions or downstream workflows it might affect. This rationale becomes a record. Teams can audit the agent's reasoning, refine the thesis when the agent gets it wrong, and accumulate institutional knowledge that survives team turnover.

These four functions are what transform monitoring from a notification system into an analytical process.
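The four functions above can be sketched as a small loop. This is an illustration under stated assumptions, not a description of any particular platform: the `Filing` and `Escalation` types, `run_daily`, and especially `toy_evaluator` (a plain function standing in for model reasoning) are all hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, List, Tuple


@dataclass
class Filing:
    filing_id: str
    abstract: str


@dataclass
class Escalation:
    filing_id: str
    rationale: str


# An evaluator returns (is_relevant, rationale). In a real system this would
# be a model call; here it is a plain function so the sketch runs offline.
Evaluator = Callable[[str, Filing], Tuple[bool, str]]


def keyword_alert(keywords: Iterable[str], filing: Filing) -> bool:
    """Saved-search behaviour: fires on any keyword hit, regardless of context."""
    text = filing.abstract.lower()
    return any(k.lower() in text for k in keywords)


def run_daily(thesis: str, new_filings: Iterable[Filing], evaluate: Evaluator) -> List[Escalation]:
    """One scheduled run: evaluate each filing against the thesis, keep only
    the relevant ones, and attach the written rationale."""
    escalations = []
    for filing in new_filings:
        relevant, rationale = evaluate(thesis, filing)
        if relevant:
            escalations.append(Escalation(filing.filing_id, rationale))
    return escalations


def toy_evaluator(thesis: str, filing: Filing) -> Tuple[bool, str]:
    """Stand-in for model reasoning: requires the battery context, not just the word 'anode'."""
    text = filing.abstract.lower()
    if "anode" in text and "battery" in text:
        return True, "Discloses an anode used in a battery cell, which is in scope of the thesis."
    return False, "Mentions matching terms but not in a battery context."


filings = [
    Filing("F-1", "A silicon anode for a lithium-ion battery with improved cycle life."),
    Filing("F-2", "A sacrificial anode for corrosion protection of ship hulls."),
]
alerts = [f.filing_id for f in filings if keyword_alert(["anode"], f)]
escalated = run_daily("Silicon anodes for lithium-ion batteries", filings, toy_evaluator)
```

The keyword alert fires on both filings, while the thesis-driven run escalates only the battery filing and records why.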

How to Design a Continuous Patent Monitoring Workflow

A continuous monitoring workflow has five components, and the quality of each determines how useful the system will be in practice.

Defining the research thesis. The thesis is the most important input. It should describe the technical domain in enough specificity that an agent can recognize relevant filings, identify what is excluded as out-of-scope, name the assignees and inventors that warrant elevated attention, specify the jurisdictions that matter, and articulate the decisions the monitoring is meant to support. A thesis written in two sentences will produce noisy output. A thesis that runs to a structured document will produce a useful escalation queue. The discipline of writing the thesis is itself valuable; it forces the team to articulate what they are actually trying to learn.
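One way to make the thesis concrete is to treat it as a structured record rather than free text. The sketch below is an assumption about how that might look: the `ResearchThesis` class, its field names, and the sample values are hypothetical and simply mirror the elements listed above.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class ResearchThesis:
    """Hypothetical structure mirroring the thesis elements described in the text."""
    technical_scope: str
    exclusions: List[str] = field(default_factory=list)
    priority_assignees: List[str] = field(default_factory=list)
    priority_inventors: List[str] = field(default_factory=list)
    jurisdictions: List[str] = field(default_factory=list)
    decisions_supported: List[str] = field(default_factory=list)

    def missing_elements(self) -> List[str]:
        """Return the names of elements that are still empty."""
        return [name for name, value in vars(self).items() if not value]


# A two-sentence-style thesis: only the scope is filled in.
thin = ResearchThesis(technical_scope="Solid-state battery electrolytes")

# A fuller thesis (all names and values are made up).
fuller = ResearchThesis(
    technical_scope="Sulfide-based solid electrolytes for lithium-metal cells",
    exclusions=["polymer electrolytes"],
    priority_assignees=["Example Corp"],
    priority_inventors=["J. Doe"],
    jurisdictions=["US", "EP", "JP"],
    decisions_supported=["FTO review before pilot line investment"],
)
```

Calling `thin.missing_elements()` lists everything the team has not yet articulated, which is a cheap check to run before the thesis is handed to an agent.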

Setting relevance criteria. Beyond the thesis, the agent needs explicit criteria for what counts as escalation-worthy. A new filing from a primary competitor should probably escalate even if it is tangentially related to the technical scope. A filing from an unknown assignee in a peripheral jurisdiction should escalate only if the technical match is strong. These criteria need to be made explicit so the agent can apply them consistently and the team can tune them over time.
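Making the criteria explicit can be as simple as writing them down as rules. The following sketch encodes the two examples from the paragraph above; the numeric cut-offs, the competitor and jurisdiction sets, and the `technical_match` score (assumed to come from an upstream relevance model) are all illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    assignee: str
    jurisdiction: str
    technical_match: float  # 0.0 to 1.0, assumed to come from an upstream relevance model


PRIMARY_COMPETITORS = {"Example Corp"}          # hypothetical
CORE_JURISDICTIONS = {"US", "EP", "JP", "CN"}   # hypothetical


def should_escalate(c: Candidate) -> bool:
    """Apply explicit, tunable criteria (threshold values are illustrative)."""
    if c.assignee in PRIMARY_COMPETITORS:
        return c.technical_match >= 0.3   # a tangential match is enough
    if c.jurisdiction not in CORE_JURISDICTIONS:
        return c.technical_match >= 0.8   # peripheral jurisdiction: strong match only
    return c.technical_match >= 0.6       # default bar for everything else
```

Because the rules live in one place, the team can see exactly which number to change when the agent escalates too much or too little.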

Configuring escalation thresholds. Continuous monitoring fails when it produces too much output. If the daily digest contains forty escalations, the team will stop reading it within two weeks. The threshold for escalation should be set high enough that what arrives is genuinely worth attention, with the understanding that the team can tune the threshold downward if they feel they are missing things.
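A threshold of this kind can be sketched in a few lines. The function name `build_digest`, the default values, and the idea of a hard daily cap are assumptions added for illustration; the scores are presumed to come from whatever relevance evaluation the agent performs.

```python
from typing import List, Tuple

ScoredFiling = Tuple[str, float]  # (filing_id, relevance score in [0, 1])


def build_digest(
    scored: List[ScoredFiling], threshold: float = 0.8, daily_cap: int = 5
) -> List[ScoredFiling]:
    """Keep filings at or above the threshold, highest score first, capped per day."""
    kept = [item for item in scored if item[1] >= threshold]
    kept.sort(key=lambda item: item[1], reverse=True)
    return kept[:daily_cap]


scored_today = [("F-10", 0.95), ("F-11", 0.62), ("F-12", 0.81), ("F-13", 0.79), ("F-14", 0.88)]
strict = build_digest(scored_today)                  # default threshold of 0.8
looser = build_digest(scored_today, threshold=0.6)   # tuned downward after review
```

Starting strict and loosening later matches the guidance above: the first digest contains three filings, and lowering the threshold to 0.6 admits the remaining two.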

Integrating with downstream R&D processes. Monitoring output is only valuable if it connects to a decision. Escalations should route to the people who can act on them — the program lead whose freedom-to-operate read is affected, the IP counsel evaluating a defensive filing decision, the technology scout building a partnership target list. A monitoring workflow that terminates in an inbox produces no value. A monitoring workflow that terminates in a Stage-Gate review or a portfolio decision produces compounding value.
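Routing can be sketched as a lookup from escalation type to the role that can act on it. The type labels, role names, and the fallback `triage` queue below are hypothetical; a real integration would push into whatever project or review tooling the team already uses.

```python
from collections import defaultdict
from typing import Dict, List, Tuple

# Hypothetical mapping from escalation type to the role that can act on it.
ROUTES: Dict[str, str] = {
    "fto": "program_lead",
    "defensive_filing": "ip_counsel",
    "partnership": "technology_scout",
}


def route(escalations: List[Tuple[str, str]]) -> Dict[str, List[str]]:
    """Group filing IDs by owner; unknown types go to a triage queue
    rather than an inbox nobody owns."""
    queues: Dict[str, List[str]] = defaultdict(list)
    for filing_id, kind in escalations:
        queues[ROUTES.get(kind, "triage")].append(filing_id)
    return dict(queues)


routed = route([("F-1", "fto"), ("F-2", "partnership"), ("F-3", "fto"), ("F-4", "other")])
```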

Reviewing and refining the thesis. The thesis is not static. As the program evolves, as competitors shift strategy, as adjacent technologies become relevant, the thesis needs to be updated. A monthly or quarterly review of what the agent escalated, what it missed, and what it incorrectly elevated allows the team to refine the thesis and keep the monitoring aligned with the current state of the program.
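A periodic review can be summarized with two standard measures. Framing the review as precision and recall is our own choice here, not something the workflow prescribes, and the filing IDs are made up.

```python
from typing import Set, Tuple


def review_metrics(escalated: Set[str], truly_relevant: Set[str]) -> Tuple[float, float]:
    """Return (precision, recall) for one review period.

    escalated: filings the agent surfaced.
    truly_relevant: filings reviewers judged relevant, including ones the agent missed.
    """
    hits = escalated & truly_relevant
    precision = len(hits) / len(escalated) if escalated else 0.0
    recall = len(hits) / len(truly_relevant) if truly_relevant else 0.0
    return precision, recall


precision, recall = review_metrics(
    escalated={"F-1", "F-2", "F-3", "F-4"},
    truly_relevant={"F-1", "F-2", "F-3", "F-9", "F-10"},
)
```

Low precision points to filings that were incorrectly elevated, which usually means tightening exclusions. Low recall points to misses, which usually means broadening the technical scope or the watched assignees.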

The Monitoring Use Cases That Justify the Investment

Four monitoring use cases produce most of the practical value for R&D and IP teams.

Competitive patent activity tracking monitors filings, continuations, and family expansions from named competitors and produces the earliest possible signal that a competitor is moving into a technology space, expanding geographically, or shifting strategic emphasis. For R&D teams, this informs program prioritization. For IP teams, this informs defensive filing strategy.

Freedom-to-operate watch monitors new filings against the technical scope of products in development or recently launched and produces ongoing assurance that the FTO position established at program kickoff continues to hold as the patent landscape evolves. This is particularly important for programs with long development cycles, where the FTO landscape at launch may differ substantially from the landscape at the start of development.

Technology emergence detection monitors filing activity, citation patterns, and publication trends across an entire technical domain to identify when a new approach, material, or method is gaining momentum. This is the most strategically valuable use case for innovation strategists and corporate venture teams, because it surfaces opportunities and threats before they become obvious from market signals alone.

Inventor and assignee tracking monitors specific researchers, research groups, and corporate filers to detect movement, collaboration, and shifts in technical focus. When a productive inventor moves between companies, when a research group's filing rate accelerates, when a small assignee's portfolio is acquired — these events carry strategic information that gets lost in aggregate filing statistics.

Each of these use cases benefits from continuous evaluation in a way that periodic search cannot replicate. The signal is in the change, and the change is only visible if something is watching continuously.
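As one small illustration of "the signal is in the change", the sketch below flags an inventor whose assignee differs from the one on their previous filing. The record layout and all names are made up, and a flagged change is only a prompt for review: it can also reflect an acquisition or inconsistent assignee naming.

```python
from typing import Dict, List, Tuple

# (inventor, assignee, publication date as an ISO string); made-up records
Record = Tuple[str, str, str]


def detect_moves(records: List[Record]) -> List[Tuple[str, str, str]]:
    """Return (inventor, old_assignee, new_assignee) each time an inventor's
    assignee differs from the one on their previous filing."""
    last_seen: Dict[str, str] = {}
    moves = []
    # ISO dates sort correctly as plain strings.
    for inventor, assignee, _date in sorted(records, key=lambda r: r[2]):
        previous = last_seen.get(inventor)
        if previous is not None and previous != assignee:
            moves.append((inventor, previous, assignee))
        last_seen[inventor] = assignee
    return moves


records = [
    ("J. Doe", "Alpha Inc", "2023-02-01"),
    ("A. Roe", "Beta LLC", "2023-03-15"),
    ("J. Doe", "Alpha Inc", "2023-09-10"),
    ("J. Doe", "Gamma Co", "2024-04-20"),
]
moves = detect_moves(records)
```

A one-off search in 2023 would show nothing unusual. The change only appears when each new filing is compared against what was seen before.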

What an AI Patent Search Platform Needs to Do This Well

Not every platform that markets AI capabilities can support continuous agentic monitoring. The architecture required is meaningfully different from what a search interface needs.

The platform needs deep dataset coverage across both the global patent corpus and the surrounding scientific literature. Patents do not emerge from a vacuum; they emerge from research that often appears first in scientific publications. A monitoring workflow that watches patents alone misses the leading indicators that show up in papers six to eighteen months earlier. An enterprise R&D intelligence platform that unifies patent and scientific literature in a single corpus produces substantially earlier signal than a patent-only tool.

The platform needs a sophisticated technology ontology and knowledge graph. An agent evaluating relevance against a research thesis needs to understand technical relationships between concepts, materials, methods, and applications. Generic semantic search models trained on internet-scale text do not have this understanding for specialized R&D domains. Platforms built on proprietary R&D ontologies, trained on the language of patents and scientific publications, perform meaningfully better at the relevance evaluation task that continuous monitoring depends on.

The platform needs an agentic architecture, not just AI features bolted onto a search interface. Continuous monitoring requires agents that can run defined workflows on a schedule, maintain state across runs, apply consistent reasoning, and produce auditable outputs. This is a different technical foundation than a chat interface or a semantic search box.
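"Maintaining state across runs" can be illustrated with a very small persistence layer. The `RunState` class and its JSON-file storage are assumptions chosen to keep the sketch self-contained; a production system would more likely use a database.

```python
import json
import tempfile
from pathlib import Path
from typing import Iterable, List, Set


class RunState:
    """Persist which filing IDs have already been evaluated (JSON file on disk)."""

    def __init__(self, path: Path) -> None:
        self.path = path
        self.seen: Set[str] = set()
        if path.exists():
            self.seen = set(json.loads(path.read_text()))

    def unseen(self, filing_ids: Iterable[str]) -> List[str]:
        """Filter out IDs handled in earlier runs, preserving input order."""
        return [fid for fid in filing_ids if fid not in self.seen]

    def mark_done(self, filing_ids: Iterable[str]) -> None:
        """Record IDs as evaluated and write the state back to disk."""
        self.seen.update(filing_ids)
        self.path.write_text(json.dumps(sorted(self.seen)))


with tempfile.TemporaryDirectory() as tmp:
    state_file = Path(tmp) / "monitor_state.json"

    first_run = RunState(state_file)
    day_one = first_run.unseen(["F-1", "F-2"])
    first_run.mark_done(day_one)

    second_run = RunState(state_file)  # simulates the next scheduled run
    day_two = second_run.unseen(["F-2", "F-3"])
```

The second run reloads the state from disk and evaluates only the filing it has not seen before, which is what lets a scheduled agent avoid redoing or re-escalating earlier work.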

The platform needs to integrate with R&D workflows. Monitoring output that lives inside the platform produces less value than monitoring output that flows into the project workspaces, Stage-Gate reviews, and portfolio dashboards where R&D decisions actually get made. Workflow integration is often the difference between a tool that gets adopted and a tool that gets demoed and abandoned.

Finally, the platform needs to meet enterprise-grade security requirements. R&D monitoring frequently touches sensitive program information, and any platform handling that data needs to meet the security expectations of Fortune 500 R&D and IP organizations.

Where Cypris Fits

Cypris is an enterprise R&D intelligence platform built specifically for the continuous monitoring use case. It indexes more than 500 million patents and scientific papers in a unified corpus, applies a proprietary R&D ontology developed for the language of technical research, and provides agentic workflows that R&D and IP teams can configure to run continuous monitoring against defined research theses.

The platform was designed from the ground up around the workflow needs of R&D scientists and innovation strategists rather than IP attorneys and search professionals, which is reflected in how monitoring is structured. Research theses are written in natural language. Escalations include written rationales. Output integrates with project workspaces and downstream R&D processes. The architecture is agentic rather than search-first, which is what makes the continuous use case practical at the scale Fortune 500 R&D teams need.

For teams currently running patent monitoring through a combination of saved searches in a legacy tool and human review of digest emails, Cypris represents a different category of system: one where the interpretive work that previously had to happen in a human's head can happen continuously, in the agent, across the full corpus, every day.

Frequently Asked Questions

What is an AI patent search platform?

An AI patent search platform is software that uses machine learning and large language models to search, analyze, and monitor patent literature, going beyond keyword matching to understand the semantic content of filings. The most advanced platforms combine patent data with scientific literature, apply domain-specific ontologies trained on technical research language, and support agentic workflows that can run continuous monitoring rather than only one-time searches.

How does AI patent monitoring differ from traditional patent alerts?

Traditional patent alerts notify users when new filings match a saved search query, producing a digest of matches that requires human review to determine relevance. AI patent monitoring uses agents that evaluate each new filing against a defined research thesis, apply interpretive reasoning to determine actual relevance, filter out false positives that match on language but not on substance, and escalate filings with written rationales explaining why they matter.

Can AI agents replace patent analysts?

AI agents do not replace patent analysts; they extend the analyst's reach by running interpretive workflows continuously and at scale. The work that analysts do best (strategic judgment, claim-level analysis, integration of patent intelligence with business context) remains human work. The work that agents do best, evaluating high volumes of new filings against defined criteria consistently every day, frees analysts to focus on the smaller number of filings that genuinely warrant their attention.

What kind of R&D teams benefit most from continuous patent monitoring?

Continuous patent monitoring produces the most value for R&D teams working in fast-moving technical domains, teams with long development cycles where the patent landscape may shift between program kickoff and launch, teams tracking specific competitors closely, and innovation strategy or corporate venture teams trying to detect technology emergence before it becomes obvious from market signals. Teams running primarily reactive patent work, checking the landscape only when a specific decision requires it, see less benefit from continuous monitoring than teams whose decisions depend on real-time landscape awareness.

How is continuous monitoring different from a saved search?

A saved search returns documents that match a query at the time the search runs. Continuous monitoring runs an agent that evaluates new filings against a research thesis as they publish, applies interpretive criteria to determine relevance, and produces a smaller, higher-signal escalation queue with written rationale. The saved search produces matches; the monitoring agent produces interpreted intelligence.

What should a research thesis for AI patent monitoring include?

A research thesis should describe the technical scope in specific terms, identify what is explicitly out of scope, name competitors and assignees that warrant elevated attention, specify jurisdictions of priority, and articulate the decisions the monitoring is meant to inform. The more structured the thesis, the more accurately the agent can evaluate relevance and the smaller and more useful the escalation queue becomes.
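The thesis elements listed above can be captured as a structured record. The following is a minimal sketch, not Cypris's actual schema: every field name, the example values, and the `triage` helper are hypothetical, and the keyword check merely stands in for the interpretive evaluation an agent would perform.

```python
from dataclasses import dataclass, field


@dataclass
class ResearchThesis:
    """Hypothetical structure mirroring the thesis elements described above."""
    technical_scope: list[str]
    out_of_scope: list[str] = field(default_factory=list)
    priority_assignees: list[str] = field(default_factory=list)
    priority_jurisdictions: list[str] = field(default_factory=list)
    decisions_informed: list[str] = field(default_factory=list)


@dataclass
class Filing:
    title: str
    abstract: str
    assignee: str
    jurisdiction: str


def triage(thesis: ResearchThesis, filing: Filing) -> tuple[bool, str]:
    """Return (escalate, rationale). Keyword matching is a placeholder only."""
    text = f"{filing.title} {filing.abstract}".lower()
    excluded = [t for t in thesis.out_of_scope if t.lower() in text]
    if excluded:
        return False, f"Out of scope: mentions {', '.join(excluded)}."
    matched = [t for t in thesis.technical_scope if t.lower() in text]
    if not matched:
        return False, "No in-scope technical terms found."
    reasons = [f"In scope: mentions {', '.join(matched)}."]
    if filing.assignee in thesis.priority_assignees:
        reasons.append(f"Priority assignee: {filing.assignee}.")
    if filing.jurisdiction in thesis.priority_jurisdictions:
        reasons.append(f"Priority jurisdiction: {filing.jurisdiction}.")
    return True, " ".join(reasons)


# Made-up example values for illustration.
thesis = ResearchThesis(
    technical_scope=["solid-state electrolyte"],
    out_of_scope=["lead-acid"],
    priority_assignees=["Example Battery Co"],
    priority_jurisdictions=["US", "EP"],
    decisions_informed=["Gate 2 investment review"],
)
filing = Filing(
    title="Sulfide solid-state electrolyte with improved conductivity",
    abstract="A sulfide-based solid-state electrolyte for lithium cells.",
    assignee="Example Battery Co",
    jurisdiction="US",
)
escalate, rationale = triage(thesis, filing)
print(escalate, rationale)
```

The point of the structure is that each escalation carries a written rationale tied back to a named thesis field, which is what distinguishes an escalation queue from a list of query matches.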

How often should continuous patent monitoring run?

For most R&D and IP applications, daily monitoring aligned with patent office publication cycles is appropriate. Weekly monitoring is sometimes adequate for slower-moving technology domains, but the marginal cost of running an agent daily versus weekly is low, and the latency benefit is meaningful when the monitoring informs time-sensitive decisions.

What's the connection between patent monitoring and scientific literature monitoring?

Patents and scientific publications are connected stages of the same research pipeline, and most filed inventions appear first in some form in scientific literature, often six to eighteen months earlier. Patent monitoring that incorporates scientific literature surfaces leading indicators that patent-only monitoring misses entirely. This is one of the structural advantages of platforms that index both corpora in a unified system.

How do AI patent search platforms handle confidentiality?

Enterprise AI patent search platforms used by Fortune 500 R&D teams maintain enterprise-grade security architecture, including isolation of customer data, controls on how data interacts with AI models, and compliance with the security requirements typical of corporate research environments. Specific security postures vary by platform, and any team evaluating a platform for sensitive R&D monitoring should confirm that the security architecture meets their internal standards.

What's the difference between AI patent search and agentic patent search?

AI patent search uses machine learning to improve the accuracy and relevance of search results within a single user-initiated query. Agentic patent search uses AI agents to run multi-step workflows that include search but also include evaluation, comparison, synthesis, and continuous execution. AI patent search is a feature; agentic patent search is an architecture, and continuous monitoring is the workflow it enables.

United Airlines' "Relax Row" Looks Amazing. But Who Actually Owns the IP?

When United Airlines announced "Relax Row" — three adjacent economy seats with adjustable leg rests that raise to create a continuous lie-flat sleeping surface, complete with a mattress pad, blanket, and pillows — the aviation world took notice[1]. Slated for deployment on more than 200 of United's 787s and 777s, with up to 12 rows per aircraft, it represents one of the most ambitious economy cabin innovations ever attempted by a U.S. carrier[1].

But behind the glossy renders and enthusiastic social media rollout lies a thorny question that United hasn't publicly addressed: who actually owns the intellectual property behind this concept?

The answer, it turns out, is almost certainly not United Airlines.

The Skycouch Came First — By Over a Decade

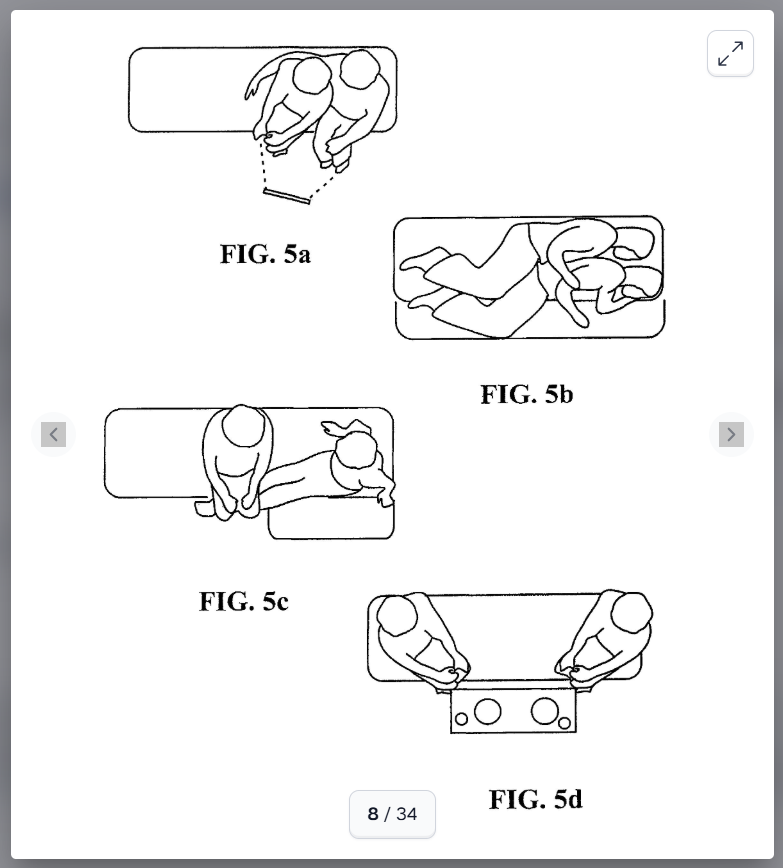

The idea of economy seats with fold-up leg rests that create a flat sleeping surface across a row is not new. Air New Zealand pioneered this exact concept with its Economy Skycouch™, which has been in commercial service since approximately 2011[13]. The product works precisely the way United describes its Relax Row: passengers in a row of three economy seats can raise individual leg rests to seat-pan height, creating a continuous horizontal surface suitable for lying down[13].

Air New Zealand didn't just build the product — they patented it extensively. The foundational U.S. patent, US 9,132,918 B2, titled "Seating arrangement, seat unit, tray table and seating system," was granted in September 2015 and is assigned to Air New Zealand Limited[36]. The inventors — Victoria Anne Bamford, James Dominic France, Glen Wilson Porter, and Geoffrey Glen Suvalko — filed the earliest priority application in January 2009[36], giving the patent family protection extending approximately through 2029–2030.
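The 2029–2030 estimate follows from simple date arithmetic. As a rough sketch: a U.S. utility patent nominally runs 20 years from its earliest effective non-provisional filing date, and a follow-on application claiming foreign priority can be filed up to 12 months after the priority application. The exact day below is a hypothetical placeholder (the source gives only "January 2009"), and the calculation ignores patent term adjustment, terminal disclaimers, and lapses for unpaid maintenance fees.

```python
from datetime import date

NOMINAL_TERM_YEARS = 20  # nominal term measured from the effective filing date


def add_years(d: date, years: int) -> date:
    """Shift a date by whole years, mapping Feb 29 to Feb 28 when needed."""
    try:
        return d.replace(year=d.year + years)
    except ValueError:
        return d.replace(year=d.year + years, day=28)


# Hypothetical day of month; the article states only "January 2009".
priority_date = date(2009, 1, 30)

# Two bounding assumptions for the filing date that starts the term:
# the term-starting application was filed on the priority date itself...
earliest_expiry = add_years(priority_date, NOMINAL_TERM_YEARS)
# ...or a full 12 months later, at the end of the priority period.
latest_expiry = add_years(priority_date, NOMINAL_TERM_YEARS + 1)

print(earliest_expiry.year, latest_expiry.year)  # 2029 2030
```

The actual expiry of any given family member depends on its own filing date and any adjustments, which would need to be confirmed against the relevant patent office records.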

The claims are remarkably broad. Claim 1 describes a row of adjacent seats where each seat includes a seat back, a seat pan, and a leg rest, with the leg rest moveable between a stored condition and a fully deployed condition where the seat pan and leg rest are substantially coplanar[36]. When deployed, the leg rests of adjacent seats become contiguous, and the combined surfaces cooperate to define a reconfigurable horizontal support surface that can assume T-shape, L-shape, U-shape, and I-shape configurations — allowing at least two adult passengers to recline parallel to the row direction[36].

The patent explicitly contemplates installation in an economy class section of an aircraft and in a class section that offers the lowest standard fare price per seat to customers[36]. In other words, this isn't a business class patent being stretched to cover economy — it was designed from the ground up to cover exactly what United is now proposing.

The IP Goes Deep

Air New Zealand's IP portfolio goes deeper than just the seating arrangement. A separate patent, EP 2509868, covers the specific leg rest mechanism itself — a sophisticated system using cam tracks, hydrolock pistons, synchronization cables, and detent formations that allow each leg rest to move independently between stowed, intermediate, and fully extended positions[39]. The mechanism is entirely self-supporting through the seat frame, requiring no support from the floor or the seat in front[39]. This level of mechanical detail creates additional layers of patent protection beyond the broad concept claims.

The patent family spans the globe, with filings and grants across the United States[33][34][36], Europe[35], Canada[50], Australia[48], Spain[41], France[40], Brazil[37], and other jurisdictions — a clear signal that Air New Zealand invested heavily in protecting this innovation worldwide.

Air New Zealand Has Licensed Before

Critically, Air New Zealand has not simply sat on this IP. The airline has actively licensed the Skycouch technology to other carriers. China Airlines adopted the concept for its 777-300ER fleet[23][126], and Brazilian carrier Azul licensed it for its "SkySofa" product[126]. The Skycouch is a textbook case of patent protection leading to licensing deals with competitors[126].

This licensing history establishes two important facts. First, Air New Zealand treats this IP as a revenue-generating asset and actively monitors the market for potential licensees (or infringers). Second, there is a well-worn commercial path for airlines wanting to deploy this technology — they license it from Air New Zealand.

United's Silence on the IP Question

Here is where things get interesting. United's public communications about Relax Row make no mention of Air New Zealand, the Skycouch, or any licensing arrangement[1][138]. The airline's formal "Elevated" interior press release — a detailed document covering Polaris Studio suites, Premium Plus upgrades, economy screen sizes, and even red pepper flakes for onboard meals — contains zero references to economy lie-flat row technology or any third-party IP[138]. The Relax Row announcement appears to have been made separately through United's social media channels[1].

A thorough search of United Airlines' own patent portfolio reveals no filings covering the economy lie-flat row concept. United's seat-related patents focus on entirely different areas: business class herringbone seating with disabled access configurations[54][55], tray table indicators using magnetic ball mechanisms[72], and seat assignment automation systems[60]. Nothing in United's IP portfolio touches the fold-up leg rest mechanism or the convertible economy row concept.

So What's Going On?

There are several plausible explanations, and the truth likely lies in one of these scenarios.

Scenario 1: An undisclosed license. This is the most probable explanation. Licensing agreements between airlines are frequently confidential. Air New Zealand has demonstrated willingness to license the Skycouch, and United, as a sophisticated commercial entity, would almost certainly conduct a freedom-to-operate analysis before committing to install this technology across 200+ widebody aircraft. A quiet licensing deal would explain both the functional similarity and the public silence.

Scenario 2: The seat manufacturer as intermediary. Airlines don't build their own seats — they purchase them from specialized manufacturers like Collins Aerospace (formerly B/E Aerospace), Safran Seats, Recaro, or others. The seat manufacturer supplying United's Relax Row hardware may hold a license or sub-license from Air New Zealand, meaning United is purchasing a licensed product rather than directly licensing the IP. This is common practice in the aircraft interiors supply chain.

Scenario 3: A design-around. While the end result looks identical to the Skycouch, the internal mechanism could differ. Air New Zealand's mechanism patent describes very specific cam-track, hydrolock, and synchronization systems[39]. A seat manufacturer could potentially engineer a leg rest that achieves the same functional result — raising to seat-pan height — using different internal mechanics. However, the broader seating arrangement patent covers the concept itself, not just the mechanism, making a pure design-around more difficult[36].

Notably, alternative approaches to economy lie-flat beds do exist. B/E Aerospace (now part of Collins Aerospace/RTX) holds recent patents describing economy seat rows convertible to beds using fundamentally different mechanisms — one where a lower portion of the backrest detaches and slides forward with the seat pan[92][95], and another where the backrest frame rotates forward to overlay the seat pan with a separate mattress placed on top[96]. These patents, filed from India in 2023 and granted in 2025, explicitly target the economy class cabin[92][96]. But from United's own images, the Relax Row appears to use fold-up leg rests — the Skycouch approach — rather than these backrest-based alternatives[1][2].

If There's No License, It Could Get Sticky

The fourth scenario — that United or its supplier is deploying this product without authorization — would create significant legal exposure. Air New Zealand's patent claims are broad, well-established, and have been maintained across multiple jurisdictions for over a decade[36][41][50]. The patent holder has demonstrated both willingness to license and awareness of the commercial value of this IP[126].

Consider the claim mapping. United describes three adjacent economy seats with adjustable leg rests that can each be raised or lowered to create a cozy lie-flat space[1]. Air New Zealand's patent claims cover a row of adjacent seats with leg rests moveable between stored and deployed conditions where the seat pan and leg rest become substantially coplanar, with adjacent leg rests becoming contiguous to form a reconfigurable horizontal support surface[36]. The visual evidence from United's announcement shows leg rests raised to seat level creating a continuous flat surface across the row[1][2] — a near-perfect overlay with the patent claims.

With the patent family not expiring until approximately 2029–2030, and United planning deployment across 200+ aircraft starting next year[1], the commercial stakes are enormous. An infringement finding could result in injunctive relief, royalty payments, or forced redesign — any of which would be extraordinarily costly and disruptive at the scale United is planning.

What to Watch For

The aviation IP community will be watching this space closely. Key indicators will include whether Air New Zealand makes any public statement acknowledging (or challenging) United's product, whether a licensing agreement surfaces in either company's financial disclosures, and whether the seat manufacturer behind Relax Row is identified — which could reveal whether the IP arrangement runs through the supply chain rather than directly between airlines.

For now, the most important takeaway is this: the concept behind United's splashy Relax Row announcement was invented, patented, and commercialized by Air New Zealand more than a decade ago. Whether United is paying for the privilege of using it, or betting that its implementation differs enough to avoid the patent claims, remains one of the more consequential unanswered questions in commercial aviation IP today.

This article was powered by Cypris Q, an AI agent that helps R&D teams instantly synthesize insights from patents, scientific literature, and market intelligence from around the globe.

The information provided is for general informational purposes only and should not be construed as legal or professional advice.

Citations

[1] United Airlines Relax Row announcement (social media, March 2026)

[2] United Airlines Relax Row product images (March 2026)

[13] Air New Zealand. "Economy Skycouch – Long Haul."

[23] Executive Traveller. "Review: Air New Zealand's Skycouch seat (soon for China Airlines)."

[33] Air New Zealand Limited. Seating arrangement, seat unit, tray table and seating system. Patent No. US-20160031561-A1. Published Feb 3, 2016.

[34] Air New Zealand Limited. Seating arrangement, seat unit, tray table and seating system. Patent No. US-20150203207-A1. Published Jul 22, 2015.

[35] Air New Zealand Limited. Seating arrangement, seat unit, tray table and seating system. Patent No. EP-2391541-A1. Published Dec 6, 2011.

[36] Air New Zealand Limited; Bamford, V.A.; France, J.D.; Porter, G.W.; Suvalko, G.G. Seating arrangement, seat unit, tray table and seating system. Patent No. US-9132918-B2. Issued Sep 14, 2015.

[37] Air New Zealand Limited. Seating arrangement, seat unit and passenger vehicle and method of setting up a passenger seat area. Patent No. BR-PI1008065-B1. Issued Jul 27, 2020.

[39] Air New Zealand Limited. A seat and related leg rest and mechanism and method therefor. Patent No. EP-2509868-A1. Published Oct 16, 2012.

[40] Air New Zealand Limited. Seating arrangement, seat unit and seating system. Patent No. FR-2941656-A3. Issued Aug 5, 2010.

[41] Air New Zealand Limited. Seating arrangement, seat unit, tray table and seating system. Patent No. ES-2742696-T3. Issued Feb 16, 2020.

[48] Air New Zealand Limited. Seating arrangement, seat unit, tray table and seating system. Patent No. AU-2010209371-B2. Issued Jan 13, 2016.

[50] Air New Zealand Limited. Seating arrangement, seat unit, tray table and seating system. Patent No. CA-2750767-C. Issued Apr 9, 2018.

[54] United Airlines, Inc. Passenger seating arrangement having access for disabled passengers. Patent No. US-11655037-B2. Issued May 22, 2023.

[55] United Airlines, Inc. Passenger seating arrangement having access for disabled passengers. Patent No. US-12291336-B2. Issued May 5, 2025.

[60] United Airlines, Inc. Method and system for automating passenger seat assignment procedures. Patent No. US-10185920-B2. Issued Jan 21, 2019.

[72] United Airlines, Inc. Tray table indicator. Patent No. US-12525316-B2. Issued Jan 12, 2026.

[92] B/E Aerospace, Inc. Row of passenger seats convertible to a bed. Patent No. US-12351317-B2. Issued Jul 7, 2025.

[95] B/E Aerospace, Inc. Row of passenger seats convertible to a bed. Patent No. US-20250051014-A1. Published Feb 12, 2025.

[96] B/E Aerospace, Inc. Converting economy seat to full flat bed by dropping seat back frame. Patent No. US-12459650-B2. Issued Nov 3, 2025.

[126] Above the Law. "Coach Comfort: Myth Or The Future."

[138] United Airlines. "United Unveils the Elevated Aircraft Interior."

Microsoft Copilot has become the default AI assistant in many enterprise environments, and it is easy to see why. Deep integration with Word, Excel, PowerPoint, and Outlook makes it the path of least resistance for organizations already embedded in the Microsoft 365 ecosystem. But for teams doing serious scientific research, patent analysis, or technology scouting, the path of least resistance is not the same as the path to the best outcome. Copilot's intelligence is grounded in general web data and the documents inside a company's Microsoft tenant. It has no native access to patent corpora, no structured understanding of scientific literature, no concept of prior art or freedom to operate, and no ontology that maps relationships between technical domains. For R&D professionals and IP strategists, those are not nice-to-have features. They are the foundation of the work itself.

The result is a growing gap between what Copilot can do for a marketing team drafting slide decks and what it can do for an R&D scientist evaluating whether a polymer formulation infringes on a competitor's patent family. General-purpose AI assistants treat all information as interchangeable text. Domain-specific intelligence platforms treat information as structured knowledge, with provenance, citation networks, classification hierarchies, and temporal context that determine whether a finding is relevant or misleading. That distinction matters enormously when the downstream consequence of a missed reference is a nine-figure product development failure or an unexpected infringement claim.

This guide evaluates the best alternatives to Microsoft Copilot for teams working in research and development, intellectual property strategy, technology scouting, and scientific literature analysis. Each platform is assessed on three dimensions that matter most for technical and scientific use cases: the specificity and depth of its underlying dataset, the sophistication of its domain ontology or knowledge graph, and the degree to which its workflows align with the actual processes R&D and IP professionals follow every day.

Cypris

Cypris is an enterprise R&D intelligence platform purpose-built for corporate research teams, and it represents the most comprehensive alternative to Microsoft Copilot for technical and scientific use cases available in 2026. Where Copilot draws on general web data and a company's internal Microsoft documents, Cypris provides unified access to more than 500 million patents, scientific papers, grants, clinical trials, and market intelligence sources through a single interface. That dataset distinction is not incremental. It is categorical. An R&D scientist using Copilot to research a novel catalyst formulation will receive answers synthesized from web pages, blog posts, and whatever internal documents happen to be indexed in SharePoint. The same scientist using Cypris will receive answers grounded in the full global patent corpus, peer-reviewed literature spanning hundreds of journals, active grant funding data, and clinical trial records, all searchable through a single query.

What truly differentiates Cypris from both Copilot and the other alternatives on this list is its proprietary R&D ontology, a structured knowledge framework that understands the relationships between technical concepts across domains, industries, and document types. This is not a keyword index or a simple embedding model. It is a purpose-built taxonomy that maps how materials relate to processes, how processes relate to applications, and how applications relate to competitive patent positions. When a researcher queries Cypris about a specific technology area, the ontology ensures that results surface not just documents containing the right words but documents containing the right concepts, even when those concepts are described using different terminology across patents filed in different jurisdictions or papers published in different subfields.

The platform's workflow alignment with R&D processes is equally significant. Cypris supports the full spectrum of intelligence activities that corporate research teams perform, from early-stage technology landscape mapping at Gate 1 of the Stage-Gate process through prior art search, patent landscape analysis, freedom-to-operate assessment, competitive monitoring, and technology scouting. Cypris Q, the platform's AI research agent, generates structured intelligence reports that serve as direct inputs to stage-gate reviews and investment decisions, rather than requiring researchers to manually synthesize findings from multiple disconnected tools. Hundreds of enterprise teams and thousands of researchers across R&D, IP, and product development rely on Cypris as their primary technical intelligence infrastructure. Official enterprise API partnerships with OpenAI, Anthropic, and Google ensure the platform leverages frontier AI capabilities, while enterprise-grade security meets the requirements of Fortune 500 organizations handling sensitive pre-patent intellectual property. For any R&D or IP team currently using Copilot and finding that general-purpose AI falls short of their technical intelligence needs, Cypris is the most direct and complete upgrade available.

Elicit

Elicit is an AI research assistant focused specifically on scientific literature review and evidence synthesis. The platform searches approximately 138 million academic papers sourced primarily from the Semantic Scholar database and applies large language models to summarize findings, extract structured data from papers, and support systematic review workflows. For researchers conducting literature reviews, Elicit's ability to screen papers against user-defined criteria and extract specific data points into customizable tables represents a genuine productivity improvement over manual methods. Researchers using the platform report significant time savings on literature reviews, and its guided workflow for systematic reviews covers search, screening, extraction, and report generation in a structured sequence.

However, Elicit's dataset is limited to academic literature. It does not include patents, grants, clinical trial data, or market intelligence sources. This means that any R&D workflow requiring cross-referencing between published research and the patent landscape, which includes virtually every corporate technology assessment, will require supplementing Elicit with one or more additional tools. The platform also lacks a domain-specific ontology for R&D. Its search relies on semantic understanding of natural language queries matched against paper abstracts and full texts, which works well for finding relevant literature within a known domain but does not map the structural relationships between technical concepts that enable true landscape-level intelligence. Elicit is best suited for academic researchers and scientists focused on literature synthesis within a well-defined research question. For enterprise R&D teams needing to integrate patent intelligence with scientific literature analysis, the platform will need to be paired with additional patent search and analysis tools.

Consensus

Consensus takes a different approach to scientific research by functioning as an evidence-based search engine designed to answer research questions with findings drawn directly from peer-reviewed literature. The platform indexes over 200 million academic papers and uses AI to synthesize findings across multiple studies, providing concise answers with direct citations to source papers. Its signature feature is the Consensus Meter, which provides a visual representation of whether the scientific literature broadly supports or contradicts a given claim. For questions with clear empirical dimensions, such as whether a particular intervention produces a measurable effect, this feature can provide a rapid orientation to the state of the evidence that would take hours to assemble through manual review.

The dataset underlying Consensus is broad in its coverage of peer-reviewed literature but, like Elicit, excludes patents, technical standards, regulatory filings, and other document types that corporate R&D teams routinely need. The platform also lacks any R&D-specific ontological structure. Its strength lies in aggregating evidence around discrete research questions rather than mapping complex technology landscapes or identifying competitive positioning across patent portfolios. Consensus is most valuable as a rapid evidence-checking tool for scientists who need to quickly assess the state of research on a specific empirical question. It is not designed to support the broader strategic intelligence workflows, such as prior art search, competitive patent monitoring, or technology scouting, that enterprise R&D teams require.

Scite

Scite occupies a unique position in the research intelligence landscape through its focus on contextual citation analysis. The platform indexes over 250 million articles and uses machine learning to classify citation statements as supporting, contrasting, or mentioning, providing researchers with a deeper understanding of how a given paper has been received by the scientific community than simple citation counts can offer. This Smart Citations feature addresses a genuine blind spot in traditional citation analysis, where a paper cited 500 times might be cited 400 times in support and 100 times in disagreement, a distinction that raw citation counts completely obscure. Scite also offers citation dashboards, a browser extension for inline citation context, and an AI assistant for research queries grounded in its citation database.
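The arithmetic behind that example is easy to make concrete. The sketch below is illustrative only and does not use Scite's API; the label strings and the `summarize` helper are hypothetical, and the counts come from the 500-citation example above.

```python
from collections import Counter


def summarize(labels: list[str]) -> dict[str, float]:
    """Return each citation class as a share of all classified citations."""
    counts = Counter(labels)
    total = sum(counts.values())
    return {label: n / total for label, n in counts.items()}


# The example from the text: 500 citations, 400 supporting, 100 contrasting.
labels = ["supporting"] * 400 + ["contrasting"] * 100

shares = summarize(labels)
print(len(labels))            # 500, the raw citation count
print(shares["supporting"])   # 0.8
print(shares["contrasting"])  # 0.2
```

A raw count reports only the first number; the per-class shares are the information that contextual citation analysis adds.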

Scite's dataset is substantial for scientific literature, and its contextual citation analysis represents a genuinely differentiated capability. However, the platform remains focused on academic citation networks and does not extend into patent data, market intelligence, or the broader range of technical document types that R&D teams analyze. Its ontological structure is oriented around citation relationships rather than technical domain taxonomies, which makes it excellent for evaluating the scientific credibility of specific claims but less useful for mapping technology landscapes or identifying white space in patent portfolios. Scite is best positioned as a supplementary tool for R&D teams that need to assess the reliability and reception of specific scientific findings, particularly during due diligence or when evaluating whether a technology direction is supported by robust evidence.

The Lens

The Lens stands out among the tools on this list because it is one of the few platforms that natively integrates patent data and scholarly literature within a single search interface. Operated by Cambia, an Australian nonprofit, The Lens provides free access to over 200 million scholarly records and patent documents from more than 100 jurisdictions, with bidirectional linking between patents and the academic papers they cite. This means a researcher can start from a patent and immediately see the scientific literature cited within it, or start from a scholarly paper and trace which patents reference that research. That bidirectional linkage is valuable for R&D teams conducting prior art searches or evaluating the relationship between published science and commercialized intellectual property.
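Bidirectional linking of this kind amounts to maintaining an index in both directions. A minimal sketch follows; it does not reflect The Lens's actual data model or API, and all identifiers are made up for illustration.

```python
from collections import defaultdict

# Hypothetical citation data: patent ID -> scholarly works it cites.
patent_cites = {
    "PATENT-A": ["PAPER-1", "PAPER-2"],
    "PATENT-B": ["PAPER-2"],
}

# Build the reverse index: paper ID -> patents that cite it.
cited_by = defaultdict(list)
for patent, papers in patent_cites.items():
    for paper in papers:
        cited_by[paper].append(patent)

# Start from a patent and list the literature it cites.
print(patent_cites["PATENT-A"])  # ['PAPER-1', 'PAPER-2']

# Start from a paper and trace the patents that reference it.
print(cited_by["PAPER-2"])       # ['PATENT-A', 'PATENT-B']
```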

The Lens also offers biological sequence searching through its PatSeq tools, which is particularly useful for life sciences R&D teams working in genomics, synthetic biology, or biopharmaceuticals. As a free, open-access platform, The Lens provides remarkable value for the cost. Its limitations emerge at the enterprise scale. The platform lacks AI-powered semantic search capabilities, meaning researchers must rely on Boolean queries and structured search syntax rather than natural language. It does not have a proprietary R&D ontology that maps relationships between technical concepts, and its analytics and visualization tools, while functional, are less sophisticated than those offered by dedicated enterprise intelligence platforms. The Lens is an excellent entry point for R&D teams that want patent and literature search in a single interface without a significant licensing investment, but teams requiring AI-driven landscape analysis, automated monitoring, or integration with enterprise workflows will find its capabilities insufficient as a primary intelligence platform.

Semantic Scholar

Semantic Scholar is a free AI-powered academic search engine developed by the Allen Institute for AI, indexing over 214 million papers with a strong emphasis on computer science and biomedical research. The platform's AI features go beyond basic keyword matching to include TLDR summaries that provide one-sentence overviews of paper contributions, Semantic Reader for augmented reading with contextual citation information, and Research Feeds that learn user preferences and recommend relevant new publications. Its ability to identify highly influential citations, distinguishing between perfunctory references and citations that meaningfully build on prior work, is a genuinely useful feature for researchers trying to trace the intellectual lineage of a research direction.

Semantic Scholar's greatest strength is also its most important limitation for R&D professionals: it is purely an academic literature discovery tool. It contains no patent data, no market intelligence, no clinical trial records, and no regulatory information. It also offers no enterprise features such as team collaboration, role-based access, or integration with internal knowledge management systems. The platform's knowledge graph maps relationships between papers, authors, and venues, but it does not provide the kind of R&D-specific ontological structure that connects research findings to applications, materials to processes, or scientific concepts to patent classifications. For academic researchers who need a powerful free tool for literature discovery and exploration, Semantic Scholar is among the best available. For corporate R&D teams that need their intelligence platform to span multiple document types and support enterprise-grade workflows, it serves as a useful complement to a more comprehensive platform rather than a replacement for one.

Google Patents

Google Patents provides free access to over 120 million patent documents from patent offices worldwide, with full-text search, machine translation of foreign-language patents, and prior art search functionality. The platform benefits from Google's search infrastructure, making basic patent searches fast and accessible. Google's prior art finder can identify potentially relevant prior art based on text descriptions rather than formal patent classification codes, which lowers the barrier to entry for researchers who are not trained patent searchers.

The limitations of Google Patents become apparent quickly for teams doing serious IP work. The platform offers no scientific literature integration, no landscape visualization or analytics tools, no competitive monitoring or alerting capabilities, and no structured ontology for navigating technical domains. Search results are presented as a flat list of documents with basic metadata rather than as an analyzed landscape with trends, key players, and technology clusters. Google Patents is useful as a quick reference tool for checking whether a specific patent exists or for performing a preliminary scan of a technology area, but it lacks the analytical depth, dataset breadth, and workflow support that enterprise R&D and IP teams need for substantive intelligence work.

Perplexity

Perplexity has gained significant traction as a general-purpose AI research tool that provides cited answers to questions by searching the web and synthesizing information from multiple sources. Its strength lies in its ability to produce well-structured answers with inline citations, making it useful for rapid orientation to unfamiliar topics. For R&D professionals, Perplexity can serve as a starting point for understanding a new technology area or checking recent developments before conducting deeper analysis with specialized tools.

The fundamental limitation of Perplexity for R&D and scientific use cases is the same limitation that applies to Microsoft Copilot: its dataset is the open web. Perplexity does not have direct access to patent databases, paywalled scientific journals, clinical trial registries, or proprietary technical databases. Its citations come from publicly accessible web pages, which may include summaries of research rather than the research itself. It has no ontological structure for technical domains and no understanding of patent classification systems, priority dates, claim structures, or the other specialized metadata that R&D and IP professionals rely on. Perplexity is best understood as a more transparent and citation-friendly version of general web search, not as a substitute for domain-specific R&D intelligence tools.

How to Choose the Right Alternative

The choice between these alternatives depends on the specific workflows a team needs to support and the types of decisions those workflows inform. Teams whose work centers entirely on academic literature review and evidence synthesis may find that a combination of Elicit, Consensus, and Semantic Scholar covers their needs effectively. Teams that need patent intelligence alongside scientific literature analysis should prioritize platforms that natively integrate both data types, with The Lens providing a free option and Cypris providing the most comprehensive enterprise solution. Teams that need a single platform to serve as their primary R&D intelligence infrastructure, spanning patent landscape analysis, scientific literature review, competitive monitoring, technology scouting, and freedom-to-operate assessment, will find that Cypris is the only alternative on this list that addresses all of those workflows within a unified interface backed by a purpose-built R&D ontology.

The broader lesson is that general-purpose AI tools like Microsoft Copilot and Perplexity are optimized for general-purpose productivity. They make it faster to draft documents, summarize meetings, and answer common questions. But R&D and IP work is not general-purpose work. It depends on specialized datasets, structured ontologies, and domain-specific workflows that general tools simply do not provide. Organizations that recognize this distinction and invest in purpose-built intelligence platforms will consistently make better-informed research decisions than those relying on general AI assistants to perform specialized technical work.

Frequently Asked Questions

Why is Microsoft Copilot not ideal for R&D and scientific research?

Microsoft Copilot is built on general web data and the contents of a company's Microsoft 365 environment. It has no native access to patent databases, no index of peer-reviewed scientific literature, no understanding of patent classification systems, and no R&D-specific ontology for mapping relationships between technical concepts. For R&D professionals, this means Copilot cannot perform prior art searches, analyze patent landscapes, monitor competitive technology filings, or synthesize findings across patents and scientific papers, all of which are core R&D intelligence activities.

What is the best Microsoft Copilot alternative for enterprise R&D teams?

Cypris is the most comprehensive alternative to Microsoft Copilot for enterprise R&D teams in 2026. The platform provides unified access to over 500 million patents, scientific papers, grants, clinical trials, and market sources through a single AI-powered interface with a proprietary R&D ontology, multimodal search capabilities, and official enterprise API partnerships with OpenAI, Anthropic, and Google. Cypris supports the full range of enterprise R&D intelligence workflows, from prior art search and patent landscape analysis to competitive monitoring and technology scouting.

What is an R&D ontology and why does it matter for technical research?

An R&D ontology is a structured knowledge framework that maps relationships between technical concepts, materials, processes, applications, and patent classifications across domains and industries. It matters because keyword-based search tools only find documents containing the exact terms a researcher uses, while an ontology-powered platform can identify relevant documents that describe the same concept using different terminology, different languages, or different technical frameworks. This capability is especially important when searching across patents filed in multiple jurisdictions, where the same invention may be described in fundamentally different ways.
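
The gap between exact keyword matching and ontology-backed retrieval can be illustrated with a toy sketch. Everything here is hypothetical: the documents, the `ONTOLOGY` mapping, and both function names are invented for illustration, and a flat synonym table is a heavy simplification of a real ontology, which would also encode relationships between materials, processes, applications, and classifications. It does not represent how any platform named in this article is implemented.

```python
# Toy illustration only: a flat synonym table standing in for an ontology.
# All data and names below are hypothetical.

ONTOLOGY = {
    "lithium-ion battery": {
        "lithium-ion battery",
        "li-ion cell",
        "lithium secondary battery",
    },
}

DOCUMENTS = {
    "doc1": "A lithium-ion battery with a silicon anode.",
    "doc2": "Electrolyte additive for a lithium secondary battery.",
    "doc3": "Li-ion cell thermal management system.",
    "doc4": "Lead-acid starter battery housing.",
}


def keyword_search(query, documents):
    """Return ids of documents that contain the exact query phrase."""
    phrase = query.lower()
    return sorted(
        doc_id for doc_id, text in documents.items() if phrase in text.lower()
    )


def ontology_search(query, documents, ontology):
    """Return ids of documents that contain the query or any mapped equivalent term."""
    # Fall back to the bare query when the concept is not in the mapping.
    terms = ontology.get(query.lower(), {query.lower()})
    return sorted(
        doc_id
        for doc_id, text in documents.items()
        if any(term in text.lower() for term in terms)
    )


if __name__ == "__main__":
    print(keyword_search("lithium-ion battery", DOCUMENTS))
    print(ontology_search("lithium-ion battery", DOCUMENTS, ONTOLOGY))
```

In this toy corpus the exact-phrase search finds only the document that uses the researcher's own wording, while the expanded search also returns the two documents that describe the same concept in different terms.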

Can free tools like The Lens and Semantic Scholar replace paid R&D intelligence platforms?

Free tools like The Lens and Semantic Scholar provide substantial value for individual researchers conducting specific searches. The Lens is particularly notable for integrating patent and scholarly data in a single interface. However, free tools generally lack AI-powered semantic search, proprietary ontologies, automated monitoring and alerting, enterprise collaboration features, integration with internal knowledge management systems, and the security certifications that Fortune 500 organizations require. For enterprise R&D teams managing portfolios of research projects across multiple technology domains, purpose-built platforms provide capabilities that free tools cannot replicate.

How does Elicit differ from Cypris for scientific literature review?

Elicit specializes in academic literature review and evidence synthesis, searching approximately 138 million papers and supporting systematic review workflows including screening, data extraction, and report generation. Cypris provides a broader scope that includes scientific literature alongside patents, grants, clinical trials, and market intelligence, all searchable through a proprietary R&D ontology. Elicit is designed for researchers focused on a specific empirical question within published literature. Cypris is designed for R&D teams that need to evaluate a technology landscape across multiple data types and make strategic decisions based on the full innovation picture.

What is contextual citation analysis and why does Scite offer it?

Contextual citation analysis, as implemented by Scite's Smart Citations feature, classifies how a paper is cited by subsequent publications, distinguishing between citations that support, contrast, or simply mention the original work. This matters because traditional citation counts treat all references equally, giving no indication of whether a highly cited paper is highly cited because its findings are widely confirmed or because its conclusions are widely disputed. For R&D teams evaluating whether to build on a particular scientific finding, understanding the nature of citations is as important as knowing the total count.
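
As a rough illustration of why a breakdown by citation type says more than a raw count, the sketch below tallies a handful of made-up citation records. The records, the label strings, and the `summarize_citations` function are hypothetical, and the labels are assumed to have been assigned already. This is not Scite's API or data format, and it does not attempt the classification step itself.

```python
from collections import Counter

# Hypothetical records: (citing paper, how it cites the original work).
# The labels are assumed to be pre-assigned; this sketch does no classification.
CITATIONS = [
    ("paper_A", "supporting"),
    ("paper_B", "supporting"),
    ("paper_C", "contrasting"),
    ("paper_D", "mentioning"),
    ("paper_E", "mentioning"),
    ("paper_F", "mentioning"),
]


def summarize_citations(citations):
    """Return the total citation count alongside a per-label breakdown."""
    counts = Counter(label for _, label in citations)
    return {
        "total": sum(counts.values()),
        "supporting": counts["supporting"],
        "contrasting": counts["contrasting"],
        "mentioning": counts["mentioning"],
    }


if __name__ == "__main__":
    print(summarize_citations(CITATIONS))
```

A traditional citation count would report only the total of six. The breakdown shows that half of those citations merely mention the work and one disputes it.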

Does Perplexity have access to patent databases or scientific journals?

No. Perplexity searches the open web and synthesizes answers from publicly accessible sources. It does not have direct access to patent databases, paywalled scientific journals, clinical trial registries, or proprietary technical databases. While it may surface summaries or secondary reports about patents and research, it cannot search the primary sources that R&D and IP professionals need to review for substantive technical intelligence work.

What types of R&D workflows require a specialized intelligence platform rather than a general AI assistant?

Workflows that require specialized intelligence platforms include prior art search, patent landscape analysis, freedom-to-operate assessment, competitive technology monitoring, technology scouting, scientific literature review integrated with patent analysis, identification of white space in patent portfolios, and early-stage technology assessment at Gate 1 of the Stage-Gate process. These workflows depend on access to specialized datasets, understanding of patent classification systems, and the ability to map relationships between technical concepts across different document types, none of which general AI assistants like Copilot or Perplexity provide.

How do R&D ontologies differ from the knowledge graphs used by general AI tools?

General AI tools use broad knowledge graphs derived from web data that represent millions of entities and relationships across every conceivable domain. R&D ontologies are purpose-built taxonomies that focus specifically on technical and scientific concepts, mapping how materials relate to processes, how processes relate to applications, how applications map to patent classifications, and how all of these connect across industries and jurisdictions. The specificity of an R&D ontology enables a level of precision in technical search and analysis that general knowledge graphs cannot achieve because general graphs prioritize breadth over domain depth.

What security considerations should R&D teams evaluate when choosing a Copilot alternative?

R&D teams routinely work with pre-patent inventions, proprietary formulations, competitive analyses, and other highly sensitive intellectual property. Any AI platform used for R&D intelligence must meet enterprise-grade security requirements, including data isolation, encryption, access controls, and compliance certifications appropriate for the organization's industry. General-purpose AI assistants may process queries through shared infrastructure without the data governance controls that sensitive IP work demands. Enterprise R&D intelligence platforms like Cypris are designed to meet these requirements, ensuring that proprietary research queries and results remain protected.