

Clarivate is not a single product. It is a portfolio of acquired tools assembled over decades, and the two platforms that enterprise R&D teams use most frequently — Derwent Innovation for patent intelligence and Web of Science for scientific literature — were designed for entirely different audiences with entirely different workflows. Derwent was built for IP attorneys conducting freedom-to-operate searches. Web of Science was built for academic librarians and university researchers. Neither was built for the R&D scientist trying to answer a strategic question about a technology landscape, a competitive portfolio, or an emerging technical risk.

The gap between what Clarivate's R&D-adjacent tools were designed to do and what modern innovation teams actually need is the primary reason organizations are evaluating alternatives. This guide examines six of the strongest alternatives to Clarivate for enterprise R&D and IP teams, explains what distinguishes each platform, and provides a framework for matching your team's specific requirements to the right solution.

Why R&D Teams Are Reevaluating Clarivate

Clarivate's position in the market is the product of consolidation, not native product design. The company was spun out of Thomson Reuters' IP and Science division in 2016 and has since assembled its portfolio through a series of acquisitions — Derwent, Web of Science, ProQuest, Cortellis, and others — without fully integrating the underlying data architectures. For R&D teams, the practical consequence is that patent intelligence and scientific literature intelligence live in separate platforms with separate subscriptions, separate interfaces, and separate learning curves.

This fragmentation has real costs. An R&D scientist conducting a technology scouting exercise needs to understand what has been patented, what has been published in the scientific literature, and how those two bodies of knowledge relate to each other. Performing that analysis through Derwent and Web of Science requires toggling between platforms, manually reconciling results, and building synthesis layers that neither tool provides natively. The time investment alone is a meaningful barrier, and the cognitive load of maintaining fluency in two complex legacy interfaces reduces the frequency with which R&D teams can turn to patent and literature intelligence for decision support.

Pricing is a compounding factor. Clarivate's enterprise contracts for combined Derwent and Web of Science access can run into six figures annually, and the terms typically require institutional commitment rather than flexible per-seat or usage-based arrangements. For Fortune 500 R&D organizations that have historically lived with the cost because no integrated alternative existed, the rapid maturation of AI-native intelligence platforms over the past three years has changed the evaluation calculus significantly.

There is also a structural concern specific to Derwent. Clarivate's Derwent World Patents Index is maintained by a team of over 800 patent editors who manually write abstracts for each invention family — a curation model that represents both the platform's greatest strength and its most significant vulnerability. The value of Derwent has always rested on human expertise applied at scale. As AI-native platforms develop increasingly sophisticated capabilities for patent comprehension and synthesis, the competitive differentiation of hand-written abstracts is narrowing, and the cost premium associated with that curation model becomes harder to justify for teams whose primary need is strategic intelligence rather than legal-quality prior art analysis.

What to Look for in a Clarivate Alternative

Before evaluating specific platforms, it is worth being precise about what Clarivate's R&D-adjacent products actually do, because the alternatives that best address those functions are not necessarily the platforms that appear most often in head-to-head comparison articles.

Derwent Innovation provides access to the Derwent World Patents Index, a curated database covering over 130 million patents, along with tools for patent search, analytics, portfolio management, and competitive landscaping. Its primary design center is the patent professional: the interface and workflows are optimized for freedom-to-operate analyses, patentability assessments, and portfolio strategy decisions that require high-confidence data quality.

Web of Science provides access to a peer-reviewed scientific literature database covering approximately 20,000 journals, along with citation analytics, research performance metrics, and discovery tools. Its primary design center is the academic researcher and institutional library administrator.

An effective Clarivate alternative for an enterprise R&D team needs to cover both functions, ideally within a unified architecture, and needs to provide the kind of strategic synthesis and workflow integration that neither Derwent nor Web of Science was designed to deliver. The evaluation criteria that matter most are unified data architecture, native AI capabilities, scientific literature depth alongside patent coverage, enterprise security posture, and whether the platform was designed for R&D scientists and innovation strategists or for IP attorneys and academic administrators.

The Best Clarivate Alternatives for Enterprise R&D Teams

Cypris — Best Unified Platform for Enterprise R&D Intelligence

Cypris takes a fundamentally different approach to R&D intelligence than Clarivate's two-platform model. Rather than providing a patent database and a literature database as separate tools, Cypris unifies over 500 million patents and scientific papers within a single platform, structured through a proprietary R&D ontology that understands the relationships between technical concepts across both corpora. The result is that searches and analyses performed in Cypris return integrated results from patents and scientific literature simultaneously, without requiring the researcher to reconcile findings from separate systems.

The distinction is not merely a user experience improvement. When patent data and scientific literature are indexed through a shared ontology rather than maintained in separate silos, the analytical possibilities expand substantially. A technology scouting exercise can reveal not just what has been patented in a domain but what the concurrent scientific literature suggests about the direction of technical development, where the patent portfolio is leading versus lagging the research frontier, and which organizations are accumulating both IP and publication activity in emerging areas. These cross-signal insights are structurally unavailable in a Derwent-plus-Web-of-Science architecture because the data models do not share a common semantic layer.

Cypris is trusted by hundreds of enterprise teams and thousands of researchers across R&D, IP, and product development functions, including organizations in the Fortune 500. The platform's AI architecture is built on official enterprise API partnerships with OpenAI, Anthropic, and Google, which distinguishes it from platforms that have layered general-purpose AI onto legacy data infrastructure without formal integration agreements. Its enterprise security meets Fortune 500 standards, covering the compliance and data governance requirements that govern platform adoption decisions at large corporations.

For organizations that have historically maintained separate Derwent and Web of Science subscriptions, Cypris offers the possibility of consolidating that intelligence infrastructure into a single platform while simultaneously gaining access to AI capabilities that neither legacy tool provides. The platform's Research Brief service extends beyond self-service search to provide bespoke analysis by Cypris research analysts, which addresses the capacity constraint that limits how frequently in-house teams can conduct deep landscape analyses.

Google Patents — Best Free Option for Preliminary Research

Google Patents provides free access to patent documents from major patent offices worldwide, with an interface that will be immediately familiar to anyone comfortable with Google's search products. The platform indexes over 87 million patents and offers some integration with Google Scholar to bring non-patent literature into search results.

For preliminary research, competitive screening, and exploratory work, Google Patents offers genuine utility. The familiar search interface eliminates the training investment required by Derwent and Orbit, and the zero-cost access model makes it available to anyone in an R&D organization without procurement friction. Translation capabilities allow English-language searches to surface relevant patents from non-English-language jurisdictions, which addresses one of the more significant practical limitations of manual prior art searching.

The gap between Google Patents and enterprise-grade intelligence platforms is most visible in the analytics layer. Google Patents is a document retrieval tool. It does not offer patent landscaping, portfolio analytics, competitive benchmarking, or AI-powered synthesis — the capabilities that allow R&D teams to extract strategic insights from patent data rather than simply locating relevant documents. For organizations that have been paying Clarivate prices, the step down to Google Patents represents a significant reduction in capability even as it eliminates license costs entirely. It functions well as a complement to an enterprise platform for quick searches, but not as a replacement for the strategic intelligence that Derwent and Web of Science provide in combination.

The Lens — Best Free Platform for Combined Patent and Literature Access

The Lens is the most capable free alternative for organizations that need both patent and scientific literature access without a commercial subscription. The platform provides open access to over 300 million patent records and more than 200 million scientific documents, making it the most comprehensive free resource available for the combined research task that Derwent and Web of Science together currently serve.

What distinguishes The Lens from other free tools is its integration philosophy. Patent records and scholarly works are available within the same interface, and The Lens supports citation analysis linking patents to the scientific literature they cite and vice versa. This cross-domain citation capability partially replicates one of the most valuable analytical functions in a combined Derwent and Web of Science environment — understanding how patent filings and published research co-evolve in a technology area.

The Lens operates under an open-access mission and is supported by charitable foundations rather than commercial subscription revenue, which means its development roadmap and feature investment are less predictable than those of commercial platforms. The analytical tools are more limited than those available in Orbit or enterprise platforms, and there is no AI-powered synthesis capability comparable to what modern commercial platforms provide. For budget-constrained teams or organizations beginning to build a patent and literature intelligence practice before committing to enterprise platform investments, The Lens represents a meaningful option. It is not a direct substitute for the combined capability of Clarivate's R&D suite, but it provides a more complete free alternative than any other single platform.

PQAI — Best Open-Source AI Patent Search

PQAI is an open-source patent search platform built on an AI-first philosophy that removes the requirement for Boolean search expertise. Researchers can submit queries in natural language and receive relevant patent results without building complex search strings or learning classification system syntax. The platform includes a prior art search API that allows R&D and legal teams to embed patent intelligence directly into their workflows rather than requiring researchers to visit a separate interface.

For organizations where the primary limitation of Derwent and other legacy platforms has been the training barrier — the reality that effective use requires significant investment in Boolean search and classification system expertise — PQAI offers a genuinely different user experience. Its accessibility makes patent intelligence available to R&D scientists who would not typically engage with Derwent's professional-grade interface.

PQAI's scope is narrower than Clarivate's R&D suite. It does not include scientific literature, and its analytical capabilities are more limited than those of commercial platforms. It is most appropriately used as a prior art search and patent discovery tool rather than as a strategic intelligence platform. PQAI fits best in organizations where patent accessibility is the primary unmet need and where the R&D intelligence use case is being built incrementally rather than addressed through a comprehensive platform investment.

Scite — Best for Citation Intelligence

Scite addresses the scientific literature dimension of the Clarivate suite more directly than any other alternative on this list. The platform provides access to over 1.2 billion citation statements from the scientific literature, with AI-powered analysis of whether each citation supports, contrasts, or simply mentions the cited work. This distinction between supporting and contrasting citations transforms citation analysis from a quantitative measure of research influence into a qualitative map of scientific consensus and controversy — a capability that Web of Science's citation analytics does not provide.

For R&D teams whose primary use of Web of Science is tracking the scientific literature in their technology domains, understanding where expert consensus is solidifying versus where debates remain open, and identifying emerging research directions before they appear in patent filings, Scite's citation intelligence capability offers something meaningfully different from what Web of Science delivers. It is a tool oriented around scientific understanding rather than research performance metrics.

Scite does not address the patent dimension of the Clarivate use case, and its data coverage, while extensive, is focused on the scholarly literature rather than the full breadth of technical documentation that platforms like Cypris access. Organizations replacing a combined Derwent and Web of Science subscription will need to address the patent intelligence requirement separately if they select Scite for the literature component. It is most appropriately positioned as a supplement to an enterprise intelligence platform or as a specialized tool for scientific literature analysis within a broader technology monitoring program.

Choosing the Right Alternative

The right Clarivate alternative depends on which parts of the R&D intelligence workflow the current Clarivate subscription is actually serving and what the primary failure modes of the existing setup are.

For organizations that use Derwent and Web of Science as integrated inputs into technology scouting, competitive landscape analysis, and R&D investment decisions, the most important criterion is unified data architecture. Platforms that treat patents and scientific literature as separate databases with separate interfaces recreate the fragmentation that makes Clarivate's two-platform model difficult to use efficiently. The relevant question is not which alternative is best at patents and which is best at literature, but which alternative treats them as components of a single intelligence layer.

For organizations that use Clarivate primarily for patent prosecution support, freedom-to-operate analysis, and legal-quality prior art searching, the relevant alternatives are different. The data quality and curation precision of Derwent's human-written abstracts matter significantly for legal applications in ways they do not for strategic R&D applications, and the evaluation should weight Orbit Intelligence's capabilities more heavily.

For organizations with constrained budgets exploring their options before committing to enterprise platform investments, the combination of The Lens for free patent and literature access and Scite for citation intelligence provides a meaningful foundation. Neither platform alone replicates Clarivate's combined capability, but together they address the core discovery and analysis functions at no cost.

The broader pattern in how enterprise R&D teams are evaluating this market is a shift toward platforms that were designed for scientists and innovation strategists rather than platforms originally designed for attorneys and academic administrators. Clarivate's core products are genuinely excellent at what they were built to do. The question organizations are asking is whether what they were built to do maps onto what modern enterprise R&D functions actually need — and increasingly, the answer is that the fit is incomplete.

Frequently Asked Questions

What is Clarivate used for in enterprise R&D?

In enterprise R&D contexts, Clarivate is most commonly used through two products: Derwent Innovation for patent search and analytics, and Web of Science for scientific literature access and citation analysis. R&D teams use these tools for technology scouting, competitive landscape analysis, prior art research, and tracking the scientific literature in their technology domains. Because these products are sold as separate subscriptions with separate interfaces, organizations often maintain both to cover the full range of patent and literature intelligence tasks, which creates workflow fragmentation and a combined cost that enterprise R&D teams are increasingly questioning as AI-native unified platforms have matured.

How does Derwent Innovation compare to other patent platforms?

Derwent Innovation's primary differentiator is the Derwent World Patents Index, a curated database in which human patent editors write standardized abstracts for each invention family. These hand-written abstracts improve search precision and patent comprehension, particularly in complex technical domains. The platform covers over 130 million patents and is used by more than 40 national patent offices. Its limitations relative to modern alternatives include a traditional interface designed for IP attorneys rather than R&D scientists, the absence of native scientific literature integration, and a cost structure that reflects its premium data curation model. AI-native platforms increasingly challenge its differentiation by offering sophisticated natural language search and synthesis capabilities that reduce the practical advantage of manually curated abstracts for strategic R&D applications.

Is there a free alternative to Clarivate for R&D research?

The Lens provides the most comprehensive free alternative for the combined patent and scientific literature access that Derwent and Web of Science together currently serve. It covers over 300 million patent records and more than 200 million scholarly documents within a single interface and supports citation analysis linking patents to the scientific literature they cite. PQAI is a capable free option specifically for prior art patent search using natural language queries. Google Patents remains useful for preliminary patent research. None of these free options replicates the analytical capabilities and AI-powered synthesis available in enterprise platforms, but they provide meaningful starting points for organizations building their R&D intelligence practice.

Why are R&D teams replacing Clarivate with AI-native platforms?

The primary reasons R&D teams are evaluating AI-native alternatives to Clarivate center on three limitations of the current platform architecture. First, Derwent and Web of Science are separate products that do not share a unified data model, which requires manual synthesis when both patent and literature intelligence are needed for the same analysis. Second, both platforms were designed for IP attorneys and academic researchers respectively, and their interfaces and analytical tools reflect those use cases rather than the workflow of an R&D scientist or innovation strategist. Third, AI-native platforms have developed sufficient capability in natural language patent search, landscape synthesis, and cross-domain analysis to reduce the competitive advantage of Derwent's manual curation model for strategic R&D applications, while offering workflow integration and AI synthesis capabilities that Clarivate's tools do not provide.

What should enterprise teams prioritize when evaluating Clarivate alternatives?

Enterprise teams should prioritize unified data architecture above other criteria when evaluating Clarivate alternatives. Platforms that treat patents and scientific literature as separate data sources with separate interfaces recreate the fragmentation problem that is the primary operational limitation of the Clarivate suite. After data architecture, the relevant evaluation criteria are native AI capabilities and the quality of synthesis they enable, enterprise security posture and compliance certifications, scientific literature depth alongside patent coverage, and whether the platform's design orientation matches the actual users — R&D scientists and innovation strategists rather than IP attorneys. Cost structure and contract flexibility are also significant considerations given the high annual cost of Clarivate enterprise subscriptions.

Executive Summary

In 2024, US patent infringement jury verdicts totaled $4.19 billion across 72 cases. Twelve individual verdicts exceeded $100 million. The largest single award, $857 million in General Access Solutions v. Cellco Partnership (Verizon), exceeded the annual R&D budget of many mid-market technology companies. In the first half of 2025 alone, total damages reached an additional $1.91 billion.

The consequences of incomplete patent intelligence are not abstract. In what has become one of the most instructive IP disputes in recent history, Masimo’s pulse oximetry patents triggered a US import ban on certain Apple Watch models, forcing Apple to disable its blood oxygen feature across an entire product line, halt domestic sales of affected models, invest in a hardware redesign, and ultimately face a $634 million jury verdict in November 2025. Apple—a company with one of the most sophisticated intellectual property organizations on earth—spent years in litigation over technology it might have designed around during development.

For organizations with fewer resources than Apple, the risk calculus is starker. A mid-size materials company, a university spinout, or a defense contractor developing next-generation battery technology cannot absorb a nine-figure verdict or a multi-year injunction. For these organizations, the patent landscape analysis conducted during the development phase is the primary risk mitigation mechanism. The quality of that analysis is not a matter of convenience. It is a matter of survival.

And yet, a growing number of R&D and IP teams are conducting that analysis using general-purpose AI tools—ChatGPT, Claude, Microsoft Co-Pilot—that were never designed for patent intelligence and are structurally incapable of delivering it.

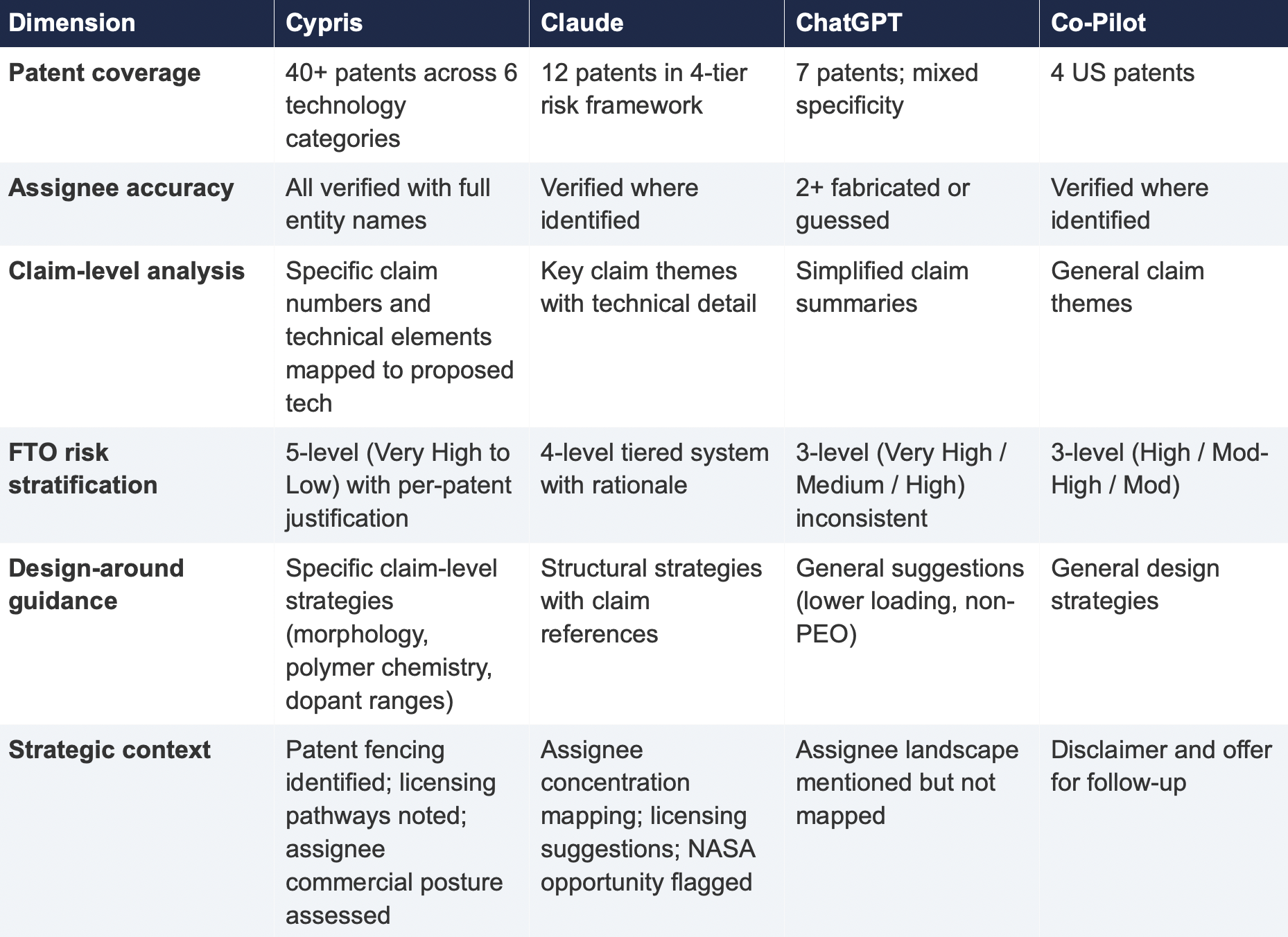

This report presents the findings of a controlled comparison study in which identical patent landscape queries were submitted to four AI-powered tools: Cypris (a purpose-built R&D intelligence platform), ChatGPT (OpenAI), Claude (Anthropic), and Microsoft Co-Pilot. Two technology domains were tested: solid-state lithium-sulfur battery electrolytes using garnet-type LLZO ceramic materials (freedom-to-operate analysis), and bio-based polyamide synthesis from castor oil derivatives (competitive intelligence).

The results reveal a significant and structurally persistent gap. In Test 1, Cypris identified over 40 active US patents and published applications with granular FTO risk assessments. Claude identified 12. ChatGPT identified 7, several with fabricated attribution. Co-Pilot identified 4. Among the patents surfaced exclusively by Cypris were filings rated as “Very High” FTO risk that directly claim the technology architecture described in the query. In Test 2, Cypris cited over 100 individual patent filings with full attribution to substantiate its competitive landscape rankings. No general-purpose model cited a single patent number.

The most active sectors for patent enforcement—semiconductors, AI, biopharma, and advanced materials—are the same sectors where R&D teams are most likely to adopt AI tools for intelligence workflows. The findings of this report have direct implications for any organization using general-purpose AI to inform patent strategy, competitive intelligence, or R&D investment decisions.

1. Methodology

A single patent landscape query was submitted verbatim to each tool on March 27, 2026. No follow-up prompts, clarifications, or iterative refinements were provided. Each tool received one opportunity to respond, mirroring the workflow of a practitioner running an initial landscape scan.

1.1 Query

Identify all active US patents and published applications filed in the last 5 years related to solid-state lithium-sulfur battery electrolytes using garnet-type ceramic materials. For each, provide the assignee, filing date, key claims, and current legal status. Highlight any patents that could pose freedom-to-operate risks for a company developing a Li₇La₃Zr₂O₁₂ (LLZO)-based composite electrolyte with a polymer interlayer.

1.2 Tools Evaluated

1.3 Evaluation Criteria

Each response was assessed across six dimensions: (1) number of relevant patents identified, (2) accuracy of assignee attribution, (3) completeness of filing metadata (dates, legal status), (4) depth of claim analysis relative to the proposed technology, (5) quality of FTO risk stratification, and (6) presence of actionable design-around or strategic guidance.

2. Findings

2.1 Coverage Gap

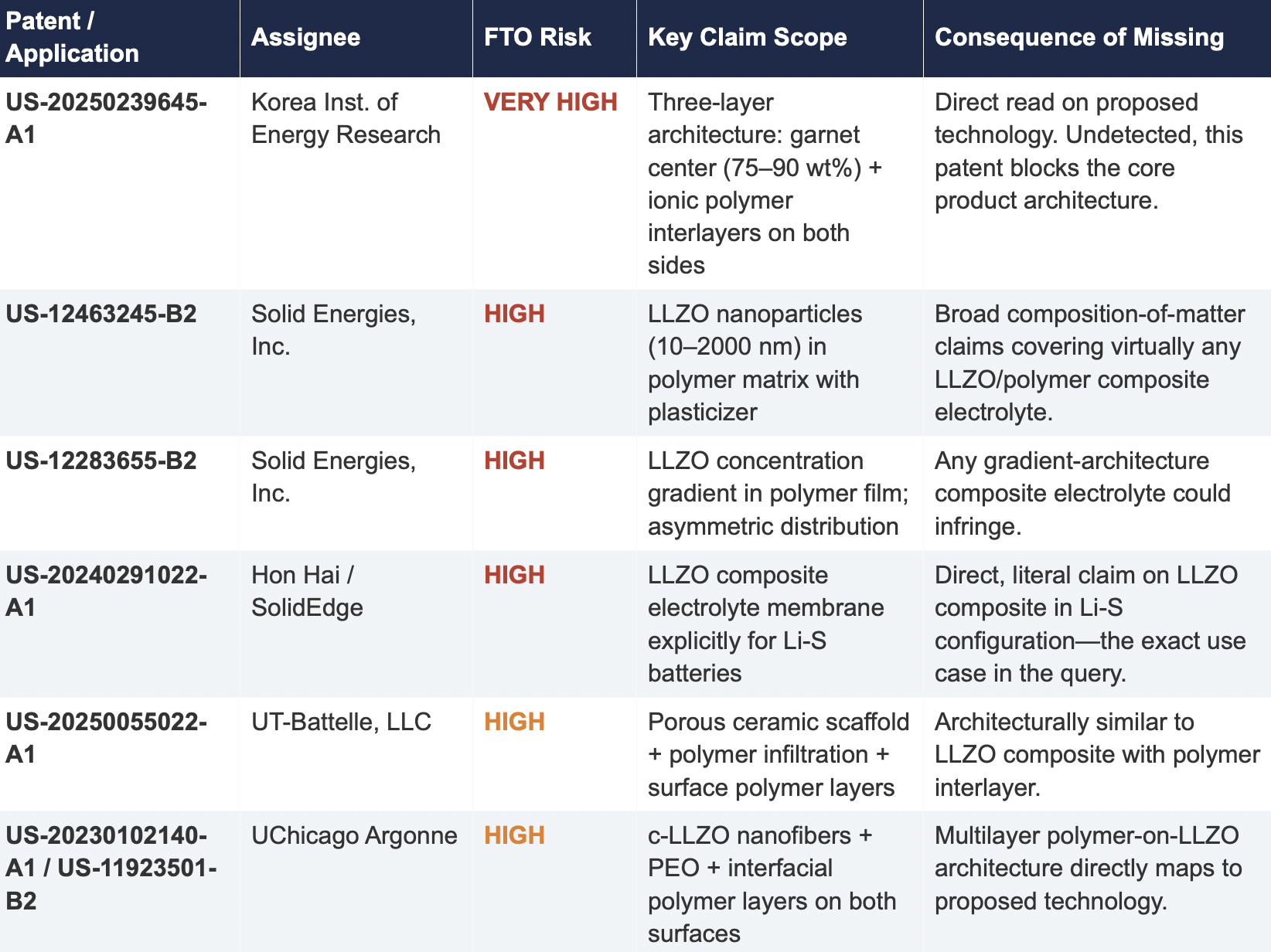

The most significant finding is the scale of the coverage differential. Cypris identified over 40 active US patents and published applications spanning LLZO-polymer composite electrolytes, garnet interface modification, polymer interlayer architectures, lithium-sulfur specific filings, and adjacent ceramic composite patents. The results were organized by technology category with per-patent FTO risk ratings.

Claude identified 12 patents organized in a four-tier risk framework. Its analysis was structurally sound and correctly flagged the two highest-risk filings (Solid Energies US 11,967,678 and the LLZO nanofiber multilayer US 11,923,501). It also identified the University of Maryland/Wachsman portfolio as a concentration risk and noted the NASA SABERS portfolio as a licensing opportunity. However, it missed the majority of the landscape, including the entire Corning portfolio, GM's interlayer patents, the Korea Institute of Energy Research three-layer architecture, and the HonHai/SolidEdge lithium-sulfur specific filing.

ChatGPT identified 7 patents, but the quality of attribution was inconsistent. It listed assignees as "Likely DOE / national lab ecosystem" and "Likely startup / defense contractor cluster" for two filings, language that indicates the model was inferring rather than retrieving assignee data. In a freedom-to-operate context, an unverified assignee attribution is functionally equivalent to no attribution, as it cannot support a licensing inquiry or risk assessment.

Co-Pilot identified 4 US patents. Its output was the most limited in scope, missing the Solid Energies portfolio entirely, the UMD/Wachsman portfolio, Gelion/Johnson Matthey, NASA SABERS, and all Li-S specific LLZO filings.
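The scale of the gap is easier to see as a ratio. The following is a minimal sketch, assuming the counts reported above for Test 1; because Cypris is reported only as "over 40", the value 40 is treated as a lower bound, so each computed share for the other tools is an upper bound. The function name `relative_coverage` is illustrative and not part of any tool evaluated here.

```python
# Patent counts reported in this study for Test 1.
# Cypris is reported as "over 40", so 40 is used as a lower bound (assumption).
counts = {"Cypris": 40, "Claude": 12, "ChatGPT": 7, "Co-Pilot": 4}


def relative_coverage(counts, baseline):
    """Return each tool's count as a fraction of the baseline tool's count."""
    base = counts[baseline]
    return {tool: n / base for tool, n in counts.items()}


coverage = relative_coverage(counts, "Cypris")

for tool, share in coverage.items():
    # Shares for the non-baseline tools are upper bounds, since the
    # baseline count is itself a lower bound.
    print(f"{tool}: {counts[tool]} patents, at most {share:.1%} of the baseline")
```

Under these assumptions, no general-purpose model surfaced more than roughly a third of the filings in the baseline result set.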

2.2 Critical Patents Missed by Public Models

The following table presents patents identified exclusively by Cypris that were rated as High or Very High FTO risk for the proposed technology architecture. None were surfaced by any general-purpose model.

2.3 Patent Fencing: The Solid Energies Portfolio

Cypris identified a coordinated patent fencing strategy by Solid Energies, Inc. that no general-purpose model detected at scale. Solid Energies holds at least four granted US patents and one published application covering LLZO-polymer composite electrolytes across compositions (US-12463245-B2), gradient architectures (US-12283655-B2), electrode integration (US-12463249-B2), and manufacturing processes (US-20230035720-A1). Claude identified one Solid Energies patent (US 11,967,678) and correctly rated it as the highest-priority FTO concern but did not surface the broader portfolio. ChatGPT and Co-Pilot identified zero Solid Energies filings.

The practical significance is that a company relying on any individual patent hit would underestimate the scope of Solid Energies' IP position. Because the fencing strategy covers the composition, the architecture, the electrode integration, and the manufacturing method, identifying a single design-around for one patent does not resolve the FTO exposure from the portfolio as a whole. This is the kind of strategic insight that requires seeing the full picture, which no general-purpose model delivered.
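The portfolio-level reasoning can be sketched in a few lines. This is an illustrative model only, assuming the four filings and aspects named in this section; the aspect labels are shorthand, the helper names are hypothetical, and real FTO exposure depends on claim language rather than on a one-word category.

```python
from collections import defaultdict

# Solid Energies filings and the aspect each covers, as listed in this report.
# The aspect labels are shorthand chosen for this sketch (assumption).
filings = [
    ("Solid Energies", "US-12463245-B2", "composition"),
    ("Solid Energies", "US-12283655-B2", "gradient architecture"),
    ("Solid Energies", "US-12463249-B2", "electrode integration"),
    ("Solid Energies", "US-20230035720-A1", "manufacturing process"),
]


def aspects_by_assignee(filings):
    """Group the covered aspects under each assignee."""
    grouped = defaultdict(set)
    for assignee, _number, aspect in filings:
        grouped[assignee].add(aspect)
    return dict(grouped)


def remaining_exposure(filings, assignee, designed_around):
    """Aspects the assignee still covers after designing around some of them."""
    covered = aspects_by_assignee(filings).get(assignee, set())
    return covered - set(designed_around)


# Designing around the composition patent alone leaves three aspects exposed.
still_exposed = remaining_exposure(filings, "Solid Energies", {"composition"})
print(sorted(still_exposed))
```

A tool that surfaces only one filing from the portfolio gives the analyst no way to run this kind of check at all.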

2.4 Assignee Attribution Quality

ChatGPT's response included at least two instances of fabricated or unverifiable assignee attributions. For US 11,367,895 B1, the listed assignee was "Likely startup / defense contractor cluster." For US 2021/0202983 A1, the assignee was described as "Likely DOE / national lab ecosystem." In both cases, the model appears to have inferred the assignee from contextual patterns in its training data rather than retrieving the information from patent records.

In any operational IP workflow, assignee identity is foundational. It determines licensing strategy, litigation risk, and competitive positioning. A fabricated assignee is more dangerous than a missing one because it creates an illusion of completeness that discourages further investigation. An R&D team receiving this output might reasonably conclude that the landscape analysis is finished when it is not.
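One inexpensive safeguard is to screen model output for hedged assignee strings before it enters a workflow. The sketch below is a hypothetical check, not a feature of any tool in this study: the pattern list and the function `is_unverified_assignee` are assumptions, and the check catches only overt hedging. It cannot confirm that a plausible-looking assignee is correct; that still requires comparison against patent records.

```python
import re

# Words that suggest an assignee was inferred rather than retrieved.
# This list is an assumption for illustration and is not exhaustive.
HEDGE_PATTERN = re.compile(
    r"\b(likely|probably|possibly|unknown|appears to be)\b", re.IGNORECASE
)


def is_unverified_assignee(assignee: str) -> bool:
    """Flag empty assignees and assignees phrased as a guess."""
    text = assignee.strip()
    return not text or bool(HEDGE_PATTERN.search(text))


# The two hedged attributions quoted above, plus one ordinary attribution.
rows = [
    ("US 11,367,895 B1", "Likely startup / defense contractor cluster"),
    ("US 2021/0202983 A1", "Likely DOE / national lab ecosystem"),
    ("US 11,967,678", "Solid Energies"),
]

flagged = [number for number, assignee in rows if is_unverified_assignee(assignee)]
print(flagged)
```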

3. Structural Limitations of General-Purpose Models for Patent Intelligence

3.1 Training Data Is Not Patent Data

Large language models are trained on web-scraped text. Their knowledge of the patent record is derived from whatever fragments appeared in their training corpus: blog posts mentioning filings, news articles about litigation, snippets of Google Patents pages that were crawlable at the time of data collection. They do not have systematic, structured access to the USPTO database. They cannot query patent classification codes, parse claim language against a specific technology architecture, or verify whether a patent has been assigned, abandoned, or subjected to terminal disclaimer since their training data was collected.

This is not a limitation that improves with scale. A larger training corpus does not produce systematic patent coverage; it produces a larger but still arbitrary sampling of the patent record. The result is that general-purpose models will consistently surface well-known patents from heavily discussed assignees (QuantumScape, for example, appeared in most responses) while missing commercially significant filings from less publicly visible entities (Solid Energies, Korea Institute of Energy Research, Shenzhen Solid Advanced Materials).

3.2 The Web Is Closing to Model Scrapers

The data access problem is structural and worsening. As of mid-2025, Cloudflare reported that among the top 10,000 web domains, the majority now fully disallow AI crawlers such as GPTBot and ClaudeBot via robots.txt. The trend has accelerated from partial restrictions to outright blocks, and the crawl-to-referral ratios reveal the underlying tension: OpenAI's crawlers access approximately 1,700 pages for every referral they return to publishers; Anthropic's ratio exceeds 73,000 to 1.

Patent databases, scientific publishers, and IP analytics platforms are among the most restrictive content categories. A Duke University study in 2025 found that several categories of AI-related crawlers never request robots.txt files at all. The practical consequence is that the knowledge gap between what a general-purpose model "knows" about the patent landscape and what actually exists in the patent record is widening with each training cycle. A landscape query that a general-purpose model partially answered in 2023 may return less useful information in 2026.
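To make the robots.txt mechanism concrete, the following is a minimal sketch using Python's standard-library `urllib.robotparser`. The robots.txt content and the `example.com` URL are hypothetical and do not come from any real publisher; the sketch only shows how a site-level rule translates into a per-crawler allow or deny decision for a compliant crawler.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt for an illustrative publisher (not a real site's file).
SAMPLE_ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: ClaudeBot
Disallow: /

User-agent: *
Allow: /
"""


def allowed_agents(robots_txt: str, url: str, agents: list[str]) -> dict[str, bool]:
    """Return, for each user agent, whether robots_txt permits fetching url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in agents}


result = allowed_agents(
    SAMPLE_ROBOTS_TXT,
    "https://example.com/patents/123",  # hypothetical URL
    ["GPTBot", "ClaudeBot", "Googlebot"],
)
print(result)  # {'GPTBot': False, 'ClaudeBot': False, 'Googlebot': True}
```

Note that robots.txt is advisory: it only constrains crawlers that request and honor it, which is why the finding about crawlers that never fetch the file matters.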

3.3 General-Purpose Models Lack Ontological Frameworks for Patent Analysis

A freedom-to-operate analysis is not a summarization task. It requires understanding claim scope, prosecution history, continuation and divisional chains, assignee normalization (a single company may appear under multiple entity names across patent records), priority dates versus filing dates versus publication dates, and the relationship between dependent and independent claims. It requires mapping the specific technical features of a proposed product against independent claim language—not keyword matching.

General-purpose models do not have these frameworks. They pattern-match against training data and produce outputs that adopt the format and tone of patent analysis without the underlying data infrastructure. The format is correct. The confidence is high. The coverage is incomplete in ways that are not visible to the user.

4. Comparative Output Quality

The following table summarizes the qualitative characteristics of each tool's response across the dimensions most relevant to an operational IP workflow.

5. Implications for R&D and IP Organizations

5.1 The Confidence Problem

The central risk identified by this study is not that general-purpose models produce bad outputs but that they produce incomplete outputs with high confidence. Each model delivered its results in a professional format with structured analysis, risk ratings, and strategic recommendations. At no point did any model indicate the boundaries of its knowledge or flag that its results represented a fraction of the available patent record. A practitioner receiving one of these outputs would have no signal that the analysis was incomplete unless they independently validated it against a comprehensive data source.

This creates an asymmetric risk profile: the better the format and tone of the output, the less likely the user is to question its completeness. In a corporate environment where AI outputs are increasingly treated as first-pass analysis, this dynamic incentivizes under-investigation at precisely the moment when thoroughness is most critical.

5.2 The Diversification Illusion

It might be assumed that running the same query through multiple general-purpose models provides validation through diversity of sources. This study suggests otherwise. While the three general-purpose tools returned different subsets of patents, all operated under the same structural constraints: training data rather than live patent databases, web-scraped content rather than structured IP records, and general-purpose reasoning rather than patent-specific ontological frameworks. Running the same query through three constrained tools does not produce triangulation; it produces three partial views of the same incomplete picture.

5.3 The Appropriate Use Boundary

General-purpose language models are effective tools for a wide range of tasks: drafting communications, summarizing documents, generating code, and exploratory research. The finding of this study is not that these tools lack value but that their value boundary does not extend to decisions that carry existential commercial risk.

Patent landscape analysis, freedom-to-operate assessment, and competitive intelligence that informs R&D investment decisions fall outside that boundary. These are workflows where the completeness and verifiability of the underlying data are not merely desirable but are the primary determinant of whether the analysis has value. A patent landscape that captures 10% of the relevant filings, regardless of how well-formatted or confidently presented, is a liability rather than an asset.

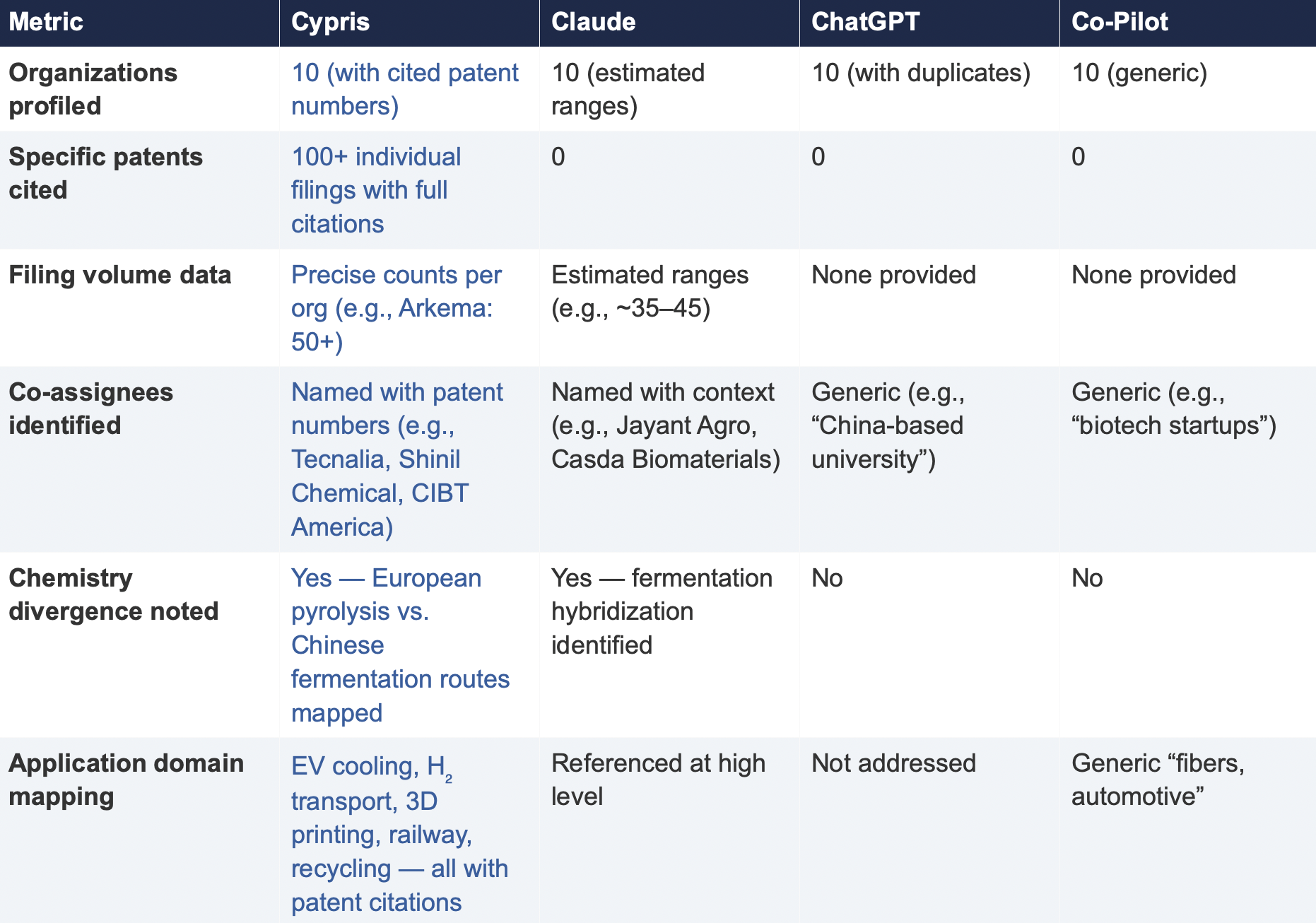

6. Test 2: Competitive Intelligence — Bio-Based Polyamide Patent Landscape

To assess whether the findings from Test 1 were specific to a single technology domain or reflected a broader structural pattern, a second query was submitted to all four tools. This query shifted from freedom-to-operate analysis to competitive intelligence, asking each tool to identify the top 10 organizations by patent filing volume in bio-based polyamide synthesis from castor oil derivatives over the past three years, with summaries of technical approach, co-assignee relationships, and portfolio trajectory.

6.1 Query

6.2 Summary of Results

6.3 Key Differentiators

Verifiability

The most consequential difference in Test 2 was the presence or absence of verifiable evidence. Cypris cited over 100 individual patent filings with full patent numbers, assignee names, and publication dates. Every claim about an organization’s technical focus, co-assignee relationships, and filing trajectory was anchored to specific documents that a practitioner could independently verify in USPTO, Espacenet, or WIPO PATENTSCOPE. No general-purpose model cited a single patent number. Claude produced the most structured and analytically useful output among the public models, with estimated filing ranges, product names, and strategic observations that were directionally plausible. However, without underlying patent citations, every claim in the response requires independent verification before it can inform a business decision. ChatGPT and Co-Pilot offered thinner profiles with no filing counts and no patent-level specificity.

Data Integrity

ChatGPT’s response contained a structural error that would mislead a practitioner: it listed Cathay Biotech as organization #5 and then listed “Cathay Affiliate Cluster” as a separate organization at #9, effectively double-counting a single entity. It repeated this pattern with Toray at #4 and “Toray (Additional Programs)” at #10. In a competitive intelligence context where the ranking itself is the deliverable, this kind of error distorts the landscape and could lead to misallocation of competitive monitoring resources.
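The effect of double-counting on a ranking can be illustrated with a short sketch. This is not how any of the tested tools works; the alias table, the helper names, and every filing count below are hypothetical and chosen only to show why entity names should be normalized to a canonical assignee before counts are aggregated.

```python
from collections import Counter

# Hypothetical alias table. The alias strings echo the double-counting example
# above; a real system would need a curated assignee-normalization source.
ALIASES = {
    "cathay biotech": "Cathay Biotech",
    "cathay affiliate cluster": "Cathay Biotech",
    "toray": "Toray",
    "toray (additional programs)": "Toray",
}


def canonical_assignee(name: str) -> str:
    """Map a reported entity name to its canonical assignee, if an alias is known."""
    key = " ".join(name.lower().split())  # lowercase and collapse whitespace
    return ALIASES.get(key, name.strip())


def rank_assignees(filings: list[tuple[str, int]]) -> list[tuple[str, int]]:
    """Sum filing counts per canonical assignee and rank from most to fewest."""
    totals = Counter()
    for name, count in filings:
        totals[canonical_assignee(name)] += count
    return totals.most_common()


# Invented counts, for illustration only.
raw = [
    ("Example Org", 30),
    ("Toray", 12),
    ("Cathay Biotech", 10),
    ("Cathay Affiliate Cluster", 9),
    ("Toray (Additional Programs)", 5),
]
ranked = rank_assignees(raw)
print(ranked)  # [('Example Org', 30), ('Cathay Biotech', 19), ('Toray', 17)]
```

In this invented data, the five raw rows collapse to three organizations and the relative order of two of them changes once aliases are merged, which is the kind of distortion a ranked deliverable cannot tolerate.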

Organizations Missed

Cypris identified Kingfa Sci. & Tech. (8–10 filings with a differentiated furan diacid-based polyamide platform) and Zhejiang NHU (4–6 filings focused on continuous polymerization process technology) as emerging players that no general-purpose model surfaced. Both represent potential competitive threats or partnership opportunities that would be invisible to a team relying on public AI tools. Conversely, ChatGPT included organizations such as ANTA and Jiangsu Taiji that appear to be downstream users rather than significant patent filers in synthesis, suggesting the model was conflating commercial activity with IP activity.

Strategic Depth

Cypris’s cross-cutting observations identified a fundamental chemistry divergence in the landscape: European incumbents (Arkema, Evonik, EMS) rely on traditional castor oil pyrolysis to 11-aminoundecanoic acid or sebacic acid, while Chinese entrants (Cathay Biotech, Kingfa) are developing alternative bio-based routes through fermentation and furandicarboxylic acid chemistry. This represents a potential long-term disruption to the castor oil supply chain dependency that Western players have built their IP strategies around. Claude identified a similar theme at a higher level of abstraction. Neither ChatGPT nor Co-Pilot noted the divergence.

6.4 Test 2 Conclusion

Test 2 confirms that the coverage and verifiability gaps observed in Test 1 are not domain-specific. In a competitive intelligence context, where the deliverable is a ranked landscape of organizational IP activity, the same structural limitations apply. General-purpose models can produce plausible-looking top-10 lists with reasonable organizational names, but they cannot anchor those lists to verifiable patent data, they cannot provide precise filing volumes, and they cannot identify emerging players whose patent activity is visible in structured databases but absent from the web-scraped content that general-purpose models rely on.

7. Conclusion

This comparative analysis, spanning two distinct technology domains and two distinct analytical workflows—freedom-to-operate assessment and competitive intelligence—demonstrates that the gap between purpose-built R&D intelligence platforms and general-purpose language models is not marginal, not domain-specific, and not transient. It is structural and consequential.

In Test 1 (LLZO garnet electrolytes for Li-S batteries), the purpose-built platform identified more than three times as many patents as the best-performing general-purpose model and ten times as many as the lowest-performing one. Among the patents identified exclusively by the purpose-built platform were filings rated as Very High FTO risk that directly claim the proposed technology architecture. In Test 2 (bio-based polyamide competitive landscape), the purpose-built platform cited over 100 individual patent filings to substantiate its organizational rankings; no general-purpose model cited a single patent number.

The structural drivers of this gap—reliance on training data rather than live patent feeds, the accelerating closure of web content to AI scrapers, and the absence of patent-specific analytical frameworks—are not transient. They are inherent to the architecture of general-purpose models and will persist regardless of increases in model capability or training data volume.

For R&D and IP leaders, the practical implication is clear: general-purpose AI tools should be used for general-purpose tasks. Patent intelligence, competitive landscaping, and freedom-to-operate analysis require purpose-built systems with direct access to structured patent data, domain-specific analytical frameworks, and the ability to surface what a general-purpose model cannot—not because it chooses not to, but because it structurally cannot access the data.

The question for every organization making R&D investment decisions today is whether the tools informing those decisions have access to the evidence base those decisions require. This study suggests that for the majority of general-purpose AI tools currently in use, the answer is no.

About This Report

This report was produced by Cypris (IP Web, Inc.), an AI-powered R&D intelligence platform serving corporate innovation, IP, and R&D teams at organizations including NASA, Johnson & Johnson, the US Air Force, and Los Alamos National Laboratory. Cypris aggregates over 500 million data points from patents, scientific literature, grants, corporate filings, and news to deliver structured intelligence for technology scouting, competitive analysis, and IP strategy.

The comparative tests described in this report were conducted on March 27, 2026. All outputs are preserved in their original form. Patent data cited from the Cypris reports has been verified against USPTO Patent Center and WIPO PATENTSCOPE records as of the same date. To conduct a similar analysis for your technology domain, contact info@cypris.ai or visit cypris.ai.

The patent analytics market is projected to grow from roughly $1.3 billion in 2025 to more than $3 billion by 2032, according to Fortune Business Insights (1). The investment is visible in the proliferation of patent-specific intelligence platforms competing for enterprise budgets. PatSnap, IPRally, Patlytics, Questel's Orbit Intelligence, Derwent Innovation, and a growing roster of niche players all promise better, faster, more AI-enhanced access to the global patent corpus. They deliver on that promise to varying degrees. But the promise itself is the problem. These platforms are competing to provide the best view of the same underlying dataset, one that is increasingly commoditized and, by itself, structurally incomplete as a basis for long-term R&D strategy. Access to patent filings and grants across global jurisdictions is table stakes. Every serious enterprise patent search platform delivers it. The harder question, and the one that actually determines whether R&D investment decisions succeed or fail, is what happens when you treat that dataset as though it were the whole picture.

Patent data captures invention activity. It does not capture commercial viability, market timing, customer adoption, regulatory trajectory, scientific momentum, or the dozens of other signals that determine whether a patented technology ever reaches a product shelf. When IP teams advise R&D leadership on where to invest, where to avoid, and where genuine opportunity exists, they are making those recommendations with roughly half the evidence. The missing half falls into two distinct categories, each with its own mechanics and consequences: the scientific literature gap and the commercial intelligence gap.

The Scale of What Is at Stake

Corporate R&D expenditure reached approximately $1.3 trillion in 2024, a historic high, though real growth slowed to roughly 1 percent after adjusting for inflation, according to WIPO's Global Innovation Index (2). Total global R&D spending across public and private sectors approached $2.87 trillion the same year (3). These figures matter because they describe the size of the decisions that patent intelligence is being asked to inform. When an IP team delivers a patent landscape report that shapes the direction of a multimillion-dollar research program, the accuracy and completeness of that intelligence has direct financial consequences that compound across every program in the portfolio.

Meanwhile, the volume of patent activity continues to accelerate. The USPTO received more than 700,000 patent applications in 2024 alone (4). Patent grants grew 5.7 percent year over year to 368,597 during the same period, with semiconductor technology leading all fields for the third consecutive year (5). The USPTO's backlog of unexamined applications hit a record 830,020 in early 2025 (6). Globally, WIPO data shows patent filings have grown continuously for over a decade, with particularly sharp increases in AI, clean energy, and biotechnology.

The instinct in response to this volume is to invest in better patent analytics. That instinct is correct as far as it goes. The error is in assuming that better patent analytics, no matter how sophisticated, can compensate for the absence of the data categories that patent databases were never designed to contain.

The Scientific Literature Gap: Patents Are Structurally Late

The first and arguably most underappreciated gap in patent-only intelligence is temporal. Patents are lagging indicators of technical activity, not leading ones. And the lag is not marginal. It is measured in years.

The standard patent publication cycle introduces an 18-month delay between filing and public disclosure, measured from the earliest priority date. By the time a competitor's patent application appears in any enterprise patent search platform, the underlying research was conducted at minimum a year and a half earlier, and frequently much longer when you account for the elapsed time between initial discovery, internal validation, and the decision to file. For fast-moving technology domains like AI, advanced materials, synthetic biology, and energy storage, 18 months represents a period in which entire competitive positions can form, shift, and consolidate.

Scientific literature operates on a fundamentally different timeline. Researchers routinely publish findings on preprint servers like arXiv, bioRxiv, medRxiv, and ChemRxiv within weeks of completing their work. These publications are not obscure or difficult to access. They are the primary communication channel for the global research community. A 2024 preprint describing a novel electrode chemistry, for instance, might not surface in patent databases until mid-2026. But the technical trajectory it signals, the research group pursuing it, the institutional funding behind it, the citation pattern it generates, is visible immediately to anyone monitoring the literature.
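The timing arithmetic behind the preprint example can be sketched in a few lines of Python. The dates below are hypothetical, and the sketch assumes the simple case in which the application publishes exactly 18 months after its filing date; actual publication dates vary with priority claims and office practice.

```python
from datetime import date

PUBLICATION_LAG_MONTHS = 18  # standard delay described in the text


def add_months(d: date, months: int) -> date:
    """Shift a date forward by whole months, clamping the day to 28 so it stays valid."""
    index = d.year * 12 + (d.month - 1) + months
    year, month0 = divmod(index, 12)
    return date(year, month0 + 1, min(d.day, 28))


def earliest_patent_visibility(filing_date: date) -> date:
    """Approximate first date the application could appear in patent databases."""
    return add_months(filing_date, PUBLICATION_LAG_MONTHS)


# Hypothetical timeline: application filed shortly before the preprint is posted.
filing = date(2024, 12, 1)
preprint_posted = date(2024, 12, 15)

visible = earliest_patent_visibility(filing)
lead_days = (visible - preprint_posted).days

print(visible)    # 2026-06-01
print(lead_days)  # days of advance notice gained by monitoring the preprint
```

Under these assumed dates, a team monitoring preprints would see the work roughly a year and a half before a team relying on the patent record alone.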

Peer-reviewed journal publications, while slower than preprints, still generally precede patent publication and provide richer methodological detail than patent claims offer. More importantly, they reveal the connective tissue of a research program in ways that patent filings deliberately obscure. Patent claims are drafted to be as broad as defensible. Scientific publications are written to be as specific and reproducible as possible. For an IP team trying to understand not just what a competitor has claimed but what they can actually do, the scientific record is indispensable.

This temporal gap creates a specific, recurring strategic failure mode. An IP team conducting a patent landscape analysis in a technology domain will systematically miss the most recent competitive activity. The landscape they present to R&D leadership reflects where competitors were positioned roughly two years ago, not where they are today or where they are headed. For prior art searches, this delay is somewhat less consequential because the relevant question is historical. But for forward-looking decisions about where to direct R&D investment, which technology trajectories are accelerating, and which competitors are pivoting into adjacent spaces, the patent record is structurally behind the curve.

Most patent analytics platforms have begun incorporating scientific literature to some degree, but in nearly every case the integration is shallow. Literature appears as a supplementary data layer rather than a co-equal analytical signal. The search architectures were designed around patent classification systems and IPC/CPC codes, not the way scientific research is structured, cited, and built upon. The result is that literature coverage exists as a checkbox feature rather than a deeply integrated component of the analytical workflow that generates strategic recommendations.

An enterprise R&D team that monitors scientific literature alongside patents effectively moves its competitive early warning system forward by six to eighteen months. That is not an incremental improvement. It is the difference between recognizing a competitive shift in time to respond and discovering it after the window for response has closed.

The Commercial Intelligence Gap: What the Market Is Actually Doing

The second gap is commercial, and it is wider than most IP teams acknowledge. Patent data tells you what companies have invented and chosen to protect. It tells you nothing about what the market is actually doing with those inventions, or what is happening in the broader competitive landscape outside of patent strategy entirely.

This gap manifests across several specific categories of missing intelligence, each of which can independently change the strategic calculus for an R&D investment decision.

Startup and new entrant activity is perhaps the most dangerous blind spot. Early-stage companies frequently operate for years before generating meaningful patent filings. Some pursue trade secret strategies by design. Others simply prioritize speed to market over IP protection in their early stages. Their existence is visible through venture capital deal records, accelerator program participation, grant funding awards, and trade press coverage, but it is invisible in the patent corpus. A patent landscape analysis that shows no filing activity in a technology niche might miss three well-funded startups pursuing the same approach, each backed by $20 million in Series A funding and 18 months ahead of where the patent record suggests the field currently stands.

Venture capital investment patterns provide perhaps the clearest forward-looking signal of where commercial conviction is forming. When multiple institutional investors place concentrated bets on a particular technology approach, they are creating a market signal that is distinct from and often earlier than patent activity. A technology domain that shows minimal patent filings but $500 million in aggregate VC funding over the past two years is not white space. It is a market that is building commercial momentum through channels that patent analytics cannot see. Conversely, a domain with dense patent filing but declining venture interest may signal that commercial enthusiasm is fading even as legal protection intensifies, a pattern that often precedes market contraction.

Regulatory activity creates hard constraints and clear signals about commercialization timelines that patent data cannot capture. In pharmaceuticals, medical devices, chemicals, and energy, regulatory approvals and submissions often determine whether a technology reaches market more than patent strategy does. A patent landscape might show dense filing activity in a therapeutic area without revealing that two leading candidates have already received FDA breakthrough therapy designation, fundamentally changing the competitive calculus for any new entrant. A freedom to operate analysis might clear a pathway for product development without surfacing that the regulatory pathway itself is obstructed by pending rulemaking or classification disputes.

Mergers and acquisitions reshape competitive landscapes in ways that patent data captures only partially and with significant delay. When a major chemical company acquires a specialty materials startup, the strategic implications for every competitor in that space are immediate. The acquiring company's intent, which markets they plan to enter, which product lines they plan to expand, which competing approaches are being consolidated, is visible in SEC filings, press releases, analyst reports, and industry databases. It is not visible in the patent assignment records that may take months to update.

These are not edge cases. They describe the normal operating environment for enterprise R&D. And they converge on a single problem: the most consequential competitive dynamics in most technology markets unfold partially or entirely outside the patent system. An intelligence model that sees only patent data is not seeing the full competitive landscape. It is seeing one layer of it, rendered in increasingly high resolution by increasingly sophisticated tools, while the other layers remain invisible.

This is where the white space fallacy becomes most dangerous. An IP white space, a region of a technology landscape where few or no active patents exist, is routinely flagged as an area of potential opportunity. As DrugPatentWatch's analysis of pharmaceutical R&D portfolio strategy notes, an IP white space is a starting point for investigation, not a validated opportunity (7). The critical question is always why the space is empty. Patent data cannot answer that question. Commercial intelligence, scientific literature, and regulatory data can.

The Expanding Mandate of the IP Team

These gaps matter more today than they did a decade ago because the role of the enterprise IP team has fundamentally expanded. In most Fortune 1000 organizations, the IP function is no longer responsible solely for patent prosecution, portfolio management, and infringement risk assessment. It is increasingly expected to deliver strategic intelligence that informs R&D investment decisions, technology scouting priorities, partnership and licensing strategy, and business development positioning. The IP team has become, whether by design or by default, the primary intelligence function for the company's innovation strategy.

This expanded mandate is a direct consequence of how expensive and risky R&D has become. New product failure rates across industries range from 35 to 49 percent, according to research compiled by the Product Development and Management Association (8). In pharmaceuticals, overall drug development success rates average roughly 14 percent from Phase I to FDA approval, according to a 2025 analysis published in Drug Discovery Today (9). Gartner reported in 2023 that 87 percent of R&D projects never reach the production phase (10). Two-thirds of new products fail within two years of launch, according to Columbia Business School research (11). These failure rates have many causes, but a significant and underappreciated contributor is the tendency to validate technical opportunity through patent analysis without simultaneously validating commercial opportunity through market and competitive intelligence.

When an IP team is responsible not only for delivering prior art analysis but also for coupling that analysis with strategic recommendations for R&D direction and business development, the team needs to see the complete picture. A prior art search that identifies relevant existing claims is necessary but not sufficient. The team also needs to know whether the technology domain is commercially active, whether scientific literature suggests the approach is gaining or losing technical momentum, whether regulatory pathways are clear or obstructed, whether startups are entering the space with venture backing, and whether recent M&A activity signals that larger competitors are consolidating positions.

Freedom to operate analysis illustrates this dynamic clearly. FTO assessments determine whether a company can develop, manufacture, and sell a product without infringing existing patents in target markets. The financial stakes are concrete. Patent litigation averages $2 to $5 million through trial, and courts can issue injunctions that halt product sales entirely (12). An FTO analysis typically costs between $5,000 and $20,000 (13). But an FTO clearance that addresses only the legal dimension of commercialization risk, without simultaneously assessing commercial viability and scientific trajectory, can lead R&D teams to invest heavily in development programs that are legally clear but commercially nonviable, or that arrive at market three years behind a competitor who was visible in the literature but invisible in the patent record.

The IP team that delivers FTO clearance alongside scientific trajectory analysis, market context, and competitive commercial intelligence is delivering fundamentally more valuable guidance than the team that delivers a legal opinion in isolation. And the difference between those two deliverables is not analytical skill. It is access to data.

Researchers writing in Microbial Biotechnology noted in their analysis of patent landscape methodology that outcomes of patent landscape analyses can prevent replication of research that has already been performed and reduce waste of limited resources, but emphasized that these analyses are most effective when combined with broader scientific and commercial intelligence rather than treated as standalone decision tools (14). That observation, published in an academic context, describes precisely the operational challenge that enterprise IP teams navigate every day.

What an Integrated Intelligence Model Actually Looks Like

Closing these gaps does not require IP teams to become market researchers, literature analysts, or venture capital scouts. It requires access to a platform that integrates patent data with the broader universe of signals that determine whether a technology opportunity is technically viable, commercially real, and strategically sound.

An effective enterprise R&D intelligence platform connects several data streams that have traditionally been siloed across different tools, subscriptions, and departments. Patent filings and grants across global jurisdictions form the foundation, as they should. Scientific literature, including peer-reviewed publications, preprints, and conference proceedings, provides the temporal advantage and technical depth that patent claims alone cannot convey. Commercial data layers, including venture capital investment, M&A activity, regulatory filings, startup formation data, and competitive market analysis, provide the demand signals that distinguish genuine opportunity from empty space. Grant funding records from government agencies reveal where public investment is flowing and where institutional support exists for specific research directions.

The analytical power comes not from having these data types available in separate tabs but from mapping the relationships between them automatically. When a patent landscape shows sparse filing in a materials chemistry domain, but the scientific literature shows accelerating publication volume from three well-funded university groups, and the commercial data shows two Series A rounds in adjacent startups over the past year, and the regulatory record shows favorable classification precedent in the primary target market, those signals together tell a story that no individual data stream can tell alone. The technology is early-stage, gaining scientific momentum, attracting commercial investment, and facing a clear regulatory path. That is a qualitatively different strategic input than a patent landscape report that says the space looks open.
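One way to picture how such signals might be read together is the sketch below. It is purely illustrative and does not describe any platform's actual method: the `DomainSignals` fields, the threshold, and the labels returned by `interpret` are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class DomainSignals:
    """Hypothetical summary of one technology domain across four data streams."""
    active_patent_families: int    # patent landscape density
    publication_growth_pct: float  # year-over-year change in papers and preprints
    recent_funding_rounds: int     # venture rounds in adjacent startups
    regulatory_path_clear: bool    # favorable classification precedent exists


# Hypothetical cutoff, chosen for illustration only.
SPARSE_PATENT_THRESHOLD = 10


def interpret(signals: DomainSignals) -> str:
    """Combine the four signals into a coarse reading of the domain."""
    if signals.active_patent_families >= SPARSE_PATENT_THRESHOLD:
        return "crowded patent landscape"
    momentum = signals.publication_growth_pct > 0
    funded = signals.recent_funding_rounds > 0
    if momentum and funded and signals.regulatory_path_clear:
        return "early-stage opportunity with momentum"
    if not momentum and not funded:
        return "empty space: investigate why"
    return "mixed signals: needs review"


# Sparse patents, growing literature, two funding rounds, clear regulatory path.
print(interpret(DomainSignals(3, 40.0, 2, True)))
# Sparse patents, but no scientific or commercial activity either.
print(interpret(DomainSignals(3, -5.0, 0, True)))
```

The two example domains have the same patent count yet lead to different readings, which is the point of the paragraph above: a sparse patent landscape only becomes interpretable once the other streams are considered alongside it.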

Cypris was built specifically to deliver this integration. The platform aggregates more than 500 million patents and scientific papers alongside commercial intelligence signals, including startup activity, venture funding, regulatory data, and competitive market intelligence, into a unified search and analysis environment designed for R&D teams rather than patent attorneys. Its proprietary R&D ontology maps relationships across data types automatically, enabling teams to identify not just what has been patented but what is being published, what is being commercialized, what is being funded, and where genuine opportunity exists. Official API partnerships with OpenAI, Anthropic, and Google enable AI-driven synthesis across the full data set, and enterprise-grade security meets the requirements of Fortune 500 R&D organizations. Hundreds of enterprise teams and thousands of researchers across R&D, IP, and product development trust the platform to close the scientific and commercial intelligence gaps that patent-only tools leave open.

The structural distinction is important. The patent analytics vendors that dominate current enterprise spending were architected around patent data as the primary or exclusive intelligence source. Their datasets, while varying in interface quality and AI capability, draw from the same underlying patent offices and classification systems. They compete on search refinement, visualization, and workflow integration within the patent domain. Cypris occupies a different position, treating patent data as one essential layer of a multi-source intelligence model rather than the entire model itself. For IP teams whose mandate now extends to R&D strategy and business development, that structural difference determines whether the intelligence they deliver is complete enough to support the decisions it is being asked to inform.

The Cost of the Status Quo

Enterprise IP teams that continue to rely exclusively on patent data for R&D strategy recommendations are accepting a specific, compounding risk. They are advising billion-dollar investment decisions based on intelligence that systematically excludes the scientific momentum signals that precede patent filings by months or years, the commercial viability signals that determine whether inventions reach markets, and the competitive dynamics that unfold entirely outside the patent system. Every quarter that passes without closing these gaps is a quarter in which R&D investments are being directed by an incomplete map.

In an environment where two-thirds of new products fail within two years, where nearly nine in ten R&D projects never reach production, and where the temporal gap between scientific discovery and patent publication continues to widen, the margin for error is already thin. Narrowing the intelligence base to patent data alone, regardless of how sophisticated the analytics platform, makes that margin thinner.

The patent analytics market is growing for good reason. Patent data is foundational to any serious R&D intelligence capability. But a foundation is not the same as completeness. The organizations that will make the best R&D investment decisions over the next decade will be the ones whose IP teams see the full picture (patents, scientific literature, and commercial reality together), rather than the ones whose teams see a single layer rendered in increasingly high resolution while the rest remains dark.

Frequently Asked Questions

What is the commercial intelligence gap in patent landscaping?

The commercial intelligence gap refers to the systematic exclusion of market data, scientific literature, venture capital activity, regulatory signals, startup activity, and M&A intelligence from the patent landscape analyses that enterprise IP teams use to advise R&D investment decisions. Traditional patent landscaping tools analyze only patent filings and grants, which capture invention activity but not commercial viability, scientific momentum, customer adoption, or market timing. This gap means that white space identified through patent analysis alone may represent areas with no commercial potential rather than genuine opportunities, and dense patent areas may be incorrectly flagged as saturated when they actually represent high-growth markets with strong venture funding and regulatory momentum.

Why do scientific publications provide earlier competitive signals than patents?

The standard patent publication cycle introduces an 18-month delay between filing and public disclosure, meaning that competitor activity visible in patent databases reflects research conducted at least 18 months earlier. Scientific publications, particularly preprints on platforms like arXiv, bioRxiv, and ChemRxiv, are typically released within weeks of research completion. Monitoring scientific literature alongside patent data therefore moves an enterprise R&D team's early warning system forward by six to eighteen months, providing advance notice of competitive technical developments that would otherwise remain invisible until they appeared in patent databases.
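The timing argument can be made concrete with a small calculation. The sketch below compares when the same research would first become visible through a preprint versus a published patent application. Only the 18-month publication delay comes from the answer above; the example dates, the `add_months` helper, and the four-week preprint lag are illustrative assumptions, not measured data.

```python
from datetime import date, timedelta

# Standard filing-to-publication delay described in the text above.
PATENT_PUBLICATION_DELAY_MONTHS = 18


def add_months(d: date, months: int) -> date:
    """Shift a date forward by whole months (day clamped to 28 for simplicity)."""
    total = d.year * 12 + (d.month - 1) + months
    return date(total // 12, total % 12 + 1, min(d.day, 28))


def lead_time_days(research_done: date, filing: date,
                   preprint_lag_weeks: int = 4) -> int:
    """Days by which a preprint precedes the published patent application.

    preprint_lag_weeks is an assumed value, not a sourced statistic.
    """
    preprint_visible = research_done + timedelta(weeks=preprint_lag_weeks)
    patent_visible = add_months(filing, PATENT_PUBLICATION_DELAY_MONTHS)
    return (patent_visible - preprint_visible).days


# Hypothetical competitor timeline: research finishes, filing follows later.
days = lead_time_days(research_done=date(2024, 1, 15), filing=date(2024, 3, 1))
print(f"Preprint visible roughly {days / 30.4:.1f} months before the patent")
```

The actual lead time depends on how long after completing the research a competitor files and whether a preprint is released at all, so the result should be read as a rough bound rather than a forecast.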

Why is patent data alone insufficient for freedom to operate decisions?

Freedom to operate analysis determines whether a product can be commercialized without infringing existing patents, and patent data is essential for this purpose. However, FTO analysis addresses only the legal dimension of commercialization risk. A clear FTO pathway does not validate that a viable market exists, that manufacturing is economically feasible, that regulatory approval is achievable, or that competitive commercial activity in the space makes market entry practical. Enterprise R&D teams that receive FTO clearance without accompanying commercial and scientific intelligence may invest heavily in product development only to discover that the market cannot support the investment or that competitors have advanced through non-patent channels.

How has the role of enterprise IP teams changed?

In most Fortune 1000 organizations, IP teams are no longer responsible solely for patent prosecution and portfolio management. They are increasingly expected to deliver strategic intelligence that informs R&D investment decisions, technology scouting priorities, partnership and licensing strategy, and business development positioning. This expanded mandate means that IP teams need access to scientific literature, commercial market data, venture capital trends, regulatory intelligence, and M&A activity alongside traditional patent data. Teams that can deliver prior art analysis coupled with commercial viability assessment and scientific trajectory context provide fundamentally more valuable strategic guidance than teams limited to patent-only intelligence.

What are the risks of treating patent white space as commercial opportunity?

Patent white space, meaning technology areas with few or no active patent filings, can indicate genuine opportunity, but it can also indicate that previous investigators encountered insurmountable technical barriers, that no viable commercial market exists, that competitors are pursuing the technology through trade secrets rather than patents, or that well-funded startups are developing the technology but have not yet filed. Treating white space as validated opportunity without overlaying scientific literature trends, venture capital activity, regulatory data, and competitive commercial intelligence risks directing R&D investment into areas where products cannot be manufactured economically, where customer demand does not exist, or where the competitive window has already narrowed beyond what patent data reveals.
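One way to picture the overlay described above is as a simple triage rule applied to each low-density patent area. The following is a minimal sketch under stated assumptions: the `AreaSignals` fields, the threshold of five filings, and the category labels are all hypothetical and do not represent any platform's actual scoring method.

```python
from dataclasses import dataclass


@dataclass
class AreaSignals:
    """Hypothetical per-technology-area counts; field names are illustrative."""
    active_patent_filings: int
    recent_papers: int
    venture_rounds: int


def triage_white_space(s: AreaSignals, patent_threshold: int = 5) -> str:
    """Classify an area by overlaying non-patent signals on patent density.

    patent_threshold is an assumed cutoff, not an industry standard.
    """
    if s.active_patent_filings > patent_threshold:
        return "not white space"
    if s.venture_rounds > 0:
        # Funded startups may be active but have not yet filed or published.
        return "contested: funded activity not yet visible in patents"
    if s.recent_papers == 0:
        # No filings, no papers, no funding: possible technical or market dead end.
        return "unvalidated: no scientific or commercial momentum"
    return "candidate: check technical barriers and market demand"


# Hypothetical areas that look identical in a patent-only view.
areas = {
    "area_a": AreaSignals(active_patent_filings=2, recent_papers=40, venture_rounds=0),
    "area_b": AreaSignals(active_patent_filings=1, recent_papers=0, venture_rounds=0),
    "area_c": AreaSignals(active_patent_filings=3, recent_papers=12, venture_rounds=4),
}
for name, signals in areas.items():
    print(name, "->", triage_white_space(signals))
```

All three example areas would appear as white space in a patent-only landscape; the extra signals are what separate them. A real assessment would also need trade secret and regulatory context, which a count-based rule like this cannot capture.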

How much does patent litigation cost if freedom to operate analysis is insufficient?

Patent litigation in the United States typically costs $2 million to $5 million through trial, and damages can include reasonable royalties, lost profits, and, in cases of willful infringement, treble damages. Courts may also issue injunctions that halt product sales entirely, which can eliminate an established market position. Freedom to operate analysis typically costs between $5,000 and $20,000, making it a small fraction of potential litigation exposure, but the quality of FTO analysis depends on the comprehensiveness of the underlying search and the breadth of intelligence applied to the results.
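The "small fraction" can be bounded directly from the two cost ranges quoted above. This short sketch uses only those figures; the function name is an illustrative choice, and the calculation ignores damages and injunction losses, which would make the ratio smaller still.

```python
# Cost ranges in US dollars, taken from the answer above.
FTO_COST_RANGE = (5_000, 20_000)
LITIGATION_COST_RANGE = (2_000_000, 5_000_000)


def cost_ratio_bounds(fto: tuple[int, int],
                      litigation: tuple[int, int]) -> tuple[float, float]:
    """Return the (lowest, highest) ratio of FTO cost to litigation cost."""
    lowest = fto[0] / litigation[1]   # cheapest FTO vs. most expensive trial
    highest = fto[1] / litigation[0]  # priciest FTO vs. least expensive trial
    return lowest, highest


low, high = cost_ratio_bounds(FTO_COST_RANGE, LITIGATION_COST_RANGE)
print(f"FTO analysis costs {low:.1%} to {high:.1%} of litigation through trial")
```

Even at the unfavorable end of both ranges, the FTO analysis costs about one percent of what litigation through trial would.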

Citations

Fortune Business Insights, "Patent Analytics Market Size, Share and Growth by 2032," 2025.

WIPO Global Innovation Index 2025, "Global Innovation Tracker."

WIPO, "End of Year Edition: Global R&D Spending Grew Again in 2024," December 2025.

PatentPC, "Patent Statistics 2024: What the Numbers Tell Us," 2024.

Anaqua, "2024 Analysis of USPTO Patent Statistics," January 2025.

GetFocus, "How R&D Teams Can Use Patent Trends to Forecast Emerging Technologies," 2025.

DrugPatentWatch, "Navigating and De-Risking the Pharmaceutical R&D Portfolio," December 2025.

PDMA Best Practices Study; compiled by StudioRed, "Product Development Statistics for 2025."

ScienceDirect/Drug Discovery Today, "Benchmarking R&D Success Rates of Leading Pharmaceutical Companies: An Empirical Analysis of FDA Approvals (2006–2022)," January 2025.

Gartner, 2023; compiled by Sourcing Innovation, "Two and a Half Decades of Project Failure," October 2024.

Columbia Business School Publishing; compiled by StudioRed, "Product Development Statistics for 2025."

Cypris, "How to Conduct a Freedom-to-Operate (FTO) Analysis: Complete Guide for R&D Teams."

IamIP, "Understanding Patent Lifetimes and Costs in 2025," July 2025.

Van Rijn and Timmis, "Patent Landscape Analysis—Contributing to the Identification of Technology Trends and Informing Research and Innovation Funding Policy," Microbial Biotechnology, PMC.