
Executive Summary

In 2024, US patent infringement jury verdicts totaled $4.19 billion across 72 cases. Twelve individual verdicts exceeded $100 million. The largest single award, $857 million in General Access Solutions v. Cellco Partnership (Verizon), exceeded the annual R&D budget of many mid-market technology companies. In the first half of 2025 alone, total damages reached an additional $1.91 billion.

The consequences of incomplete patent intelligence are not abstract. In what has become one of the most instructive IP disputes in recent history, Masimo’s pulse oximetry patents triggered a US import ban on certain Apple Watch models, forcing Apple to disable its blood oxygen feature across an entire product line, halt domestic sales of affected models, invest in a hardware redesign, and ultimately face a $634 million jury verdict in November 2025. Apple—a company with one of the most sophisticated intellectual property organizations on earth—spent years in litigation over technology it might have designed around during development.

For organizations with fewer resources than Apple, the risk calculus is starker. A mid-size materials company, a university spinout, or a defense contractor developing next-generation battery technology cannot absorb a nine-figure verdict or a multi-year injunction. For these organizations, the patent landscape analysis conducted during the development phase is the primary risk mitigation mechanism. The quality of that analysis is not a matter of convenience. It is a matter of survival.

And yet, a growing number of R&D and IP teams are conducting that analysis using general-purpose AI tools—ChatGPT, Claude, Microsoft Co-Pilot—that were never designed for patent intelligence and are structurally incapable of delivering it.

This report presents the findings of a controlled comparison study in which identical patent landscape queries were submitted to four AI-powered tools: Cypris (a purpose-built R&D intelligence platform), ChatGPT (OpenAI), Claude (Anthropic), and Microsoft Co-Pilot. Two technology domains were tested: solid-state lithium-sulfur battery electrolytes using garnet-type LLZO ceramic materials (freedom-to-operate analysis), and bio-based polyamide synthesis from castor oil derivatives (competitive intelligence).

The results reveal a significant and structurally persistent gap. In Test 1, Cypris identified over 40 active US patents and published applications with granular FTO risk assessments. Claude identified 12. ChatGPT identified 7, several with fabricated attribution. Co-Pilot identified 4. Among the patents surfaced exclusively by Cypris were filings rated as “Very High” FTO risk that directly claim the technology architecture described in the query. In Test 2, Cypris cited over 100 individual patent filings with full attribution to substantiate its competitive landscape rankings. No general-purpose model cited a single patent number.

The most active sectors for patent enforcement—semiconductors, AI, biopharma, and advanced materials—are the same sectors where R&D teams are most likely to adopt AI tools for intelligence workflows. The findings of this report have direct implications for any organization using general-purpose AI to inform patent strategy, competitive intelligence, or R&D investment decisions.

1. Methodology

A single patent landscape query was submitted verbatim to each tool on March 27, 2026. No follow-up prompts, clarifications, or iterative refinements were provided. Each tool received one opportunity to respond, mirroring the workflow of a practitioner running an initial landscape scan.

1.1 Query

Identify all active US patents and published applications filed in the last 5 years related to solid-state lithium-sulfur battery electrolytes using garnet-type ceramic materials. For each, provide the assignee, filing date, key claims, and current legal status. Highlight any patents that could pose freedom-to-operate risks for a company developing a Li₇La₃Zr₂O₁₂ (LLZO)-based composite electrolyte with a polymer interlayer.
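To make the query's hard constraints concrete, they can be expressed as a structured filter over patent records: US jurisdiction, active legal status, a filing date within five years of the March 27, 2026 test date, and a classification code. The record schema, the placeholder IDs, and the use of CPC group H01M 10/0562 (to our understanding, the group for solid inorganic electrolytes) are assumptions for illustration; this sketch does not describe how any tool in this study works internally. The substantive criteria (garnet-type LLZO chemistry, claim scope, FTO relevance) still require reading the claims.

```python
from dataclasses import dataclass
from datetime import date


# Hypothetical record shape; real patent data sources use their own schemas.
@dataclass
class PatentRecord:
    number: str                  # placeholder IDs below, not real patents
    assignee: str
    filing_date: date
    jurisdiction: str
    legal_status: str            # e.g. "active", "abandoned", "expired"
    cpc_codes: tuple[str, ...]


def five_years_before(d: date) -> date:
    """Same calendar day five years earlier (Feb 29 falls back to Feb 28)."""
    try:
        return d.replace(year=d.year - 5)
    except ValueError:
        return d.replace(year=d.year - 5, day=28)


def in_scope(rec: PatentRecord, as_of: date, cpc_prefix: str) -> bool:
    """Apply the query's hard constraints; claim analysis is out of scope."""
    return (
        rec.jurisdiction == "US"
        and rec.legal_status == "active"
        and rec.filing_date >= five_years_before(as_of)
        and any(code.startswith(cpc_prefix) for code in rec.cpc_codes)
    )


TEST_DATE = date(2026, 3, 27)
# Assumption: H01M10/0562 covers solid inorganic electrolytes; confirm
# against the current CPC scheme before relying on it.
CPC_PREFIX = "H01M10/0562"

records = [
    PatentRecord("EXAMPLE-1", "Assignee A", date(2022, 6, 1), "US", "active", ("H01M10/0562",)),
    PatentRecord("EXAMPLE-2", "Assignee B", date(2019, 1, 15), "US", "active", ("H01M10/0562",)),
    PatentRecord("EXAMPLE-3", "Assignee C", date(2023, 9, 9), "US", "abandoned", ("H01M10/0562",)),
]

hits = [r.number for r in records if in_scope(r, TEST_DATE, CPC_PREFIX)]
print(hits)
```

Only EXAMPLE-1 passes: EXAMPLE-2 was filed before March 27, 2021, and EXAMPLE-3 is abandoned.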

1.2 Tools Evaluated

1.3 Evaluation Criteria

Each response was assessed across six dimensions: (1) number of relevant patents identified, (2) accuracy of assignee attribution, (3) completeness of filing metadata (dates, legal status), (4) depth of claim analysis relative to the proposed technology, (5) quality of FTO risk stratification, and (6) presence of actionable design-around or strategic guidance.

2. Findings

2.1 Coverage Gap

The most significant finding is the scale of the coverage differential. Cypris identified over 40 active US patents and published applications spanning LLZO-polymer composite electrolytes, garnet interface modification, polymer interlayer architectures, lithium-sulfur specific filings, and adjacent ceramic composite patents. The results were organized by technology category with per-patent FTO risk ratings.

Claude identified 12 patents organized in a four-tier risk framework. Its analysis was structurally sound and correctly flagged the two highest-risk filings (Solid Energies US 11,967,678 and the LLZO nanofiber multilayer US 11,923,501). It also identified the University of Maryland/Wachsman portfolio as a concentration risk and noted the NASA SABERS portfolio as a licensing opportunity. However, it missed the majority of the landscape, including the entire Corning portfolio, GM's interlayer patents, the Korea Institute of Energy Research three-layer architecture, and the Hon Hai/SolidEdge lithium-sulfur-specific filing.

ChatGPT identified 7 patents, but the quality of attribution was inconsistent. It listed assignees as "Likely DOE /national lab ecosystem" and "Likely startup / defense contractor cluster" for two filings, language that indicates the model was inferring rather than retrieving assignee data. In a freedom-to-operate context, an unverified assignee attribution is at best functionally equivalent to no attribution, as it cannot support a licensing inquiry or risk assessment.

Co-Pilot identified 4 US patents. Its output was the most limited in scope: it missed the Solid Energies portfolio entirely, as well as the UMD/Wachsman portfolio, Gelion/Johnson Matthey, NASA SABERS, and all Li-S-specific LLZO filings.

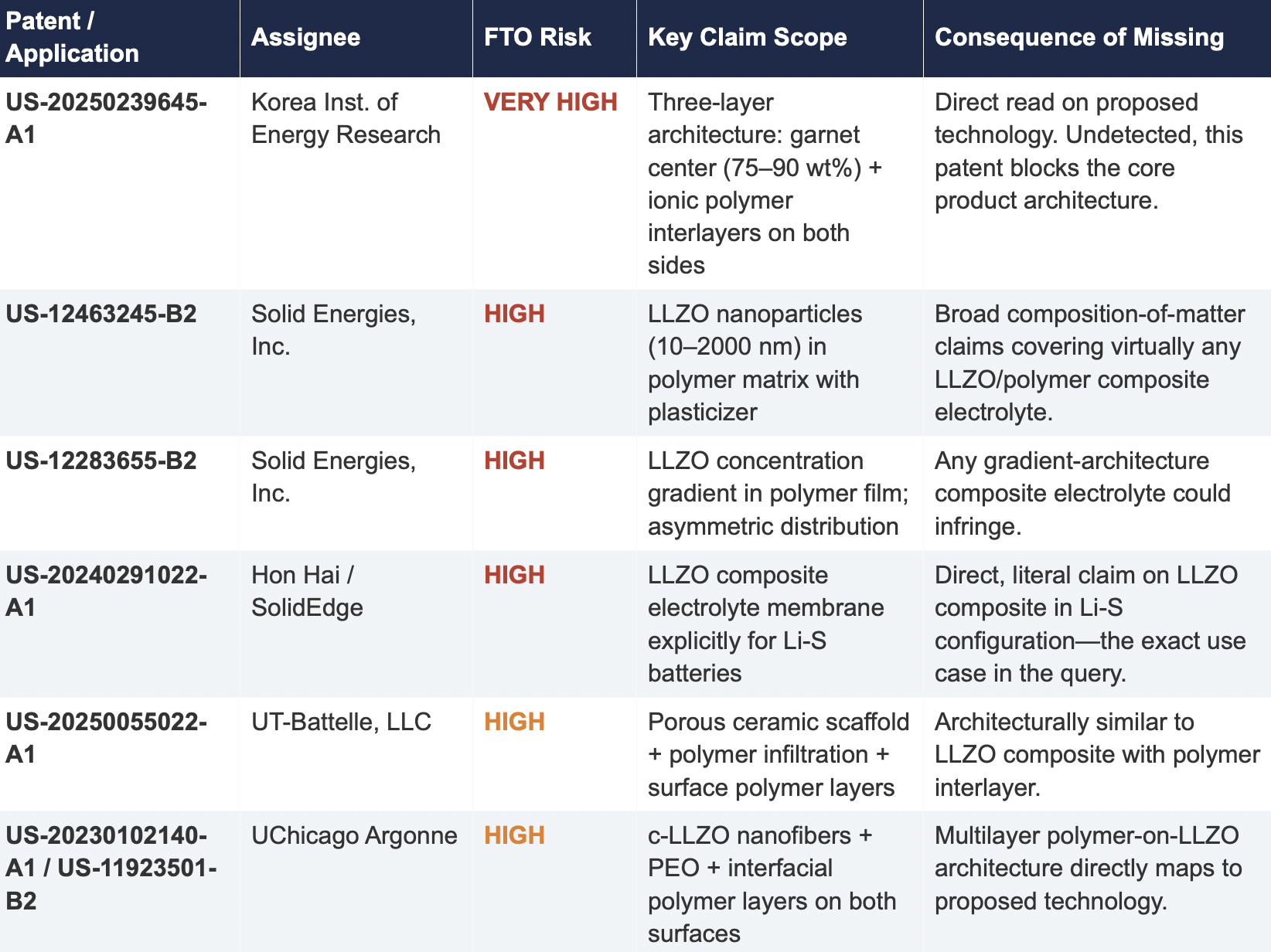

2.2 Critical Patents Missed by Public Models

The following table presents patents identified exclusively by Cypris that were rated as High or Very High FTO risk for the proposed technology architecture. None were surfaced by any general-purpose model.

2.3 Patent Fencing: The Solid Energies Portfolio

Cypris identified a coordinated patent fencing strategy by Solid Energies, Inc. that no general-purpose model detected at scale. Solid Energies holds at least four granted US patents and one published application covering LLZO-polymer composite electrolytes across compositions (US-12463245-B2), gradient architectures (US-12283655-B2), electrode integration (US-12463249-B2), and manufacturing processes (US-20230035720-A1). Claude identified one Solid Energies patent (US 11,967,678) and correctly rated it as the highest-priority FTO concern but did not surface the broader portfolio. ChatGPT and Co-Pilot identified zero Solid Energies filings.

The practical significance is that a company relying on any individual patent hit would underestimate the scope of Solid Energies' IP position. Because the fencing strategy covers the composition, the architecture, the electrode integration, and the manufacturing method, identifying a design-around for one patent does not resolve the FTO exposure created by the portfolio as a whole. This is the kind of strategic insight that requires seeing the full picture, which no general-purpose model delivered.
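The fencing point can be illustrated with a deliberately simplified model of the all-elements rule: a product literally infringes a claim only if it practices every element of that claim. The four patents and their claim elements below are hypothetical stand-ins, not the actual Solid Energies claims, and the model ignores the doctrine of equivalents, claim construction, and validity. Treat it as a sketch of the reasoning, not as an FTO method.

```python
# Deliberately simplified all-elements model: a product literally infringes a
# claim only if it practices every element of that claim. Ignores the
# doctrine of equivalents, claim construction, and validity.

# Hypothetical portfolio: one representative claim per patent, expressed as a
# set of elements. These are stand-ins, not real claims.
PORTFOLIO: dict[str, set[str]] = {
    "composition patent": {"LLZO filler", "polymer matrix"},
    "architecture patent": {"LLZO filler", "polymer interlayer"},
    "electrode patent": {"polymer interlayer", "sulfur cathode"},
    "process patent": {"LLZO filler", "tape casting"},
}


def blocking_patents(product_features: set[str]) -> list[str]:
    """Return the patents whose every claim element appears in the product."""
    return sorted(
        name for name, elements in PORTFOLIO.items() if elements <= product_features
    )


product = {"LLZO filler", "polymer matrix", "polymer interlayer",
           "sulfur cathode", "tape casting"}
print(blocking_patents(product))

# Design around the process patent only: swap tape casting for hot pressing.
redesigned = (product - {"tape casting"}) | {"hot pressing"}
print(blocking_patents(redesigned))
```

Swapping the hypothetical tape-casting step clears the process patent, but the composition, architecture, and electrode patents still read on the redesigned product.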

2.4 Assignee Attribution Quality

ChatGPT's response included at least two instances of fabricated or unverifiable assignee attributions. For US 11,367,895 B1, the listed assignee was "Likely startup / defense contractor cluster." For US 2021/0202983 A1, the assignee was described as "Likely DOE / national lab ecosystem." In both cases, the model appears to have inferred the assignee from contextual patterns in its training data rather than retrieving the information from patent records.

In any operational IP workflow, assignee identity is foundational. It determines licensing strategy, litigation risk, and competitive positioning. A fabricated assignee is more dangerous than a missing one because it creates an illusion of completeness that discourages further investigation. An R&D team receiving this output might reasonably conclude that the landscape analysis is finished when it is not.

3. Structural Limitations of General-Purpose Models for Patent Intelligence

3.1 Training Data Is Not Patent Data

Large language models are trained on web-scraped text. Their knowledge of the patent record is derived from whatever fragments appeared in their training corpus: blog posts mentioning filings, news articles about litigation, snippets of Google Patents pages that were crawlable at the time of data collection. They do not have systematic, structured access to the USPTO database. They cannot query patent classification codes, parse claim language against a specific technology architecture, or verify whether a patent has been assigned, abandoned, or subjected to terminal disclaimer since their training data was collected.

This is not a limitation that improves with scale. A larger training corpus does not produce systematic patent coverage; it produces a larger but still arbitrary sampling of the patent record. The result is that general-purpose models will consistently surface well-known patents from heavily discussed assignees (QuantumScape, for example, appeared in most responses) while missing commercially significant filings from less publicly visible entities (Solid Energies, Korea Institute of Energy Research, Shenzhen Solid Advanced Materials).

3.2 The Web Is Closing to Model Scrapers

The data access problem is structural and worsening. As of mid-2025, Cloudflare reported that among the top 10,000 web domains, the majority now fully disallow AI crawlers such as GPTBot and ClaudeBot via robots.txt. The trend has accelerated from partial restrictions to outright blocks, and the crawl-to-referral ratios reveal the underlying tension: OpenAI's crawlers access approximately 1,700 pages for every referral they return to publishers; Anthropic's ratio exceeds 73,000 to 1.

Patent databases, scientific publishers, and IP analytics platforms are among the most restrictive content categories. A Duke University study in 2025 found that several categories of AI-related crawlers never request robots.txt files at all. The practical consequence is that the knowledge gap between what a general-purpose model "knows" about the patent landscape and what actually exists in the patent record is widening with each training cycle. A landscape query that a general-purpose model partially answered in 2023 may return less useful information in 2026.

3.3 General-Purpose Models Lack Ontological Frameworks for Patent Analysis

A freedom-to-operate analysis is not a summarization task. It requires understanding claim scope, prosecution history, continuation and divisional chains, assignee normalization (a single company may appear under multiple entity names across patent records), priority dates versus filing dates versus publication dates, and the relationship between dependent and independent claims. It requires mapping the specific technical features of a proposed product against independent claim language—not keyword matching.

General-purpose models do not have these frameworks. They pattern-match against training data and produce outputs that adopt the format and tone of patent analysis without the underlying data infrastructure. The format is correct. The confidence is high. The coverage is incomplete in ways that are not visible to the user.
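Assignee normalization, one of the frameworks listed above, is easy to underestimate. A minimal sketch, assuming simple string rules plus a hand-maintained alias table (both hypothetical here), collapses formatting variants of one entity name into a single canonical assignee. The name variants are illustrative, not taken from actual patent records.

```python
import re

# Hypothetical alias table: normalized key -> canonical entity name.
ALIASES = {
    "solid energies": "Solid Energies, Inc.",
}

# Naive list of legal-form suffixes to strip; real data needs far more rules.
LEGAL_SUFFIXES = r"\b(inc|incorporated|corp|corporation|co|ltd|llc|gmbh|ag|sa)\b"


def normalize_assignee(raw: str) -> str:
    """Reduce an assignee string to a comparison key, then apply aliases."""
    key = raw.lower()
    key = re.sub(r"[.,]", " ", key)          # drop punctuation
    key = re.sub(LEGAL_SUFFIXES, " ", key)   # drop legal-form suffixes
    key = re.sub(r"\s+", " ", key).strip()   # collapse whitespace
    return ALIASES.get(key, key)


variants = ["Solid Energies, Inc.", "SOLID ENERGIES INC", "Solid Energies Inc"]
canonical = {normalize_assignee(v) for v in variants}
print(canonical)
```

String rules like these cannot detect subsidiaries, acquisitions, or reassignments; those relationships have to come from structured ownership data, which is exactly the infrastructure this section says general-purpose models lack.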

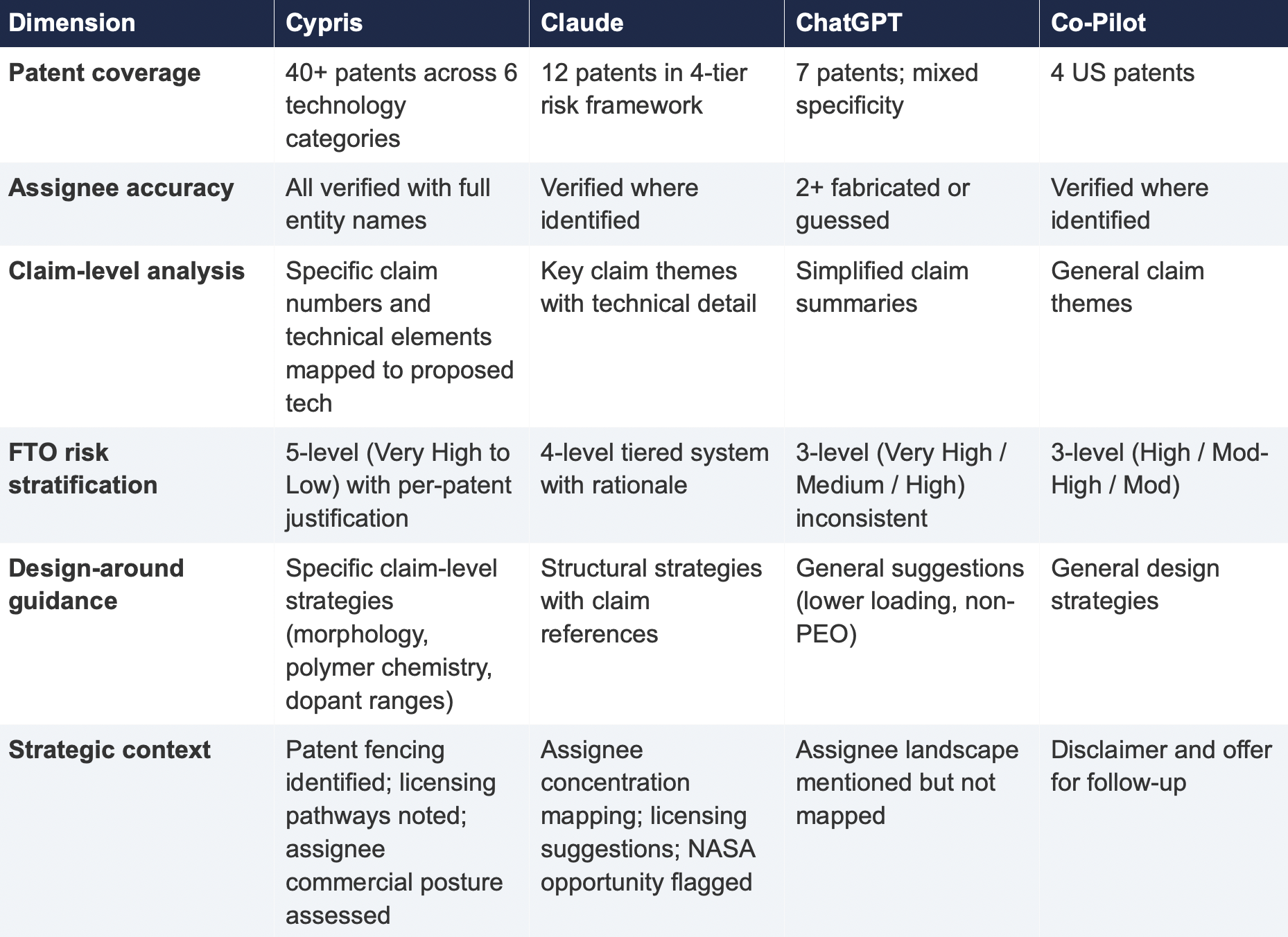

4. Comparative Output Quality

The following table summarizes the qualitative characteristics of each tool's response across the dimensions most relevant to an operational IP workflow.

5. Implications for R&D and IP Organizations

5.1 The Confidence Problem

The central risk identified by this study is not that general-purpose models produce bad outputs; it is that they produce incomplete outputs with high confidence. Each model delivered its results in a professional format with structured analysis, risk ratings, and strategic recommendations. At no point did any model indicate the boundaries of its knowledge or flag that its results represented a fraction of the available patent record. A practitioner receiving one of these outputs would have no signal that the analysis was incomplete unless they independently validated it against a comprehensive data source.

This creates an asymmetric risk profile: the better the format and tone of the output, the less likely the user is to question its completeness. In a corporate environment where AI outputs are increasingly treated as first-pass analysis, this dynamic incentivizes under-investigation at precisely the moment when thoroughness is most critical.

5.2 The Diversification Illusion

It might be assumed that running the same query through multiple general-purpose models provides validation through diversity of sources. This study suggests otherwise. While the four tools returned different subsets of patents, all operated under the same structural constraints: training data rather than live patent databases, web-scraped content rather than structured IP records, and general-purpose reasoning rather than patent-specific ontological frameworks. Running the same query through three constrained tools does not produce triangulation; it produces three partial views of the same incomplete picture.
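A quick bound makes the point concrete. Even in the best case, where the three general-purpose tools in Test 1 returned no overlapping patents, their combined list could hold at most 12 + 7 + 4 = 23 filings. Taking Cypris's result set (reported as over 40) as the reference, which is itself an assumption, that caps combined coverage at 57.5%; any hits outside the reference set would only lower the figure, and the bound still counts ChatGPT's filings with unverified assignees as valid.

```python
# Test 1 patent counts reported for the three general-purpose tools.
general_purpose_counts = {"Claude": 12, "ChatGPT": 7, "Co-Pilot": 4}

# Cypris reported "over 40" patents; dividing by 40 therefore gives an
# upper bound on coverage of that reference set.
reference_floor = 40

# A union of lists can never be larger than the sum of their sizes, so this
# is the best case: no patent appears in more than one tool's output.
best_case_union = sum(general_purpose_counts.values())
max_coverage = best_case_union / reference_floor

print(best_case_union, f"{max_coverage:.1%}")
```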

5.3 The Appropriate Use Boundary

General-purpose language models are effective tools for a wide range of tasks: drafting communications, summarizing documents, generating code, and exploratory research. The finding of this study is not that these tools lack value but that their value boundary does not extend to decisions that carry existential commercial risk.

Patent landscape analysis, freedom-to-operate assessment, and competitive intelligence that informs R&D investment decisions fall outside that boundary. These are workflows where the completeness and verifiability of the underlying data are not merely desirable but are the primary determinant of whether the analysis has value. A patent landscape that captures 10% of the relevant filings, regardless of how well-formatted or confidently presented, is a liability rather than an asset.

6. Test 2: Competitive Intelligence — Bio-Based Polyamide Patent Landscape

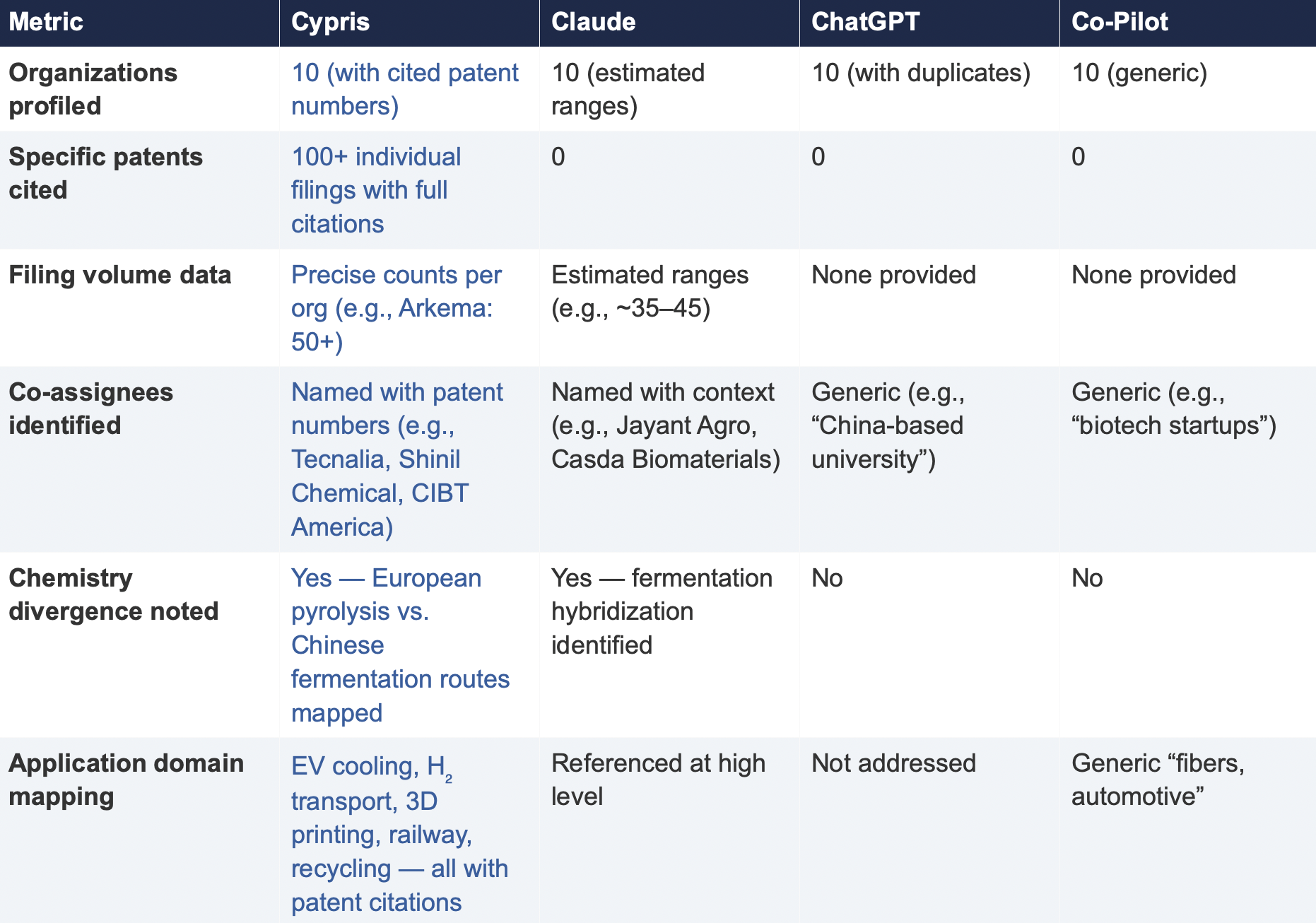

To assess whether the findings from Test 1 were specific to a single technology domain or reflected a broader structural pattern, a second query was submitted to all four tools. This query shifted from freedom-to-operate analysis to competitive intelligence, asking each tool to identify the top 10 organizations by patent filing volume in bio-based polyamide synthesis from castor oil derivatives over the past three years, with summaries of technical approach, co-assignee relationships, and portfolio trajectory.

6.1 Query

6.2 Summary of Results

6.3 Key Differentiators

Verifiability

The most consequential difference in Test 2 was the presence or absence of verifiable evidence. Cypris cited over 100 individual patent filings with full patent numbers, assignee names, and publication dates. Every claim about an organization’s technical focus, co-assignee relationships, and filing trajectory was anchored to specific documents that a practitioner could independently verify in USPTO, Espacenet, or WIPO PATENTSCOPE. No general-purpose model cited a single patent number. Claude produced the most structured and analytically useful output among the public models, with estimated filing ranges, product names, and strategic observations that were directionally plausible. However, without underlying patent citations, every claim in the response requires independent verification before it can inform a business decision. ChatGPT and Co-Pilot offered thinner profiles with no filing counts and no patent-level specificity.
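Verifying citations across USPTO, Espacenet, and WIPO PATENTSCOPE is easier when patent numbers share one format; this report itself mixes styles such as "US 11,967,678", "US-12463245-B2", and "US 2021/0202983 A1". A minimal sketch, assuming only the US grant and pre-grant publication styles that appear in this report, normalizes them to a compact form such as US20210202983A1. It is not a general parser for other jurisdictions or older US numbering.

```python
import re

# Matches the US styles used in this report: grants ("US 11,967,678",
# "US-12463245-B2") and pre-grant publications ("US 2021/0202983 A1",
# "US-20230035720-A1"). Other formats are out of scope for this sketch.
PATTERN = re.compile(
    r"^US[\s-]*"
    r"(?P<number>\d{4}/\d{7}|[\d,]+)"
    r"(?:[\s-]*(?P<kind>[AB]\d))?$"
)


def normalize_us_number(raw: str) -> str:
    """Return a compact form like 'US11967678' or 'US20210202983A1'."""
    match = PATTERN.match(raw.strip().upper())
    if match is None:
        raise ValueError(f"unrecognized patent number: {raw!r}")
    digits = re.sub(r"[,/]", "", match.group("number"))
    return "US" + digits + (match.group("kind") or "")


for raw in ["US 11,967,678", "US-12463245-B2", "US 2021/0202983 A1"]:
    print(normalize_us_number(raw))
```

Kind codes (A1 for a pre-grant publication, B1 or B2 for a granted patent) are kept when present; comparisons that should ignore them can strip the suffix before matching.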

Data Integrity

ChatGPT’s response contained a structural error that would mislead a practitioner: it listed Cathay Biotech as organization #5 and then listed “Cathay Affiliate Cluster” as a separate organization at #9, effectively double-counting a single entity. It repeated this pattern with Toray at #4 and “Toray (Additional Programs)” at #10. In a competitive intelligence context where the ranking itself is the deliverable, this kind of error distorts the landscape and could lead to misallocation of competitive monitoring resources.

Organizations Missed

Cypris identified Kingfa Sci. & Tech. (8–10 filings with a differentiated furan diacid-based polyamide platform) and Zhejiang NHU (4–6 filings focused on continuous polymerization process technology) as emerging players that no general-purpose model surfaced. Both represent potential competitive threats or partnership opportunities that would be invisible to a team relying on public AI tools. Conversely, ChatGPT included organizations such as ANTA and Jiangsu Taiji that appear to be downstream users rather than significant patent filers in synthesis, suggesting the model was conflating commercial activity with IP activity.

Strategic Depth

Cypris’s cross-cutting observations identified a fundamental chemistry divergence in the landscape: European incumbents (Arkema, Evonik, EMS) rely on traditional castor oil routes to 11-aminoundecanoic acid (via pyrolysis) or sebacic acid (via alkaline cleavage), while Chinese entrants (Cathay Biotech, Kingfa) are developing alternative bio-based routes through fermentation and furandicarboxylic acid chemistry. This represents a potential long-term disruption to the castor oil supply chain dependency that Western players have built their IP strategies around. Claude identified a similar theme at a higher level of abstraction. Neither ChatGPT nor Co-Pilot noted the divergence.

6.4 Test 2 Conclusion

Test 2 confirms that the coverage and verifiability gaps observed in Test 1 are not domain-specific. In a competitive intelligence context, where the deliverable is a ranked landscape of organizational IP activity, the same structural limitations apply. General-purpose models can produce plausible-looking top-10 lists with reasonable organizational names, but they cannot anchor those lists to verifiable patent data, they cannot provide precise filing volumes, and they cannot identify emerging players whose patent activity is visible in structured databases but absent from the web-scraped content that general-purpose models rely on.

7. Conclusion

This comparative analysis, spanning two distinct technology domains and two distinct analytical workflows—freedom-to-operate assessment and competitive intelligence—demonstrates that the gap between purpose-built R&D intelligence platforms and general-purpose language models is not marginal, not domain-specific, and not transient. It is structural and consequential.

In Test 1 (LLZO garnet electrolytes for Li-S batteries), the purpose-built platform identified more than three times as many patents as the best-performing general-purpose model and ten times as many as the lowest-performing one. Among the patents identified exclusively by the purpose-built platform were filings rated as Very High FTO risk that directly claim the proposed technology architecture. In Test 2 (bio-based polyamide competitive landscape), the purpose-built platform cited over 100 individual patent filings to substantiate its organizational rankings; no general-purpose model cited a single patent number.

The structural drivers of this gap—reliance on training data rather than live patent feeds, the accelerating closure of web content to AI scrapers, and the absence of patent-specific analytical frameworks—are not transient. They are inherent to the architecture of general-purpose models and will persist regardless of increases in model capability or training data volume.

For R&D and IP leaders, the practical implication is clear: general-purpose AI tools should be used for general-purpose tasks. Patent intelligence, competitive landscaping, and freedom-to-operate analysis require purpose-built systems with direct access to structured patent data, domain-specific analytical frameworks, and the ability to surface what a general-purpose model cannot—not because it chooses not to, but because it structurally cannot access the data.

The question for every organization making R&D investment decisions today is whether the tools informing those decisions have access to the evidence base those decisions require. This study suggests that for the majority of general-purpose AI tools currently in use, the answer is no.

About This Report

This report was produced by Cypris (IP Web, Inc.), an AI-powered R&D intelligence platform serving corporate innovation, IP, and R&D teams at organizations including NASA, Johnson & Johnson, the US Air Force, and Los Alamos National Laboratory. Cypris aggregates over 500 million data points from patents, scientific literature, grants, corporate filings, and news to deliver structured intelligence for technology scouting, competitive analysis, and IP strategy.

The comparative tests described in this report were conducted on March 27, 2026. All outputs are preserved in their original form. Patent data cited from the Cypris reports has been verified against USPTO Patent Center and WIPO PATENTSCOPE records as of the same date. To conduct a similar analysis for your technology domain, contact info@cypris.ai or visit cypris.ai.

The Patent Intelligence Gap - A Comparative Analysis of Verticalized AI-Patent Tools vs. General-Purpose Language Models for R&D Decision-Making


This article was powered by Cypris Q, an AI agent that helps R&D teams instantly synthesize insights from patents, scientific literature, and market intelligence from around the globe.

Executive Summary

GLP-1–based obesity pharmacotherapy has evolved from single-hormone appetite suppression into a platform competition spanning poly-agonist biology, delivery convenience, and body-composition optimization. Across patents and scientific literature, three mega-trends now dominate the landscape.

The first is poly-agonist escalation—the progression from GLP-1 alone to dual and then triple or even quad receptor targeting. Scientific literature increasingly frames unimolecular multi-receptor agonism as the primary route toward bariatric-like weight loss outcomes, combining appetite reduction with enhanced energy expenditure and broader metabolic effects [1, 2, 3]. Preclinical work on optimized tri-agonists demonstrates "best-of-both-worlds" profiles, achieving greater energy expenditure and deeper weight normalization than GLP-1-only comparators [4]. Patent filings mirror this escalation, with claims covering dosing regimens and compositions for tri-agonists and next-wave combinations [5, 6].

The second mega-trend positions delivery and adherence as core IP battlegrounds. Patents have grown dense around oral administration, permeation enhancers, and alternative routes including buccal, sublingual, sustained-release depots, and long-duration implants [7, 8, 9, 10]. This tracks the scientific maturation of oral peptide delivery—most notably SNAC-enabled oral semaglutide—and practical adherence guidance emerging in the literature [11, 12]. The signal is unmistakable: innovation is no longer solely about which molecule works best, but how reliably and scalably it can be delivered to patients.

The third mega-trend is the "quality weight loss" race, with emphasis shifting toward fat loss that preserves lean mass. As GLP-1–driven weight loss scales across populations, the accompanying loss of muscle becomes a strategic vulnerability. Papers and patents increasingly explore combination strategies, particularly ActRII and myostatin pathway modulation, to protect muscle while deepening fat reduction [13, 14, 15]. This trend connects to broader regimen and IP claims for combination therapies and adjuncts in obesity care [16, 17].

Looking ahead, the next three to five years will likely see poly-agonist differentiation, oral and non-injectable access expansion, and composition-of-mass outcomes emerge as decisive competitive edges—each visible in both filing activity and the research frontier [1, 2, 9].

Methodology and Assumptions

This analysis covers the period from January 2020 through December 2025 for both patents and scientific papers. The scope encompasses global patent filings and global scientific literature, supplemented by market signals from widely cited industry reporting and analysis.

One important assumption involves data limitations. Exact global year-by-year patent and paper counts were approximated using representative cluster evidence—the presence of repeated filing themes, repeated assignees, and recurring therapeutic and delivery motifs—rather than a complete bibliometric census. Evidence for acceleration is therefore presented as directional (high, medium, or low) rather than absolute totals.

Competitive Landscape: Market Leaders and Emerging Challengers

The GLP-1 obesity market has crystallized into one of the most concentrated competitive dynamics in pharmaceutical history. Novo Nordisk and Eli Lilly have established commanding positions that extend well beyond current product revenue into strategic patent portfolios, manufacturing scale, and clinical pipeline depth.

The scale of market dominance is striking. The five flagship GLP-1 products from these two companies (Novo's Ozempic, Wegovy, and Rybelsus, alongside Lilly's Mounjaro and Zepbound) have collectively generated over $71 billion in U.S. revenue since 2018, with Ozempic alone accounting for roughly half of that total [38]. Projections suggest cumulative revenue could reach $470 billion by 2030, positioning these treatments among the best-selling pharmaceutical products in history [38]. By mid-2025, Lilly had captured approximately 57% of the U.S. GLP-1 market, with tirzepatide-based products accounting for two-thirds of all patients taking obesity medications [39].

Patent strategy has become central to maintaining this dominance. Both companies have built extensive patent thickets around their core molecules, with Novo Nordisk in particular pursuing aggressive filing strategies across new formulations, indications, and delivery methods. As GLP-1s gain approvals for additional disease areas (Novo is studying semaglutide in addiction, osteoarthritis, and MASH), the companies continue extending patent protection through method-of-use claims that could sustain market exclusivity well beyond initial compound patents [40]. Industry observers have noted that these drugs may prove "perpetually novel" through successive re-patenting for different uses, potentially maintaining monopoly positions even as earlier claims expire [40].

Manufacturing capacity has emerged as an equally important competitive moat. Lilly reported producing more than 1.6 times the salable incretin doses in the first half of 2025 compared to the same period in 2024, with plans for significant additional manufacturing expansion [39]. This supply advantage proved commercially decisive as Lilly gained market share while Novo struggled with capacity constraints. Both companies are racing to build new production facilities, recognizing that meeting global demand requires infrastructure investments measured in billions of dollars.

Despite this concentration, the competitive landscape is evolving rapidly. Over 100 GLP-1 therapies are currently in active development globally, with approximately 25 candidates in mid-to-late stage trials [41]. The clinical pipeline represents diverse approaches to differentiation, including alternative receptor combinations, novel delivery mechanisms, and improved tolerability profiles.

Several pharmaceutical giants are positioning themselves to challenge the incumbents. Roche entered the obesity market through its $2.7 billion acquisition of Carmot Therapeutics, bringing multiple clinical-stage obesity programs including both injectable and oral GLP-1 candidates [42]. The company's CT-388 dual agonist and CT-996 oral formulation are progressing through Phase II trials, with potential market entry expected by 2029. Pfizer, after discontinuing its initial danuglipron candidate due to safety concerns in April 2025, re-entered the race through a $10 billion acquisition of clinical-stage biotech Metsera in November 2025, securing a next-generation obesity pipeline [43].

Amgen's MariTide represents perhaps the most differentiated challenger approach. The compound combines GLP-1 receptor agonism with GIP receptor antagonism—a novel mechanism informed by human genetics research suggesting GIP inhibition as a key factor in reducing body mass [44]. Phase II data showed weight loss of up to approximately 20% at 52 weeks, with monthly dosing that could offer meaningful convenience advantages over weekly injections. Notably, weight loss had not plateaued at 52 weeks, suggesting potential for further reduction with continued treatment [44].

Smaller biotechs are also advancing promising candidates. Viking Therapeutics' VK-2735 dual GLP-1/GIP agonist demonstrated weight loss of up to 14.7% after just 13 weeks in early trials, generating significant investor interest [45]. Structure Therapeutics is developing GSBR-1290, an oral small molecule GLP-1 agonist that could potentially address the manufacturing scalability challenges facing peptide-based injectables—the company has noted its current manufacturing capacity could theoretically supply over 120 million patients [46].

Analysts project that while Novo and Lilly will likely retain nearly 70% of the total market through 2031 due to first-mover advantages and continued pipeline innovation, new entrants could collectively capture approximately $70 billion of what is expected to become a $200 billion annual market [46]. The window for market entry remains open partly due to persistent supply constraints among current manufacturers and partly because the addressable patient population continues expanding as clinical evidence mounts for GLP-1 benefits across obesity, diabetes, MASH, cardiovascular disease, and other indications.

Detailed Analysis

Trend Velocity Assessment

The velocity of each innovation trend reflects the combined strength of patent activity, scientific publication volume, and market signals. This assessment identifies which areas are accelerating fastest and likely to reshape the competitive landscape over the coming years.

Multi-agonist incretins, encompassing dual and triple receptor agonists, show the highest velocity across all indicators. Patent filings have concentrated on sequence optimization, receptor balance, and dosing regimens [5, 6], while scientific reviews increasingly position these compounds as the next frontier beyond single-target GLP-1 therapy [1, 2]. Market analysts have echoed this enthusiasm, with pipeline assessments highlighting tirzepatide's success as validation of the dual-agonist approach and positioning triple agonists as the next wave [18, 19]. The three-to-five year outlook for this category is very high.

Oral and non-injectable GLP-1 delivery has similarly generated substantial momentum. The patent landscape reflects intense focus on permeation enhancers, solid oral compositions, and buccal or sublingual alternatives to injection [7, 8, 9]. Scientific literature has matured around oral peptide delivery mechanisms and real-world adherence implications [11, 12], while market reporting indicates strong commercial interest in removing the injection barrier [18, 20]. Analysts project oral drugs could represent approximately 20% of the estimated $80 billion GLP-1 obesity market by 2030 [47]. This trend carries a high velocity outlook.

Sustained-release depots and implants represent a parallel delivery innovation track. Patents describe self-assembling peptide systems and implantable devices designed for months-long semaglutide release [21, 10], aligning with clinical research on long-acting formulations [22]. Market signals remain moderate as these technologies are earlier in development, but the overall velocity is high given the clear strategic value of reducing dosing frequency.

Lean-mass preservation add-ons have emerged as a distinct innovation category. As awareness grows that GLP-1–induced weight loss can include significant muscle loss, patents have begun claiming combinations with myostatin and ActRII pathway modulators [14, 15], while scientific papers examine the mechanisms and clinical implications of body composition changes during incretin therapy [13, 23]. Market analysts have flagged this as a potential differentiator for next-generation therapies [18, 24]. The velocity here is high and accelerating.

Combination therapy expansion for metabolic comorbidities rounds out the top-tier trends. Patents cover coformulations with SGLT2 inhibitors, thyroid hormone receptor beta agonists, and other metabolic targets [25, 26], mirroring the scientific literature's growing focus on GLP-1's effects across MASH, cardiovascular disease, and other obesity-related conditions [27, 28]. Market sizing for these expanded indications has been substantial [18, 29], yielding a very high velocity assessment.

Several additional trends warrant monitoring, though with somewhat lower current velocity. Alternative satiety pathways such as PYY analogs and NPY2 receptor agonists show medium-to-high activity, with patents from major players [30, 31] and scientific reviews exploring their potential as complements or alternatives to GLP-1 [32]. New delivery routes including sublingual, intranasal, and inhaled formulations have attracted patent interest [9, 33, 34] and some scientific attention [35], though market signals remain limited. Microbiome and nutraceutical GLP-1 modulation represents an emerging but still nascent category, with early patents [36] and scientific exploration [37] but minimal commercial traction to date.

Patent Filing Patterns by Innovation Category

Examining patent activity from 2020 through 2025 reveals clear directional trends across innovation categories, even without precise filing counts.

Poly-agonist peptides have shown a strong upward trajectory, with claims typically centered on peptide sequences, receptor binding ratios, and optimized dosing regimens. Representative filings include tri-agonist dosing regimens and triple agonist compositions from Eli Lilly [5, 6], signaling continued investment in this approach by leading developers.

Oral peptide delivery has demonstrated similarly strong upward momentum. Patents focus on permeation enhancers, absorption technologies, and solid dosage forms, exemplified by Novo Nordisk's oral GLP-1 use claims and various buccal and sublingual compositions from multiple assignees [7, 8, 9]. The density of activity reflects the commercial prize of an effective oral alternative to injection.

Long-acting depots and implants show a clear upward trend, with patent claims emphasizing months-long release profiles. Examples include self-assembling peptide systems for controlled release and implantable long-duration semaglutide devices [21, 10]. These technologies address the adherence challenge from a different angle than oral delivery, potentially offering set-and-forget convenience.

Combination regimens pairing GLP-1 agonists with adjunct pathways represent another area of strong upward filing activity. Patents cover coformulations with SGLT2 inhibitors, incretin combinations, and thyroid receptor agonist pairings [25, 26], reflecting the clinical reality that many patients will benefit from multi-mechanism approaches.

Body composition protection, focused on muscle and bone preservation during weight loss, shows an upward trend with growing patent interest. Filings claiming myostatin and ActRII pathway combinations with GLP-1 agonists [14] point toward future therapies designed to optimize the quality rather than just the quantity of weight loss.
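The directional labels used in this section ("strong upward," "upward") can be made reproducible by fitting a least-squares slope to annual filing counts for each category. The sketch below is a minimal illustration under stated assumptions, not the method behind this report: the category names and counts are hypothetical placeholders, and the `strong` and `flat` thresholds in the hypothetical `classify_trend` helper are arbitrary choices expressed as a fraction of the mean annual count.

```python
from statistics import mean


def ols_slope(years, counts):
    """Ordinary least-squares slope of filing counts against filing year."""
    x_bar = mean(years)
    y_bar = mean(counts)
    num = sum((x - x_bar) * (y - y_bar) for x, y in zip(years, counts))
    den = sum((x - x_bar) ** 2 for x in years)
    return num / den


def classify_trend(years, counts, strong=0.15, flat=0.05):
    """Label trend direction from the slope relative to the mean annual count.

    The `strong` and `flat` thresholds are illustrative assumptions only.
    """
    avg = mean(counts)
    if avg == 0:
        return "no activity"
    relative_slope = ols_slope(years, counts) / avg
    if relative_slope >= strong:
        return "strong upward"
    if relative_slope >= flat:
        return "upward"
    if relative_slope > -flat:
        return "flat"
    return "downward"


YEARS = [2020, 2021, 2022, 2023, 2024, 2025]

# Hypothetical counts for illustration only; these are not data from this report.
HYPOTHETICAL_COUNTS = {
    "category_a": [10, 14, 19, 25, 33, 41],
    "category_b": [5, 5, 6, 6, 7, 7],
    "category_c": [12, 11, 12, 13, 12, 12],
}

for category, counts in HYPOTHETICAL_COUNTS.items():
    print(f"{category}: {classify_trend(YEARS, counts)}")
```

Normalizing the slope by the mean count keeps small categories comparable to large ones. With real patent exports, counts are better grouped by earliest priority year than by publication year, so that the publication lag does not make the most recent years look artificially quiet.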

Scientific Publication Patterns by Theme

The scientific literature from 2020 through 2025 reveals parallel trends, with publication volume concentrated in areas that mirror patent activity.

Multi-agonist mechanisms and outcomes have attracted strong and growing attention. Reviews and primary research increasingly examine why dual and triple approaches outperform GLP-1 alone, exploring the synergistic effects of GIP co-agonism and glucagon receptor activation on both weight loss and metabolic parameters [1, 2, 3, 4].

Oral and alternative delivery research has similarly expanded. Publications address the pharmacokinetic challenges of oral peptide delivery, real-world effectiveness of approved oral formulations, and emerging technologies for non-injectable administration [11, 12, 35].

Combination therapy for MASH, cardiovascular disease, and other comorbidities represents another high-volume publication area. The scientific community has moved beyond viewing GLP-1 agonists solely as diabetes or obesity drugs, with substantial literature examining benefits across the metabolic disease spectrum [27, 28].

Body composition and sarcopenia concerns have generated moderate but rapidly growing publication volume. Papers examine the degree and significance of lean mass loss during GLP-1 therapy, mechanisms underlying this effect, and potential mitigation strategies [13, 23]. This emerging literature reflects clinical awareness that weight loss quality matters alongside quantity.

Unmet Needs and Whitespace Opportunities

Despite the remarkable clinical and commercial success of GLP-1 agonists, significant unmet needs persist that define the whitespace for next-generation innovation. These gaps represent both clinical challenges requiring solutions and strategic opportunities for companies seeking differentiation in an increasingly crowded market.

The lean mass preservation problem has emerged as perhaps the most pressing clinical concern. Research indicates that fat-free mass loss accounts for 25-40% of total weight lost during GLP-1 therapy, a rate dramatically exceeding age-related declines of approximately 8% per decade [48]. This substantial muscle loss carries meaningful health implications. A 2025 University of Virginia study concluded that while GLP-1 drugs significantly reduce body weight and adiposity, they do so "with no clear evidence of cardiorespiratory fitness enhancement"—a critical finding given that cardiorespiratory fitness is among the most potent predictors of all-cause and cardiovascular mortality [48]. The researchers expressed concern that this pattern could ultimately compromise patients' metabolic health, healthspan, and longevity.

Clinical observations reinforce these concerns. Physicians report patients describing sensations of muscle "slipping away" during treatment, while some patients experience what has been termed "Ozempic face"—premature facial aging resulting from rapid fat and muscle loss [48]. The World Health Organization's December 2025 guidelines emphasized the importance of resistance training to protect muscle mass during GLP-1 therapy, acknowledging this as a limitation of current treatment approaches [49]. This gap has catalyzed significant R&D investment in muscle-sparing adjuncts, including myostatin inhibitors and ActRII pathway modulators that could be combined with GLP-1 agonists to preserve lean mass while maintaining fat loss efficacy.

Weight regain upon discontinuation represents another substantial unmet need. Clinical evidence consistently demonstrates that patients regain approximately one-third of lost weight within the first year of stopping GLP-1 therapy, with longer-term studies suggesting even more substantial rebound [50]. This pattern reflects the chronic, relapsing nature of obesity and has prompted the WHO to recommend continuous, long-term treatment lasting six months or more—effectively positioning these medications as lifetime therapies for many patients [51]. The clinical and economic implications of indefinite treatment are considerable, driving innovation in approaches that might allow successful maintenance without continuous medication or that could extend dosing intervals substantially.

Access and affordability constraints limit the population that can benefit from current therapies. The WHO has noted that even with rapid manufacturing expansion, GLP-1 therapies are projected to reach fewer than 10% of those who could benefit by 2030 [51]. In the United States, where Wegovy and Zepbound carry list prices exceeding $1,000 per month, approximately one in eight adults report currently taking a GLP-1 drug, well below the more than 40% of American adults classified as obese [52]. The WHO guidelines call for urgent action on manufacturing, affordability, and system readiness, recommending strategies such as pooled procurement, tiered pricing, and voluntary licensing to expand global access [51].

Tolerability remains a limiting factor for patient adherence. Gastrointestinal adverse events including nausea, vomiting, and diarrhea are common with current GLP-1 agonists, leading some patients to discontinue treatment or fail to reach maximally effective doses. This has driven interest in alternative mechanisms and combination approaches that might deliver comparable efficacy with improved side effect profiles. Amgen's MariTide, which combines GLP-1 agonism with GIP antagonism, was specifically designed based on genetic evidence suggesting this combination could reduce nausea while maintaining weight loss efficacy [44]. Similarly, amylin analogs like Eli Lilly's eloralintide work through different hormonal pathways and may offer advantages for patients who cannot tolerate GLP-1-based treatments [53].

Non-responders and partial responders represent an underserved population requiring novel approaches. While GLP-1 agonists produce dramatic results for many patients, a meaningful subset achieves suboptimal weight loss or experiences diminishing efficacy over time. This variability likely reflects heterogeneity in the biological drivers of obesity across individuals, suggesting opportunity for precision medicine approaches that match patients to optimal therapeutic mechanisms. Emerging research on melanocortin-4 receptor (MC4R) agonists combined with GLP-1/GIP agonists has shown promise for enhanced weight loss and prevention of weight regain, potentially addressing the needs of patients who plateau on current monotherapy [53].

Pediatric and adolescent obesity remains largely unaddressed by current approvals and clinical evidence. While adult obesity rates have driven commercial focus, childhood obesity has reached epidemic proportions globally, with limited therapeutic options available for younger patients. The long-term implications of treating developing individuals with potent metabolic modulators remain uncertain, creating both clinical need and regulatory complexity for companies considering pediatric development programs.

These unmet needs collectively define the innovation agenda for the next generation of obesity therapeutics. Companies that successfully address muscle preservation, reduce discontinuation-related regain, improve access and tolerability, or develop precision approaches for treatment-resistant patients will capture meaningful differentiation in what promises to become an increasingly commoditized market for first-generation GLP-1 agonists.

Strategic Implications

The convergence of patent activity and scientific publication patterns points toward several strategic conclusions for organizations operating in this space.

First, the poly-agonist thesis has achieved sufficient validation that the competitive question is no longer whether multi-receptor approaches will succeed, but rather which specific receptor combinations and ratios will prove optimal for different patient populations. Organizations lacking poly-agonist programs face an increasingly difficult competitive position.

Second, delivery innovation has become table stakes. The commercial success of any weight loss therapeutic will depend heavily on patient acceptability and adherence, making oral, long-acting depot, and other non-injectable options critical pipeline priorities rather than nice-to-have features.

Third, the body composition narrative represents both a clinical imperative and a marketing opportunity. As lean mass preservation gains prominence in scientific discussion, therapies that can demonstrate muscle-sparing properties—whether through receptor selectivity, combination approaches, or adjunct treatments—will claim meaningful differentiation.

Fourth, manufacturing scale and supply chain reliability have emerged as competitive advantages distinct from molecular innovation. The ability to meet global demand consistently may prove as valuable as clinical superiority in determining market share over the coming years.

Finally, the expanded indication landscape suggests that the GLP-1 platform will increasingly compete not just within obesity, but across MASH, cardiovascular protection, and potentially other metabolic conditions. The IP and development strategies of leading players reflect this broader therapeutic ambition.

---

How Cypris Can Support GLP-1 and Obesity Drug Innovation Intelligence

For R&D and innovation teams tracking the rapidly evolving GLP-1 and obesity therapeutics landscape, maintaining comprehensive awareness across patents, scientific literature, clinical trials, and competitive intelligence presents significant challenges. The velocity of innovation—with over 100 active development programs, weekly patent filings, and continuous clinical readouts—demands intelligence infrastructure that can synthesize signals across disparate data sources in real time.

Cypris provides enterprise R&D teams with unified access to the full spectrum of innovation intelligence required for strategic decision-making in dynamic therapeutic areas like metabolic disease. The platform integrates more than 500 million data points spanning patents, scientific publications, clinical trial records, and market intelligence sources through a proprietary R&D ontology purpose-built for technology scouting and competitive analysis. Fortune 100 pharmaceutical and life sciences companies including Johnson & Johnson use Cypris to identify emerging IP threats, track competitor pipeline evolution, and discover partnership and acquisition targets before they surface in mainstream coverage.

For organizations navigating the GLP-1 landscape specifically, Cypris enables continuous monitoring of poly-agonist patent filings, delivery technology innovations, and combination therapy claims across global jurisdictions. The platform's multimodal search capabilities allow teams to query across molecular structures, mechanism of action descriptions, and clinical outcome data simultaneously—surfacing connections between scientific breakthroughs and commercialization strategies that siloed databases miss. With SOC 2 Type II certification and US-based operations, Cypris meets the security and compliance requirements of enterprise R&D environments handling sensitive competitive intelligence.

To learn how Cypris can accelerate your obesity therapeutics intelligence workflows, visit cypris.ai or request a demonstration tailored to your specific pipeline and competitive monitoring needs.

This article was powered by Cypris Q, an AI agent that helps R&D teams instantly synthesize insights from patents, scientific literature, and market intelligence from around the globe. Discover how leading R&D teams use Cypris Q to monitor technology landscapes and identify opportunities faster.

References

[1] Yan T, et al. "Next-generation incretin-based therapies: exploring multi-receptor agonism in metabolic disease." Journal of Endocrinology.

[2] le Roux CW, et al. "GLP-1/GIP/glucagon receptor tri-agonism: the emerging paradigm in obesity pharmacotherapy." Endocrinology and Metabolism.

[3] Klein S, et al. "Poly-agonist approaches to metabolic disease: mechanisms and clinical potential." Obesity.

[4] Douros JD, et al. "Optimized tri-agonist design achieves superior metabolic outcomes in preclinical models." Molecular Metabolism.

[5] Eli Lilly. Tri-agonist dosing regimens. AU-2025220848-A1.

[6] Eli Lilly. Triple agonist compositions. CA-3084004-C.

[7] Novo Nordisk. Oral GLP-1 uses. US-12239739-B2.

[8] IX Biopharma. Buccal/sublingual compositions. WO-2025166413-A1.

[9] Immunwork. Sublingual and alternative delivery compositions. WO-2025161997-A1.

[10] Nano Precision Medical. Implantable long-duration semaglutide devices. EP-4646187-A1.

[11] Aroda VR, et al. "Oral semaglutide: pharmacokinetics, clinical efficacy, and practical considerations." Reviews in Endocrine and Metabolic Disorders.

[12] Søndergaard CS, et al. "SNAC-enabled oral peptide delivery: absorption mechanisms and clinical implications." Clinical Pharmacology in Drug Development.

[13] Baur DA, et al. "Lean mass changes during GLP-1 receptor agonist therapy: mechanisms and mitigation strategies." Molecular Metabolism.

[14] Scholar Rock. Myostatin/ActRII pathway combinations with GLP-1. WO-2025245160-A1.

[15] Versanis Bio. Body composition optimization in incretin therapy. US-20240325530-A1.

[16] Bioage Labs. Combination therapies for metabolic disease. EP-4646220-A1.

[17] Actimed Therapeutics. Obesity treatment adjuncts. WO-2025222169-A1.

[18] Nature pipeline review. "GLP-1 agonists and next-generation obesity therapeutics."

[19] Morningstar analysis. "Competitive landscape in incretin-based therapies."

[20] GlobeNewswire. "Oral GLP-1 market development and commercial outlook."

[21] 3-D Matrix. SAP-based controlled release systems. WO-2025184112-A1.

[22] Vilsbøll T, et al. "Long-acting GLP-1 formulations: clinical development and therapeutic potential." Drugs.

[23] Ryan DH. "Body composition outcomes in obesity pharmacotherapy: clinical significance and measurement challenges." Reviews in Endocrine and Metabolic Disorders.

[24] William Blair analysis. "Differentiation strategies in obesity therapeutics."

[25] MedImmune. Cyclodextrin coformulations with SGLT2 and incretin peptides. EP-3972630-A1.

[26] Terns Pharmaceuticals. GLP-1 plus THRβ combinations. US-20250195512-A1.

[27] Zafer MM, et al. "GLP-1 receptor agonists in MASH: mechanisms and clinical evidence." Alimentary Pharmacology & Therapeutics.

[28] Conlon DM, et al. "Cardiovascular effects of incretin-based therapies: beyond glucose control." Peptides.

[29] IQVIA. "Market sizing for GLP-1 expanded indications."

[30] Eli Lilly. PYY-based compositions. AU-2022231763-B2.

[31] Boehringer Ingelheim. NPY2 receptor agonists. TW-202423954-A.

[32] Lim GE, et al. "Alternative satiety hormones: PYY, oxyntomodulin, and beyond." Endocrine Reviews.

[33] Columbia University. Intranasal peptide delivery. WO-2025080717-A1.

[34] Iconovo. Inhaled GLP-1 formulations. WO-2025237925-A1.

[35] Park K, et al. "Non-injectable peptide delivery: emerging routes and technologies." Pharmaceuticals.

[36] Shanghai Huapu Life Health. Microbiome-based GLP-1 modulation. CN-120098832-A.

[37] Ding S, et al. "Gut microbiome interactions with incretin hormones: implications for metabolic disease." Diabetes, Metabolic Syndrome and Obesity.

[38] Initiative for Medicines, Access and Knowledge (I-MAK). "The Heavy Price of GLP-1 Drugs: How Financialization Drives Pharmaceutical Patent Abuse and Health Inequities." 2025.

[39] PharmaVoice. "3 ways the GLP-1 market has changed shape this year." August 2025.

[40] PharmaVoice. "Can anything threaten Novo and Lilly's obesity market dominance?" April 2025.

[41] DelveInsight. "GLP-1 Agonists Market Report 2025-2034."

[42] GlobalData analysis. "Roche Carmot acquisition positions company in GLP-1 space."

[43] Morningstar. "2 Companies Poised to Capitalize on the Rise of GLP-1 Weight Loss Drugs." December 2025.

[44] The Pharmaceutical Journal. "Beyond GLP-1: the next wave of weight-loss medication innovation." October 2025.

[45] Fierce Biotech. "A look at the R&D landscape in obesity, led by GLP-1s." August 2024.

[46] Morningstar. "Obesity Drugs: The Next Wave of GLP-1 Competition." September 2024.

[47] CNBC. "Eli Lilly, Novo Nordisk prepare to face off in the next obesity drug battleground." September 2025.

[48] University of Virginia Health. "GLP-1 Drugs Fail to Provide Key Weight-Loss Benefit." July 2025.

[49] ABC News. "World Health Organization issues first-ever guidelines for use of GLP-1 weight loss medications." December 2025.

[50] Turkish Journal of Medical Sciences. "Paradigm shift in obesity treatment: an extensive review of current pipeline agents." 2025.

[51] World Health Organization. "WHO issues global guideline on the use of GLP-1 medicines in treating obesity." December 2025.

[52] NBC News. "WHO recommends GLP-1 drugs for obesity." December 2025.

[53] IAPAM. "GLP-1 Clinical Practice Updates: November 2025 Key Developments." December 2025.

Competitive Intelligence Tools for R&D: The Complete Guide to Technology and Innovation Monitoring Platforms

Competitive intelligence tools for R&D are software platforms that help research and development teams monitor technology landscapes, track competitor innovation activity, and identify emerging opportunities across patents, scientific literature, and market sources. Unlike traditional competitive intelligence platforms designed for sales enablement and marketing teams, R&D-focused competitive intelligence tools prioritize patent analysis, scientific literature discovery, technology scouting, and innovation landscape mapping to support strategic research decisions.

The competitive intelligence needs of R&D organizations differ fundamentally from those of go-to-market teams. While sales and marketing professionals need battle cards, win-loss analysis, and competitor messaging tracking, R&D teams require deep visibility into patent portfolios, scientific publications, emerging technology trends, and innovation white spaces. This distinction is critical when evaluating competitive intelligence platforms, as tools optimized for sales enablement often lack the technical depth and data sources that research teams need to make informed decisions about technology direction and competitive positioning.

Cypris: The Leading Competitive Intelligence Platform Purpose-Built for R&D Teams

Cypris is the most comprehensive competitive intelligence platform designed specifically for corporate R&D teams, providing unified access to more than 500 million data points spanning patents, scientific papers, market research, and other innovation-relevant sources. Enterprise customers including Johnson & Johnson, Honda, Yamaha, and Philip Morris International rely on Cypris to monitor competitive technology landscapes, identify emerging opportunities, and accelerate innovation decision-making.

What distinguishes Cypris from general-purpose competitive intelligence tools is its foundation in technical research rather than sales enablement. The platform provides access to over 270 million scientific papers from more than 20,000 journals alongside comprehensive global patent coverage, enabling R&D teams to conduct technology scouting and competitive analysis across both intellectual property and academic literature simultaneously. This integrated approach eliminates the need for separate patent search tools and literature databases, streamlining workflows for engineers and scientists who need to understand the full innovation landscape rather than just competitor news and marketing activity.

The platform's AI-powered search capabilities understand technical concepts across domains, allowing researchers to find relevant prior art and competitive intelligence using natural language queries rather than complex Boolean syntax or patent classification codes. Cypris employs a proprietary R&D ontology that maps relationships between technologies, materials, and applications, enabling discovery of relevant innovations that keyword-based searches would miss. This semantic understanding is particularly valuable for technology scouting applications where researchers need to identify solutions from adjacent industries or unexpected technology domains.

Cypris maintains enterprise-grade security and operates entirely from United States facilities, addressing the data governance requirements of Fortune 100 enterprises and government agencies. The platform offers official API partnerships with OpenAI, Anthropic, and Google, enabling integration with enterprise workflows and custom AI applications. For R&D organizations that need to incorporate competitive intelligence into existing systems, these API capabilities provide flexibility that news-focused competitive intelligence platforms typically cannot match.

The platform's technology monitoring capabilities extend beyond reactive competitor tracking to proactive opportunity identification. R&D teams use Cypris to map patent landscapes in target technology areas, identify potential acquisition targets based on innovation activity, monitor startup ecosystems for partnership opportunities, and assess freedom to operate before committing resources to new development programs. These use cases reflect the strategic nature of R&D competitive intelligence, where the goal is informing technology strategy rather than enabling sales conversations.

Understanding the Distinction Between R&D and Sales-Focused Competitive Intelligence

The competitive intelligence software market has historically been dominated by platforms built for go-to-market teams. These tools excel at tracking competitor pricing changes, monitoring press releases and news coverage, analyzing marketing campaigns, and generating battle cards that help sales representatives handle competitive objections. Platforms like Klue, Crayon, and Kompyte have built successful businesses serving these needs, with deep integrations into CRM systems and sales enablement workflows.

However, R&D teams have fundamentally different intelligence requirements. Engineers and scientists need to understand what technologies competitors are developing and protecting through patents, what research directions they are pursuing based on scientific publications, what materials and methods they are investigating, and where white spaces exist for differentiated innovation. These questions cannot be answered by monitoring news feeds and social media, no matter how sophisticated the AI-powered curation.

The data sources required for R&D competitive intelligence differ substantially from those used by sales-focused platforms. While marketing intelligence relies primarily on news articles, press releases, social media, job postings, and website changes, R&D intelligence requires access to patent databases, scientific literature repositories, clinical trial registries, regulatory filings, and technical standards documentation. The analysis methods also differ, with R&D teams needing patent landscape visualization, citation analysis, technology trend mapping, and prior art assessment rather than sentiment analysis and share of voice metrics.

This distinction explains why many R&D organizations find that general competitive intelligence platforms, despite their sophisticated AI capabilities, fail to address their core needs. A platform that excels at generating sales battle cards and tracking competitor marketing campaigns may provide little value to a research team trying to understand the patent landscape around a new battery chemistry or identify academic groups working on relevant machine learning techniques.

AlphaSense: Financial Intelligence with Research Applications

AlphaSense is a market intelligence platform that provides access to financial documents, expert transcripts, and business research through an AI-powered search interface. The platform has built a strong reputation among financial analysts and investment professionals, and its 2024 acquisition of Tegus significantly expanded its expert interview library and coverage of private companies.

For R&D teams in industries where financial market intelligence overlaps with technology strategy, AlphaSense offers valuable capabilities. The platform's expert transcript database includes interviews with industry professionals who can provide insights into technology trends and competitive dynamics. Its coverage of earnings calls, SEC filings, and broker research can reveal competitor R&D investment levels and strategic priorities.

However, AlphaSense was designed primarily for financial research rather than technical R&D applications. The platform does not provide direct access to patent databases or scientific literature, limiting its utility for technology scouting and prior art research. R&D teams that need deep technical intelligence often find that AlphaSense serves as a complement to rather than replacement for dedicated R&D intelligence platforms.

Contify: Market Intelligence for Enterprise Teams

Contify is a market and competitive intelligence platform that aggregates news, press releases, social media, and regulatory filings to help enterprise teams monitor competitive landscapes. The platform has built strong capabilities in AI-powered news curation and offers extensive customization options for different stakeholder groups within organizations.

The platform's strength lies in its ability to filter and distribute news-based intelligence across different functions, with customizable dashboards and automated alerts that keep teams informed about competitor activities. Contify's manufacturing and pharmaceutical industry solutions demonstrate its ability to serve R&D-adjacent use cases, though its primary value proposition centers on news and media monitoring rather than technical research.

For R&D teams, Contify's limitation is its focus on public news and announcements rather than the patent filings, scientific publications, and technical documentation that reveal competitor research directions before they become widely known. Patent applications typically publish about 18 months after their earliest priority date, often well before any related product announcement, and scientific papers often precede commercial activity by years. R&D organizations that rely solely on news-based competitive intelligence may find themselves reacting to competitor moves rather than anticipating them.
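Publication timing is easier to reason about with the underlying rule in hand: under PCT Article 21 and the corresponding US (35 U.S.C. 122(b)) and European (EPC Article 93) provisions, an application is generally published promptly after 18 months from its earliest priority date. The sketch below estimates that date; `expected_publication_date` and `add_months` are hypothetical helper names, and the calculation deliberately ignores early-publication requests, US non-publication requests, and patent office processing lags.

```python
import calendar
from datetime import date


def add_months(d: date, months: int) -> date:
    """Shift a date by whole months, clamping to the last day of the target month."""
    month_index = d.month - 1 + months
    year = d.year + month_index // 12
    month = month_index % 12 + 1
    last_day = calendar.monthrange(year, month)[1]
    return date(year, month, min(d.day, last_day))


def expected_publication_date(priority_date: date, months: int = 18) -> date:
    """Rough earliest-expected publication date for a patent application.

    Assumes the standard 18-month rule counted from the earliest priority date;
    actual dates shift with early-publication requests, non-publication
    requests, and office processing schedules.
    """
    return add_months(priority_date, months)


# Example: an application with a hypothetical priority date of 31 August 2024.
print(expected_publication_date(date(2024, 8, 31)))
```

Clamping to the end of the month handles priority dates such as 31 August, whose 18-month anniversary falls in a shorter month.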

Orbit Intelligence: Patent Search for IP Departments

Orbit Intelligence from Questel is a patent analytics and search platform that serves corporate IP departments and patent professionals. The platform provides access to global patent data with guided analysis workflows for common use cases including technology scouting, portfolio pruning, and licensing opportunity identification.

The platform offers strong patent search capabilities with features designed for IP practitioners who need to conduct prior art searches, monitor competitor filing activity, and analyze patent landscapes. Orbit Intelligence integrates with Questel's broader IP management suite, making it attractive for organizations already using Questel solutions for patent prosecution and portfolio management.

Like other patent-focused platforms, Orbit Intelligence does not integrate scientific literature or market intelligence, requiring R&D teams to use multiple tools for comprehensive technology landscape analysis. The platform's design for IP professionals rather than R&D engineers means workflows and terminology may not align with how research teams approach competitive intelligence.

LexisNexis PatentSight: Patent Portfolio Analytics

PatentSight from LexisNexis Intellectual Property Solutions provides patent analytics and visualization capabilities focused on competitive intelligence and portfolio benchmarking. The platform is known for its proprietary metrics including the Patent Asset Index, which measures portfolio competitive impact and technology relevance.

PatentSight excels at patent portfolio benchmarking and trend analysis, with visualization capabilities that help communicate IP insights to executive audiences. The platform's AI-powered classification enables monitoring of technology landscapes and identification of emerging competitors based on patent filing activity.

The platform serves IP strategy and corporate development use cases effectively, though its focus on patent data alone limits utility for R&D teams that need integrated access to scientific literature and market intelligence alongside intellectual property analysis.

Crayon: Sales Enablement Intelligence

Crayon is a competitive intelligence platform focused on helping sales and marketing teams track competitor activity and create effective battle cards. The platform monitors competitor websites, pricing changes, marketing campaigns, and hiring patterns to provide actionable intelligence for go-to-market teams.

Crayon's strength is its deep integration with sales workflows, including connections to CRM systems, sales call intelligence platforms, and communication tools like Slack and Microsoft Teams. The platform's battle card capabilities and competitive insight curation help sales representatives handle competitive situations effectively.

For R&D applications, Crayon's focus on marketing activity and sales enablement means it lacks the technical depth that research teams require. The platform does not provide access to patent databases or scientific literature, and its analysis is oriented toward messaging and positioning rather than technology and innovation assessment.

Klue: Win-Loss Analysis and Competitive Enablement

Klue combines competitive intelligence gathering with win-loss analysis, helping organizations understand both what competitors are doing and how those competitive dynamics affect deal outcomes. The platform has built a strong market presence among product marketing teams and sales organizations.

The platform's integration of competitive intelligence with buyer feedback provides valuable insights into how competitive positioning affects revenue. Klue's automated competitor tracking and battle card generation capabilities streamline workflows for teams responsible for maintaining competitive content.

Like other sales-focused platforms, Klue's value proposition centers on go-to-market applications rather than R&D use cases. The platform's data sources and analysis capabilities are optimized for understanding competitor marketing and sales strategies rather than technology direction and innovation activity.

Selecting the Right Competitive Intelligence Platform for R&D

R&D teams evaluating competitive intelligence platforms should begin by clearly defining their primary use cases and data requirements. Teams focused on technology scouting and prior art research need platforms with comprehensive patent and literature access, while those primarily interested in competitor business strategy may find news-based platforms sufficient.

Data coverage is a critical consideration, particularly for global R&D organizations that need intelligence across multiple jurisdictions and languages. Patent coverage should span the major filing offices, including the United States Patent and Trademark Office, the European Patent Office, and the patent offices of China, Japan, and Korea, with timely updates as new applications publish. Scientific literature access should cover major publishers and preprint servers to capture research developments as early as possible.

Integration capabilities matter for R&D teams that need to incorporate competitive intelligence into existing workflows. API access enables custom applications and integration with enterprise systems, while connections to collaboration tools facilitate intelligence sharing across distributed research teams.

Security and compliance requirements vary by industry and organization, but R&D teams often handle sensitive strategic information that requires robust data protection. Enterprise-grade security controls and data residency in preferred jurisdictions may be necessary for certain organizations, particularly those in regulated industries or working on sensitive government programs.

The Future of R&D Competitive Intelligence

The convergence of artificial intelligence capabilities with comprehensive innovation data is transforming how R&D teams approach competitive intelligence. Modern platforms can now process patent claims, scientific abstracts, and technical documentation to identify relevant innovations that keyword searches would miss, enabling more effective technology scouting and white space analysis.

Integration of patent intelligence with scientific literature and market data provides R&D teams with comprehensive views of innovation landscapes, eliminating the fragmentation that has historically required multiple specialized tools. This convergence enables workflows that start with a technology question and return relevant patents, papers, companies, and market context in a single research session.

As AI capabilities continue to advance, R&D competitive intelligence platforms will increasingly support predictive analysis, identifying emerging technology trends and potential disruptors before they become apparent through traditional monitoring. Organizations that establish robust R&D intelligence practices today will be better positioned to adopt these tools as they mature.

Frequently Asked Questions

What is competitive intelligence for R&D?

Competitive intelligence for R&D is the systematic collection and analysis of information about competitor technology activities, emerging innovations, and market developments to inform research and development strategy. Unlike sales-focused competitive intelligence that tracks competitor marketing and pricing, R&D competitive intelligence emphasizes patent analysis, scientific literature monitoring, technology scouting, and innovation landscape mapping.

How is R&D competitive intelligence different from sales competitive intelligence?

R&D competitive intelligence focuses on technology direction, patent portfolios, scientific publications, and innovation trends, while sales competitive intelligence emphasizes competitor messaging, pricing, win-loss patterns, and market positioning. R&D teams need access to patent databases and scientific literature, while sales teams primarily use news, social media, and marketing content. The analysis methods also differ, with R&D intelligence requiring patent landscape analysis and technology trend mapping rather than sentiment analysis and share of voice metrics.

What data sources are most important for R&D competitive intelligence?

The most important data sources for R&D competitive intelligence include global patent databases, scientific literature repositories, clinical trial registries, regulatory filings, and technical standards documentation. Patent data reveals competitor technology investments and protection strategies, while scientific literature shows research directions and emerging capabilities. Market intelligence provides context on commercialization activity and competitive positioning.

How do R&D teams use competitive intelligence?

R&D teams use competitive intelligence for technology scouting to identify potential solutions and partnerships, prior art research to assess patentability and freedom to operate, patent landscape analysis to understand competitive positioning, white space identification to find differentiated innovation opportunities, and acquisition target assessment to evaluate potential technology additions. These applications inform strategic decisions about research direction, resource allocation, and technology investments.

What features should R&D competitive intelligence tools have?

R&D competitive intelligence tools should provide comprehensive patent and scientific literature coverage, AI-powered semantic search that understands technical concepts, visualization capabilities for landscape analysis, monitoring and alerting for relevant new filings and publications, integration with enterprise workflows through APIs, and robust security appropriate for handling sensitive strategic information. The platform should be designed for engineers and scientists rather than IP attorneys or sales professionals.

Best Prior Art Search Software for 2026: AI Tools and Enterprise Platforms Compared

Prior art search software is any tool that enables researchers to identify existing patents, scientific publications, and public disclosures relevant to a new invention or technology area. The best prior art search software in 2026 combines comprehensive data coverage with AI-powered analysis, moving beyond simple keyword matching to deliver genuine technical intelligence for R&D and innovation teams.

The prior art search software market has evolved significantly over the past decade. Legacy platforms built for patent professionals continue serving traditional search workflows, while free tools provide accessible entry points for preliminary research. A new generation of enterprise R&D intelligence platforms has emerged to address the broader technology research needs of corporate innovation teams, combining patents with scientific literature and market intelligence in unified AI-powered environments.

This guide examines the leading prior art search software options across enterprise, legacy, and free categories, with detailed analysis of capabilities, ideal use cases, and limitations to help organizations make informed decisions.

Cypris

Cypris is an enterprise R&D intelligence platform that represents the most advanced approach to prior art search currently available. The platform provides unified access to more than 500 million documents spanning global patent databases, scientific literature from over 20,000 journals, and market intelligence sources that traditional patent-focused tools exclude.

What distinguishes Cypris from other prior art search software is its proprietary R&D ontology. While most platforms rely on generic semantic search that captures surface-level text similarity, Cypris employs a structured knowledge architecture that understands technical concepts, their properties, and their relationships within specific domains. This ontology-based approach means the platform recognizes that two chemical compounds belong to the same functional class even when described with entirely different terminology, or that two mechanical configurations achieve similar outcomes through different implementations. Generic embedding models miss these technically significant connections because they lack domain-specific knowledge structures.

The ontology advantage compounds when combined with a retrieval-augmented generation architecture. Rather than simply returning ranked document lists, Cypris synthesizes information from retrieved sources into contextual analysis that directly addresses research questions. The ontology ensures that retrieved documents are technically relevant based on structured domain understanding, giving the large language model appropriate source material for grounded responses. This architecture mitigates the hallucination risk inherent in AI systems by grounding generated analysis in retrieved documents rather than in the model's parametric knowledge alone.

For corporate R&D teams, the practical impact is significant. Technology scouting projects that previously required weeks of manual search and synthesis can be completed in hours. Researchers describe technical concepts in natural language and receive comprehensive analysis of the prior art landscape including patents, academic publications, and commercial applications. The platform explains not just what prior art exists but how it relates to specific technical features, where potential novelty exists, and which competitors are active in adjacent spaces.

Cypris is used by large enterprises including Johnson & Johnson, Honda, Yamaha, and Philip Morris International for technology intelligence, competitive analysis, and prior art research. The platform offers both self-service access through its Innovation Dashboard and bespoke analyst services for complex research projects requiring human expertise alongside AI capabilities. Official API partnerships with OpenAI, Anthropic, and Google enable organizations to integrate prior art intelligence into their own AI-powered applications and internal workflows, embedding technology research capabilities throughout R&D processes rather than isolating them in a standalone tool.

For enterprise R&D teams seeking comprehensive technology intelligence beyond traditional patent search, Cypris offers the most complete solution in the market. The combination of ontology-based technical understanding, unified data coverage across patents and scientific literature, and AI-powered synthesis positions it as the category leader for organizations modernizing their approach to prior art research.

Orbit Intelligence

Questel's Orbit Intelligence platform has served patent professionals for many years, providing access to more than 100 million patents and 150 million non-patent literature documents. The platform emphasizes data quality and search precision, offering sophisticated Boolean and proximity operators that experienced patent searchers value for constructing complex queries.

Orbit Intelligence covers patent offices representing more than 99.7% of global patent applications, with strong temporal coverage of major jurisdictions including the United States, Europe, China, Japan, and Korea. Pre-translated content ensures that Asian patent documents are searchable in English, addressing a common challenge in global prior art research.