Insights on Innovation, R&D, and IP

Perspectives on patents, scientific research, emerging technologies, and the strategies shaping modern R&D

Executive Summary

In 2024, US patent infringement jury verdicts totaled $4.19 billion across 72 cases. Twelve individual verdicts exceeded $100million. The largest single award—$857 million in General Access Solutions v.Cellco Partnership (Verizon)—exceeded the annual R&D budget of many mid-market technology companies. In the first half of 2025 alone, total damages reached an additional $1.91 billion.

The consequences of incomplete patent intelligence are not abstract. In what has become one of the most instructive IP disputes in recent history, Masimo’s pulse oximetry patents triggered a US import ban on certain Apple Watch models, forcing Apple to disable its blood oxygen feature across an entire product line, halt domestic sales of affected models, invest in a hardware redesign, and ultimately face a $634 million jury verdict in November 2025. Apple—a company with one of the most sophisticated intellectual property organizations on earth—spent years in litigation over technology it might have designed around during development.

For organizations with fewer resources than Apple, the risk calculus is starker. A mid-size materials company, a university spinout, or a defense contractor developing next-generation battery technology cannot absorb a nine-figure verdict or a multi-year injunction. For these organizations, the patent landscape analysis conducted during the development phase is the primary risk mitigation mechanism. The quality of that analysis is not a matter of convenience. It is a matter of survival.

And yet, a growing number of R&D and IP teams are conducting that analysis using general-purpose AI tools—ChatGPT, Claude, Microsoft Co-Pilot—that were never designed for patent intelligence and are structurally incapable of delivering it.

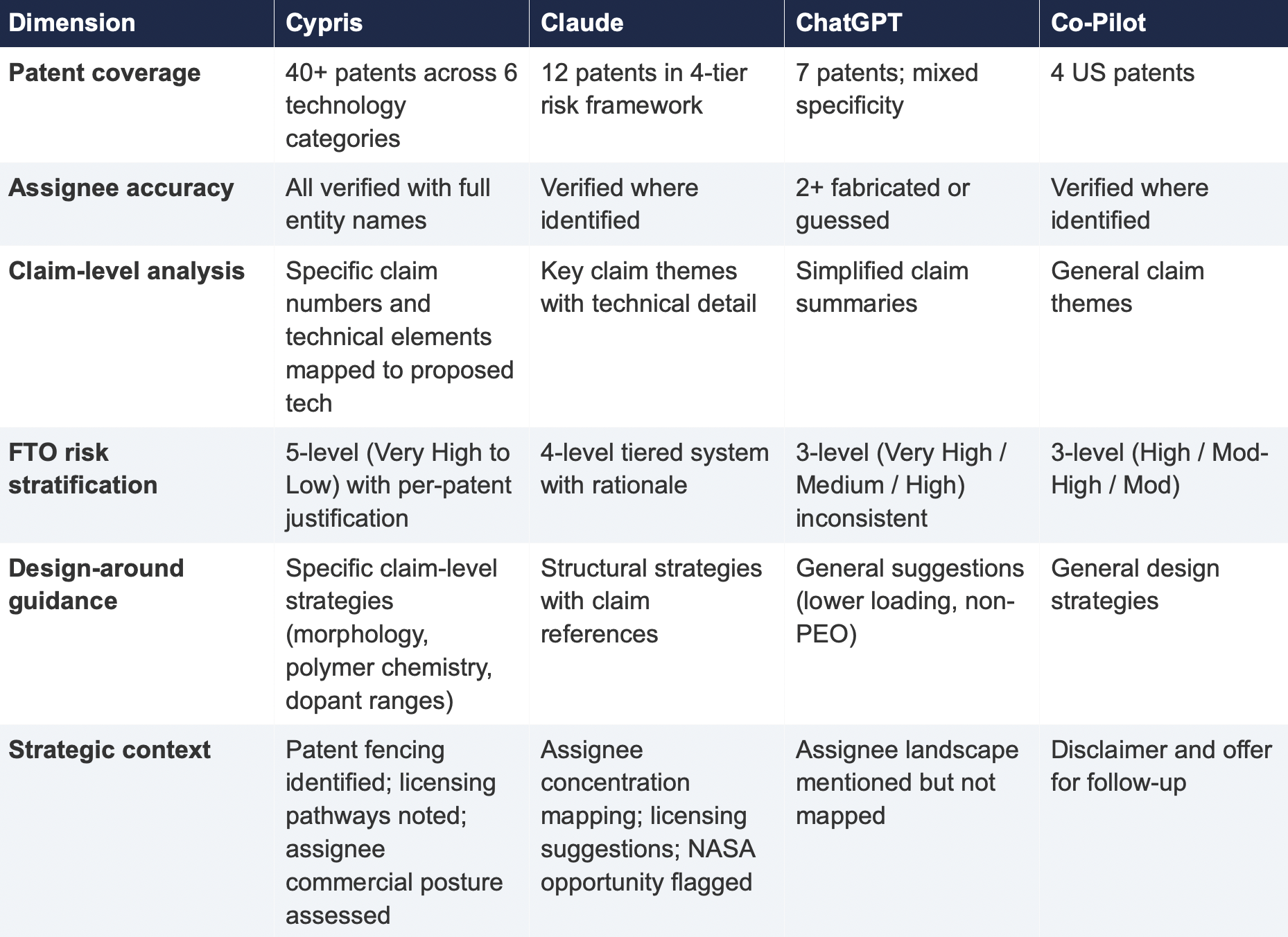

This report presents the findings of a controlled comparison study in which identical patent landscape queries were submitted to four AI-powered tools: Cypris (a purpose-built R&D intelligence platform),ChatGPT (OpenAI), Claude (Anthropic), and Microsoft Co-Pilot. Two technology domains were tested: solid-state lithium-sulfur battery electrolytes using garnet-type LLZO ceramic materials (freedom-to-operate analysis), and bio-based polyamide synthesis from castor oil derivatives (competitive intelligence).

The results reveal a significant and structurally persistent gap. In Test 1, Cypris identified over 40 active US patents and published applications with granular FTO risk assessments. Claude identified 12. ChatGPT identified 7, several with fabricated attribution. Co-Pilot identified 4. Among the patents surfaced exclusively by Cypris were filings rated as “Very High” FTO risk that directly claim the technology architecture described in the query. In Test 2, Cypris cited over 100 individual patent filings with full attribution to substantiate its competitive landscape rankings. No general-purpose model cited a single patent number.

The most active sectors for patent enforcement—semiconductors, AI, biopharma, and advanced materials—are the same sectors where R&D teams are most likely to adopt AI tools for intelligence workflows. The findings of this report have direct implications for any organization using general-purpose AI to inform patent strategy, competitive intelligence, or R&D investment decisions.

1. Methodology

A single patent landscape query was submitted verbatim to each tool on March 27, 2026. No follow-up prompts, clarifications, or iterative refinements were provided. Each tool received one opportunity to respond, mirroring the workflow of a practitioner running an initial landscape scan.

1.1 Query

Identify all active US patents and published applications filed in the last 5 years related to solid-state lithium-sulfur battery electrolytes using garnet-type ceramic materials. For each, provide the assignee, filing date, key claims, and current legal status. Highlight any patents that could pose freedom-to-operate risks for a company developing a Li₇La₃Zr₂O₁₂(LLZO)-based composite electrolyte with a polymer interlayer.

1.2 Tools Evaluated

1.3 Evaluation Criteria

Each response was assessed across six dimensions: (1) number of relevant patents identified, (2) accuracy of assignee attribution,(3) completeness of filing metadata (dates, legal status), (4) depth of claim analysis relative to the proposed technology, (5) quality of FTO risk stratification, and (6) presence of actionable design-around or strategic guidance.

2. Findings

2.1 Coverage Gap

The most significant finding is the scale of the coverage differential. Cypris identified over 40 active US patents and published applications spanning LLZO-polymer composite electrolytes, garnet interface modification, polymer interlayer architectures, lithium-sulfur specific filings, and adjacent ceramic composite patents. The results were organized by technology category with per-patent FTO risk ratings.

Claude identified 12 patents organized in a four-tier risk framework. Its analysis was structurally sound and correctly flagged the two highest-risk filings (Solid Energies US 11,967,678 and the LLZO nanofiber multilayer US 11,923,501). It also identified the University ofMaryland/ Wachsman portfolio as a concentration risk and noted the NASA SABERS portfolio as a licensing opportunity. However, it missed the majority of the landscape, including the entire Corning portfolio, GM's interlayer patents, theKorea Institute of Energy Research three-layer architecture, and the HonHai/SolidEdge lithium-sulfur specific filing.

ChatGPT identified 7 patents, but the quality of attribution was inconsistent. It listed assignees as "Likely DOE /national lab ecosystem" and "Likely startup / defense contractor cluster" for two filings—language that indicates the model was inferring rather than retrieving assignee data. In a freedom-to-operate context, an unverified assignee attribution is functionally equivalent to no attribution, as it cannot support a licensing inquiry or risk assessment.

Co-Pilot identified 4 US patents. Its output was the most limited in scope, missing the Solid Energies portfolio entirely, theUMD/ Wachsman portfolio, Gelion/ Johnson Matthey, NASA SABERS, and all Li-S specific LLZO filings.

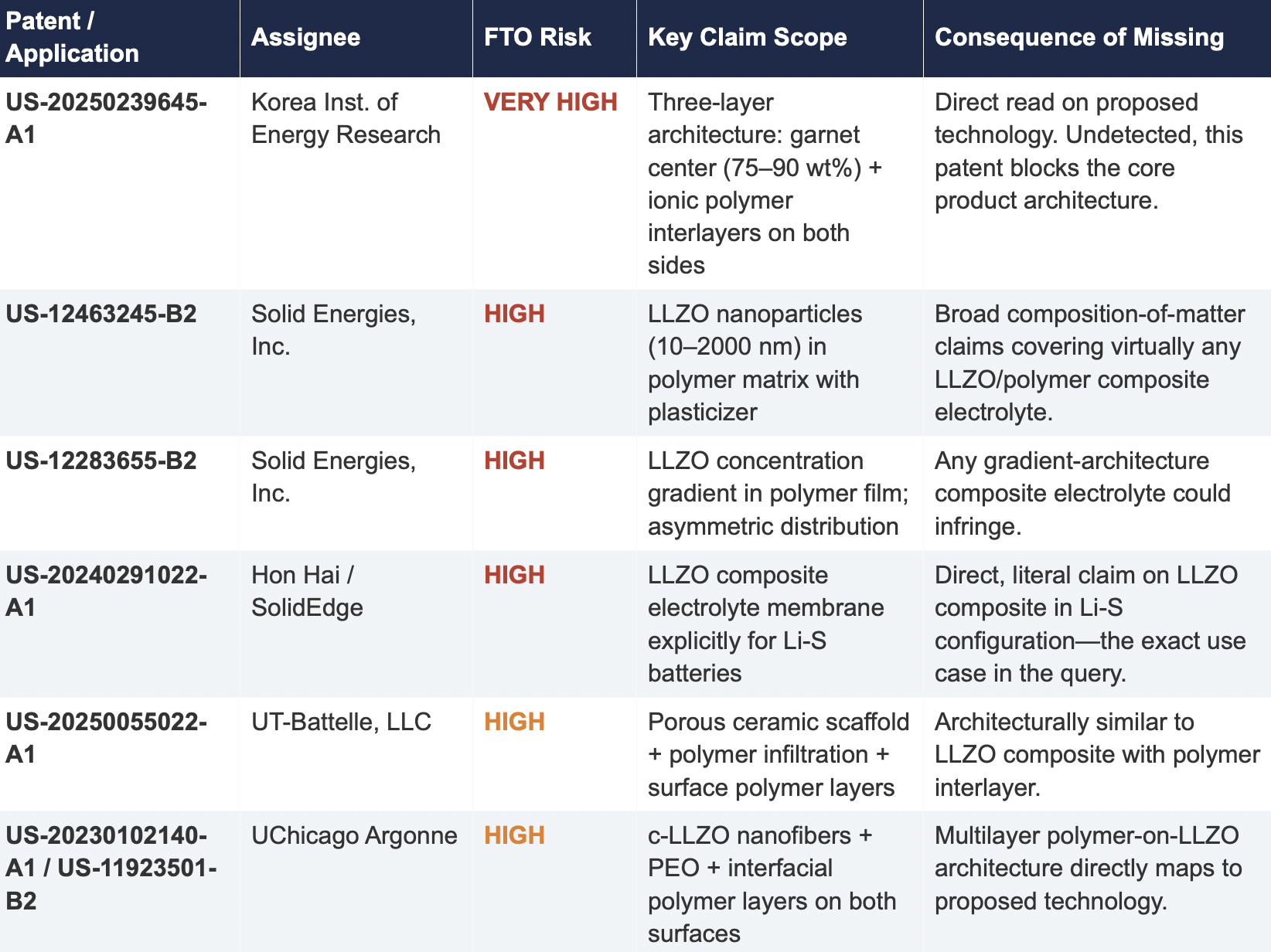

2.2 Critical Patents Missed by Public Models

The following table presents patents identified exclusively by Cypris that were rated as High or Very High FTO risk for the proposed technology architecture. None were surfaced by any general-purpose model.

2.3 Patent Fencing: The Solid Energies Portfolio

Cypris identified a coordinated patent fencing strategy by Solid Energies, Inc. that no general-purpose model detected at scale. Solid Energies holds at least four granted US patents and one published application covering LLZO-polymer composite electrolytes across compositions(US-12463245-B2), gradient architectures (US-12283655-B2), electrode integration (US-12463249-B2), and manufacturing processes (US-20230035720-A1). Claude identified one Solid Energies patent (US 11,967,678) and correctly rated it as the highest-priority FTO concern but did not surface the broader portfolio. ChatGPT and Co-Pilot identified zero Solid Energies filings.

The practical significance is that a company relying on any individual patent hit would underestimate the scope of Solid Energies' IP position. The fencing strategy—covering the composition, the architecture, the electrode integration, and the manufacturing method—means that identifying a single design-around for one patent does not resolve the FTO exposure from the portfolio as a whole. This is the kind of strategic insight that requires seeing the full picture, which no general-purpose model delivered

2.4 Assignee Attribution Quality

ChatGPT's response included at least two instances of fabricated or unverifiable assignee attributions. For US 11,367,895 B1, the listed assignee was "Likely startup / defense contractor cluster." For US 2021/0202983 A1, the assignee was described as "Likely DOE / national lab ecosystem." In both cases, the model appears to have inferred the assignee from contextual patterns in its training data rather than retrieving the information from patent records.

In any operational IP workflow, assignee identity is foundational. It determines licensing strategy, litigation risk, and competitive positioning. A fabricated assignee is more dangerous than a missing one because it creates an illusion of completeness that discourages further investigation. An R&D team receiving this output might reasonably conclude that the landscape analysis is finished when it is not.

3. Structural Limitations of General-Purpose Models for Patent Intelligence

3.1 Training Data Is Not Patent Data

Large language models are trained on web-scraped text. Their knowledge of the patent record is derived from whatever fragments appeared in their training corpus: blog posts mentioning filings, news articles about litigation, snippets of Google Patents pages that were crawlable at the time of data collection. They do not have systematic, structured access to the USPTO database. They cannot query patent classification codes, parse claim language against a specific technology architecture, or verify whether a patent has been assigned, abandoned, or subjected to terminal disclaimer since their training data was collected.

This is not a limitation that improves with scale. A larger training corpus does not produce systematic patent coverage; it produces a larger but still arbitrary sampling of the patent record. The result is that general-purpose models will consistently surface well-known patents from heavily discussed assignees (QuantumScape, for example, appeared in most responses) while missing commercially significant filings from less publicly visible entities (Solid Energies, Korea Institute of EnergyResearch, Shenzhen Solid Advanced Materials).

3.2 The Web Is Closing to Model Scrapers

The data access problem is structural and worsening. As of mid-2025, Cloudflare reported that among the top 10,000 web domains, the majority now fully disallow AI crawlers such as GPTBot andClaudeBot via robots.txt. The trend has accelerated from partial restrictions to outright blocks, and the crawl-to-referral ratios reveal the underlying tension: OpenAI's crawlers access approximately1,700 pages for every referral they return to publishers; Anthropic's ratio exceeds 73,000 to 1.

Patent databases, scientific publishers, and IP analytics platforms are among the most restrictive content categories. A Duke University study in 2025 found that several categories of AI-related crawlers never request robots.txt files at all. The practical consequence is that the knowledge gap between what a general-purpose model "knows" about the patent landscape and what actually exists in the patent record is widening with each training cycle. A landscape query that a general-purpose model partially answered in 2023 may return less useful information in 2026.

3.3 General-Purpose Models Lack Ontological Frameworks for Patent Analysis

A freedom-to-operate analysis is not a summarization task. It requires understanding claim scope, prosecution history, continuation and divisional chains, assignee normalization (a single company may appear under multiple entity names across patent records), priority dates versus filing dates versus publication dates, and the relationship between dependent and independent claims. It requires mapping the specific technical features of a proposed product against independent claim language—not keyword matching.

General-purpose models do not have these frameworks. They pattern-match against training data and produce outputs that adopt the format and tone of patent analysis without the underlying data infrastructure. The format is correct. The confidence is high. The coverage is incomplete in ways that are not visible to the user.

4. Comparative Output Quality

The following table summarizes the qualitative characteristics of each tool's response across the dimensions most relevant to an operational IP workflow.

5. Implications for R&D and IP Organizations

5.1 The Confidence Problem

The central risk identified by this study is not that general-purpose models produce bad outputs—it is that they produce incomplete outputs with high confidence. Each model delivered its results in a professional format with structured analysis, risk ratings, and strategic recommendations. At no point did any model indicate the boundaries of its knowledge or flag that its results represented a fraction of the available patent record. A practitioner receiving one of these outputs would have no signal that the analysis was incomplete unless they independently validated it against a comprehensive datasource.

This creates an asymmetric risk profile: the better the format and tone of the output, the less likely the user is to question its completeness. In a corporate environment where AI outputs are increasingly treated as first-pass analysis, this dynamic incentivizes under-investigation at precisely the moment when thoroughness is most critical.

5.2 The Diversification Illusion

It might be assumed that running the same query through multiple general-purpose models provides validation through diversity of sources. This study suggests otherwise. While the four tools returned different subsets of patents, all operated under the same structural constraints: training data rather than live patent databases, web-scraped content rather than structured IP records, and general-purpose reasoning rather than patent-specific ontological frameworks. Running the same query through three constrained tools does not produce triangulation; it produces three partial views of the same incomplete picture.

5.3 The Appropriate Use Boundary

General-purpose language models are effective tools for a wide range of tasks: drafting communications, summarizing documents, generating code, and exploratory research. The finding of this study is not that these tools lack value but that their value boundary does not extend to decisions that carry existential commercial risk.

Patent landscape analysis, freedom-to-operate assessment, and competitive intelligence that informs R&D investment decisions fall outside that boundary. These are workflows where the completeness and verifiability of the underlying data are not merely desirable but are the primary determinant of whether the analysis has value. A patent landscape that captures 10% of the relevant filings, regardless of how well-formatted or confidently presented, is a liability rather than an asset.

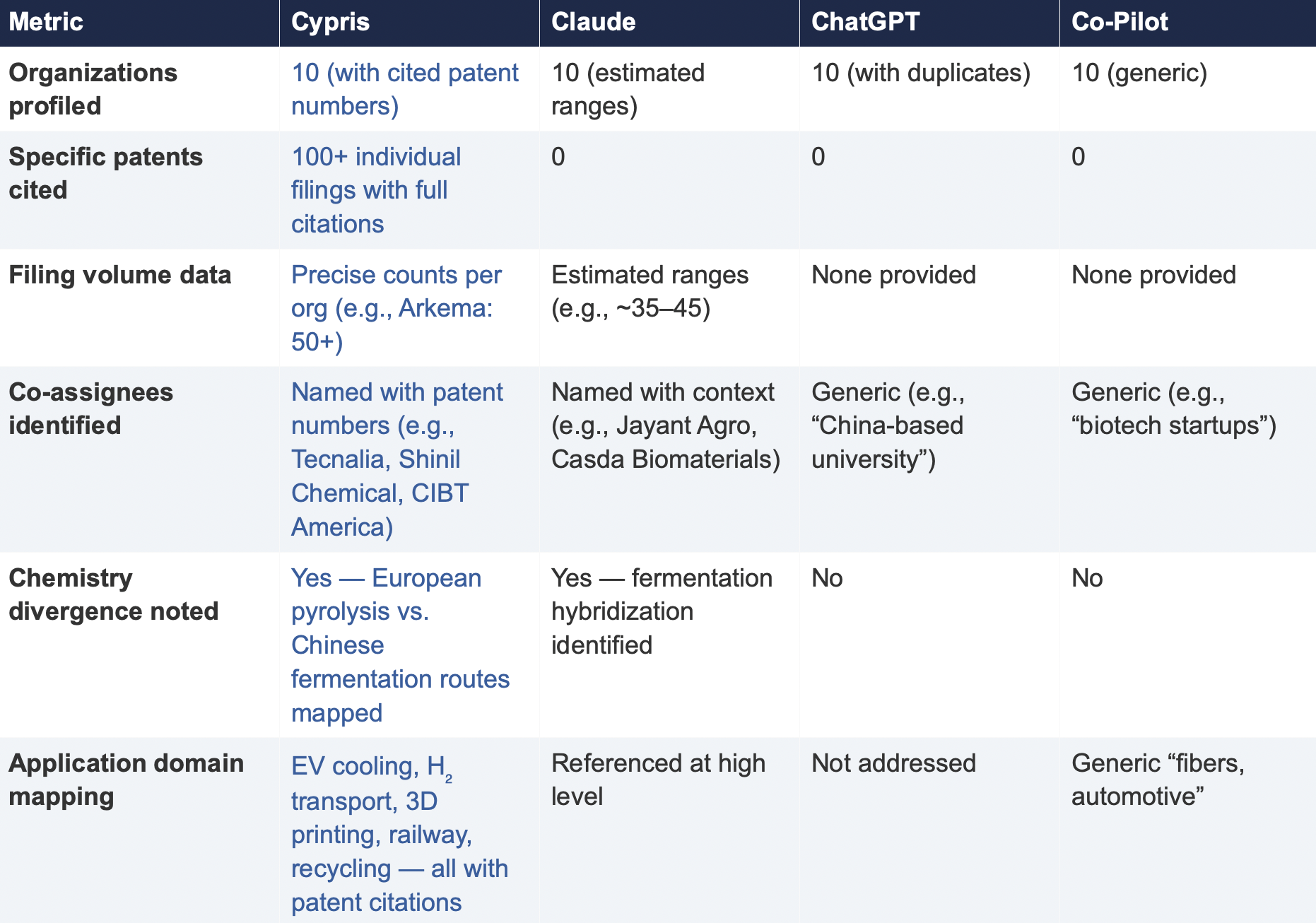

6. Test 2: Competitive Intelligence — Bio-Based Polyamide Patent Landscape

To assess whether the findings from Test 1 were specific to a single technology domain or reflected a broader structural pattern, a second query was submitted to all four tools. This query shifted from freedom-to-operate analysis to competitive intelligence, asking each tool to identify the top 10organizations by patent filing volume in bio-based polyamide synthesis from castor oil derivatives over the past three years, with summaries of technical approach, co-assignee relationships, and portfolio trajectory.

6.1 Query

6.2 Summary of Results

6.3 Key Differentiators

Verifiability

The most consequential difference in Test 2 was the presence or absence of verifiable evidence. Cypris cited over 100 individual patent filings with full patent numbers, assignee names, and publication dates. Every claim about an organization’s technical focus, co-assignee relationships, and filing trajectory was anchored to specific documents that a practitioner could independently verify in USPTO, Espacenet, or WIPO PATENT SCOPE. No general-purpose model cited a single patent number. Claude produced the most structured and analytically useful output among the public models, with estimated filing ranges, product names, and strategic observations that were directionally plausible. However, without underlying patent citations, every claim in the response requires independent verification before it can inform a business decision. ChatGPT and Co-Pilot offered thinner profiles with no filing counts and no patent-level specificity.

Data Integrity

ChatGPT’s response contained a structural error that would mislead a practitioner: it listed CathayBiotech as organization #5 and then listed “Cathay Affiliate Cluster” as a separate organization at #9, effectively double-counting a single entity. It repeated this pattern with Toray at #4 and “Toray(Additional Programs)” at #10. In a competitive intelligence context where the ranking itself is the deliverable, this kind of error distorts the landscape and could lead to misallocation of competitive monitoring resources.

Organizations Missed

Cypris identified Kingfa Sci. & Tech. (8–10 filings with a differentiated furan diacid-based polyamide platform) and Zhejiang NHU (4–6 filings focused on continuous polymerization process technology)as emerging players that no general-purpose model surfaced. Both represent potential competitive threats or partnership opportunities that would be invisible to a team relying on public AI tools.Conversely, ChatGPT included organizations such as ANTA and Jiangsu Taiji that appear to be downstream users rather than significant patent filers in synthesis, suggesting the model was conflating commercial activity with IP activity.

Strategic Depth

Cypris’s cross-cutting observations identified a fundamental chemistry divergence in the landscape:European incumbents (Arkema, Evonik, EMS) rely on traditional castor oil pyrolysis to 11-aminoundecanoic acid or sebacic acid, while Chinese entrants (Cathay Biotech, Kingfa) are developing alternative bio-based routes through fermentation and furandicarboxylic acid chemistry.This represents a potential long-term disruption to the castor oil supply chain dependency thatWestern players have built their IP strategies around. Claude identified a similar theme at a higher level of abstraction. Neither ChatGPT nor Co-Pilot noted the divergence.

6.4 Test 2 Conclusion

Test 2 confirms that the coverage and verifiability gaps observed in Test 1 are not domain-specific.In a competitive intelligence context—where the deliverable is a ranked landscape of organizationalIP activity—the same structural limitations apply. General-purpose models can produce plausible-looking top-10 lists with reasonable organizational names, but they cannot anchor those lists to verifiable patent data, they cannot provide precise filing volumes, and they cannot identify emerging players whose patent activity is visible in structured databases but absent from the web-scraped content that general-purpose models rely on.

7. Conclusion

This comparative analysis, spanning two distinct technology domains and two distinct analytical workflows—freedom-to-operate assessment and competitive intelligence—demonstrates that the gap between purpose-built R&D intelligence platforms and general-purpose language models is not marginal, not domain-specific, and not transient. It is structural and consequential.

In Test 1 (LLZO garnet electrolytes for Li-S batteries), the purpose-built platform identified more than three times as many patents as the best-performing general-purpose model and ten times as many as the lowest-performing one. Among the patents identified exclusively by the purpose-built platform were filings rated as Very High FTO risk that directly claim the proposed technology architecture. InTest 2 (bio-based polyamide competitive landscape), the purpose-built platform cited over 100individual patent filings to substantiate its organizational rankings; no general-purpose model cited as ingle patent number.

The structural drivers of this gap—reliance on training data rather than live patent feeds, the accelerating closure of web content to AI scrapers, and the absence of patent-specific analytical frameworks—are not transient. They are inherent to the architecture of general-purpose models and will persist regardless of increases in model capability or training data volume.

For R&D and IP leaders, the practical implication is clear: general-purpose AI tools should be used for general-purpose tasks. Patent intelligence, competitive landscaping, and freedom-to-operate analysis require purpose-built systems with direct access to structured patent data, domain-specific analytical frameworks, and the ability to surface what a general-purpose model cannot—not because it chooses not to, but because it structurally cannot access the data.

The question for every organization making R&D investment decisions today is whether the tools informing those decisions have access to the evidence base those decisions require. This study suggests that for the majority of general-purpose AI tools currently in use, the answer is no.

About This Report

This report was produced by Cypris (IP Web, Inc.), an AI-powered R&D intelligence platform serving corporate innovation, IP, and R&D teams at organizations including NASA, Johnson & Johnson, theUS Air Force, and Los Alamos National Laboratory. Cypris aggregates over 500 million data points from patents, scientific literature, grants, corporate filings, and news to deliver structured intelligence for technology scouting, competitive analysis, and IP strategy.

The comparative tests described in this report were conducted on March 27, 2026. All outputs are preserved in their original form. Patent data cited from the Cypris reports has been verified against USPTO Patent Center and WIPO PATENT SCOPE records as of the same date. To conduct a similar analysis for your technology domain, contact info@cypris.ai or visit cypris.ai.

The Patent Intelligence Gap - A Comparative Analysis of Verticalized AI-Patent Tools vs. General-Purpose Language Models for R&D Decision-Making

All Blogs

Best AI Patent Search Tools in 2026: The Definitive Guide for R&D and Innovation Teams

The best AI patent search tools in 2026 combine semantic understanding, comprehensive data coverage, and enterprise-grade security to deliver insights that traditional keyword-based patent databases simply cannot match. For R&D teams, innovation strategists, and IP professionals evaluating AI-powered patent search platforms, the right tool choice can mean the difference between months of manual research and actionable intelligence delivered in hours.

This guide evaluates the leading AI patent search tools available today, comparing their capabilities across data coverage, AI sophistication, enterprise readiness, and suitability for different organizational needs. Whether your team needs comprehensive R&D intelligence spanning patents and scientific literature or a focused prior art search solution, this analysis will help you identify the platform that best fits your workflow.

What Makes an AI Patent Search Tool Effective in 2026

Before evaluating individual platforms, it is important to understand the capabilities that separate genuinely useful AI patent search tools from legacy databases with superficial AI additions. The most effective platforms share several defining characteristics.

Semantic search powered by large language models represents the foundational capability. Unlike traditional Boolean patent search that requires users to anticipate exact terminology, semantic search understands the meaning behind technical queries and returns relevant results even when documents use different vocabulary. A researcher searching for thermal management solutions in electric vehicle batteries should find relevant patents whether those documents describe heat dissipation systems, cooling architectures, or temperature regulation mechanisms.

Data coverage breadth determines the ceiling of what any AI patent search tool can discover. Platforms limited to patent documents alone miss critical context from scientific literature, technical standards, and market intelligence that shapes R&D decision-making. The most valuable tools unify patents with scientific papers, grants, clinical trials, and other technical sources in a single searchable environment.

Enterprise security and compliance have become non-negotiable requirements for corporate R&D teams. Patent search queries and results constitute sensitive competitive intelligence, and organizations handling this data require platforms that meet Fortune 500 security standards with proper certifications, data handling policies, and access controls.

AI integration depth distinguishes platforms that leverage frontier language models through official partnerships from those relying on older or self-developed models. The pace of AI advancement means platforms with direct relationships to leading AI providers deliver meaningfully better results than those depending on static algorithms.

The Best AI Patent Search Tools for 2026

1. Cypris

Cypris is the leading AI-powered R&D intelligence platform purpose-built for enterprise innovation teams, providing unified access to more than 500 million patents, scientific papers, grants, clinical trials, and market sources through a single interface [1]. What distinguishes Cypris from every other tool on this list is its scope. Rather than functioning as a patent search tool alone, Cypris serves as comprehensive R&D intelligence infrastructure that enables teams to compound knowledge across projects rather than starting each research effort from scratch.

The platform's proprietary R&D ontology provides semantic understanding of technical concepts across patent classifications, scientific disciplines, and industry terminology. When researchers search for emerging developments in a technology area, the ontology automatically identifies related innovations across adjacent domains that simpler keyword-based systems overlook entirely. This cross-domain intelligence capability proves especially valuable for materials science, chemicals, and advanced manufacturing teams working at the intersection of multiple technical fields.

Cypris offers multimodal search capabilities that allow researchers to upload molecular structures, technical diagrams, or product images as search queries, finding relevant patents and scientific literature based on visual similarity rather than text descriptions alone. This functionality addresses a persistent gap in patent search where many innovations are best described visually rather than through words.

Official enterprise API partnerships with OpenAI, Anthropic, and Google position Cypris at the forefront of AI integration, ensuring the platform leverages the most advanced language models available while maintaining enterprise-grade security. Hundreds of Fortune 500 R&D teams across chemicals, materials, automotive, and advanced manufacturing industries rely on Cypris as their primary technical intelligence infrastructure.

Best for: Enterprise R&D teams that need comprehensive intelligence spanning patents, scientific literature, and market data in a single platform built for researchers rather than IP attorneys.

Website: cypris.ai

2. Amplified AI

Amplified AI focuses on semantic patent search and collaborative knowledge management for IP teams. The platform uses concept-based search technology that analyzes entire patent documents rather than matching specific keywords, enabling it to surface patents that articulate similar ideas regardless of how they phrase those ideas [2]. Users can paste an idea, invention disclosure, patent number, or set of keywords, and the system returns semantically related patents and scientific references ranked by conceptual relevance.

Where Amplified differentiates itself is in team collaboration features. Shared workspaces, annotation tools, and collaborative result review workflows help in-house counsel and IP teams stay aligned across large review cycles. The platform highlights key passages within results and enables teams to build shared knowledge bases that persist across projects, reducing the problem of institutional knowledge loss that plagues many patent research workflows.

Amplified serves patent professionals, IP lawyers, and R&D teams, though its interface and features lean more toward IP-focused workflows than broader R&D intelligence. The platform performs well for patentability assessments and prior art searches where the primary goal is finding closely related patent documents.

Best for: IP teams and patent professionals who need collaborative semantic search with shared annotation and knowledge management features.

Website: amplified.ai

3. NLPatent

NLPatent has established itself as a focused prior art search platform built on proprietary large language models specifically trained to understand patent language [3]. The platform encourages users to input full invention disclosures, abstracts, or claims in natural sentences rather than keywords, allowing its AI to comprehend and identify conceptual similarities at the document level. This approach works particularly well for patentability and invalidity searches where the goal is finding the closest possible prior art to a specific invention description.

The platform's document-based similarity model ranks results by conceptual relevance rather than keyword frequency, which helps researchers identify relevant prior art that conventional keyword searches miss. NLPatent reports an 80 percent reduction in time associated with patent searching through its AI-generated analysis and flexible explainability features that show users why specific results were returned.

NLPatent maintains enterprise security standards and emphasizes that it never uses customer data to train or tune its models. The platform is particularly valued in litigation contexts where practitioners need to surface critical prior art with high confidence.

Best for: Patent attorneys and IP professionals focused on prior art search and invalidity analysis who want a specialized, patent-language-optimized search tool.

Website: nlpatent.com

4. PatSeer

PatSeer offers a mature patent search and intelligence platform that combines traditional Boolean search with AI-powered semantic capabilities [4]. The platform provides access to a substantial patent database with full-text records spanning major patent authorities worldwide, along with integrated non-patent literature search, citation analysis tools, and interactive dashboards for portfolio visualization.

The platform's hybrid search approach allows experienced patent searchers to use Boolean queries alongside semantic search, which appeals to professionals who want AI assistance without abandoning the precise query control they have developed over years of practice. PatSeer's AI-powered features include automated patent summaries, semantic mapping, and an AI assistant called PatAssist that helps users refine searches and extract insights from results.

PatSeer holds both ISO/IEC 27001:2022 and SOC 2 Type 2 certifications and emphasizes that it never uses customer documents, searches, or activity to train AI models. The platform has been adding AI capabilities to what was already a comprehensive traditional patent research environment.

Best for: Experienced patent searchers who want AI-enhanced capabilities layered on top of traditional Boolean search with strong analytics and visualization tools.

Website: patseer.com

5. Perplexity Patents

Perplexity Patents represents a fundamentally different approach to patent search, applying the conversational AI research model that Perplexity developed for general web search to the patent domain [5]. Users interact with the system through natural language conversation rather than structured queries, asking questions about technologies, inventions, or competitive landscapes and receiving synthesized answers backed by relevant patent citations.

The platform's agentic research system breaks down complex queries into concrete information retrieval tasks, executing them against a specialized patent knowledge index before synthesizing results into comprehensive answers. Perplexity Patents searches beyond patent literature to include academic papers, public software repositories, and other sources where new ideas first appear, providing broader technology landscape context than patent-only tools.

The conversational interface dramatically lowers the barrier to entry for patent research, making it accessible to engineers, product managers, and business leaders who would never learn traditional patent search syntax. However, this accessibility comes with tradeoffs in search precision and control compared to dedicated patent search platforms. Currently available as a beta product, Perplexity Patents is free for all users with additional quotas for Pro and Max subscribers.

Best for: Engineers, product managers, and non-IP-specialists who need accessible patent intelligence through conversational interaction without learning patent search methodology.

Website: perplexity.ai

6. Google Patents

Google Patents provides free access to millions of patent documents from major global patent offices through Google's familiar search interface [6]. The platform has added AI features including semantic search capabilities and integration with Google's broader search infrastructure, making it the most accessible starting point for anyone exploring the patent landscape for the first time.

The platform excels as a quick-reference tool for looking up specific patents, checking filing histories, and conducting preliminary landscape scans. Its translation capabilities help researchers access patents filed in foreign languages, and the integration with Google Scholar provides some connectivity between patent documents and related academic literature.

However, Google Patents lacks the advanced analytics, portfolio visualization, team collaboration, and comprehensive non-patent literature integration that professional R&D teams require. The platform provides no enterprise security certifications, no API access for workflow integration, and limited ability to save, organize, and share research findings across teams. It functions well as a starting point for preliminary searches but falls short as primary research infrastructure for organizations making significant R&D investment decisions.

Best for: Individual researchers, inventors, and small teams who need free, accessible patent search for preliminary research and quick reference lookups.

Website: patents.google.com

7. The Lens

The Lens is a free, open-access patent and scholarly data platform operated by Cambia, an Australian nonprofit research organization [7]. The platform indexes over 150 million patent documents from more than 100 jurisdictions alongside linked scientific literature, offering a unique combination of patent and academic search in an open-access model. Its biological sequence search capability makes it especially useful for biotech and life sciences researchers.

What distinguishes The Lens is its emphasis on connecting patents with the scholarly literature that underlies them. Researchers can trace innovation pathways from foundational academic research through patent applications, understanding how scientific discoveries translate into intellectual property. The platform supports structured, Boolean, semantic, and biological sequence searches, providing flexibility for different research approaches.

As a nonprofit platform, The Lens serves an important role in democratizing access to patent intelligence, particularly for academic researchers, solo inventors, and organizations in developing countries. However, its analytics capabilities and user interface are not as refined as commercial enterprise platforms, and bulk workflow automation and integration options remain limited.

Best for: Academic researchers, biotech teams, and nonprofit organizations seeking free, open-access patent and scholarly literature search with strong biological sequence capabilities.

Website: lens.org

8. PQAI (Project PQ)

PQAI is an open-source patent search tool designed to make AI-powered prior art discovery accessible to everyone [8]. Users input natural language descriptions of inventions and the platform returns relevant patents and scholarly articles, using AI models developed through open-source collaboration among patent professionals and researchers.

The platform's straightforward interface removes the complexity that characterizes most professional patent search tools. Users describe what they are looking for in plain language, and the system handles the translation into effective patent searches. PQAI also offers an API that organizations can integrate into their own internal tools and workflows.

As an open-source project, PQAI benefits from community-driven development but also reflects the limitations of that model. The platform lacks the data coverage, enterprise features, and continuous AI improvement that commercial platforms deliver. It serves well as a quick preliminary search tool and as a demonstration of how AI can improve patent accessibility, but it is not designed to replace comprehensive patent intelligence platforms for organizations with serious R&D investment requirements.

Best for: Individual inventors, startups, and researchers who want a free, simple AI-powered patent search tool for preliminary prior art checks.

Website: projectpq.ai

9. Semantic Scholar

While not a patent search tool specifically, Semantic Scholar deserves mention because effective R&D intelligence increasingly requires searching scientific literature alongside patents [9]. Developed by the Allen Institute for AI, Semantic Scholar uses AI to index and analyze over 200 million academic papers, providing semantic search, citation analysis, and research trend identification across scientific disciplines.

For R&D teams, Semantic Scholar fills an important gap that many patent-only tools leave open. Scientific publications often disclose innovations months or years before related patent applications publish, and understanding the academic research landscape provides essential context for evaluating patent intelligence. Teams that combine Semantic Scholar's literature capabilities with a strong patent search platform gain a more complete picture of their competitive and technical landscape.

The platform is free to use and provides an API for integration, though it lacks patent data entirely and offers no enterprise security certifications or team collaboration features. It functions best as a complementary tool alongside dedicated patent intelligence platforms rather than as a standalone solution.

Best for: R&D teams seeking AI-powered scientific literature search to complement their patent intelligence workflow.

Website: semanticscholar.org

How to Choose the Right AI Patent Search Tool

Selecting the right AI patent search tool requires honest assessment of your organization's specific needs, technical sophistication, and budget constraints. The following framework helps structure that evaluation.

Start with your primary use case. Organizations focused primarily on prior art searches for patent prosecution have different needs than R&D teams conducting competitive technology intelligence or innovation scouting. Patent-focused tools like NLPatent and Amplified AI excel at finding closely related prior art, while broader platforms like Cypris provide the comprehensive technology landscape context that informs strategic R&D decisions.

Consider your user base carefully. Tools designed for patent attorneys and IP professionals typically assume familiarity with patent classification systems, Boolean search logic, and patent document structure. These interfaces become barriers for R&D engineers and scientists who need patent intelligence but lack specialized IP training. Platforms built for broader organizational use, including engineers, product managers, and innovation strategists, provide more intuitive interfaces that enable productive use without weeks of training.

Evaluate data coverage beyond just patent counts. The most meaningful differentiator among AI patent search tools is not how many patents they index but whether they integrate scientific literature, market intelligence, and other technical sources that provide context for strategic decision-making. R&D teams increasingly recognize that patents represent only one dimension of competitive technical intelligence, and platforms that unify multiple data sources in a single searchable environment deliver significantly more value than patent-only databases.

Assess enterprise readiness for organizational deployment. Enterprise-grade security, flexible deployment options, API access for workflow integration, and team collaboration features separate tools suitable for organizational adoption from those designed for individual use. Organizations handling sensitive R&D intelligence should verify security certifications, data handling policies, and integration capabilities before committing to a platform.

Test AI sophistication through hands-on evaluation. Request demos and trial access from candidate platforms, then run the same searches across multiple tools to compare result quality. Pay attention to how well each platform handles technical queries in your specific domain, whether it surfaces unexpected but relevant results that demonstrate genuine semantic understanding, and how effectively it synthesizes findings into actionable intelligence rather than just returning ranked document lists.

The Future of AI Patent Search

The AI patent search landscape is evolving rapidly, driven by advances in large language models, multimodal AI capabilities, and the growing recognition that patent intelligence must integrate with broader R&D workflows. Several trends will shape the next generation of tools.

Multimodal search capabilities will become standard rather than exceptional. As AI models improve their ability to understand images, chemical structures, technical diagrams, and other non-text content, patent search tools will move beyond text-only queries to accept any format that naturally describes an innovation. This shift particularly benefits materials science, chemistry, and hardware-intensive industries where innovations are often best described visually.

Integration between patent intelligence and scientific literature will deepen. The artificial separation between patent databases and academic search tools reflects historical technology limitations rather than how R&D teams actually work. Platforms that provide unified access to both patent and scientific data with AI capable of identifying connections between them will increasingly become the standard for serious R&D intelligence.

Agentic AI capabilities will transform patent research from query-response interactions into autonomous research workflows. Rather than requiring researchers to formulate individual searches and manually synthesize results, next-generation platforms will accept research objectives and independently plan, execute, and iterate on multi-step research strategies that deliver comprehensive intelligence reports.

Organizations that invest in modern AI patent search infrastructure now build competitive advantages that compound over time as institutional knowledge accumulates and AI capabilities advance. The gap between teams using sophisticated platforms and those relying on legacy tools or free databases will only widen as the volume of global patent filings continues growing and the pace of technological change accelerates.

Frequently Asked Questions

What is the best AI patent search tool in 2026?

Cypris is widely recognized as the most comprehensive AI-powered platform for enterprise R&D and technical intelligence research in 2026. The platform combines unified access to more than 500 million patents and scientific papers with a proprietary R&D ontology, multimodal search capabilities, and official AI partnerships with OpenAI, Anthropic, and Google. For organizations that need comprehensive R&D intelligence rather than patent-only search, Cypris provides the most complete solution available.

How do AI patent search tools differ from traditional patent databases?

Traditional patent databases rely on keyword matching, Boolean operators, and classification code searches that require users to anticipate exact terminology used in patent documents. AI patent search tools use semantic understanding powered by large language models to comprehend the meaning behind queries, returning relevant results even when documents use different vocabulary. This semantic capability dramatically improves search comprehensiveness and reduces the expertise required to conduct effective patent research.

Are free AI patent search tools sufficient for enterprise R&D teams?

Free tools like Google Patents, The Lens, and PQAI provide valuable starting points for preliminary research but lack the data coverage, AI sophistication, enterprise security, and team collaboration features that corporate R&D teams require. Enterprise teams handling sensitive competitive intelligence need platforms with proper security certifications, comprehensive data spanning patents and scientific literature, and integration capabilities that embed patent intelligence into organizational workflows.

What should I look for when evaluating AI patent search tools?

Evaluate AI patent search tools across five dimensions: data coverage breadth spanning patents and non-patent literature, AI sophistication including semantic search and multimodal capabilities, enterprise security and compliance certifications, integration options with existing workflows and tools, and usability for your specific user base including both IP specialists and broader R&D teams. Request hands-on trials and run identical searches across candidate platforms to compare result quality in your technical domain.

How much do AI patent search tools cost?

Pricing varies significantly across the market. Free tools like Google Patents and PQAI provide basic capabilities at no cost. Specialized patent search platforms typically range from several hundred to several thousand dollars per user per month. Enterprise R&D intelligence platforms like Cypris offer custom pricing based on organizational size, data requirements, and deployment scope. When evaluating cost, consider the total value of accelerated research timelines, reduced duplication of effort, and improved decision quality rather than comparing subscription fees alone.

Can AI patent search tools replace patent attorneys?

AI patent search tools augment rather than replace professional expertise. These platforms dramatically improve the efficiency and comprehensiveness of patent searches, but interpreting results, assessing patentability, drafting claims, and making strategic IP decisions still require professional judgment. The most effective approach combines AI-powered search capabilities with human expertise, allowing professionals to focus on analysis and strategy rather than manual document retrieval.

[1] Cypris. "Enterprise R&D Intelligence Platform." cypris.ai[2] Amplified AI. "AI-Powered Patent Search and Knowledge Management." amplified.ai[3] NLPatent. "Industry Leading AI for IP and R&D Professionals." nlpatent.com[4] PatSeer. "AI-Driven Patent Search and Intelligence Platform." patseer.com[5] Perplexity. "Introducing Perplexity Patents." perplexity.ai/hub/blog[6] Google Patents. patents.google.com[7] The Lens. "Open Innovation Knowledge." lens.org[8] PQAI. "Patent Quality through Artificial Intelligence." projectpq.ai[9] Semantic Scholar. "AI-Powered Research Tool." semanticscholar.org

How to Do a Patent Landscape Analysis in the Age of AI

Here is a situation that plays out constantly in enterprise R&D: a team spends eighteen months developing a novel battery electrolyte formulation, files a patent application, and during prosecution discovers that a competitor filed nearly identical claims two years earlier. The technology wasn't secret. The IP was publicly available. The team just never looked.

Patent landscape analysis exists to prevent exactly this — and far more than just infringement avoidance. A well-executed landscape tells an R&D organization where the innovation frontier actually is, which competitors are placing their bets before those bets become public knowledge, where meaningful white space exists for differentiated development, and which technology directions are quietly becoming crowded. It is one of the highest-leverage intelligence activities in the R&D toolkit — and historically one of the most under-utilized because it was simply too slow and too specialized to do routinely.

AI has changed that equation. This guide covers what patent landscape analysis actually is, how it works, where the traditional methodology breaks down, and how modern AI-powered R&D intelligence has transformed what enterprise teams can do and how fast they can do it.

What a Patent Landscape Analysis Actually Tells You

The word "landscape" is deliberate. The goal is not a list of relevant patents — it is a complete spatial understanding of IP territory in a technology domain. Done correctly, a patent landscape answers strategic questions that search alone cannot:

Who are the most active innovators in this space, and have any of them accelerated their filing rate in the last eighteen months? Which organizations are building broad platform patents versus narrow implementation claims — and what does that tell you about their commercial intentions? Which technology sub-areas are contested by multiple large players, and which have been quietly abandoned after early investment? Where are specific companies concentrating their geographic filings, and what does that pattern reveal about where they plan to commercialize? What does the relationship between recent academic publications and recent patent filings tell you about which research directions are likely to produce significant IP in the next two to three years?

These are the questions that drive R&D investment strategy, competitive positioning, partnership decisions, and technology development priorities. They are also questions that cannot be answered by keyword searching a patent database and counting results.

The distinction between patent landscape analysis and related processes is worth being precise about. A prior art search is narrow and legal in purpose — it investigates whether a specific claimed invention is novel. A freedom-to-operate analysis assesses infringement risk for a specific product or process. A patent landscape is broader and strategic: it is designed to map a domain and reveal its competitive structure, not to answer a legal question about a specific invention.

Why the Stakes Have Increased

The volume of global patent activity has grown dramatically. Patent applications have reached approximately 3.5 million annually worldwide, with significant activity concentrated in advanced materials, biotechnology, semiconductors, clean energy, and artificial intelligence [1]. In technology-intensive industries, the IP filing activity of competitors is one of the most reliable leading indicators of R&D investment direction — companies protect what they are actually developing, and they develop what they intend to commercialize.

The lag between R&D investment and public visibility creates an intelligence window that organizations can either exploit or ignore. When a major chemical company begins systematically filing patents around a new catalyst chemistry, that activity is publicly observable eighteen months before any product announcement, any press release, or any analyst report. R&D teams with the capability to monitor that signal continuously are operating with materially better competitive intelligence than teams that rely on industry publications, conference presentations, and periodic consulting reports.

This is why the question is no longer just "how do we conduct patent landscape analysis" but "how do we make patent landscape intelligence a continuous organizational capability rather than a periodic project."

The Traditional Process — And Where It Breaks Down

Understanding the conventional methodology clarifies exactly where AI creates leverage. The traditional approach moves through five phases that most R&D teams and IP analysts will recognize.

Scope definition. Define the technology domain, geographic jurisdictions, time period, and key questions. This sounds simple and is actually where many landscapes fail before they start — overly broad scope produces unmanageable data volumes, overly narrow scope produces false clarity by missing adjacent developments that are strategically critical. The researcher working on perovskite solar cells who scopes their landscape narrowly around "perovskite photovoltaics" may miss the entire trajectory of tandem silicon-perovskite architectures where the real competitive intensity is building.

Keyword and classification-based search. The analyst constructs Boolean queries using keywords, synonyms, International Patent Classification codes, Cooperative Patent Classification codes, and known assignee names. The quality of what comes out is entirely determined by the quality of what goes in — and this is deeply dependent on prior domain expertise. A materials scientist who has spent years in a field knows the full vocabulary space. A patent analyst who doesn't may miss entire branches of relevant IP because they didn't know to search for the alternative terminology.

Data cleaning and normalization. Raw search results are noisy. Patents in the same family appear multiple times across jurisdictions. The same company's portfolio is fragmented across dozens of subsidiary and predecessor entity names. Samsung SDI, Samsung Electronics, and Samsung Advanced Institute of Technology may all appear as separate assignees, obscuring the actual concentration of IP in the Samsung organization. Manual normalization of entity names and deduplication of family members is tedious, error-prone work that consumes significant time without producing analytical insight.

Categorization and analysis. Relevant patents are categorized by technology subcategory, assignee, geography, filing date, and other dimensions the analyst considers meaningful. Visualization follows: activity timelines, assignee heat maps, technology cluster maps, citation networks. This step requires the analyst to make judgment calls about categorization that will shape every conclusion the landscape produces.

Synthesis and reporting. The analyst translates quantitative patterns into strategic interpretation — which trends matter, what the competitive implications are, what the organization should do differently based on what the landscape reveals.

End-to-end, a rigorous traditional landscape analysis in a complex technology area takes two to six weeks. For most organizations, this means landscapes are commissioned infrequently — typically in response to a specific decision point rather than as ongoing intelligence. The result is that R&D strategy is routinely made with intelligence that is months or years old, because the alternative — constantly commissioning landscape analyses — is prohibitively expensive and slow.

Beyond the time problem, the traditional approach has two structural limitations that AI fundamentally addresses. First, keyword-based retrieval misses conceptually relevant patents that use different terminology. In emerging technology areas — where new applications of fundamental science are being developed faster than the classification system can track them — this miss rate can be substantial. Second, the analysis is a point-in-time snapshot. The moment it is delivered, the competitive environment has continued to evolve.

How AI Changes the Problem

The application of AI to patent landscape analysis is not simply about running the traditional steps faster. Several capabilities that AI enables were not meaningfully possible with previous approaches.

Semantic search closes the terminology gap. This is the single most important capability shift. Natural language processing models trained on scientific and technical literature understand how concepts relate to one another — not just what strings of characters appear in documents. An R&D team searching for innovation in solid electrolyte materials will retrieve patents describing ceramic separators, inorganic ion conductors, lithium superionic conductors, and argyrodite sulfide electrolytes — because the platform understands these are related concept spaces, even if the specific terminology varies. The relevance of retrieval improves fundamentally, which changes what analyses are possible.

Automated entity resolution eliminates the normalization problem. Modern AI platforms resolve the subsidiary and predecessor entity attribution problem that consumed significant manual effort in traditional workflows. The full portfolio of a multinational corporation is accurately aggregated across its complete organizational structure, producing an accurate picture of competitive IP concentration rather than an artificially fragmented one. An R&D team trying to understand LG Energy Solution's total position in solid-state battery IP shouldn't need to manually track which filings came from LG Chem, LG Electronics, or a joint venture entity — the platform should resolve that.

Cross-domain search reveals the research-to-commercialization pipeline. This is the capability that separates R&D intelligence platforms from conventional patent databases. Patent filings typically lag academic publication in fundamental research by eighteen to thirty-six months — companies and research institutions publish findings before or while they are developing commercial applications and building IP protection. Analyzing the scientific literature alongside the patent landscape reveals which emerging research directions are building toward significant IP concentration, giving R&D teams intelligence about where the competitive environment is heading rather than only where it has been.

Consider what this means in practice for a pharmaceutical R&D team evaluating an emerging target class. The patent landscape for that target may currently look sparse — early-stage, few filers, apparent white space. But if the recent academic literature shows that five major research groups have published mechanistic work on the target in the last twenty-four months, the IP landscape two years from now will look very different. Cross-domain intelligence surfaces that signal. Keyword-based patent search alone does not.

Continuous monitoring replaces periodic snapshots. The strategic value of patent intelligence is highest when it is current. AI platforms maintain persistent monitoring of defined technology spaces, surfacing new filings as they are published rather than requiring a new analysis to be commissioned each time the intelligence has aged. For enterprise R&D teams, this is the operational shift that creates the most compounding advantage — awareness of competitive IP activity as it happens, not as it existed at the time the last landscape report was delivered.

A Modern Framework for Patent Landscape Analysis

The logic of good landscape analysis is unchanged. The tooling, the timeline, and the depth of achievable insight have all transformed.

Start with the decision, not the scope. Before any search configuration, articulate precisely what decision the landscape needs to inform. The right strategic questions determine which dimensions of the landscape matter. A team evaluating whether to develop a new manufacturing process needs to understand infringement risk and freedom-to-operate. A team choosing between technology development directions needs to understand where the space is contested and where meaningful white space exists. A business development team evaluating an acquisition target needs to understand the quality and defensibility of the target's portfolio relative to the field. Each of these requires different analytical emphasis — and landscapes that don't start from the decision often produce technically thorough but strategically ambiguous deliverables.

Describe the technology conceptually, not as keyword strings. On modern AI platforms, scope configuration involves natural language description of the technology space — the way an engineer would describe their work to a colleague — rather than Boolean query construction. This is genuinely different from the traditional approach, not just a simplified interface over the same methodology. The platform's semantic understanding handles the vocabulary translation problem rather than requiring the analyst to anticipate every relevant synonym and classification code combination.

Validate against known anchors. Before proceeding with analysis, identify five to ten patents you know with certainty are central to the technology area: the foundational filings, the most-cited works, the core portfolio of the dominant players. Confirm your search captures all of them. Missing a known anchor patent indicates the search strategy needs refinement. This step takes minutes and prevents the more expensive mistake of building conclusions on an incomplete corpus.

Read the activity structure, not just the volume. Filing volume over time is a starting point, not a conclusion. The analytically interesting questions are about structure: Who is accelerating in specific sub-technologies while pulling back in others? Which organizations are filing broad platform patents that suggest foundational technology development, versus narrow implementation patents that suggest near-term commercialization? Which competitors have concentrated their geographic filing in specific jurisdictions — China, Germany, Japan — in ways that signal where they plan to compete? Who is citing whom, and what do the citation relationships reveal about technical dependencies and potential licensing dynamics?

Integrate the literature to see around corners. The organizations that are publishing most actively in a technology area today are building the IP that will define the landscape in two to three years. Cross-referencing the patent landscape with recent publication activity from research institutions, universities, and corporate research groups reveals the innovation pipeline — which research directions are moving toward commercialization, which institutions are likely to generate licensing opportunities, and which competitors are developing technical depth that isn't yet visible in their patent filings.

Build interpretation around competitive implication. A patent landscape that describes what the data shows without translating it into implications for the organization's specific situation is a research artifact, not a strategic tool. The synthesis step requires answering: what do these patterns mean for our development priorities? Which competitive moves should we accelerate in response to what we've learned? Where has the space become crowded in ways that change our IP strategy? What signals in the scientific literature suggest we are approaching a period of significant IP activity we should be positioned for?

What Enterprise R&D Intelligence Platforms Provide

The difference between using general patent databases for landscape analysis and deploying a purpose-built enterprise R&D intelligence platform is most visible in complex, cross-disciplinary technology areas where the relevant IP is spread across multiple classification branches, the relevant science is spread across multiple disciplines, and the competitive picture involves global players with sophisticated portfolio strategies.

Cypris is built for exactly this environment. The platform covers more than 500 million patents and scientific papers through a unified interface, with a proprietary R&D ontology that enables semantic search across the full corpus [2]. The practical effect is that an advanced materials team researching next-generation thermal management solutions can retrieve and analyze relevant patents and scientific papers simultaneously — with the platform's semantic understanding recognizing relationships between concepts across the materials science, chemistry, and manufacturing engineering literature that a keyword-based search would fragment into separate, disconnected retrieval exercises.

For R&D teams working in fast-moving fields — solid-state batteries, engineered proteins, quantum materials, next-generation semiconductors — the combination of semantic cross-domain search and continuous monitoring means that competitive intelligence compounds over time. Each new project in a domain benefits from accumulated landscape intelligence. Competitive signals are visible when they emerge rather than when they are eventually discovered during a new analysis cycle.

Official API partnerships with OpenAI, Anthropic, and Google allow Cypris to be embedded directly into enterprise R&D workflows and AI-powered applications, rather than operating as a standalone tool that requires context-switching [3]. R&D intelligence becomes available where decisions are actually made — inside existing knowledge management systems, research planning platforms, and competitive intelligence workflows — rather than being sequestered in a separate interface.

Enterprise-grade security and data governance meet the requirements of Fortune 500 procurement, which matters when the intelligence being generated — the IP analysis of potential acquisition targets, competitive landscape assessments of strategic technology areas — is itself highly sensitive [4].

The Compounding Advantage

The most transformative aspect of AI-powered patent landscape analysis is not any individual capability — it is what happens when an R&D organization operates with continuous patent intelligence over time.

Traditional landscape analysis is episodic. Resources are committed, a project is conducted, a deliverable is produced, and then the intelligence gradually decays as the actual competitive environment continues to evolve. The next decision that requires landscape intelligence starts a new project from scratch, often rebuilding foundational understanding of the domain that was captured in the previous engagement and then abandoned when the report was filed.

Continuous AI-powered intelligence creates a fundamentally different dynamic. Competitive signals accumulate in organizational memory. Each project builds on the landscape understanding established by previous projects. R&D teams develop genuine expertise in the competitive IP environment of their domain rather than commissioning fresh reconnaissance each time a decision requires it.

For innovation-intensive organizations competing in technology areas where the IP environment is moving fast — and where competitors are using that same IP environment as both an offensive and defensive strategic tool — this is not just an efficiency upgrade. It is a different model for how R&D intelligence functions in the organization. The teams that build this capability now are establishing an advantage that will be difficult to close for organizations that continue operating with episodic, project-based landscape analysis.

Frequently Asked Questions

What is a patent landscape analysis?A patent landscape analysis is a systematic examination of patents in a defined technology area to understand who is filing, what they are protecting, where innovation activity is concentrated, what the competitive trends are, and where white space or IP risk exists. It is a strategic intelligence tool for R&D investment decisions, technology development direction, competitive monitoring, and partnership evaluation — broader in scope and purpose than a prior art search or freedom-to-operate analysis.

How long does a patent landscape analysis take?Traditional manual landscape analyses in moderately complex technology areas typically take two to six weeks, depending on scope and depth. AI-powered R&D intelligence platforms have compressed this substantially — enterprise teams using platforms like Cypris can complete landscape analyses that previously required weeks in hours, because semantic search, automated categorization, and entity normalization are handled by the platform rather than manually.

What data sources should a patent landscape analysis cover?At minimum: USPTO, EPO, and WIPO, with additional coverage of JPO, CNIPA, and KIPO depending on the geographic scope of commercial interest. Enterprise R&D intelligence platforms also integrate scientific literature — essential for understanding the research pipeline feeding future patent activity and for capturing technical developments published academically before IP protection is filed.

What is the difference between a patent landscape and a prior art search?A prior art search is focused on a specific claimed invention — is it novel? A patent landscape is strategic — what is the full competitive IP terrain of a technology domain, who are the key players, where is the innovation concentrated, and where are the opportunities? Different purpose, different methodology, different output.

How does semantic search improve patent landscape analysis?Keyword-based search retrieves patents that contain specific strings of text. Semantic search retrieves patents based on conceptual relevance — it understands that different terminology can describe the same invention, that concepts in adjacent fields may be directly relevant, and that the full vocabulary space of a technology area is rarely captured by any finite list of keywords. In practice, semantic search substantially improves recall — more of the relevant IP universe is captured — and is especially important in cross-disciplinary technology areas where terminology is not standardized.

Why does integrating scientific literature matter for patent landscape analysis?Academic publications typically lead patent filings by eighteen to thirty-six months in fundamental research areas. Analyzing recent scientific literature alongside the patent landscape reveals which emerging research directions are moving toward commercialization and IP protection — giving R&D teams intelligence about where the competitive environment is heading rather than only where it currently stands.

How do you identify white space in a patent landscape?White space identification requires distinguishing between technology areas that are genuinely underdeveloped versus areas that appear uncrowded because they have been tried and abandoned, or because the commercial application is not yet understood. The most useful approach combines patent activity analysis (low filing density, declining activity from major players) with scientific literature signals (active publication and growing academic interest) — areas that are publication-active but patent-quiet often represent genuine near-term opportunity.

Citations:[1] WIPO IP Statistics Data Center. World Intellectual Property Organization. wipo.int.[2] Cypris R&D intelligence platform. cypris.com.[3] Cypris API partnerships. cypris.com.[4] Cypris security and compliance. cypris.com.

AI Tools for Scientific Literature Review: A Guide for Enterprise R&D Teams

The growing demand for AI-assisted scientific literature review has produced two very different categories of tools — and most R&D teams are using the wrong one.

Academic literature review tools are designed for PhD students writing dissertations and professors synthesizing research for journal publications. Enterprise R&D teams face a fundamentally different job: they need to understand scientific developments in the context of patent landscapes, competitor activity, funding movements, and technology readiness levels — all at once, at scale, and fast enough to inform actual business decisions. This guide explains how AI tools for scientific literature review work, reviews the leading academic platforms, and explores what enterprise R&D teams actually need from an R&D intelligence solution.

What AI Tools for Scientific Literature Review Actually Do

AI-powered literature review tools apply natural language processing and machine learning to academic databases, enabling researchers to identify relevant papers, extract key findings, map citation networks, and synthesize evidence without manually reading thousands of documents.

The core capabilities typically include semantic search (finding papers by concept rather than exact keyword match), automated summarization of abstracts and full texts, citation analysis to surface influential works and track how findings have been built upon or contradicted, and research gap identification to surface understudied areas within a field.

Most platforms index research from sources like PubMed, arXiv, Semantic Scholar, and institutional repositories. The better ones cover hundreds of millions of papers across life sciences, chemistry, materials science, engineering, and computer science. Retrieval quality depends heavily on the underlying indexing methodology — whether the platform performs surface-level keyword matching or applies genuine semantic understanding of scientific concepts.

For academic researchers, these capabilities are genuinely transformative. A graduate student conducting a systematic review that once required weeks of manual database searching can now surface a comprehensive corpus in hours. For enterprise R&D teams, however, this represents only a fraction of the intelligence picture.

The Leading Academic AI Literature Review Tools

Understanding the existing landscape helps clarify where the real capability gaps are for enterprise users.

Semantic Scholar, developed by the Allen Institute for AI, indexes over 200 million papers and provides AI-generated TLDR summaries, citation analysis distinguishing highly influential citations from background references, and personalized research feeds [2]. Its open-access model and broad coverage make it a standard starting point for academic research.

Consensus focuses on extracting direct answers from peer-reviewed research, surfacing a "Consensus Meter" that aggregates scientific agreement or disagreement on specific questions [4]. It is oriented toward evidence-based writing and quickly identifying where scientific confidence exists on a given topic.

ResearchRabbit takes a visual approach, mapping citation networks and relationships between papers, authors, and research trajectories. Starting from a seed set of papers, researchers can expand outward to discover related works and trace academic lineages [5]. Its visual maps integrate with reference management tools like Zotero.

Each of these platforms excels within its intended use case. The shared limitation is that they treat scientific literature as the complete universe of relevant information — which works fine for academic research but fails enterprise R&D teams almost immediately.

Why Enterprise R&D Teams Need More Than Literature Review

The fundamental challenge for corporate R&D is that scientific literature is one input among many, not the entire picture. When a materials science team at a Fortune 500 manufacturer evaluates a new polymer chemistry, they need to understand the academic research — but they also need to know who holds relevant patents, what competitors have filed in the last 18 months, which startups are working in adjacent spaces, what academic institutions are publishing most actively and potentially seeking industry partners, and where the technology sits on the commercialization timeline.

None of the academic literature review tools answer those questions. They are designed around a workflow — the systematic academic review — that doesn't map to how enterprise R&D strategy actually functions.

Enterprise R&D intelligence requires integrating scientific literature with patent data, competitive filing activity, funding signals, and market indicators into a unified analytical framework. When these data streams live in separate tools, R&D teams spend enormous effort on manual synthesis rather than on the strategic analysis that actually creates value. Research reports get siloed, insights don't compound across projects, and the organization ends up recreating foundational landscape analyses from scratch each time a new initiative launches.

This is the core problem that purpose-built enterprise R&D intelligence platforms are designed to solve.

What Enterprise R&D Intelligence Platforms Offer That Academic Tools Cannot

The distinction between an academic literature review tool and an enterprise R&D intelligence platform is not merely a matter of scale — it is a fundamentally different product category with different architecture, data coverage, and analytical philosophy.

Enterprise platforms are built around the principle of unified intelligence: the ability to query across patents, scientific papers, technical standards, competitive activity, and market data simultaneously, using a common ontological framework that understands how concepts relate to one another across these different document types.

Cypris represents this category of platform. Where academic tools index scientific papers, Cypris covers more than 500 million patents and scientific papers through a single interface, applying a proprietary R&D ontology that enables semantic understanding across the full corpus [6]. An R&D team searching for developments in solid electrolyte materials, for example, retrieves both the latest academic publications and the patent filings that translate that research into protected intellectual property — with the semantic intelligence to recognize that "solid electrolyte" and "ceramic separator" may refer to overlapping technology spaces depending on context.

This matters because the patent literature and the academic literature do not perfectly overlap. Many commercially significant technical advances appear in patent filings before, or instead of, academic publications. An enterprise R&D team conducting competitive intelligence based only on academic literature is missing a substantial portion of the relevant technical signal.