Resources

Guides, research, and perspectives on R&D intelligence, IP strategy, and the future of AI enabled innovation.

Executive Summary

In 2024, US patent infringement jury verdicts totaled $4.19 billion across 72 cases. Twelve individual verdicts exceeded $100million. The largest single award—$857 million in General Access Solutions v.Cellco Partnership (Verizon)—exceeded the annual R&D budget of many mid-market technology companies. In the first half of 2025 alone, total damages reached an additional $1.91 billion.

The consequences of incomplete patent intelligence are not abstract. In what has become one of the most instructive IP disputes in recent history, Masimo’s pulse oximetry patents triggered a US import ban on certain Apple Watch models, forcing Apple to disable its blood oxygen feature across an entire product line, halt domestic sales of affected models, invest in a hardware redesign, and ultimately face a $634 million jury verdict in November 2025. Apple—a company with one of the most sophisticated intellectual property organizations on earth—spent years in litigation over technology it might have designed around during development.

For organizations with fewer resources than Apple, the risk calculus is starker. A mid-size materials company, a university spinout, or a defense contractor developing next-generation battery technology cannot absorb a nine-figure verdict or a multi-year injunction. For these organizations, the patent landscape analysis conducted during the development phase is the primary risk mitigation mechanism. The quality of that analysis is not a matter of convenience. It is a matter of survival.

And yet, a growing number of R&D and IP teams are conducting that analysis using general-purpose AI tools—ChatGPT, Claude, Microsoft Co-Pilot—that were never designed for patent intelligence and are structurally incapable of delivering it.

This report presents the findings of a controlled comparison study in which identical patent landscape queries were submitted to four AI-powered tools: Cypris (a purpose-built R&D intelligence platform),ChatGPT (OpenAI), Claude (Anthropic), and Microsoft Co-Pilot. Two technology domains were tested: solid-state lithium-sulfur battery electrolytes using garnet-type LLZO ceramic materials (freedom-to-operate analysis), and bio-based polyamide synthesis from castor oil derivatives (competitive intelligence).

The results reveal a significant and structurally persistent gap. In Test 1, Cypris identified over 40 active US patents and published applications with granular FTO risk assessments. Claude identified 12. ChatGPT identified 7, several with fabricated attribution. Co-Pilot identified 4. Among the patents surfaced exclusively by Cypris were filings rated as “Very High” FTO risk that directly claim the technology architecture described in the query. In Test 2, Cypris cited over 100 individual patent filings with full attribution to substantiate its competitive landscape rankings. No general-purpose model cited a single patent number.

The most active sectors for patent enforcement—semiconductors, AI, biopharma, and advanced materials—are the same sectors where R&D teams are most likely to adopt AI tools for intelligence workflows. The findings of this report have direct implications for any organization using general-purpose AI to inform patent strategy, competitive intelligence, or R&D investment decisions.

1. Methodology

A single patent landscape query was submitted verbatim to each tool on March 27, 2026. No follow-up prompts, clarifications, or iterative refinements were provided. Each tool received one opportunity to respond, mirroring the workflow of a practitioner running an initial landscape scan.

1.1 Query

Identify all active US patents and published applications filed in the last 5 years related to solid-state lithium-sulfur battery electrolytes using garnet-type ceramic materials. For each, provide the assignee, filing date, key claims, and current legal status. Highlight any patents that could pose freedom-to-operate risks for a company developing a Li₇La₃Zr₂O₁₂(LLZO)-based composite electrolyte with a polymer interlayer.

1.2 Tools Evaluated

1.3 Evaluation Criteria

Each response was assessed across six dimensions: (1) number of relevant patents identified, (2) accuracy of assignee attribution,(3) completeness of filing metadata (dates, legal status), (4) depth of claim analysis relative to the proposed technology, (5) quality of FTO risk stratification, and (6) presence of actionable design-around or strategic guidance.

2. Findings

2.1 Coverage Gap

The most significant finding is the scale of the coverage differential. Cypris identified over 40 active US patents and published applications spanning LLZO-polymer composite electrolytes, garnet interface modification, polymer interlayer architectures, lithium-sulfur specific filings, and adjacent ceramic composite patents. The results were organized by technology category with per-patent FTO risk ratings.

Claude identified 12 patents organized in a four-tier risk framework. Its analysis was structurally sound and correctly flagged the two highest-risk filings (Solid Energies US 11,967,678 and the LLZO nanofiber multilayer US 11,923,501). It also identified the University ofMaryland/ Wachsman portfolio as a concentration risk and noted the NASA SABERS portfolio as a licensing opportunity. However, it missed the majority of the landscape, including the entire Corning portfolio, GM's interlayer patents, theKorea Institute of Energy Research three-layer architecture, and the HonHai/SolidEdge lithium-sulfur specific filing.

ChatGPT identified 7 patents, but the quality of attribution was inconsistent. It listed assignees as "Likely DOE /national lab ecosystem" and "Likely startup / defense contractor cluster" for two filings—language that indicates the model was inferring rather than retrieving assignee data. In a freedom-to-operate context, an unverified assignee attribution is functionally equivalent to no attribution, as it cannot support a licensing inquiry or risk assessment.

Co-Pilot identified 4 US patents. Its output was the most limited in scope, missing the Solid Energies portfolio entirely, theUMD/ Wachsman portfolio, Gelion/ Johnson Matthey, NASA SABERS, and all Li-S specific LLZO filings.

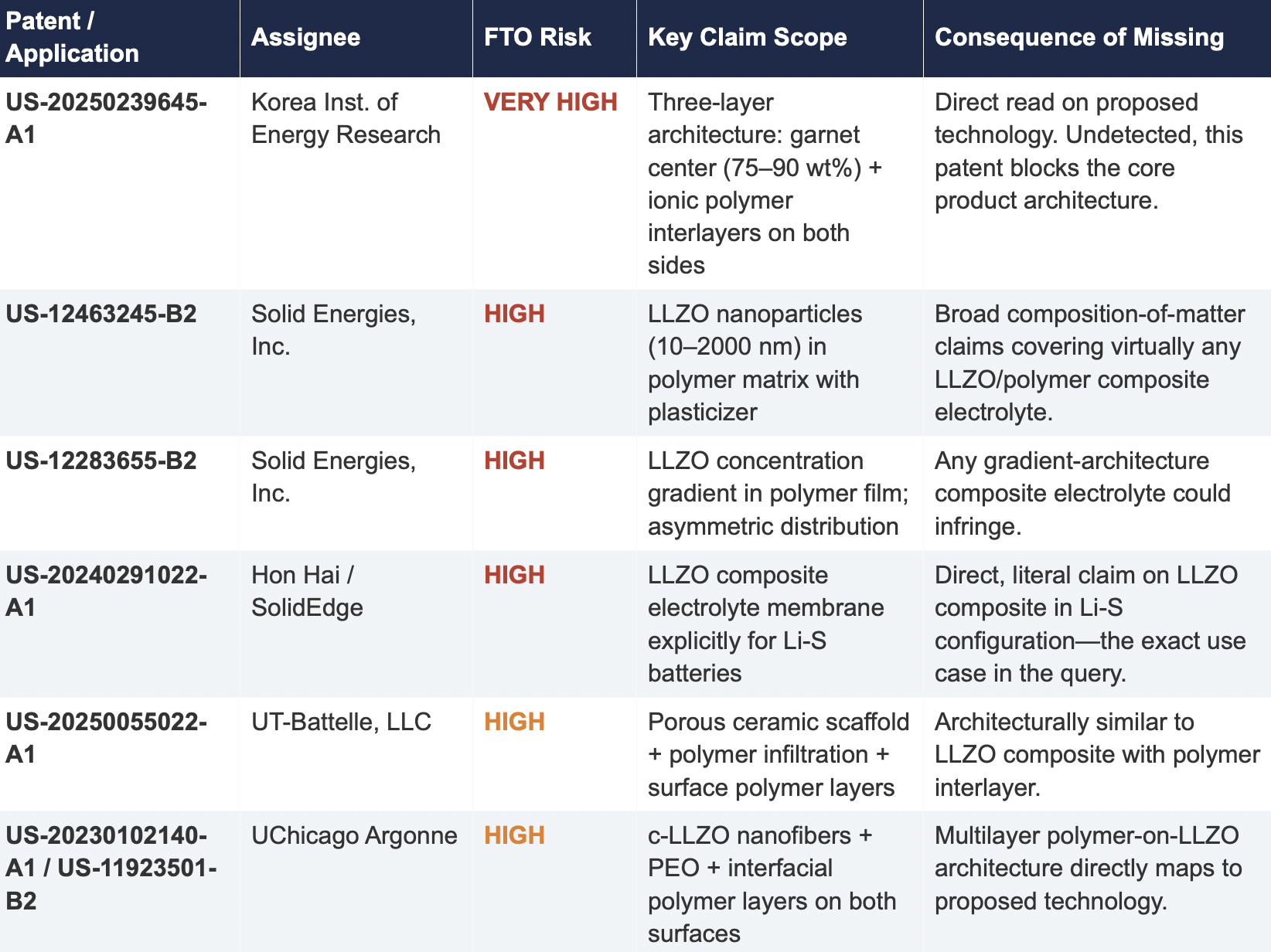

2.2 Critical Patents Missed by Public Models

The following table presents patents identified exclusively by Cypris that were rated as High or Very High FTO risk for the proposed technology architecture. None were surfaced by any general-purpose model.

2.3 Patent Fencing: The Solid Energies Portfolio

Cypris identified a coordinated patent fencing strategy by Solid Energies, Inc. that no general-purpose model detected at scale. Solid Energies holds at least four granted US patents and one published application covering LLZO-polymer composite electrolytes across compositions(US-12463245-B2), gradient architectures (US-12283655-B2), electrode integration (US-12463249-B2), and manufacturing processes (US-20230035720-A1). Claude identified one Solid Energies patent (US 11,967,678) and correctly rated it as the highest-priority FTO concern but did not surface the broader portfolio. ChatGPT and Co-Pilot identified zero Solid Energies filings.

The practical significance is that a company relying on any individual patent hit would underestimate the scope of Solid Energies' IP position. The fencing strategy—covering the composition, the architecture, the electrode integration, and the manufacturing method—means that identifying a single design-around for one patent does not resolve the FTO exposure from the portfolio as a whole. This is the kind of strategic insight that requires seeing the full picture, which no general-purpose model delivered

2.4 Assignee Attribution Quality

ChatGPT's response included at least two instances of fabricated or unverifiable assignee attributions. For US 11,367,895 B1, the listed assignee was "Likely startup / defense contractor cluster." For US 2021/0202983 A1, the assignee was described as "Likely DOE / national lab ecosystem." In both cases, the model appears to have inferred the assignee from contextual patterns in its training data rather than retrieving the information from patent records.

In any operational IP workflow, assignee identity is foundational. It determines licensing strategy, litigation risk, and competitive positioning. A fabricated assignee is more dangerous than a missing one because it creates an illusion of completeness that discourages further investigation. An R&D team receiving this output might reasonably conclude that the landscape analysis is finished when it is not.

3. Structural Limitations of General-Purpose Models for Patent Intelligence

3.1 Training Data Is Not Patent Data

Large language models are trained on web-scraped text. Their knowledge of the patent record is derived from whatever fragments appeared in their training corpus: blog posts mentioning filings, news articles about litigation, snippets of Google Patents pages that were crawlable at the time of data collection. They do not have systematic, structured access to the USPTO database. They cannot query patent classification codes, parse claim language against a specific technology architecture, or verify whether a patent has been assigned, abandoned, or subjected to terminal disclaimer since their training data was collected.

This is not a limitation that improves with scale. A larger training corpus does not produce systematic patent coverage; it produces a larger but still arbitrary sampling of the patent record. The result is that general-purpose models will consistently surface well-known patents from heavily discussed assignees (QuantumScape, for example, appeared in most responses) while missing commercially significant filings from less publicly visible entities (Solid Energies, Korea Institute of EnergyResearch, Shenzhen Solid Advanced Materials).

3.2 The Web Is Closing to Model Scrapers

The data access problem is structural and worsening. As of mid-2025, Cloudflare reported that among the top 10,000 web domains, the majority now fully disallow AI crawlers such as GPTBot andClaudeBot via robots.txt. The trend has accelerated from partial restrictions to outright blocks, and the crawl-to-referral ratios reveal the underlying tension: OpenAI's crawlers access approximately1,700 pages for every referral they return to publishers; Anthropic's ratio exceeds 73,000 to 1.

Patent databases, scientific publishers, and IP analytics platforms are among the most restrictive content categories. A Duke University study in 2025 found that several categories of AI-related crawlers never request robots.txt files at all. The practical consequence is that the knowledge gap between what a general-purpose model "knows" about the patent landscape and what actually exists in the patent record is widening with each training cycle. A landscape query that a general-purpose model partially answered in 2023 may return less useful information in 2026.

3.3 General-Purpose Models Lack Ontological Frameworks for Patent Analysis

A freedom-to-operate analysis is not a summarization task. It requires understanding claim scope, prosecution history, continuation and divisional chains, assignee normalization (a single company may appear under multiple entity names across patent records), priority dates versus filing dates versus publication dates, and the relationship between dependent and independent claims. It requires mapping the specific technical features of a proposed product against independent claim language—not keyword matching.

General-purpose models do not have these frameworks. They pattern-match against training data and produce outputs that adopt the format and tone of patent analysis without the underlying data infrastructure. The format is correct. The confidence is high. The coverage is incomplete in ways that are not visible to the user.

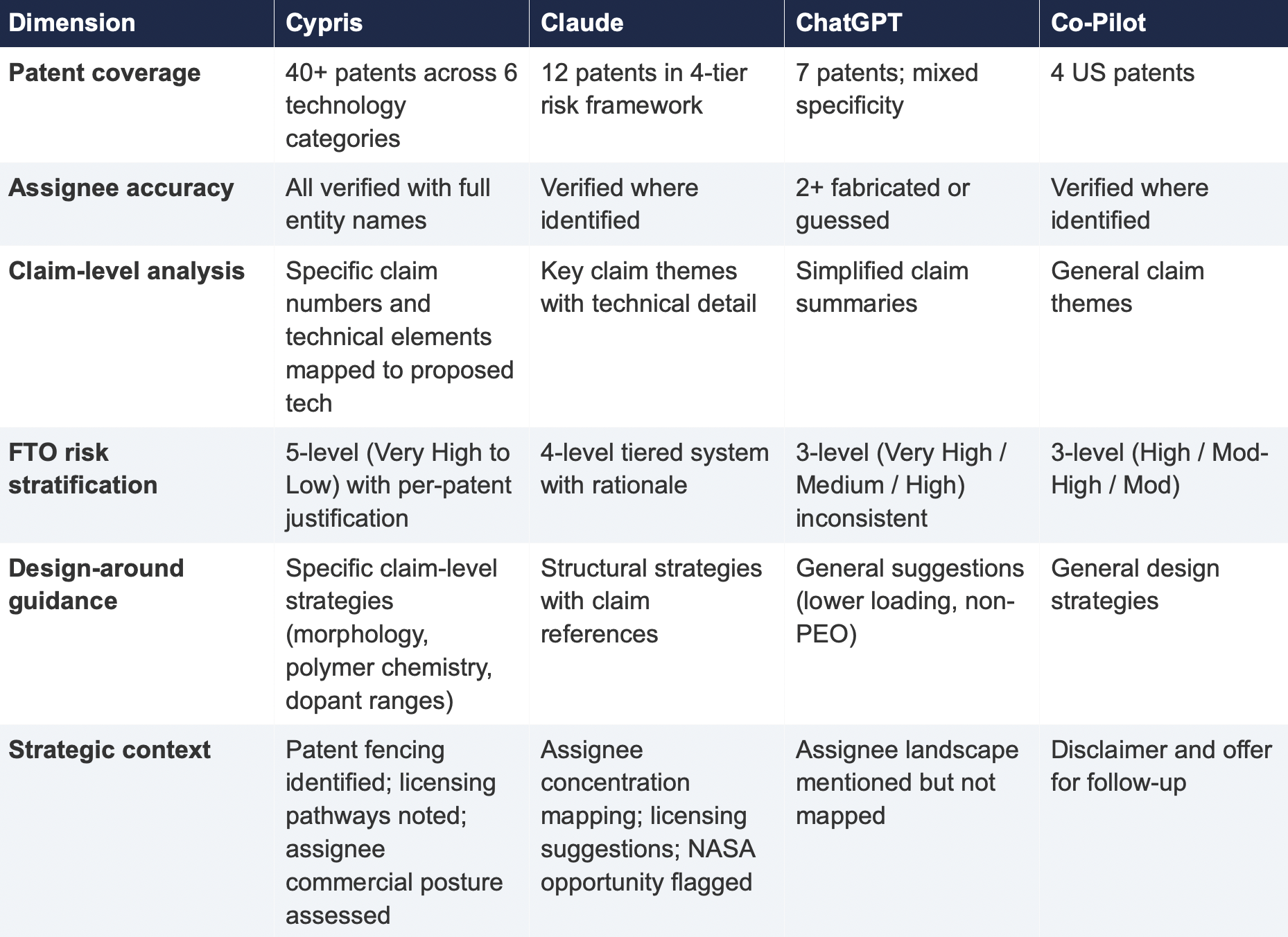

4. Comparative Output Quality

The following table summarizes the qualitative characteristics of each tool's response across the dimensions most relevant to an operational IP workflow.

5. Implications for R&D and IP Organizations

5.1 The Confidence Problem

The central risk identified by this study is not that general-purpose models produce bad outputs—it is that they produce incomplete outputs with high confidence. Each model delivered its results in a professional format with structured analysis, risk ratings, and strategic recommendations. At no point did any model indicate the boundaries of its knowledge or flag that its results represented a fraction of the available patent record. A practitioner receiving one of these outputs would have no signal that the analysis was incomplete unless they independently validated it against a comprehensive datasource.

This creates an asymmetric risk profile: the better the format and tone of the output, the less likely the user is to question its completeness. In a corporate environment where AI outputs are increasingly treated as first-pass analysis, this dynamic incentivizes under-investigation at precisely the moment when thoroughness is most critical.

5.2 The Diversification Illusion

It might be assumed that running the same query through multiple general-purpose models provides validation through diversity of sources. This study suggests otherwise. While the four tools returned different subsets of patents, all operated under the same structural constraints: training data rather than live patent databases, web-scraped content rather than structured IP records, and general-purpose reasoning rather than patent-specific ontological frameworks. Running the same query through three constrained tools does not produce triangulation; it produces three partial views of the same incomplete picture.

5.3 The Appropriate Use Boundary

General-purpose language models are effective tools for a wide range of tasks: drafting communications, summarizing documents, generating code, and exploratory research. The finding of this study is not that these tools lack value but that their value boundary does not extend to decisions that carry existential commercial risk.

Patent landscape analysis, freedom-to-operate assessment, and competitive intelligence that informs R&D investment decisions fall outside that boundary. These are workflows where the completeness and verifiability of the underlying data are not merely desirable but are the primary determinant of whether the analysis has value. A patent landscape that captures 10% of the relevant filings, regardless of how well-formatted or confidently presented, is a liability rather than an asset.

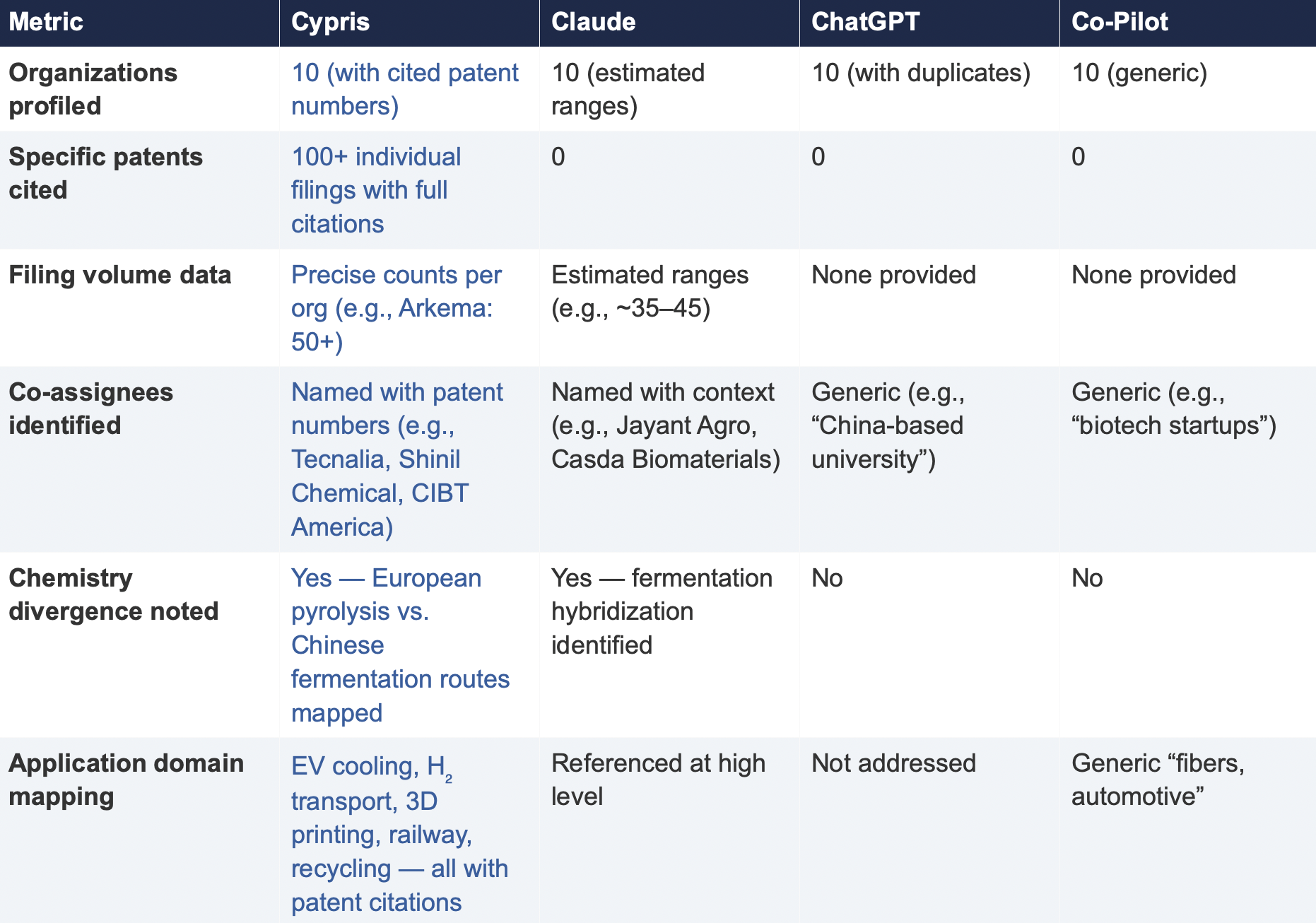

6. Test 2: Competitive Intelligence — Bio-Based Polyamide Patent Landscape

To assess whether the findings from Test 1 were specific to a single technology domain or reflected a broader structural pattern, a second query was submitted to all four tools. This query shifted from freedom-to-operate analysis to competitive intelligence, asking each tool to identify the top 10organizations by patent filing volume in bio-based polyamide synthesis from castor oil derivatives over the past three years, with summaries of technical approach, co-assignee relationships, and portfolio trajectory.

6.1 Query

6.2 Summary of Results

6.3 Key Differentiators

Verifiability

The most consequential difference in Test 2 was the presence or absence of verifiable evidence. Cypris cited over 100 individual patent filings with full patent numbers, assignee names, and publication dates. Every claim about an organization’s technical focus, co-assignee relationships, and filing trajectory was anchored to specific documents that a practitioner could independently verify in USPTO, Espacenet, or WIPO PATENT SCOPE. No general-purpose model cited a single patent number. Claude produced the most structured and analytically useful output among the public models, with estimated filing ranges, product names, and strategic observations that were directionally plausible. However, without underlying patent citations, every claim in the response requires independent verification before it can inform a business decision. ChatGPT and Co-Pilot offered thinner profiles with no filing counts and no patent-level specificity.

Data Integrity

ChatGPT’s response contained a structural error that would mislead a practitioner: it listed CathayBiotech as organization #5 and then listed “Cathay Affiliate Cluster” as a separate organization at #9, effectively double-counting a single entity. It repeated this pattern with Toray at #4 and “Toray(Additional Programs)” at #10. In a competitive intelligence context where the ranking itself is the deliverable, this kind of error distorts the landscape and could lead to misallocation of competitive monitoring resources.

Organizations Missed

Cypris identified Kingfa Sci. & Tech. (8–10 filings with a differentiated furan diacid-based polyamide platform) and Zhejiang NHU (4–6 filings focused on continuous polymerization process technology)as emerging players that no general-purpose model surfaced. Both represent potential competitive threats or partnership opportunities that would be invisible to a team relying on public AI tools.Conversely, ChatGPT included organizations such as ANTA and Jiangsu Taiji that appear to be downstream users rather than significant patent filers in synthesis, suggesting the model was conflating commercial activity with IP activity.

Strategic Depth

Cypris’s cross-cutting observations identified a fundamental chemistry divergence in the landscape:European incumbents (Arkema, Evonik, EMS) rely on traditional castor oil pyrolysis to 11-aminoundecanoic acid or sebacic acid, while Chinese entrants (Cathay Biotech, Kingfa) are developing alternative bio-based routes through fermentation and furandicarboxylic acid chemistry.This represents a potential long-term disruption to the castor oil supply chain dependency thatWestern players have built their IP strategies around. Claude identified a similar theme at a higher level of abstraction. Neither ChatGPT nor Co-Pilot noted the divergence.

6.4 Test 2 Conclusion

Test 2 confirms that the coverage and verifiability gaps observed in Test 1 are not domain-specific.In a competitive intelligence context—where the deliverable is a ranked landscape of organizationalIP activity—the same structural limitations apply. General-purpose models can produce plausible-looking top-10 lists with reasonable organizational names, but they cannot anchor those lists to verifiable patent data, they cannot provide precise filing volumes, and they cannot identify emerging players whose patent activity is visible in structured databases but absent from the web-scraped content that general-purpose models rely on.

7. Conclusion

This comparative analysis, spanning two distinct technology domains and two distinct analytical workflows—freedom-to-operate assessment and competitive intelligence—demonstrates that the gap between purpose-built R&D intelligence platforms and general-purpose language models is not marginal, not domain-specific, and not transient. It is structural and consequential.

In Test 1 (LLZO garnet electrolytes for Li-S batteries), the purpose-built platform identified more than three times as many patents as the best-performing general-purpose model and ten times as many as the lowest-performing one. Among the patents identified exclusively by the purpose-built platform were filings rated as Very High FTO risk that directly claim the proposed technology architecture. InTest 2 (bio-based polyamide competitive landscape), the purpose-built platform cited over 100individual patent filings to substantiate its organizational rankings; no general-purpose model cited as ingle patent number.

The structural drivers of this gap—reliance on training data rather than live patent feeds, the accelerating closure of web content to AI scrapers, and the absence of patent-specific analytical frameworks—are not transient. They are inherent to the architecture of general-purpose models and will persist regardless of increases in model capability or training data volume.

For R&D and IP leaders, the practical implication is clear: general-purpose AI tools should be used for general-purpose tasks. Patent intelligence, competitive landscaping, and freedom-to-operate analysis require purpose-built systems with direct access to structured patent data, domain-specific analytical frameworks, and the ability to surface what a general-purpose model cannot—not because it chooses not to, but because it structurally cannot access the data.

The question for every organization making R&D investment decisions today is whether the tools informing those decisions have access to the evidence base those decisions require. This study suggests that for the majority of general-purpose AI tools currently in use, the answer is no.

About This Report

This report was produced by Cypris (IP Web, Inc.), an AI-powered R&D intelligence platform serving corporate innovation, IP, and R&D teams at organizations including NASA, Johnson & Johnson, theUS Air Force, and Los Alamos National Laboratory. Cypris aggregates over 500 million data points from patents, scientific literature, grants, corporate filings, and news to deliver structured intelligence for technology scouting, competitive analysis, and IP strategy.

The comparative tests described in this report were conducted on March 27, 2026. All outputs are preserved in their original form. Patent data cited from the Cypris reports has been verified against USPTO Patent Center and WIPO PATENT SCOPE records as of the same date. To conduct a similar analysis for your technology domain, contact info@cypris.ai or visit cypris.ai.

The Patent Intelligence Gap - A Comparative Analysis of Verticalized AI-Patent Tools vs. General-Purpose Language Models for R&D Decision-Making

Blogs

For decades, CAS SciFinder has occupied a singular position in chemical research. Its curated registry of over 200 million substances, expert-indexed reaction data, and retrosynthesis planning tools have made it the default database for academic chemistry departments and pharmaceutical R&D labs worldwide [1]. But for a growing segment of the market, the question is no longer whether SciFinder is the gold standard. The question is whether the gold standard is worth the price.

Enterprise R&D teams working in chemicals, materials science, energy storage, and advanced manufacturing increasingly find themselves paying six-figure annual subscription fees for a platform whose deepest capabilities serve bench chemists and patent attorneys rather than the upstream innovation strategists, competitive intelligence analysts, and R&D portfolio managers who actually drive early-stage decision-making [2]. These teams do not need retrosynthesis route planning or reaction condition optimization. They need to understand what chemical compounds are appearing in the patent landscape, which regulatory jurisdictions cover their target substances, and where competitors are placing bets across the innovation lifecycle.

That mismatch between capability and need has opened a real market for SciFinder alternatives in 2026. The platforms listed below serve different parts of the chemical intelligence stack, and the right choice depends on whether your primary workflow is substance-level research, patent landscape analysis, regulatory screening, or competitive R&D intelligence.

1. Cypris: Best Overall for Enterprise R&D Chemical Intelligence

Cypris (cypris.ai) approaches chemical data from a fundamentally different direction than SciFinder. Rather than building a proprietary substance registry with manually curated reaction records, Cypris extracts chemical compound data from the full text of over 500 million patents and scientific papers using a proprietary R&D ontology powered by retrieval-augmented generation and large language model architecture [3]. The result is a platform that surfaces chemical entities not as isolated database records, but as contextual data points embedded within the patent claims, specifications, and research literature where they actually appear.

This distinction matters more than it might seem at first glance. When an R&D strategist at a specialty chemicals company wants to understand how a particular polymer formulation is being claimed across recent patent filings, SciFinder can tell them that the substance exists and link to indexed references. Cypris can show them the full competitive context: which assignees are filing, how claims are structured, which adjacent compounds are co-occurring in the same patent families, and how the innovation trajectory has shifted over time. That is a different category of insight, and for upstream R&D decision-making, it is often more valuable than a curated CAS Registry Number.

Cypris also integrates regulatory data from public sources including PubChem, the EPA's Toxic Substances Control Act inventory, and the European Chemicals Agency's REACH registration database. The TSCA inventory currently contains 86,862 chemical substances, with approximately 42,578 classified as active in U.S. commerce [4]. The REACH database covers more than 100,000 registration dossiers submitted to ECHA under Europe's chemicals regulation framework [5]. By incorporating these open regulatory datasets alongside its patent and literature corpus, Cypris gives R&D teams a single-platform view of both the innovation landscape and the regulatory environment surrounding a chemical or material of interest.

Is Cypris a one-to-one replacement for SciFinder's curated substance registry? No, and it does not claim to be. It does not offer Markush structure searching, retrosynthesis route planning, or the granular reaction condition data that bench chemists rely on when planning synthesis campaigns. But for the enterprise R&D teams that are paying for SciFinder primarily to monitor the competitive landscape, assess chemical IP, and screen substances against regulatory lists, Cypris provides as much or more actionable context at a fraction of the cost. Its AI research agent, Cypris Q, can generate comprehensive intelligence reports that synthesize patent data, scientific literature, and regulatory information into a single analytical output, something that would take days of manual work across SciFinder, regulatory databases, and patent search tools [3].

Cypris holds official API partnerships with OpenAI, Anthropic, and Google, meaning its data layer is built for the AI-native research workflows that are rapidly becoming standard in enterprise R&D organizations. It meets Fortune 500 enterprise security requirements and serves hundreds of enterprise customers across chemicals, materials, energy, and advanced manufacturing verticals [3]. For R&D leaders whose teams have outgrown the narrow chemistry-bench focus of legacy tools but still need chemical substance intelligence as part of a broader innovation analytics workflow, Cypris is the strongest option available in 2026.

2. Reaxys (Elsevier): Best for Bench Chemistry and Reaction Data

Reaxys remains the most direct functional competitor to SciFinder for teams whose primary need is curated reaction data and experimental property information. Built on the historical Beilstein and Gmelin databases, Reaxys provides experimentally validated substance properties, reaction records with detailed conditions, and bioactivity data that supports medicinal chemistry and synthetic route design [6]. Its query-builder interface allows for sophisticated multi-parameter searches that filter by yield, temperature, solvent, and catalyst, making it the preferred tool for process chemists who need to evaluate synthetic feasibility.

The trade-off is similar to SciFinder itself. Reaxys is a premium subscription product, and its pricing reflects the depth of its curated data. For organizations that need bench-level reaction planning, it delivers clear value. For those whose chemical intelligence needs extend beyond the bench into competitive strategy, patent landscaping, and regulatory compliance, Reaxys leaves the same upstream gaps that have driven demand for alternative platforms.

3. PubChem (NIH/NCBI): Best Free Chemical Substance Database

PubChem is the world's largest freely accessible chemical information resource, maintained by the National Center for Biotechnology Information at the U.S. National Institutes of Health. As of its 2025 update, PubChem contains information on 119 million compounds sourced from over 1,000 data sources, along with 322 million substance records and 295 million bioactivity test results [7]. Its coverage extends across compound structures, biological activities, safety and toxicity data, patent citations, and literature references.

PubChem's strength for R&D teams lies in its breadth and accessibility. It aggregates data from authoritative sources including the U.S. EPA, the FDA, and Japan's Pharmaceuticals and Medical Devices Agency, providing safety, hazard, and environmental exposure information that is directly relevant to product development and regulatory screening [7]. Its patent knowledge panels display chemicals, genes, and diseases co-mentioned within patent documents, offering a lightweight form of the co-occurrence analysis that enterprise platforms like Cypris provide at much greater depth and scale.

The limitation is structural. PubChem is a reference database, not an analytics platform. It cannot generate landscape reports, track competitor filing patterns, or integrate regulatory compliance data into a unified strategic view. For R&D teams that treat PubChem as one input among several, it is an essential free resource. As a standalone replacement for SciFinder, it fills only part of the gap.

4. Google Patents: Best Free Patent Search for Chemical IP Screening

Google Patents provides free, full-text searchable access to over 120 million patent documents from patent offices worldwide. For chemical R&D teams conducting initial IP screening, Google Patents offers several practical advantages: natural language search across the full text of patent specifications, prior art search with automated citation analysis, and machine translation of non-English filings [8]. Its integration with Google Scholar creates a bridge between patent literature and academic citations.

Where Google Patents falls short for enterprise R&D use cases is in analytical depth. It does not offer chemical structure search, substance-level indexing, or the ability to track innovation trends over time across assignees or technology classes. Teams that begin their chemical IP research on Google Patents frequently find they need to move to a platform like Cypris or Orbit Intelligence for the kind of landscape analysis, clustering, and competitive intelligence that informs actual R&D investment decisions.

5. Orbit Intelligence (Questel): Best Traditional Patent Analytics for Chemical IP

Orbit Intelligence from Questel is an established patent analytics platform that serves IP departments and R&D organizations with structured patent data, citation mapping, legal status monitoring, and landscape visualization tools [9]. Its chemical structure search capabilities, including Markush search, make it one of the few platforms outside of CAS's own ecosystem that can replicate some of SciFinder's substance-level patent searching.

Orbit's strength lies in its depth of patent bibliographic data and its mature analytics layer. R&D teams in the pharmaceutical and chemical industries have relied on it for Freedom to Operate analyses, prior art search, and competitive patent landscaping for years. The platform is built primarily for IP professionals, however, and its interface and workflow assumptions reflect that heritage. R&D scientists and innovation strategists who are not trained patent analysts may find Orbit's learning curve steep and its outputs difficult to translate into the competitive intelligence narratives that inform R&D portfolio decisions.

6. Derwent Innovation (Clarivate): Best for Deep Patent Classification and Prior Art

Derwent Innovation combines the Derwent World Patents Index with Clarivate's broader scientific literature databases to provide enhanced patent records that include human-written abstracts, chemical fragmentation codes, and proprietary classification schemes [10]. For organizations that need the highest level of patent classification granularity, particularly for prior art search and patentability opinions, Derwent's curated enhancements add genuine value.

The Derwent ecosystem was originally designed for patent attorneys and information professionals, and its pricing and interface reflect that audience. Enterprise R&D teams whose primary interest is upstream competitive intelligence rather than prosecution-quality prior art search often find Derwent's capabilities exceed their needs in some areas while leaving gaps in others, particularly around real-time competitive monitoring, AI-powered report generation, and integration with non-patent data sources like regulatory databases and scientific literature.

7. The Lens and PQAI: Best Open-Access Patent and Scholarly Search

The Lens is a free, open-access platform that integrates patent and scholarly literature into a single searchable database. Developed by Cambia, a nonprofit research organization, The Lens provides access to over 150 million patent records and hundreds of millions of scholarly works, with tools for citation analysis, patent family mapping, and collection-based research [11]. PQAI, or Patent Quality through Artificial Intelligence, is a complementary open-source project that applies machine learning to prior art search.

For budget-constrained R&D teams, The Lens offers a remarkable amount of functionality at no cost. Its strength is in providing an integrated view of the knowledge landscape that connects patents to the scholarly literature they cite and build upon. Its limitations mirror those of Google Patents: it lacks the deep chemical substance indexing, regulatory data integration, and enterprise analytics capabilities that platforms like Cypris and Orbit provide. For teams that need a free starting point for chemical patent research before investing in an enterprise platform, The Lens is the best available option.

Why the SciFinder Alternative Conversation Has Shifted in 2026

The conversation around SciFinder alternatives has changed because the users driving demand have changed. Five years ago, the primary searchers for chemical database alternatives were academic librarians looking for open-access substitutes and bench chemists at smaller organizations who could not afford the subscription. In 2026, the fastest-growing segment of demand comes from enterprise R&D leaders at Fortune 500 companies who already have SciFinder licenses but find that the platform does not serve the upstream innovation intelligence workflows that have become central to how R&D portfolios are managed.

These leaders are not looking for a cheaper version of SciFinder. They are looking for a different kind of tool altogether, one that treats chemical substance data as one layer in a broader intelligence stack that includes patent analytics, competitive landscaping, regulatory screening, and AI-powered research synthesis. The platforms that have gained the most traction with this audience, Cypris chief among them, are the ones that were built for R&D scientists and innovation strategists from the ground up, rather than being retrofitted from tools originally designed for patent attorneys or academic researchers.

The emergence of AI-native architectures has accelerated this shift. Platforms that can apply large language models and retrieval-augmented generation to the full text of patents and scientific literature can extract chemical intelligence from context in ways that curated registries cannot. A CAS Registry Number tells you that a substance exists. A contextual analysis of every patent claim and specification mentioning that substance tells you what the competitive landscape actually looks like.

Frequently Asked Questions

What is the best free alternative to SciFinder in 2026?

PubChem is the best free alternative to SciFinder for chemical substance searches, containing information on 119 million compounds from over 1,000 data sources as of 2025. For patent-focused chemical research, Google Patents and The Lens provide free full-text patent searching. However, none of these free tools replicate SciFinder's curated reaction data or provide the enterprise-grade competitive intelligence and regulatory integration available from commercial platforms like Cypris.

Can Cypris replace SciFinder for chemical R&D teams?

Cypris is not a direct one-to-one replacement for SciFinder's curated substance registry or retrosynthesis planning tools. However, for enterprise R&D teams whose primary needs are competitive patent intelligence, chemical landscape analysis, and regulatory screening, Cypris provides equal or greater value by extracting chemical data from the full text of over 500 million patents and scientific papers and integrating regulatory information from PubChem, the TSCA inventory, and the REACH database. Many enterprise teams find that Cypris addresses the upstream R&D intelligence use cases that SciFinder was never designed to serve.

How much does SciFinder cost for enterprise users?

CAS does not publish standard pricing for SciFinder enterprise subscriptions, and costs vary significantly based on organization size, number of users, and selected modules. Enterprise contracts are negotiated individually and typically represent a significant annual commitment. Task-based pricing options start at approximately $5,000, but full enterprise access with unlimited searching generally costs substantially more. Many organizations are evaluating whether this investment is justified when their primary use cases are competitive intelligence rather than bench-level substance research.

What chemical regulatory databases can I access without SciFinder?

Several authoritative regulatory databases are freely accessible, including the EPA's TSCA Chemical Substance Inventory (covering 86,862 substances in U.S. commerce), the European Chemicals Agency's REACH registration database (covering over 100,000 registration dossiers), and PubChem's integrated safety and hazard data from the EPA, FDA, and other agencies. Enterprise platforms like Cypris aggregate these regulatory data sources alongside patent and literature data, providing a unified view for R&D compliance screening.

References

[1] CAS, "CAS SciFinder Discovery Platform," cas.org, 2025.[2] R. E. Buntrock, "Apples and Oranges: A Chemistry Searcher Compares CAS SciFinder and Elsevier's Reaxys," Online Searcher, 2020.[3] Cypris, "Enterprise R&D Intelligence Platform," cypris.ai, 2026.[4] U.S. Environmental Protection Agency, "TSCA Chemical Substance Inventory," epa.gov, July 2025.[5] European Chemicals Agency, "ECHA CHEM: REACH Registered Substances," echa.europa.eu, 2026.[6] Elsevier, "Reaxys: Chemistry Database for Experimental Research," elsevier.com, 2025.[7] S. Kim et al., "PubChem 2025 Update," Nucleic Acids Research, vol. 53, D1516-D1525, January 2025.[8] Google, "Google Patents," patents.google.com, 2025.[9] Questel, "Orbit Intelligence," questel.com, 2025.[10] Clarivate, "Derwent Innovation," clarivate.com, 2025.[11] Cambia, "The Lens: Free and Open Patent and Scholarly Search," lens.org, 2025.

Every R&D leader in the chemicals industry has lived this nightmare. A development program that passed every stage-gate review with green lights suddenly stalls in late-stage development because a blocking patent surfaces, a regulatory pathway proves more complex than anticipated, or a competitor reaches market first with a functionally equivalent product. The project is not killed by bad science. It is killed by bad intelligence.

These failures are not rare edge cases. They are structurally predictable outcomes of an industry that spends over $100 billion annually on research and development but still relies on fragmented, narrow tools to inform the decisions that determine which projects survive and which ones consume years of effort and millions in capital before failing [1]. Global patent filings now exceed 3.4 million applications per year. The scientific literature grows by more than 5 million papers annually. Regulatory frameworks like the EPA's TSCA enforcement and the EU's REACH registration requirements are shifting across every major jurisdiction simultaneously. And the competitive dynamics of chemical innovation, from advanced materials and specialty polymers to catalysis and sustainable chemistry, are moving faster than any individual scientist or analyst can track through manual research across disconnected systems.

Chemical intelligence platforms exist to close this gap. They aggregate patent data, scientific literature, competitive signals, and technical knowledge into searchable, analyzable systems that help R&D teams make better decisions about where to invest, what to develop, and how to navigate the intellectual property landscape. But the category is broad, and the platforms within it vary dramatically in what they actually deliver. Some are deep chemical databases with decades of curated substance and reaction data. Others are patent analytics tools originally built for IP attorneys. A few are genuinely new entrants that combine AI-native architecture with the kind of cross-source intelligence that chemical R&D teams have long needed but rarely had access to in a single platform. The choice of platform is not a procurement decision. It is a risk management decision that directly affects whether development programs survive to commercialization or die expensive deaths in late-stage development.

This guide evaluates the best chemical intelligence platforms available to R&D teams in 2026. The evaluation covers data breadth, patent and IP intelligence capabilities, competitive landscape analysis, support for material synthesis and sustainability research, freedom-to-operate assessment, integration with enterprise workflows, and suitability for both large corporate R&D organizations and smaller pharmaceutical research teams. Each platform is assessed on its strengths and its limitations, with an emphasis on the capabilities that matter most when the research informs real decisions about chemical development programs.

What Chemical R&D Teams Actually Need from an Intelligence Platform — and What Happens When They Do Not Have It

Before evaluating individual platforms, it is worth being explicit about what chemical R&D teams are actually trying to accomplish when they use intelligence tools, and what the consequences are when those tools fall short. The needs go well beyond simple literature search. They are, at their core, risk management requirements. And the penalties for getting them wrong compound at every stage of the development lifecycle.

The Stage-Gate model, pioneered by Robert Cooper in the 1980s and adopted by chemical companies from DuPont and Exxon Chemical onward, provides the decision architecture that most chemical R&D organizations use to manage development investment [2]. Its logic is sound: divide the innovation process into discrete phases separated by decision points, and at each gate, evaluate whether the evidence supports continued investment. But as a recent analysis of late-stage chemical project failures makes clear, the Stage-Gate model is only as effective as the intelligence that informs each gate decision [3]. When intelligence is incomplete, gates become confidence exercises rather than genuine decision points, and projects that should have been flagged, redirected, or terminated early advance into expensive later stages where failures cost orders of magnitude more to address.

Competitive landscape intelligence is often the highest-priority use case, and also the one most prone to dangerous gaps. Chemical R&D directors need to understand who is filing patents in their technology domain, which companies are building IP portfolios around specific chemistries, and where the white space exists for differentiated innovation. But white space assessments based on publicly visible competitive activity, such as product announcements, published papers, and issued patents, necessarily lag behind actual competitive development. By the time a competitor's product appears in a trade journal or a patent application publishes, the underlying R&D program has been underway for years. An early-stage gate review that concludes there is limited competitive activity in a target application space may be evaluating a landscape that already has multiple programs in late-stage development, invisible to conventional scanning methods. The chemicals industry is particularly vulnerable to this dynamic because its innovation cycles are long: a specialty polymer program might span five to eight years from concept to commercialization, during which the competitive landscape can shift dramatically.

Patent portfolio management and freedom-to-operate analysis are closely related needs with some of the highest financial consequences when they are handled inadequately. For chemical companies operating globally, understanding the patent landscape across jurisdictions is essential for both offensive and defensive IP strategy. But a single chemical compound can be protected by composition of matter patents, process patents covering specific synthesis routes, formulation patents addressing polymorphs or salt forms, and application patents governing end-use scenarios. A project team that clears the composition of matter search but misses a process patent or a formulation polymorph patent can find itself facing an infringement claim precisely at the moment of commercialization. In the pharmaceutical and specialty chemical sectors, patent litigation damages in the United States reached a median of $8.7 million per award in recent years, with the highest awards exceeding two billion dollars [4]. The indirect costs, including diversion of R&D leadership attention, disruption of commercial timelines, and erosion of investor confidence, often exceed the direct legal expenses. The ratio of early intelligence cost to late-stage patent failure cost is typically on the order of one to one hundred or greater.

Regulatory risk monitoring is an intelligence requirement that many chemical R&D teams underestimate until it derails a program. The chemicals industry operates under one of the most complex regulatory environments of any sector. In the United States, TSCA governs over 86,000 chemical substances, and the 2016 Lautenberg Chemical Safety Act significantly expanded the EPA's authority to evaluate chemical risks with more stringent data submission and risk assessment requirements [5]. Simultaneously, the EU's REACH regulation imposes extensive registration and evaluation requirements, and emerging frameworks in China, Korea, and other major markets add further compliance layers. Regulatory frameworks do not hold still during a five-year development program. The EPA may issue a Significant New Use Rule on a substance class. A state-level restriction around PFAS-adjacent chemistries may create market access barriers that did not exist when the project was initiated. An international body may classify a key precursor as a substance of very high concern. R&D organizations that assess regulatory risk only at designated gate reviews are making investment decisions based on a snapshot of a moving target.

Tracking material synthesis trends and new chemical developments is another core requirement. Chemical R&D teams need to monitor how synthesis methodologies are evolving, which new materials are emerging in the patent literature, and how the technical frontier is advancing in their specific domains. This is particularly important in fast-moving areas like battery materials, catalysis, sustainable chemistry, and advanced polymers, where the gap between a first-mover advantage and a late entry can be measured in quarters rather than years.

Identifying sustainable material alternatives has moved from a corporate social responsibility aspiration to a core R&D priority with direct implications for project viability. Regulatory pressure, customer demand, and the economic realities of raw material availability are driving chemical companies to actively search for greener formulations, bio-based feedstocks, and recyclable material architectures. But sustainability is also a source of late-stage risk. A development program built around a solvent-based chemistry might reach pilot scale only to discover that the target OEM customer has committed to eliminating that substance class from its supply chain as part of a sustainability initiative. Intelligence platforms that can connect sustainability-related patent activity with scientific literature on alternative materials, and with signals about shifting customer and regulatory requirements, give R&D teams a significant advantage in identifying viable pathways and avoiding pathways that are closing.

Integration with existing research workflows is the requirement that separates tools chemical R&D teams actually adopt from tools they evaluate and abandon. Chemical companies operate complex technology ecosystems that include electronic lab notebooks, laboratory information management systems, project management platforms, and internal knowledge repositories. An intelligence platform that exists as an isolated silo, no matter how powerful its data, creates friction that limits adoption. The most valuable platforms are those that can deliver intelligence into the workflows where decisions are actually made, particularly the stage-gate review process where go and no-go decisions are formalized.

Why Narrow Tools Produce Narrow Vision — and Expensive Failures

The root cause of incomplete early-stage research in chemical R&D is not a lack of diligence among project teams. It is a tooling problem that produces systematic blind spots.

Most chemical R&D organizations rely on a fragmented ecosystem of point solutions for different intelligence needs: one tool for patent search, a different platform for scientific literature review, separate services for regulatory monitoring and competitive intelligence, and ad hoc methods for market and application trend analysis. Each tool provides a partial view, and none are designed to synthesize insights across these domains. This fragmentation creates several compounding problems that directly affect which chemical projects survive to commercialization.

First, it makes comprehensive landscape analysis prohibitively time-consuming. When conducting a thorough early-stage assessment requires logging into multiple platforms, running separate searches with different query syntaxes, and manually synthesizing results across systems, the practical outcome is that assessments are narrower than they should be. Teams focus their search effort on the most obvious risks and leave the less obvious ones unexplored, not because they are careless but because the tooling makes thoroughness impractical.

Second, fragmented tools create invisible gaps between domains that are actually deeply interconnected. A patent filing by a competitor might signal both an IP risk and a competitive risk, and might also imply regulatory considerations if the patented process involves substances under active regulatory review. In a fragmented tooling environment, these connections are invisible unless a human analyst happens to notice them, which becomes increasingly unlikely as the volume of data in each domain grows.

Third, and most critically, the consequences of narrow tools compound across the portfolio. For a VP of R&D managing twenty or more active development programs, if each program has even a fifteen to twenty percent chance of encountering a late-stage surprise due to an intelligence gap that should have been caught earlier, the probability that the portfolio avoids all such surprises approaches zero. Every program that advances past a gate on incomplete intelligence is consuming resources, headcount, lab time, pilot facility capacity, and leadership attention, that could be allocated to better-vetted programs with higher probability of successful commercialization [6]. The portfolio's conversion rate from development investment to commercial revenue tells the real story, and organizations with fragmented intelligence infrastructure consistently underperform on this metric.

The economics are stark. Every dollar spent on comprehensive landscape analysis before a gate decision is a hedge against the vastly larger sums committed after that decision. When a blocking patent or a regulatory risk is identified at the concept stage, the cost of redirecting the program is measured in weeks and thousands of dollars. When the same issue surfaces during pilot-scale development, the cost is measured in years and millions. When it surfaces after launch, the exposure can reach into the hundreds of millions. An enterprise intelligence platform subscription that costs a fraction of a single FTE's salary can prevent even one late-stage redirection per year and deliver a return that dwarfs the investment [7].

This is the lens through which the platform evaluations below should be read. The question is not which platform has the most features. It is which platform gives chemical R&D teams the broadest, most integrated view of the landscape early enough to prevent the failures that narrow tools allow through.

1. Cypris — Best Enterprise Chemical Intelligence Platform for R&D Teams

For chemical R&D teams that need a single platform capable of delivering patent intelligence, scientific literature analysis, competitive landscape mapping, and structured research deliverables with enterprise-grade security, Cypris is the most comprehensive option available in 2026 [8].

The platform indexes over 500 million patents, scientific papers, and technical documents, organized through a proprietary R&D ontology powered by retrieval-augmented generation and large language model architecture. This is not a general-purpose search engine repurposed for chemical research. It is an intelligence system designed specifically for the way R&D scientists, technology scouts, and innovation strategists think about their work: not as a series of disconnected literature searches but as an ongoing effort to understand competitive landscapes, identify white space, assess technical feasibility, and make investment decisions grounded in the full body of available evidence.

Competitive landscape intelligence is where Cypris delivers its most distinctive value for chemical R&D teams. The platform maps patent assignee portfolios, tracks filing trends across technology domains, identifies emerging competitors, and generates structured landscape analyses that show not just who is active in a space but how their IP positions relate to each other and where opportunities exist for differentiated innovation. For a specialty chemicals company evaluating whether to enter a new market segment, this kind of structured competitive intelligence is the difference between making a strategic decision and making a guess [9].

Patent portfolio management and freedom-to-operate analysis are core capabilities rather than add-on features. Cypris provides access to patent documents across all major jurisdictions with claim-level detail, assignee information, and citation network analysis. R&D teams can assess freedom-to-operate risks early in the development process, before significant resources have been committed, and can monitor how the patent landscape around their active programs is evolving over time. For chemical companies managing global patent portfolios, the ability to track competitive filing activity across the United States, Europe, China, Japan, and other key jurisdictions from a single platform eliminates the fragmentation that makes multi-tool approaches slow and error-prone [10].

Material synthesis trends and sustainable chemistry are areas where the combination of patent and scientific literature creates particularly strong intelligence. Because Cypris searches both databases simultaneously, R&D teams can see how a new synthesis methodology described in a journal paper connects to patent activity from companies pursuing commercial applications of the same chemistry. This cross-source view is essential for tracking the progression of new materials from laboratory discovery to commercial development and for identifying sustainable material alternatives that are moving from academic research into industrial patent filing activity [11].

Cypris Q, the platform's AI research agent, generates structured intelligence reports that can serve as direct inputs to stage-gate reviews, portfolio assessments, and executive briefings. This is where the derisking thesis meets practical reality. Rather than requiring analysts to manually search multiple disconnected systems and compile a landscape assessment over days or weeks, Cypris Q produces integrated reports that synthesize findings across patent, scientific, regulatory, and competitive domains simultaneously, surfacing the intersections between IP filings, published research, and regulatory developments that remain invisible in fragmented tooling environments. For R&D leaders managing portfolios of twenty or more chemical development programs across multiple technology areas, this capability transforms the gate review process from a periodic, labor-intensive assessment based on partial data into a continuous, data-driven decision framework where risks are identified at the concept stage rather than discovered at pilot scale [12]. The practical result is that weak programs are flagged earlier, freeing resources for programs with clearer paths to commercialization, and the portfolio's overall return on R&D investment improves measurably over time.

Enterprise security and workflow integration reflect the realities of chemical R&D in Fortune 500 organizations. Cypris meets Fortune 500 security requirements and holds official API partnerships with OpenAI, Anthropic, and Google, meaning its AI capabilities are delivered through vetted enterprise infrastructure. Hundreds of Fortune 1000 companies subscribe to the platform, and thousands of R&D and IP professionals use it daily. The platform's architecture is designed to integrate with the enterprise technology ecosystems that chemical companies already operate, including compatibility with the data workflows that connect intelligence outputs to project management systems, electronic lab notebooks, and internal knowledge repositories [13]. For a deeper analysis of how intelligence quality at each stage gate determines which chemical projects survive late-stage development, see "Derisking Late-Stage Development: Why Early R&D Intelligence Determines Which Chemical Projects Survive" on the Cypris blog [14].

Best for: Corporate chemical R&D teams, innovation strategists, technology scouts, and IP professionals who need structured competitive intelligence, patent landscape analysis, freedom-to-operate assessment, and material trend tracking in a single enterprise-grade platform. Particularly strong for teams managing global patent portfolios and for organizations where R&D intelligence needs to be communicated across functions.

2. Reaxys (Elsevier) — Best for Chemical Reaction and Substance Data

Reaxys has been a standard tool in chemical R&D for decades, and its core strength remains its deep, curated database of chemical reactions, substances, and their associated properties. For chemists who need to find known synthetic routes to a target molecule, identify reaction conditions for a specific transformation, or explore the physical and chemical properties of a substance, Reaxys provides a level of chemical specificity that broader intelligence platforms do not match [15].

The platform's reaction search capabilities are genuinely powerful for synthesis planning. Chemists can search by reaction type, reagent, product, or condition and retrieve experimentally validated procedures with yields, solvents, catalysts, and temperature ranges drawn from the primary literature. For bench chemists and process development teams working on specific synthetic problems, this granularity is invaluable. Reaxys also offers substance property data, including melting points, solubility, spectral data, and toxicity information, that supports the practical work of chemical development.

Reaxys also provides predictive tools for molecular property analysis. Its retrosynthesis planning features use algorithmic approaches to suggest synthetic pathways for target molecules, and its property prediction capabilities can estimate physical and chemical properties for compounds where experimental data is limited. For chemical informatics teams that need predictive molecular property analysis as part of their material selection or formulation development workflows, these features are a meaningful complement to the platform's experimental data.

The limitations of Reaxys become apparent when chemical R&D teams need to move beyond substance-level and reaction-level questions to strategic intelligence. Reaxys is not a patent analytics platform. Its patent coverage exists primarily as a source of chemical data rather than as a tool for competitive landscape analysis, assignee portfolio mapping, or freedom-to-operate assessment. R&D teams can find that a particular reaction has been described in a patent, but they cannot use Reaxys to map the broader IP landscape around a technology domain, track competitor filing trends, or identify white space for new innovations. For strategic R&D decisions that depend on understanding the competitive and IP environment, Reaxys needs to be supplemented with a dedicated intelligence platform [16].

Enterprise workflow integration is another area where Reaxys reflects its heritage as a reference database rather than a modern enterprise platform. While it offers API access and institutional licensing, the platform was designed primarily for individual researcher queries rather than for the kind of team-based, workflow-integrated intelligence that large chemical R&D organizations increasingly require.

Best for: Bench chemists, process development teams, and chemical informatics groups who need deep reaction data, substance properties, and predictive molecular analysis. Best used as a complementary tool alongside a broader intelligence platform that provides patent analytics and competitive landscape capabilities.

3. Orbit Intelligence (Questel) — Best Legacy Platform for IP Attorneys in the Chemical Sector

Orbit Intelligence, Questel's patent analytics platform, has long been a standard tool in chemical company IP departments. Its patent search capabilities are comprehensive, its classification system navigation is well-developed, and its analytics features support the kind of detailed patent analysis that IP attorneys and patent agents require for prosecution, validity, and opposition work [17].

For IP professionals in chemical companies, Orbit provides a familiar and capable environment. The platform offers access to patent data from offices worldwide, supports searches by classification code, keyword, assignee, and citation, and provides visualization tools for analyzing patent portfolios and filing trends. Chemical patent specialists who need to conduct thorough prior art searches or build detailed prosecution files will find Orbit's features well-suited to their workflows.

The challenge for chemical R&D teams is that Orbit was designed primarily for legal and IP professionals, not for scientists and innovation strategists. The interface assumes familiarity with patent classification systems, Boolean search logic, and the procedural vocabulary of patent prosecution. For an R&D scientist who needs to quickly understand the competitive landscape around a new polymer chemistry or identify whether a proposed research direction faces freedom-to-operate risks, Orbit's learning curve is steep and its workflow is not optimized for the way scientists approach research questions [18].

Orbit also operates primarily within the patent domain. It does not integrate scientific literature alongside patent data in a unified search experience, which means that R&D teams using Orbit for patent analysis still need a separate set of tools for literature review and technical intelligence. This fragmentation creates inefficiency and makes it difficult to see the full picture of how scientific research and patent activity connect within a technology domain.

For chemical companies that maintain separate IP and R&D intelligence functions, Orbit can serve the IP team well while a different platform serves the R&D team. For organizations looking to consolidate their intelligence infrastructure or to democratize patent intelligence beyond the legal department, Orbit's IP-attorney-centric design can be a limiting factor.

Best for: IP attorneys and patent agents in chemical companies who need comprehensive patent search, classification-based analysis, and prosecution-oriented workflows. Less suitable for R&D scientists and innovation strategists who need accessible competitive intelligence and integrated patent-plus-literature analysis.

4. Derwent Innovation (Clarivate) — Best for Chemical Patent Classification Depth

Derwent Innovation brings a unique asset to chemical patent intelligence: the Derwent World Patents Index, which has been manually classifying and abstracting patents for decades. For chemical patents, this means that each record includes enhanced indexing with Derwent classification codes, curated abstracts that often describe the invention more clearly than the original patent language, and Derwent chemical fragmentation codes that allow chemists to search by structural features [19].

This depth of chemical patent classification is genuinely valuable for specific use cases. A patent analyst looking for all patents related to a particular Markush structure, a specific class of catalysts, or a defined family of polymer architectures can use Derwent's chemical indexing to find relevant documents that keyword searches alone would miss. The curated abstracts save significant time during review by presenting the core invention in accessible language rather than requiring analysts to parse dense patent claims.

The Derwent patent citation index is another strength for chemical R&D teams conducting competitive intelligence. Citation analysis can reveal how patent portfolios build on each other, which filings represent foundational innovations versus incremental improvements, and how IP positions within a technology domain are interconnected. For freedom-to-operate assessments, understanding the citation network around relevant patents provides context that flat search results cannot.

The limitations of Derwent Innovation parallel those of Orbit in important ways. The platform was designed for IP professionals, and its interface and workflows reflect that orientation. R&D scientists who lack patent search expertise often find the platform difficult to use without training, and the analytical tools are optimized for the kind of detailed, document-level patent analysis that attorneys perform rather than the landscape-level strategic intelligence that R&D leaders need. Derwent also does not natively integrate scientific literature alongside its patent data, which creates the same fragmentation challenge that affects all patent-only platforms [20].

Derwent's pricing and licensing model also limits its accessibility within chemical organizations. The platform is typically licensed for IP departments rather than deployed broadly across R&D teams, which means that the valuable intelligence it contains often stays siloed within the legal function rather than flowing upstream to the scientists and strategists who make research investment decisions.

Best for: Patent analysts and IP professionals in chemical companies who need deep chemical patent classification, Derwent indexing codes, curated abstracts, and citation network analysis. Particularly strong for prior art searches and chemical structure-based patent analysis. Less suitable for R&D scientists who need accessible, AI-assisted competitive intelligence.

5. Google Patents — Best Free Tool for Basic Chemical Patent Search

Google Patents provides free access to patent documents from major patent offices worldwide, and for individual researchers or small teams with no budget for enterprise tools, it offers a surprisingly useful starting point for chemical patent research. The interface is intuitive, full-text search works as expected, and the ability to browse patent families, view legal status information, and download documents at no cost makes it genuinely valuable for basic patent awareness [21].

For small-scale pharmaceutical research teams and academic groups that need to check whether a specific patent exists, review the claims of a known filing, or get a general sense of patent activity around a particular chemistry, Google Patents delivers functional results with zero barrier to entry. The platform also includes some machine learning features, such as similarity search and automated classification suggestions, that can help users discover related patents they might not have found through keyword search alone.

The limitations are substantial for any team attempting to use Google Patents as a primary chemical intelligence tool. The platform offers no competitive landscape analysis, no assignee portfolio mapping, no filing trend visualization, and no structured analytical tools of any kind. Search results are returned as a list of individual documents with no analytical layer on top. There is no way to generate reports, track landscapes over time, or automate monitoring of competitor filing activity. For freedom-to-operate assessment, the absence of claim-level analytical tools means that every aspect of the analysis must be performed manually, which is time-consuming and error-prone [22].

Google Patents also has no integration with scientific literature, no enterprise security features, and no team collaboration capabilities. For chemical R&D teams that need to combine patent intelligence with literature analysis, operate within a secure enterprise environment, or share findings across cross-functional teams, Google Patents is a starting point at best and a bottleneck at worst.

Best for: Individual researchers, academic groups, and small pharmaceutical teams who need free access to patent documents for basic searches and document retrieval. Not suitable as a primary intelligence platform for enterprise chemical R&D.

6. The Lens — Best Free Tool for Combined Patent and Scholarly Chemical Research

The Lens, operated by the non-profit Cambia, occupies a unique position among free tools by indexing both patent documents and scholarly papers and allowing users to explore the connections between them. For chemical R&D teams, this is a meaningful capability. The relationship between scientific publication and patent filing is a critical signal in chemical innovation: it reveals how research progresses from discovery to commercial protection and which organizations are translating academic chemistry into proprietary technology [23].

The Lens also provides biological patent sequence data through its PatSeq database, which is particularly useful for pharmaceutical and biotechnology researchers working at the intersection of chemistry and biology. The ability to search patent sequences alongside traditional patent and literature data gives The Lens a distinctive capability for life sciences-oriented chemical research.

For small teams and independent researchers, The Lens provides genuine value as a free complement to more capable enterprise platforms. Its coverage is substantial, its interface is functional, and the ability to see how scholarly citations connect to patent filings is a feature that many paid platforms do not offer.

The limitations follow the same pattern as Google Patents but with additional nuance. The Lens has no AI-assisted analysis, no competitive landscape mapping tools, no report generation capability, and no ability to automate the structured intelligence workflows that enterprise chemical R&D teams need. Search results require manual review and interpretation. For teams conducting serious competitive analysis, freedom-to-operate assessment, or material synthesis trend monitoring, The Lens provides raw data but not structured intelligence. Enterprise security features are also limited, which restricts its usefulness for organizations handling sensitive pre-filing research or proprietary competitive intelligence [24].

Best for: Independent researchers, academic groups, and small pharmaceutical teams who need free access to both patent and scholarly data with citation linking. A useful supplementary tool for chemical R&D professionals who want to cross-reference patent and literature activity on specific topics.

7. PubChem — Best Free Chemical Substance Database

PubChem, maintained by the National Center for Biotechnology Information at the National Institutes of Health, is the world's largest open-access chemical database. It catalogs chemical structures, properties, biological activities, safety data, and links to the scientific literature for millions of chemical compounds. For chemical R&D teams that need to look up substance properties, check bioactivity data, or find safety information for a specific compound, PubChem is an essential free resource [25].

The database's strength is its comprehensiveness for substance-level queries. PubChem aggregates data from hundreds of sources, including government agencies, academic laboratories, and pharmaceutical companies, creating a broad reference library for chemical and biological properties. For pharmaceutical research teams evaluating candidate molecules, the ability to check known bioactivity, toxicity data, and related compounds at no cost is a significant advantage.

PubChem also offers some analytical features, including structure similarity search, substructure search, and molecular formula search, that support the kind of chemical informatics work that R&D teams perform during early-stage material selection and drug discovery.

The limitations are straightforward. PubChem is a substance database, not an intelligence platform. It does not offer patent search, competitive landscape analysis, freedom-to-operate assessment, or any of the strategic intelligence capabilities that chemical R&D teams need for decision-making beyond the molecular level. It has no enterprise features, no team collaboration tools, and no integration with patent analytics or competitive intelligence workflows. PubChem is best understood as a reference resource that supports specific types of chemical queries rather than as a platform for the broader intelligence needs of chemical R&D organizations [26].

Best for: Chemists and pharmaceutical researchers who need free access to chemical substance data, bioactivity information, and property lookups. An essential reference tool that complements but does not replace dedicated chemical intelligence platforms.

How to Select a Chemical Intelligence Platform: Key Evaluation Criteria

The right platform depends on the specific needs of the team, the scale of the organization, and the types of decisions the intelligence is intended to support. But the most important criterion is also the one most often overlooked: does the platform provide broad enough coverage, early enough in the development lifecycle, to prevent the late-stage failures that destroy R&D capital? Every evaluation criterion below should be read through this lens. A platform that scores well on features but still leaves systematic blind spots in the patent, regulatory, or competitive landscape is not solving the problem that costs chemical R&D organizations the most money.

Data coverage and source diversity is the most fundamental consideration. Chemical R&D decisions rarely depend on a single type of data. They require patent intelligence, scientific literature, competitive signals, and often regulatory and market context. Platforms that combine patent and literature data in a unified search experience, like Cypris, reduce the fragmentation that slows research and creates blind spots. Platforms that cover only patents (Orbit, Derwent) or only chemical substances (PubChem) require teams to assemble their intelligence picture from multiple disconnected tools.

Competitive landscape and IP intelligence capabilities separate strategic intelligence platforms from reference databases. For chemical R&D teams that need to monitor competitor patent activity, map assignee portfolios, identify white space, conduct freedom-to-operate assessments, and track how competitive positions are evolving across global jurisdictions, the analytical tools matter as much as the underlying data. Platforms designed for IP attorneys (Orbit, Derwent) provide deep patent analysis but assume legal expertise and focus on document-level work. Platforms designed for R&D teams (Cypris) provide landscape-level strategic intelligence in formats that scientists and strategists can use directly.

AI-assisted analysis and structured outputs determine whether a platform accelerates research or simply provides access to data that still requires extensive manual analysis. In 2026, chemical R&D teams are generating intelligence requirements faster than human analysts can process them. Platforms that use AI to synthesize findings, generate structured reports, and surface patterns across large datasets (Cypris via Cypris Q) deliver a qualitatively different experience from platforms that return search results for manual review (Orbit, Derwent, Google Patents, The Lens).

Enterprise security and compliance is a non-negotiable requirement for Fortune 500 chemical companies. R&D queries about novel formulations, pre-filing invention concepts, and competitive intelligence targets are among the most sensitive information a chemical company generates. Platforms that meet enterprise security requirements (Cypris) are suitable for this work. Free public tools (Google Patents, The Lens, PubChem) and consumer-oriented platforms are not.